For some of our readers, this is going to feel like a bit of a time warp. In May 2020 the NVIDIA A100 Ampere Reset the Entire AI Industry. Google, AWS, and others have already deployed the A100. We have seen the A100 PCIe Add-in Card Launch and even an updated A100 80GB model two quarters ago. Suffice to say, the industry is well into the A100 cycle. Realistically, we need to be at the halfway point, if not later, just due to the PCIe Gen5/CXL infrastructure coming in 2022. AI is a fiercely competitive area, yet ten months after launch we have a curious announcement: VMware proudly touting that it is the caboose of the A100 train.

There are a lot of “VMware is perfect at everything” folks on the Internet. Instead, we are taking the approach of looking at VMware’s vSphere 7 Update 2 and vSAN 7U2 announcements a bit more critically.

VMware vSphere 7 Update 2 Brings NVIDIA Support

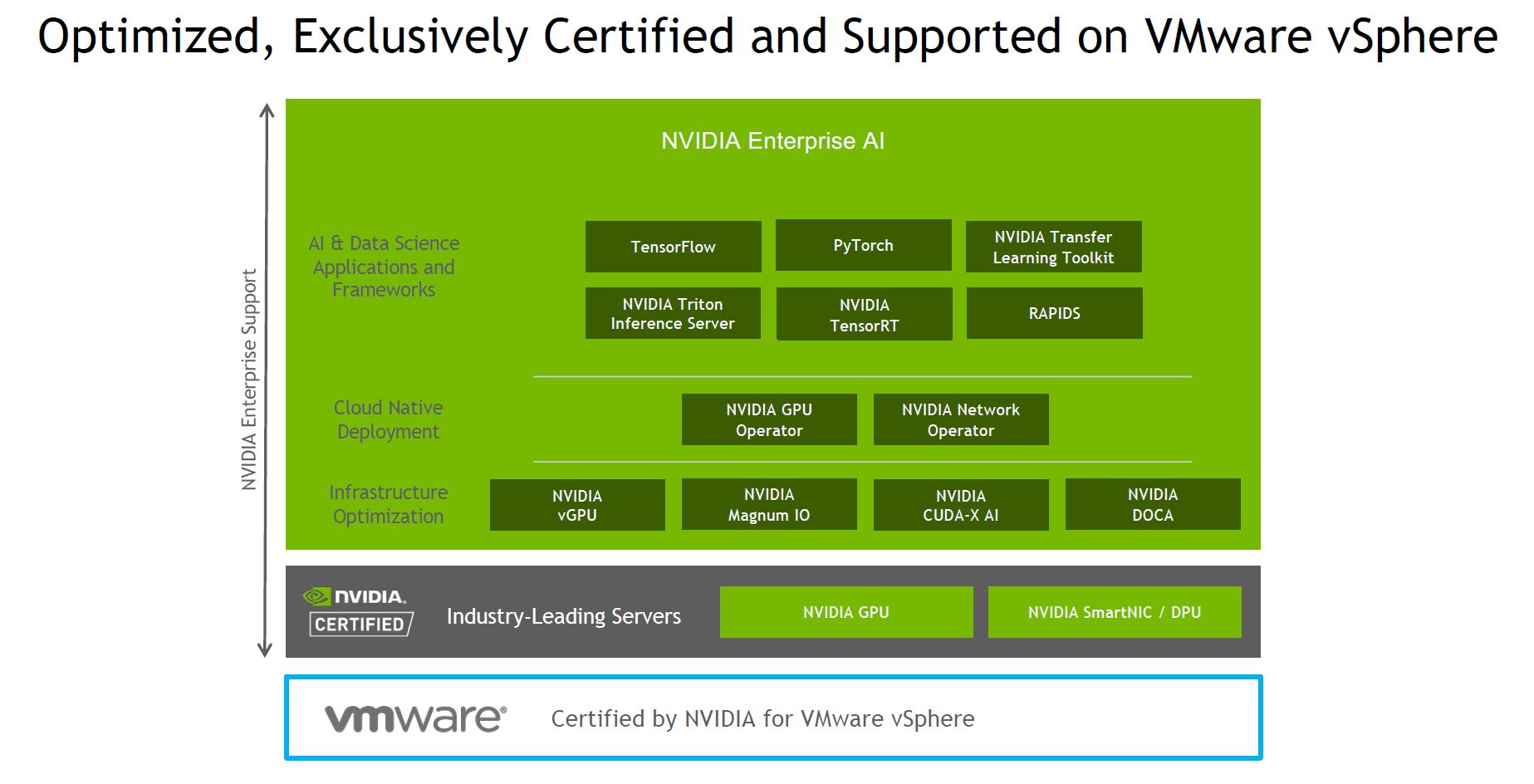

Recently we covered the NVIDIA Certified Servers Add New Revenue Path and one can see that on display here. NVIDIA has its enterprise support stack where it can certify servers for supporting its AI tools. Then VMware can certify NVIDIA's servers for VMware vSphere. This adds another level of support in the mix on new AI servers.

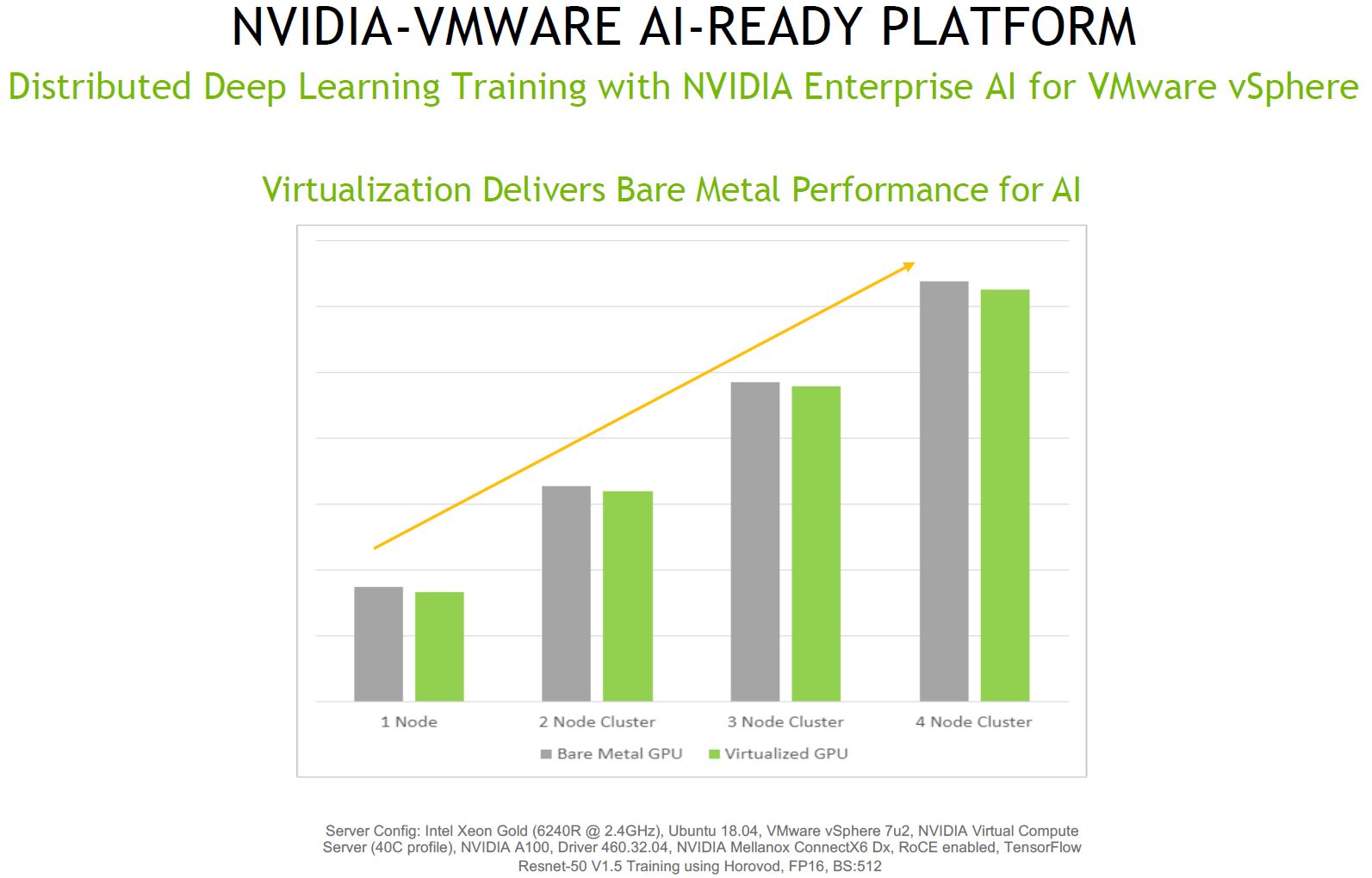

Perhaps the most eyebrow-raising chart in the entire presentation was this one. It shows that one loses performance with VMware virtualization, which one expects. At the same time, many of the cloud providers, such as Microsoft Azure, have very high-performance VMs that lose very little performance when running GPU-accelerated workloads. We will let you look at the chart, then discuss some points.

First off, the NVIDIA A100 is a PCIe Gen4 card. Yet it is being attached to an Intel Xeon Gold 6240R. As noted in our review of that chip, it is a PCIe Gen3 processor. Also, NVIDIA Mellanox ConnectX-6 Dx NICs are being used for RoCE. We have a review of the ConnectX-6 coming. That is a PCIe Gen4-capable NIC. Further, there are no Y-axis labels, so we have no sense of scale to see what the performance loss is. From an industry perspective, the AMD EPYC 7002 “Rome” and soon EPYC 7003 “Milan” series have been transformational when used in conjunction with the A100. Even major Intel partners that had only Intel servers saw enough benefit with the AMD solution to start offering their first AMD servers just because of the A100. The challenge is that we do not know the degree of performance loss, nor the impact PCIe Gen3 is having. If both the bare-metal and virtualized results are being bottlenecked by PCIe Gen3 speeds, then this chart has little to no relevance, since that bottleneck would be the reason for the performance to be so close.
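To put the Gen3 versus Gen4 concern in numbers, here is a minimal sketch of theoretical per-direction link bandwidth. It uses the published PCIe per-lane signaling rates and 128b/130b line encoding, and it assumes x16 links for both the GPU and the NIC. The function and constant names are ours for illustration, and packet overhead is ignored, so real-world throughput will be somewhat lower.

```python
# Per-direction PCIe link bandwidth from the per-lane signaling rates.
# Ignores packet (TLP/DLLP) overhead, so real transfers land somewhat lower.

ENCODING_128B_130B = 128 / 130  # line encoding used by PCIe Gen3 and Gen4

# Giga-transfers per second, per lane
GT_PER_S = {"gen3": 8.0, "gen4": 16.0}


def pcie_bandwidth_gb_s(gen: str, lanes: int = 16) -> float:
    """Approximate usable GB/s in one direction for a link with `lanes` lanes."""
    # One transfer carries one bit per lane; divide by 8 to convert bits to bytes.
    return GT_PER_S[gen] * ENCODING_128B_130B * lanes / 8


if __name__ == "__main__":
    for gen in ("gen3", "gen4"):
        print(f"{gen} x16: {pcie_bandwidth_gb_s(gen):.2f} GB/s per direction")
```

By this math a Gen3 x16 slot tops out at roughly 15.75 GB/s per direction versus roughly 31.5 GB/s for Gen4, so both the A100 and the ConnectX-6 Dx in the test platform have half the host link bandwidth they were designed for.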

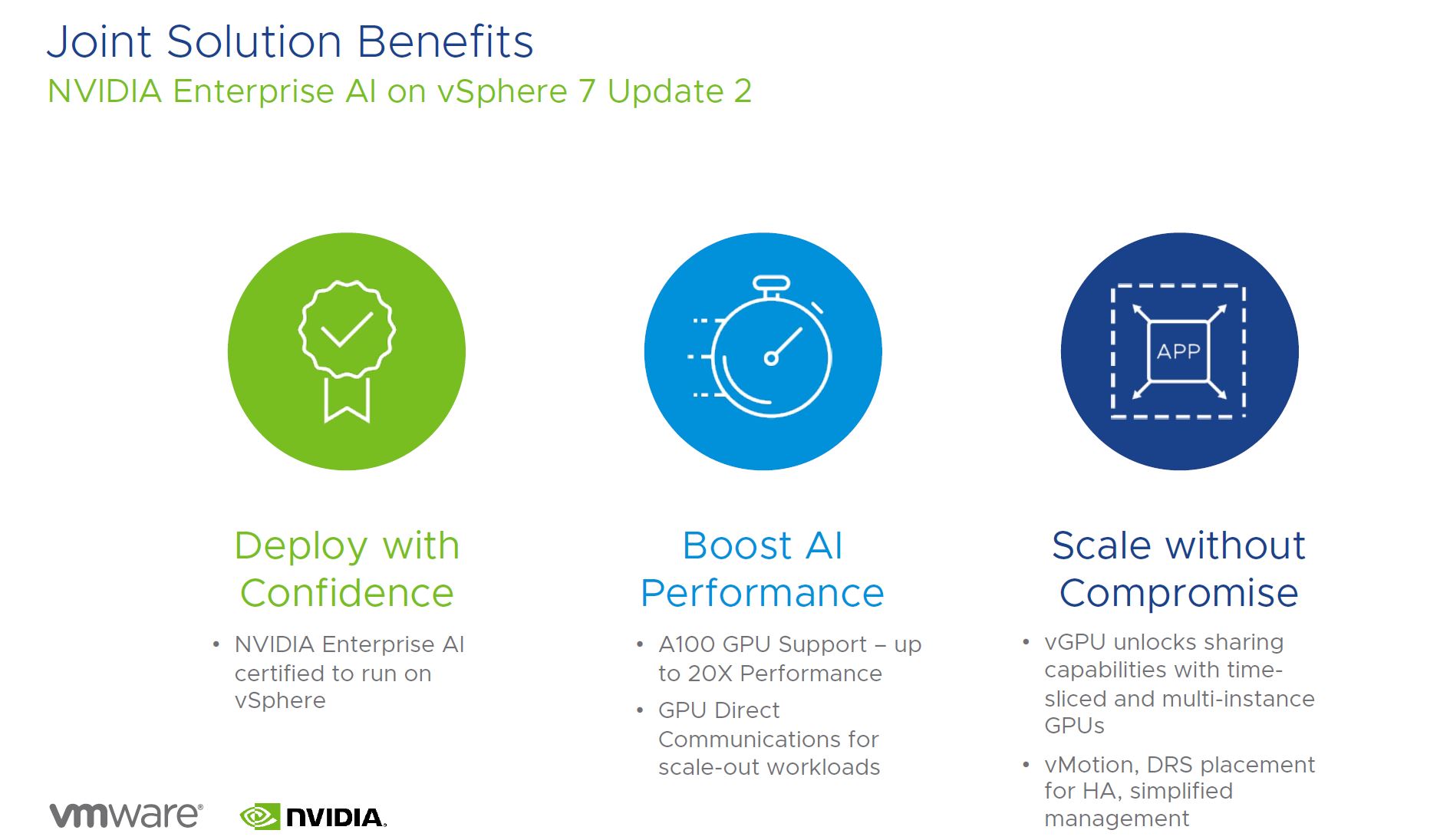

Overall, the key solution benefits are good. One gets the support for new GPUs and one can use features such as vMotion and DRS placement for high-availability. These are great for the VMware ecosystem.

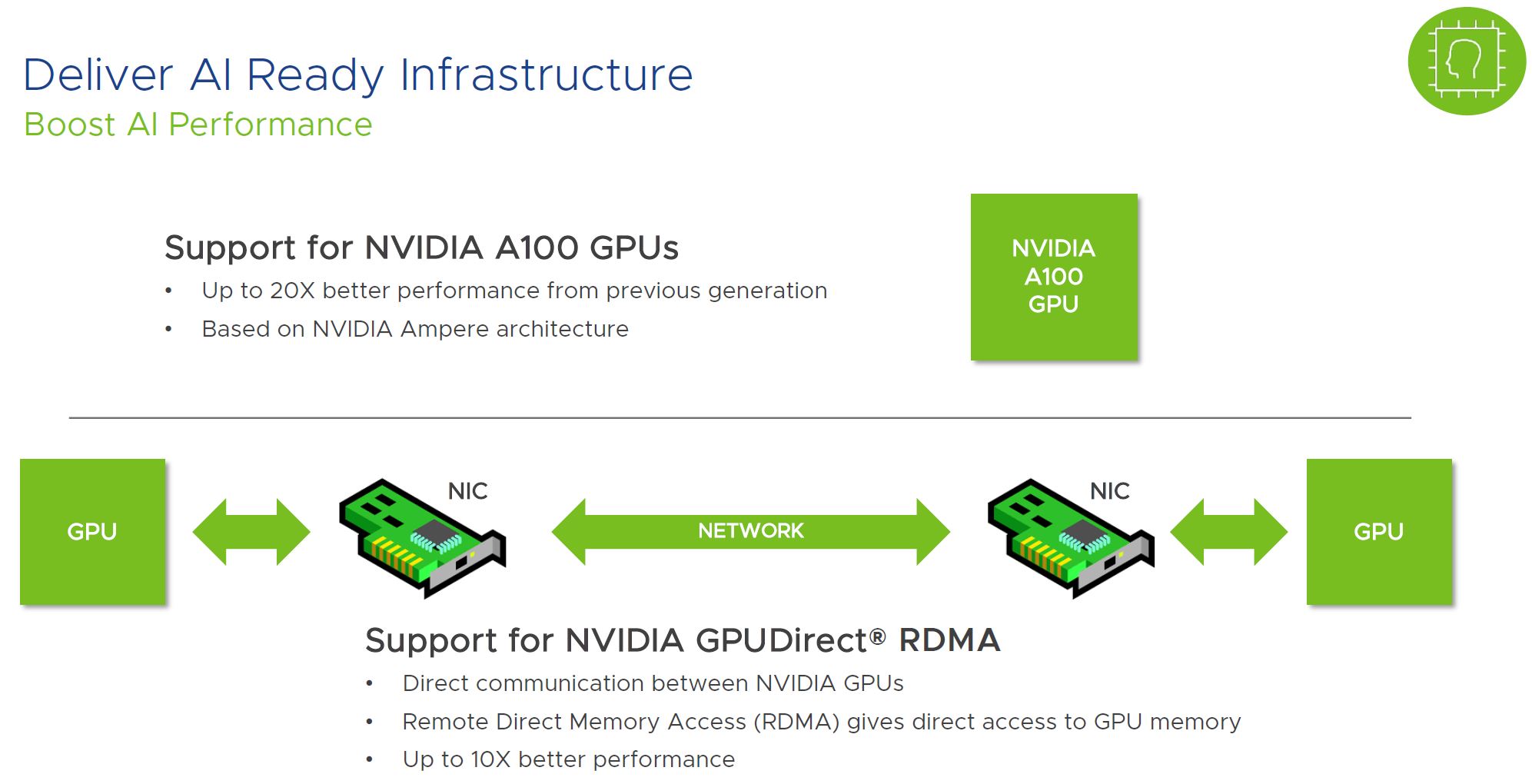

One of the other headline features is the ability to use GPUDirect RDMA. This allows direct communication between GPUs and is a key driver for many NVIDIA technologies to scale out GPU infrastructure reliably and efficiently. It is great that VMware is supporting this.

Also, a feature that many in the VMware community likely saw during the A100 launch as a great fit is the new NVIDIA MIG (multi-instance GPU) functionality, where an A100 can have its compute and memory split into up to 7 partitions. It makes a lot of sense for VMware to support this feature. One could get this to work previously with VMware, but the new update automates the process.

Overall, the VMware support of NVIDIA’s suite of technologies is great for VMware’s customers. It is absolutely a great announcement for NVIDIA. On the other hand, announcing expanded A100 support in the fourth quarter after the GPU launched seems a bit late.

VMware vSphere 7 Update 2 Other Enhancements

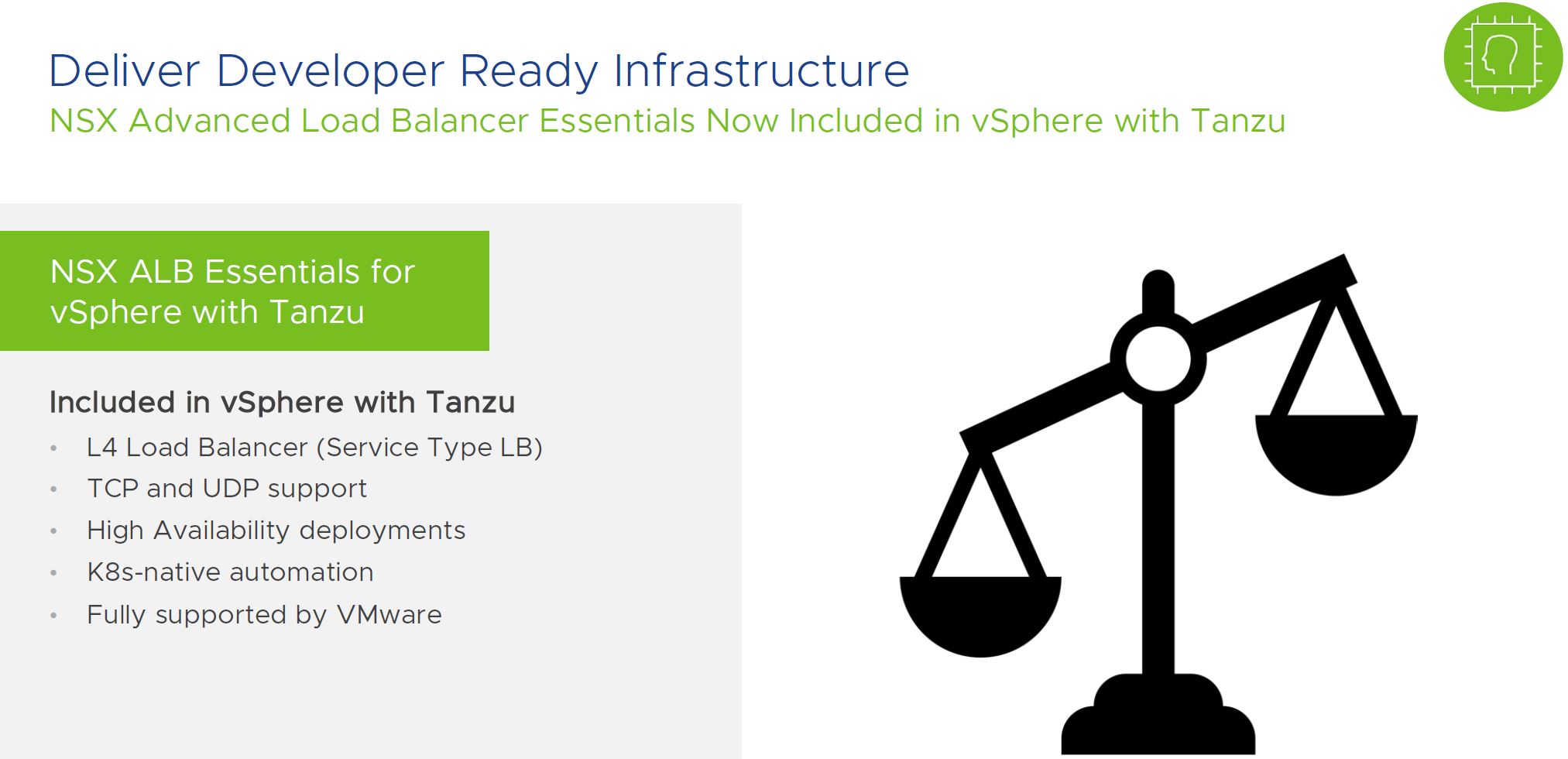

VMware has some cool features. For example, it has a new Service Type NSX Load Balancer. For Kubernetes, load balancers are a key feature, so this provides a new option for VMware and Tanzu.

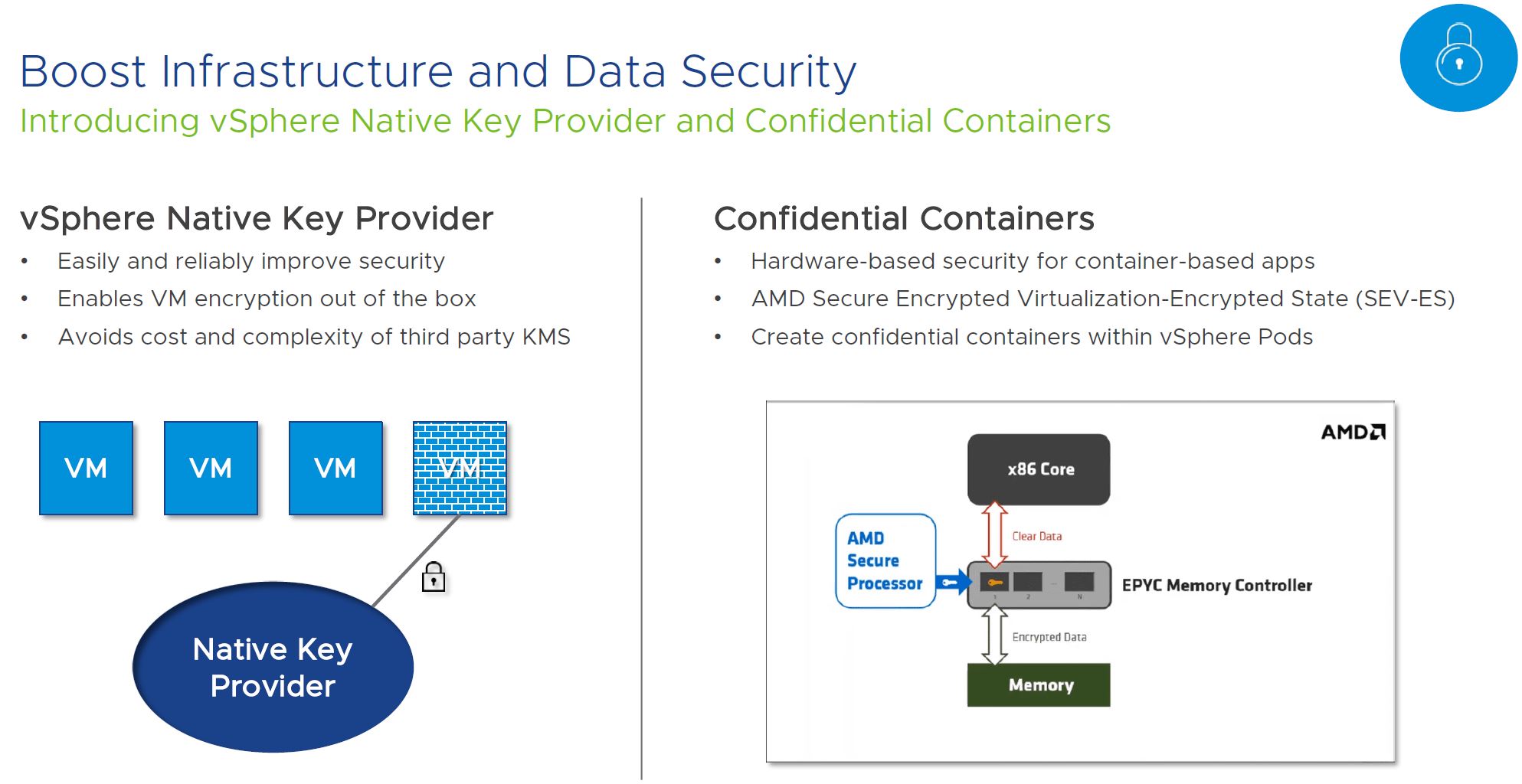

On the data security side, VMware again has great features, but this is a curious announcement as well. Confidential computing is a big deal, and major cloud providers such as Google Cloud rolled out Confidential Computing Enabled by AMD EPYC SEV only about three quarters ago. VMware is adding support for confidential containers using AMD SEV-ES. This one makes a lot of sense for VMware’s customers. Still, it is strange VMware does not know anyone at Intel so they could include Ice Lake’s new security features. Given where we are in the launch cycle, and the fact this is a feature Intel has disclosed, it is a bit strange we are not hearing about VMware support for Intel Ice Lake security integration, only AMD. We hope this is simply from not knowing anyone at Intel to call for approval rather than VMware not supporting Intel’s solution. Given how short a product cycle Ice Lake Xeons are expected to have, waiting for another update cycle would leave VMware customers without some major Ice Lake Xeon functionality for a big portion of the product lifecycle, as is the case on the NVIDIA A100 side.

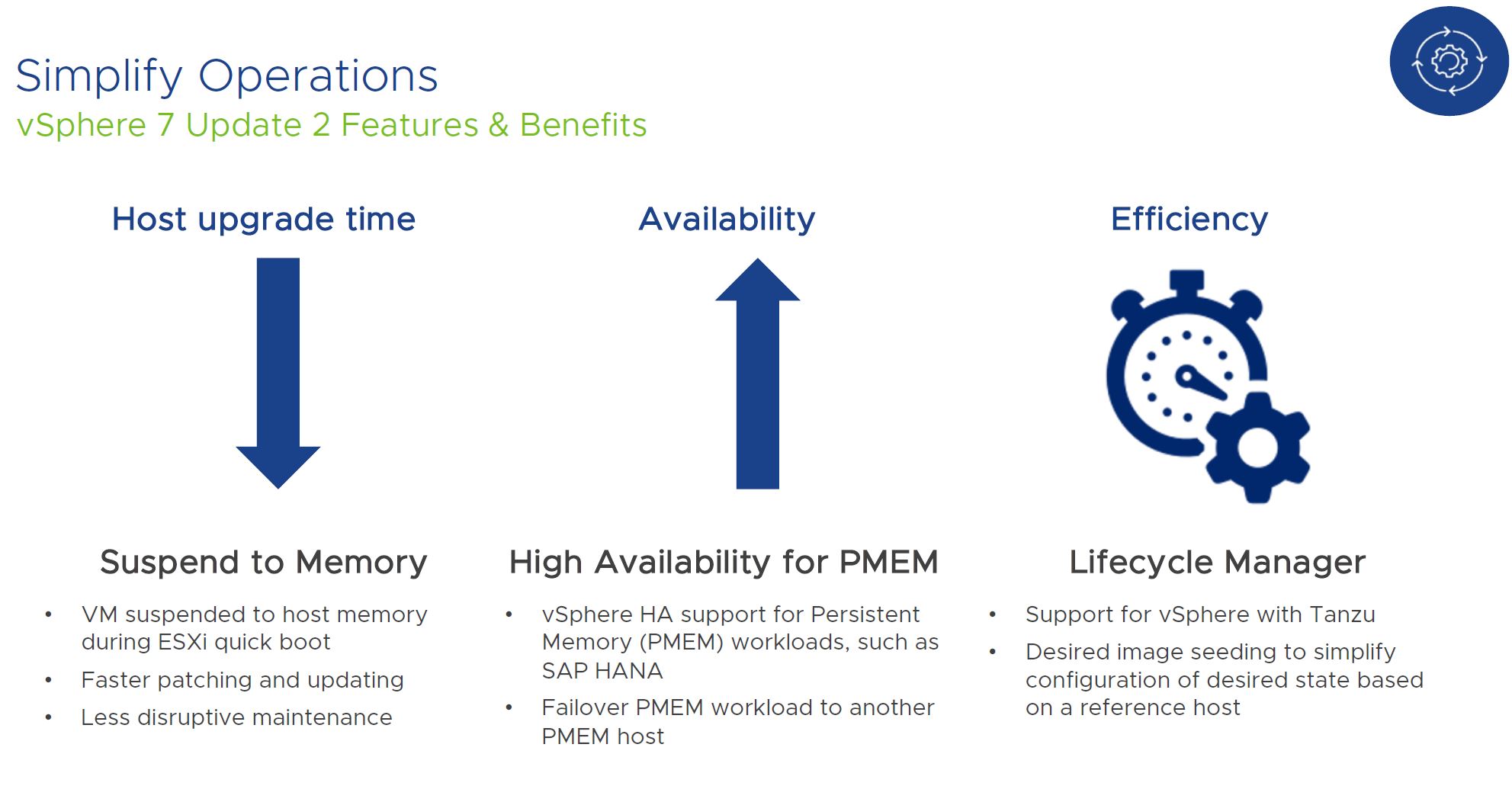

There are a number of other features. The patching is exciting, but perhaps the most interesting hardware-focused item here is vSphere HA support for Persistent Memory. Intel is making a major push for the Optane PMem 200 in its next-gen systems, so this is a great feature to see VMware supporting.

Overall, there are some great enhancements in VMware vSphere 7 Update 2, and we get vSAN 7 Update 2 enhancements as well.

VMware vSAN 7 Update 2 Enhancements

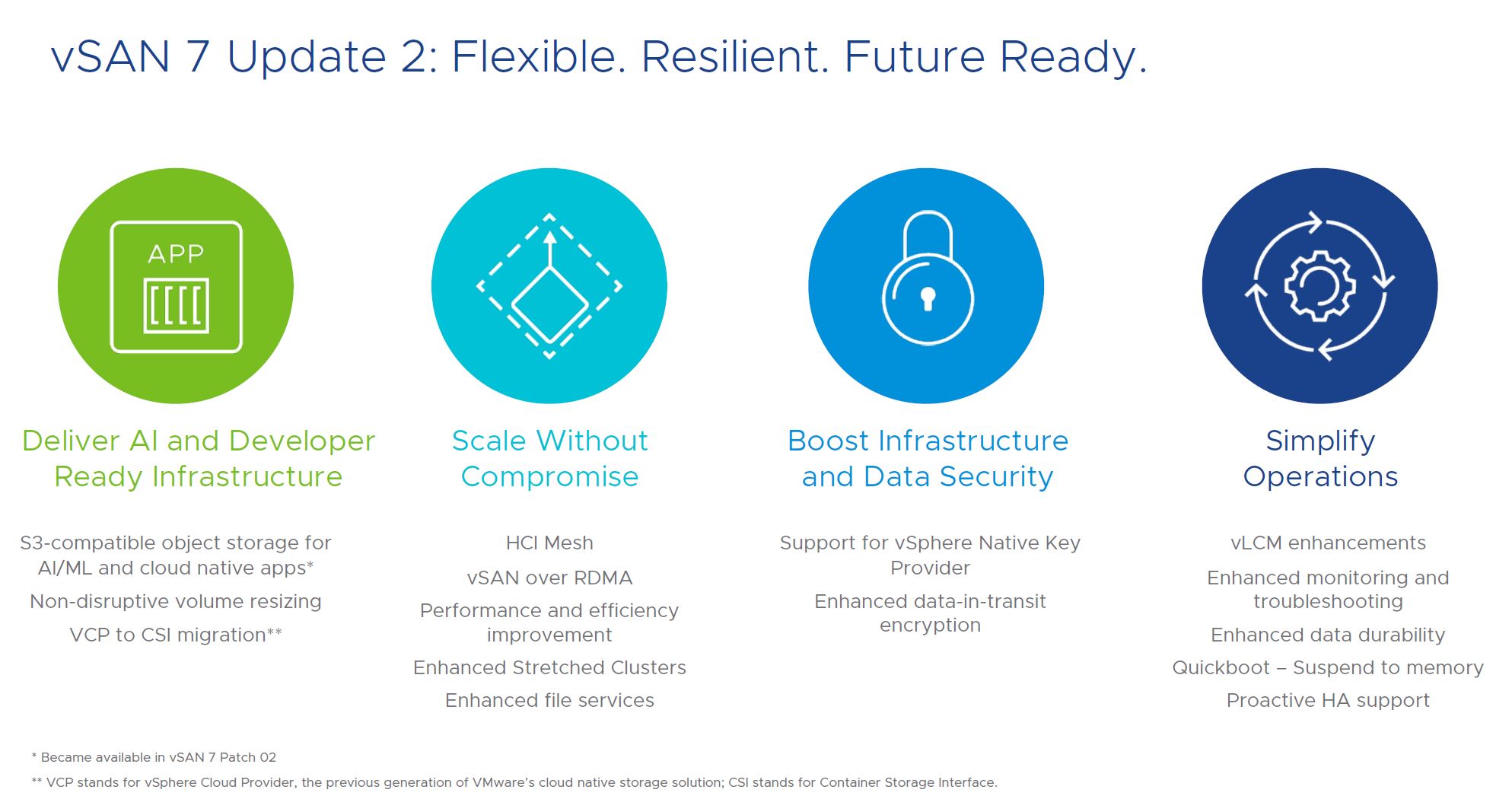

For vSAN we get a number of new features. A good example is S3-compatible object storage which helps further unify the storage stack. This is also important when looking at the next feature, the HCI Mesh.

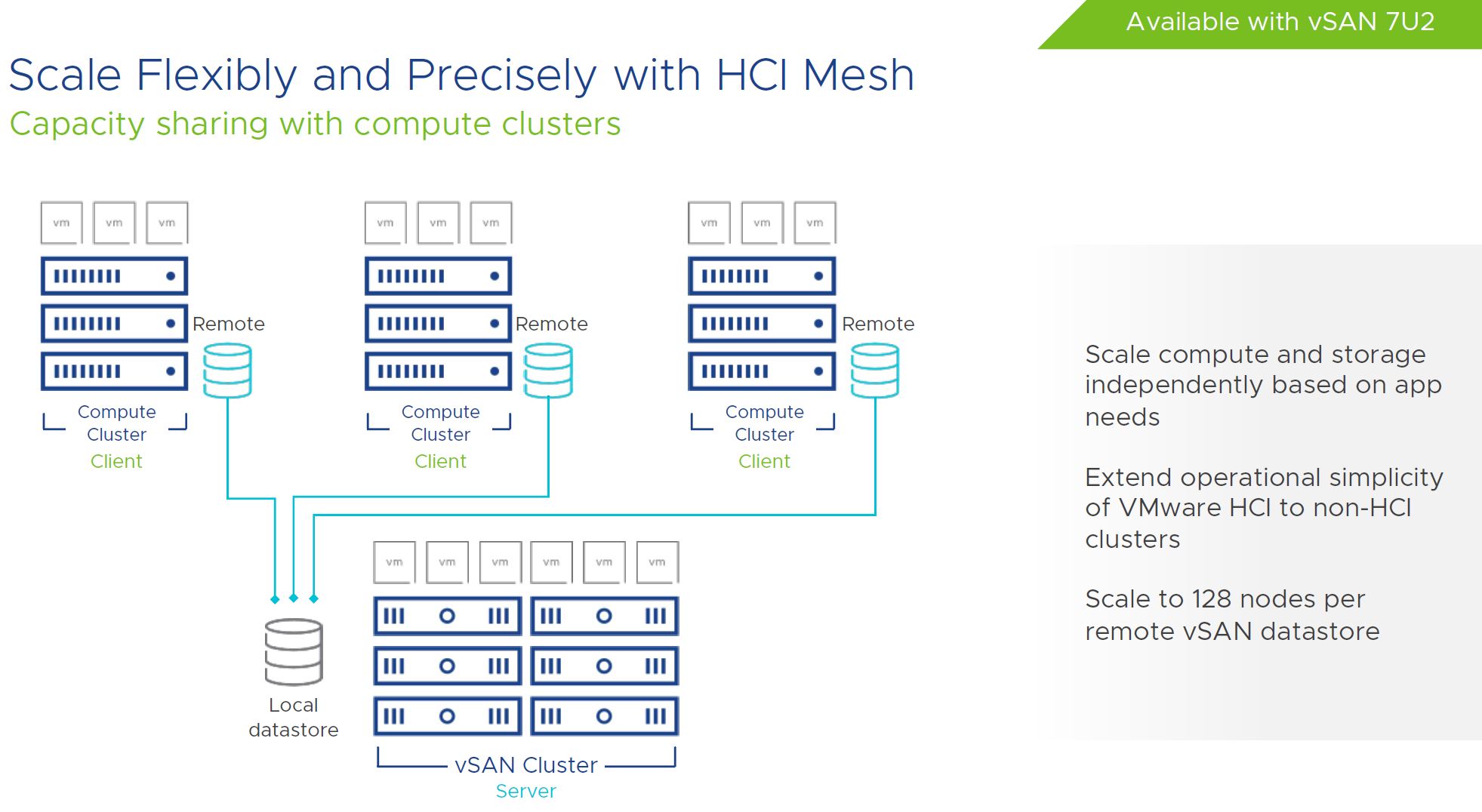

The HCI Mesh is interesting. VMware is working to extend vSAN as a storage platform beyond just the HCI cluster. It is now looking at ways to offer vSAN storage to clusters of compute servers outside of the vSAN environment. A great example here is where one has high-performance per core/low core count clusters for per-core licensed applications. While vSAN overhead on a 32-core processor may be less of a concern, on an 8-core processor that has high per-core license costs, it can be a greater concern. In the lab we have a model like this with an HCI cluster based on Ceph, and we can then use lab nodes or clusters of lab nodes to access the KVM-Ceph storage. We have been using that solution for years, so it is great to see VMware adopting a similar model.
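The per-core licensing point is easy to see with some quick arithmetic. The sketch below is purely illustrative: the two cores' worth of storage overhead and the $5,000 per-core license price are hypothetical numbers we picked, not VMware figures, and the function names are ours.

```python
def overhead_fraction(cores_per_host: int, cores_for_storage: float) -> float:
    """Share of a host's licensed cores consumed by storage services."""
    return cores_for_storage / cores_per_host


def effective_license_cost_per_app_core(
    cores_per_host: int, cores_for_storage: float, license_cost_per_core: float
) -> float:
    """License spend per core that is actually left for the application."""
    app_cores = cores_per_host - cores_for_storage
    return license_cost_per_core * cores_per_host / app_cores


if __name__ == "__main__":
    STORAGE_CORES = 2      # hypothetical storage overhead, in cores
    LICENSE_COST = 5000.0  # hypothetical per-core application license, in USD
    for cores in (8, 32):
        frac = overhead_fraction(cores, STORAGE_CORES)
        cost = effective_license_cost_per_app_core(cores, STORAGE_CORES, LICENSE_COST)
        print(f"{cores} cores: {frac:.2%} overhead, ${cost:,.2f} per usable core")
```

Under those assumptions, the same storage overhead consumes 25% of an 8-core host but only 6.25% of a 32-core host. Moving storage to a separate cluster, as HCI Mesh allows, gives those licensed cores back to the application.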

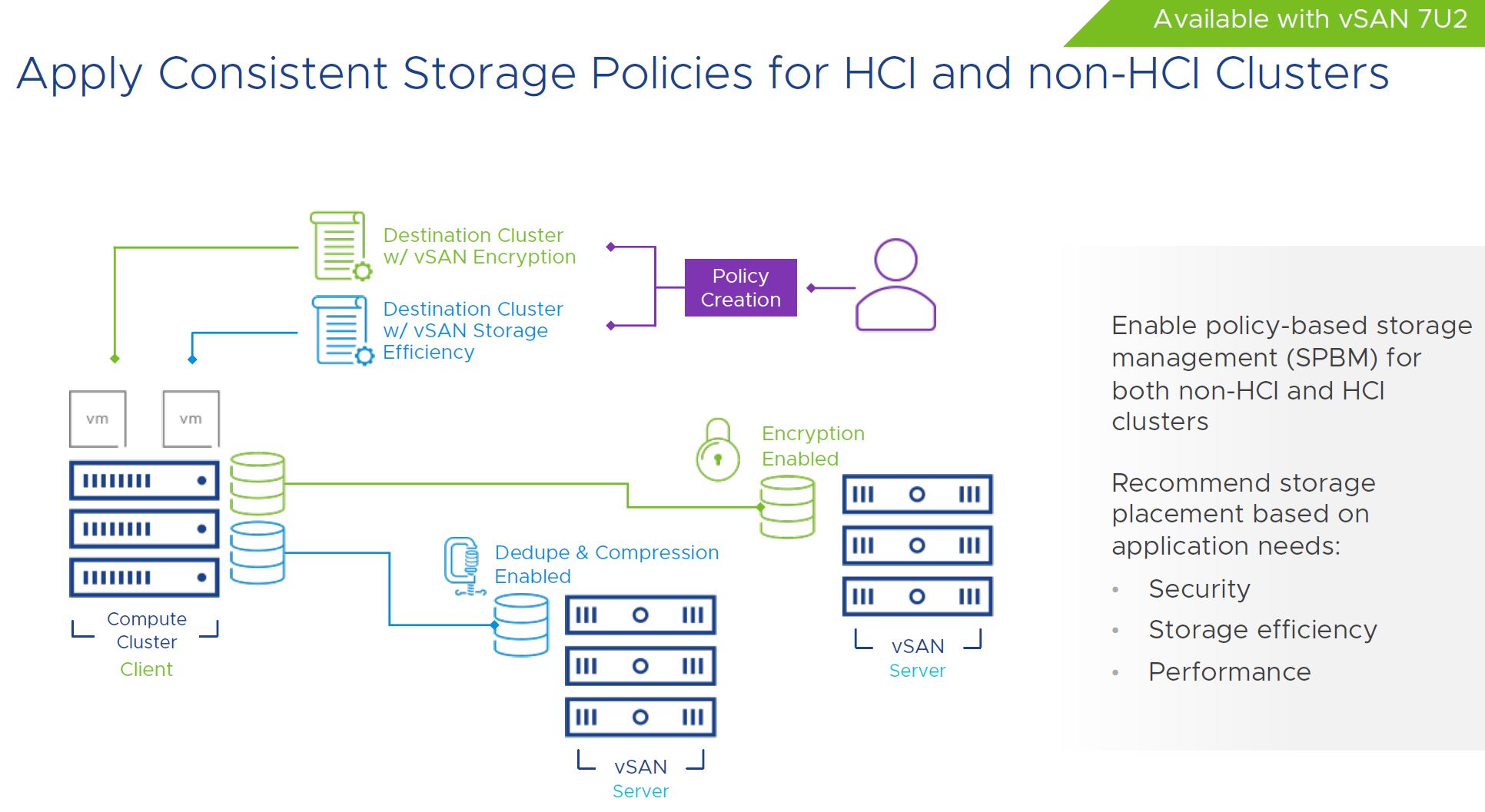

Along with that, VMware is adding storage policies that can span HCI and non-HCI clusters with vSAN 7U2.

Overall, these are positive changes on the VMware vSAN side as well.

Final Words

It is not a secret in the industry that the server market innovated at a glacial pace between 2012 and 2019, and some may argue even through today with AMD EPYC 7002 Series Rome Knockout and upstarts such as the Ampere Altra providing some change. At some point, VMware is going to face a choice.

One option is to resign itself to consistently delivering new innovations to its customers far later than the open-source/Linux and cloud communities are driving innovation. The other option is to thaw the glacial pace of hardware support that it has been on. Cloud providers are quickly adopting new technologies. KVM/Kubernetes will run on anything that one may want to push to the edge. Conversely, VMware is delivering competitive differentiators quarters or years after other ecosystems.

When we simply got a few extra cores and a few MHz every six quarters, this worked well. In the near future, this is going to change. VMware supporting hardware a half-cycle or more behind cloud providers and open source communities may make it easier for IT, but it will put its customers at a distinct disadvantage compared to those that can utilize new hardware sooner.

While I realize that an option was simply to copy/paste slides and say “VMware is great,” at some point this velocity and breadth of hardware support needs to be a discussion within VMware. It may not be margin-friendly to address. At the same time, having a headline feature of supporting an ultra-popular and market-defining product ~10 months after it launches should start broader conversations within VMware.

Hi Patrick

The issue of VMware not supporting the latest hardware is bad, and there are many reasons for that.

This recent announcement is a bit misleading though: VMware vSphere was able to support the A100 for a while, but in GPU pass-through mode only.

What we got today was support for vGPU on the A100, and that means vMotion plus suspend and resume, which translates to the ability to back up VMs that are utilizing these cards.

I don’t know if there is any other hypervisor that supports such features on these cards out there.

VMware is late, period.

The fact that only 7.0 U2 finally supports AMD Zen’s NUMA topology, resulting in a 30% performance improvement, tells a lot about how slow they are. Closed-source, single-contributor code cannot compete with any of the massive upstream communities.