This week, NVIDIA added new partner systems to the NVIDIA Certified server path. The basic idea is that server vendors can run scripts to meet certain criteria and then market servers as NVIDIA Certified. Let us go into the offering in more detail to see what NVIDIA is doing.

NVIDIA Certified Server Offering

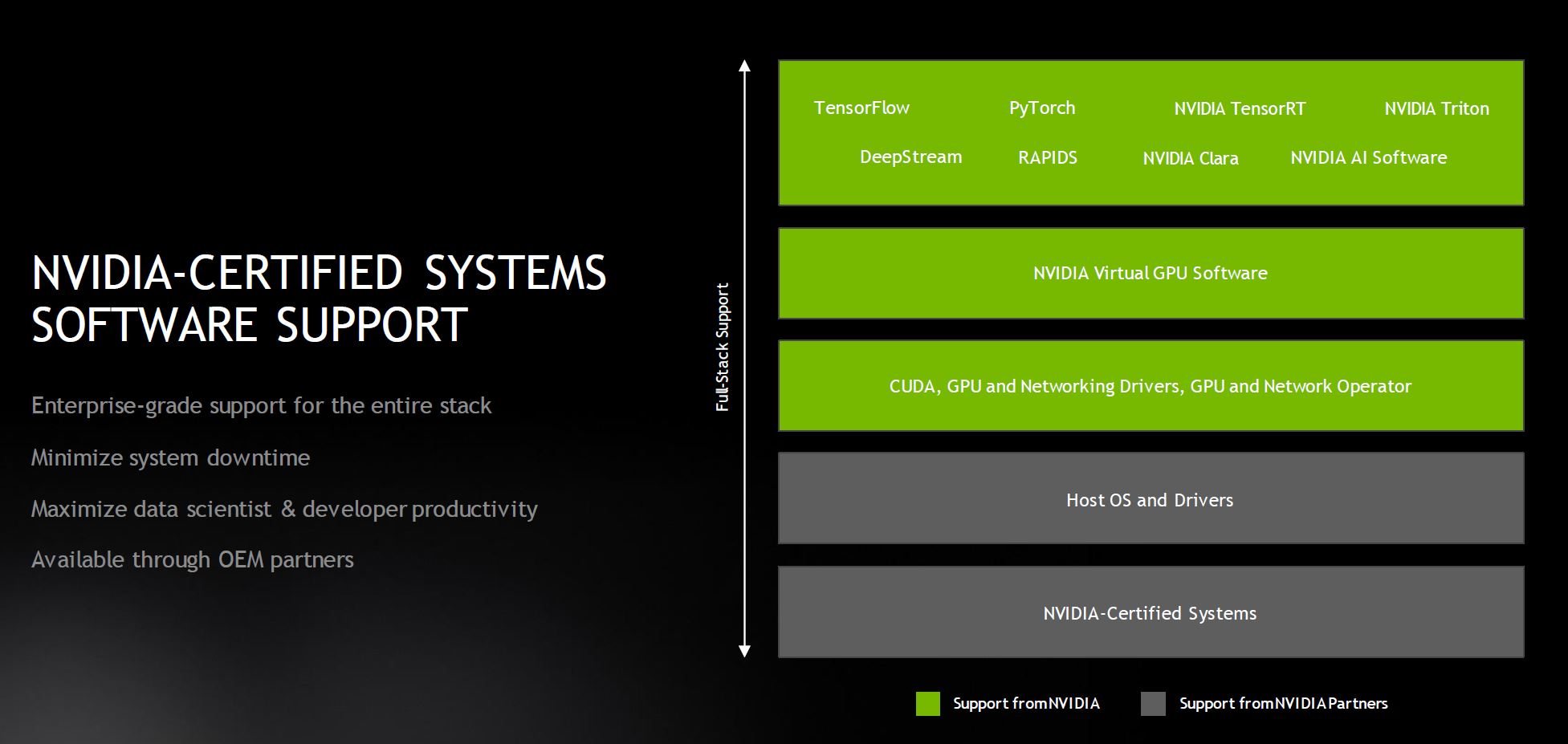

The basic idea behind the NVIDIA Certified Server offering is that an OEM can run validation scripts on a configuration that heavily uses NVIDIA components. The OEM, assuming the configuration passes, can then have the system NVIDIA certified. That allows the OEM to sell an NVIDIA support contract with the system. Here is what the roles and responsibilities are for that support model:

We will have more in the Q&A below, but the key here is that NVIDIA is now maintaining more than just the driver and CUDA stacks. It is also maintaining containers that run applications on its hardware and can scale out using Mellanox-derived fabric IP. As a result, if one builds a scale-out AI application using NVIDIA’s stack, they may need NVIDIA to update or fix something as container versions change.

If you saw our NVIDIA GeForce RTX 3090 compute-focused review, that is a good example of where container changes caused something to break. As a result, we needed to wait for a new version of the containers. NVIDIA is not focused on supporting GeForce compute cards in this manner since it is focused more on the A100 for now. Still, if we were a large organization running a big application, we would want support with an SLA rather than just waiting a few weeks for a container to update.

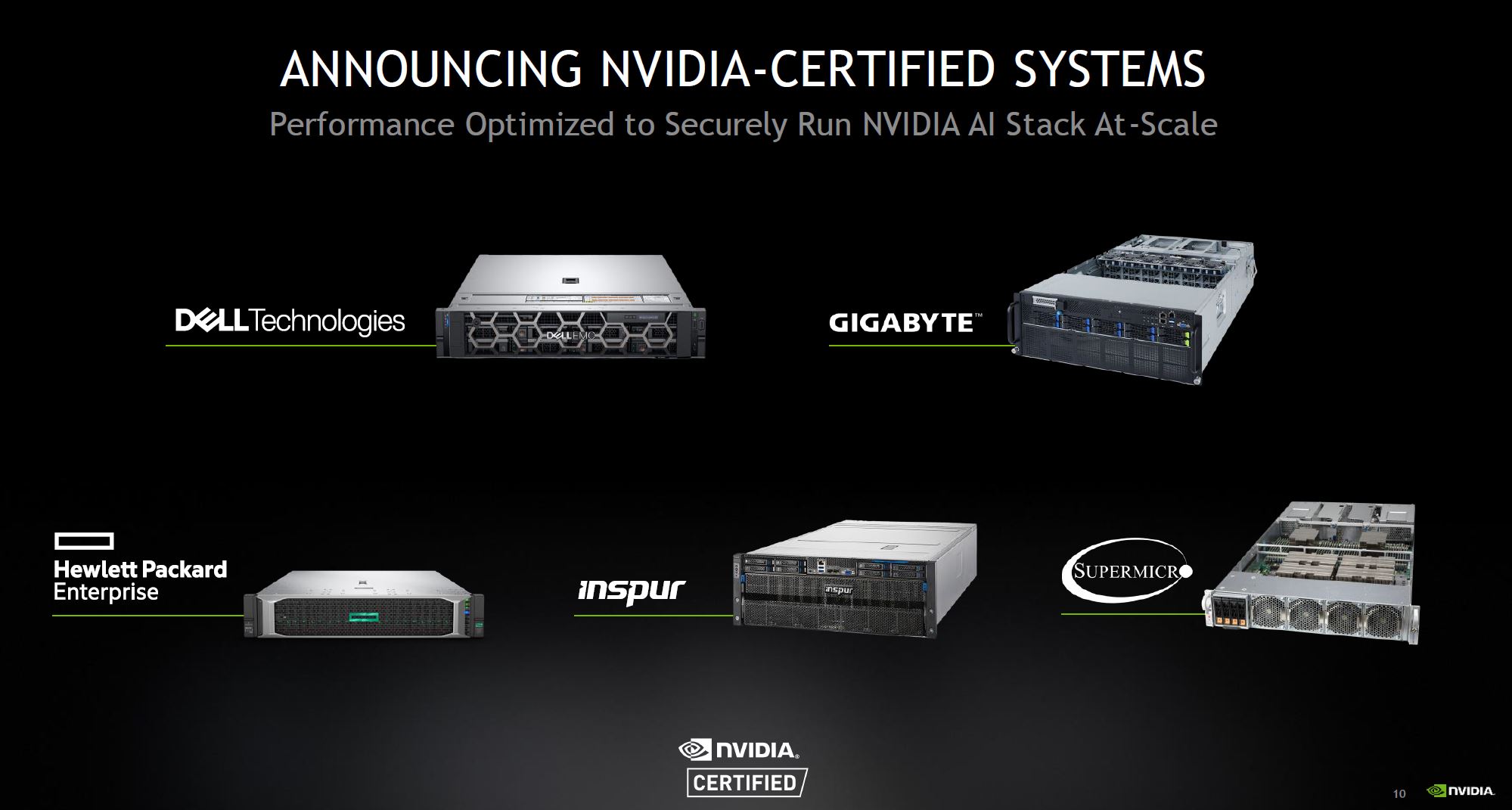

In terms of key partners, we have Dell EMC, HPE, Inspur, Supermicro, and Gigabyte all with systems in the first round. NVIDIA said many more are coming.

Just for fun, we cross-referenced the list with STH reviews and saw:

- Dell EMC PowerEdge R7525 and R740 rack servers (We reviewed the Dell EMC PowerEdge R7525 and Dell EMC PowerEdge R740xd but not the standard R740)

- GIGABYTE R281-G30, R282-Z96, G242-Z11, G482-Z54, G492-Z51 systems (We reviewed the Gigabyte R281-G30 and Gigabyte G242-Z10 but not the -Z11 variant)

- HPE Apollo 6500 Gen10 System and HPE ProLiant DL380 Gen10 Server (We recently reviewed the HPE Trusted Supply Chain using a ProLiant DL380T Gen10)

- Inspur NF5488A5 (We reviewed the previous-gen Inspur NF5488M5 using Intel Xeon CPUs and the V100)

- Supermicro A+ Server AS-4124GS-TNR and AS-2124GQ-NART – Surprisingly, Supermicro’s offerings are the only ones we have not reviewed a version of on this list.

We effectively have reviewed a version of about half the systems on the list at this point, although often without GPUs. That just shows how common some of these platforms are and that they are not just niche offerings getting certified.

Final Words

For NVIDIA, this is going to make a lot of sense. It is now a way to monetize support. NVIDIA has an enormous software engineering engine. Over the past few years, the company has been moving away from low-level drivers and languages and into the higher-level application and component development. It makes sense that with all this effort NVIDIA is trying to monetize this.

For partners, our assumption is that there is a marketing program involved. Many server vendors have been touting AI integration and support capabilities, but the NVIDIA Certified program would make it difficult to lean on Dell for support instead of NVIDIA for most of the high-value server functions. Having an NVIDIA Certified server from both Dell EMC and Supermicro makes it much more difficult, for some organizations, to explain the value of a Dell server over a Supermicro server for AI beyond iDRAC management.

Pricing-wise, the guidance we heard, and what is in the Q&A below, equates to roughly $700-850/GPU/year. We suspect that there are a lot of margin dollars here, and we did not get any discount structures tied to those figures, so treat that as the best we have, but likely different from street pricing.
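As a quick sanity check on that range, the example in the Q&A below ($4,299 for a 2-GPU A100 system on a 3-year term) can be converted to a per-GPU, per-year figure. This is only our back-of-the-envelope sketch. The function name is ours, and it assumes the price is spread evenly across GPUs and years with no discounts applied.

```python
def per_gpu_per_year(system_price: float, gpus: int, term_years: int) -> float:
    """Spread a per-system support price evenly across GPUs and years.

    Assumption: no discounts, and every GPU-year costs the same.
    """
    return system_price / (gpus * term_years)


# Figures from NVIDIA's Q&A example: 2x A100 volume server, 3-year term.
cost = per_gpu_per_year(system_price=4299, gpus=2, term_years=3)
print(f"${cost:,.2f} per GPU per year")
```

That works out to about $716.50 per GPU per year, which lands at the low end of the $700-850 range.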

Overall, those comfortable with more DIY-style scale-out clusters will look at this and likely have little interest. For those who want this type of support, or those who need to build a vendor-supported infrastructure, this will likely have a lot of appeal. While NVIDIA is focusing on GPU and networking now, we can see this moving to DPUs and Arm-based servers like the Ampere Altra Wiwynn Mt. Jade Server in the future, assuming NVIDIA's Arm acquisition closes. To us, it would have been more interesting if Ampere were the initial launch platform for this since the Altra has a lot of PCIe connectivity and it would be a big boost to the AI on Arm story.

Here is the Q&A from NVIDIA on the offering.

NVIDIA Quick Q&A On the Offering

NVIDIA answered some follow-up questions that were not part of the original announcement but came via e-mail.

Q: Can customers contact NVIDIA directly for support?

A: There are two support paths the customer can take.

1. Customer contacts OEM server vendor first

- If OEM server vendor determines that the problem is an NVIDIA SW issue, we will request that the customer open a case with NVIDIA and provide the OEM server vendor case number as well in case we need to collaborate and reference the case.

2. Customer contacts NVIDIA first

- Customers can contact NVIDIA through the NVIDIA enterprise support portal, email or phone: https://www.nvidia.com/en-us/support/enterprise/

- If NVIDIA determines that the problem is an issue that the OEM server vendor is responsible for, we will request that the customer open a case with their OEM server vendor.

Q: Tell me more about pricing?

A: NVIDIA-Certified Systems software support is priced per system. Pricing varies depending on the system configuration. For example, for volume servers, 2-GPU A100 systems are priced at $4,299 per system with a 3-year support term that customers can renew.

Q: What’s the cost for OEMs to participate?

A: OEMs, or any partner for that matter, do not have to pay NVIDIA to participate in this program. This program is really meant to ensure that Enterprises can confidently acquire the systems to run their AI workloads at scale. To that extent, we have support from the OEMs as they recognize the value of providing NVIDIA-Certified Systems to help Enterprises with their accelerated computing needs and have agreed to jointly work with us on this. (Source: NVIDIA e-mail(s) to STH)