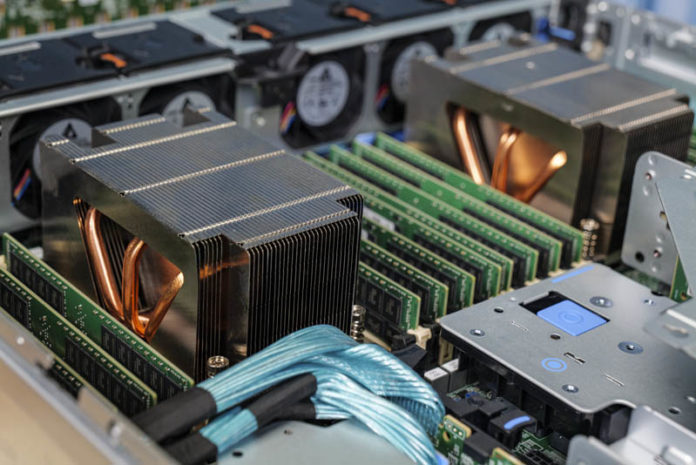

The Dell EMC PowerEdge R7525 is the fastest dual-socket mainstream x86 server you can buy today. To make that happen, Dell EMC is using a number of different tricks. The configuration we are testing has 24x NVMe SSDs, which make for an impressive storage array. Going beyond that, this server can handle up to 280W TDP, 64-core AMD EPYC 7H12 CPUs. When those CPUs launched almost a year ago, they were reserved primarily for customers building supercomputers. Adding to the server’s allure is an array of expansion options that further showcase the system’s capabilities. In our review, we are going to get into the details and show you why we came away impressed with the server.

Dell EMC PowerEdge R7525 Hardware Overview

Since our reviews now feature more images than in previous years, we split this section into external and internal views of the hardware.

We also have a video (above) that has B-roll showing more angles than we can do on the web. As always, we suggest opening the video in a new browser tab to listen along or check out views. As a quick note, we have previously done a few videos for this system in our AMD PSB piece as well as our 160 PCIe Lane Design piece.

Dell EMC PowerEdge R7525 External Overview

On the front of the system Dell sent, we see a “fancy” faceplate with its small status screen. This is an option that can be configured. Frankly, this look is shared with Dell’s Xeon servers of this generation and looks great. It is also costly. Standard features also include USB service ports as well as a monitor port for cold aisle service. The system can be configured with Quick Sync 2, which is Dell EMC’s option for fast setup via a mobile device, not to be confused with Intel’s QuickSync, which is an Intel GPU video transcoding technology.

On the front of our test system, we have 24x 2.5″ drive bays. Dell EMC has other offerings available, including those for SAS and SATA, as well as options with fewer drive bays. Some will not want this much drive bay connectivity, while others will certainly want as much storage as possible. As we would expect from a PowerEdge, this is highly configurable.

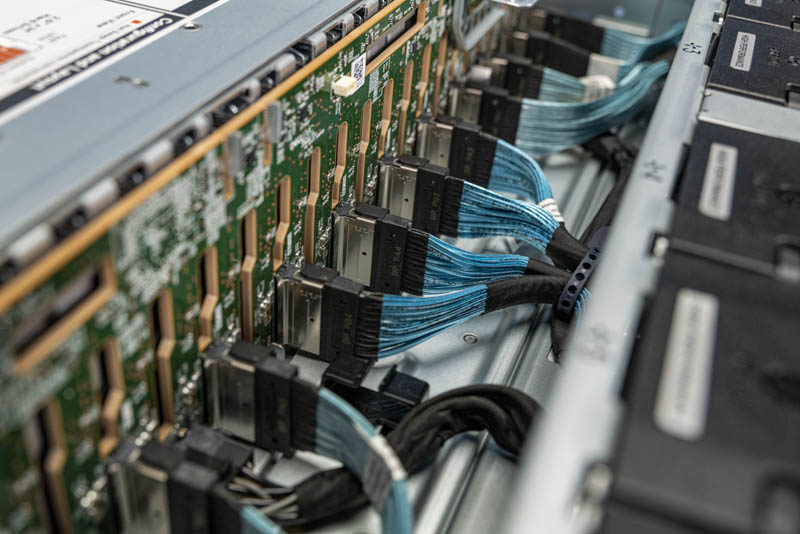

Something that is immediately different on this AMD EPYC server versus its Intel Xeon counterparts is that all 24x NVMe SSD bays are directly connected to the motherboard with dedicated lanes. Each bay gets a PCIe x4 link, so the 24 bays take 96 lanes in total. 96 is the total PCIe lane count we get in current 2nd Generation Intel Xeon Scalable systems like the Dell EMC PowerEdge R740xd. The big difference here is that we do not need PCIe switches to hit this PCIe lane count. The other, and perhaps more profound, difference is that we get PCIe Gen4 lanes with EPYC instead of the legacy PCIe Gen3 lanes with Intel Xeon.
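To put rough numbers on that lane math, here is a minimal Python sketch. It assumes the 160-lane platform total from the 160 PCIe Lane Design piece mentioned earlier applies to this configuration, and it uses the published PCIe signaling rates (8 GT/s for Gen3, 16 GT/s for Gen4, both with 128b/130b encoding). The function and constant names are our own, and the bandwidth figures are raw link ceilings, not measured drive throughput.

```python
# Back-of-the-envelope PCIe lane and bandwidth budget for the front drive bays.
# Assumptions (not measurements from this review):
#   - PLATFORM_LANES is the 160-lane dual-socket EPYC design referenced above.
#   - Per-lane rates are raw link ceilings (transfer rate x 128b/130b encoding);
#     protocol overhead and the SSDs themselves will lower real throughput.

DRIVE_BAYS = 24
LANES_PER_DRIVE = 4
PLATFORM_LANES = 160

TRANSFER_RATE_GT_S = {"gen3": 8.0, "gen4": 16.0}
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def lane_gbytes_per_s(gen: str) -> float:
    """Raw one-direction bandwidth of a single lane in GB/s."""
    return TRANSFER_RATE_GT_S[gen] * ENCODING_EFFICIENCY / 8


def storage_lanes(bays: int = DRIVE_BAYS, lanes_per_drive: int = LANES_PER_DRIVE) -> int:
    """Lanes consumed when every bay gets its own dedicated link."""
    return bays * lanes_per_drive


def raw_storage_bandwidth(gen: str) -> float:
    """Raw aggregate one-direction bandwidth across all drive lanes in GB/s."""
    return storage_lanes() * lane_gbytes_per_s(gen)


if __name__ == "__main__":
    used = storage_lanes()
    print(f"Lanes used by drive bays: {used}")
    print(f"Lanes left for risers, OCP NIC, etc.: {PLATFORM_LANES - used}")
    for gen in ("gen3", "gen4"):
        print(f"{gen}: {raw_storage_bandwidth(gen):.1f} GB/s raw across {used} lanes")
```

The point of the sketch is the ratio rather than the absolute figures: at the same 96-lane count, Gen4 doubles the raw link bandwidth available to the drives, and the switchless design still leaves lanes over for expansion.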

Since our internal overview section is quite long, we are just going to quickly mention here that the backplane itself is interesting. Dell is using a single 24x drive PCB here. Many other vendors in the market are using three 8x drive backplane PCBs. That is just a small design detail that immediately sticks out when you review a lot of servers.

On the rear of the system, we get normal PowerEdge flexibility. Instead of looking at either side, we wanted to focus on what we still get, even with 24x NVMe drives using 96 PCIe Gen4 lanes. In a system like the PowerEdge R740xd, we would have very limited expansion. Here, we have two full-height and two low-profile expansion slots along with an OCP NIC 3.0 slot. We will get into more detail on this in our internal overview, but a unique feature here is that none of the I/O, including the two 1GbE ports (Broadcom), the two SFP+ ports (Intel), and the iDRAC, USB, and VGA ports, is connected to the motherboard. Dell has a truly modular design.

On either side of the rear of the chassis, we have 1.4kW 80Plus Platinum Dell EMC power supplies. As we saw in our power consumption testing, this larger capacity is likely needed given how much one can configure in this system.

One other small note is that the rear of the system has a sturdy handle. One can remove this later, but it can also be used for a bit of cable management as a tie-down point if needed. If the low-profile card slots are used, cabling them may require removing this handle. Again, this is a small touch, but a nice one to see.

Next, we are going to look inside the system and see what it has to offer under the cover.

Speaking of course as an analytic database nut, I wonder this: Has anyone done iometer-style IO saturation testing of the AMD EPYC CPUs? I really wonder how many PCIe4 NVMe drives a pair of EPYC CPUs can push to their full read throughput.

I should have first said this: I want a few of these!!!

Patrick, I’m curious when you think we’ll start seeing common availability of U.3 across systems and storage manufacturers? Are we wasting money buying NVMe backplanes if U.3 is just around the corner? Perhaps it’s farther off than I think? Or will U.3 be more niche and geared towards tiered storage?

I see all these fantastic NVMe systems and wonder if two years from now I’ll wish I waited for U.3 backplanes.

The only thing I don’t like about the R7525s (and we have about 20 in our lab) is the riser configuration with 24x NVMe drives. The only x16 slots are the two half-height slots on the bottom. I’d prefer to get two full height x16 slots, especially now that we’re seeing more full height cards like the NVIDIA Bluefield adapters.

We’re looking at these for work. Thanks for the review. This is very helpful. I’ll send it to our procurement team.

This is the prelude to the next generation ultra-high density platforms with E1.S and E1.L and their PCIe Gen4 successors. AMD will really shine in this sphere as well.

We would set up two pNICs in this configuration:

Mellanox/NVIDIA ConnectX-6 100GbE Dual-Port OCPv3

Mellanox/NVIDIA ConnectX-6 100GbE Dual-Port PCIe Gen4 x16

Dual Mellanox/NVIDIA 100GbE switches (two data planes) configured for RoCEv2

With 400GbE aggregate in an HCI platform we’d see huge IOPS/Throughput performance with ultra-low latency across the board.

The 160 PCIe Gen4 peripheral-facing lanes are one of the smartest and most innovative moves AMD made. 24 switchless NVMe drives with room for 400GbE of redundant ultra-low latency network bandwidth is nothing short of awesome.

Excellent article Patrick!

Happy New Year to everyone at Serve The Home! :)

> This is a forward-looking feature since we are planning for higher TDP processors in the near future.

*cough* Them thar’s a hint. *cough*

Dear Wes,

This is focused on data IO for NAS/Databases/Websites. If you want full height x16 slots for AI, GPGPU or VMs with vGPU then you’ll have to look elsewhere for larger cases. You could still use the R7525 to host the storage & non-GPU apps.

@tygrus

There are full height storage cards (think computational storage devices) and full height Smart NICs (like the Nvidia Bluefield-2 dual port 100Gb NIC) that are extremely useful in systems with 24x NVMe devices. These are still single slot cards, not a dual slot like GPUs and accelerators. I’m also only discussing half length cards, not full length like GPUs and some other accelerators.

The 7525 today supports 2x HHHL x16 slots and 4x FHHL x8 slots. I think the lanes should have been shuffled on the risers a bit so that the x16 slots are FHHL and the HHHL slots are x8.

@Wes

Actually, the R7525 can also be configured with a riser config that allows a full-length configuration (on my configurator, Riser Config 3 allows that). I see that both when using SAS/SATA/NVMe and when using NVMe only. I also see that on the Dell US configurator, the NVMe-only configuration does not allow Riser Config 3. The Technical manual says such a configuration is not allowed, while the Installation and Service Manual lists it in several places. Take a look.

I configured mine with the 16 x NVMe backplane for my lab. 3 x Optane and 12 x NVMe drives. 1 cache + 4 capacity, 3 disk groups per server. I then have 5 x16 full-length slots. 2 x Mellanox 100GbE adapters, plus the OCP NIC and 2 x Quadro RTX8000 across 4 servers. Can’t wait to put them into service.

Hey There, what’s the brand to chipset?

Hi, can I ask the operational wattage of DELL R7525?

I can’t seem to find any way to make the Dell configurator allow me to build an R7525 with 24x NVMe on their website. Does anyone happen to know how/where I would go about building a machine with the configurations mentioned in this article?