Dell EMC PowerEdge R7525 Internal Overview

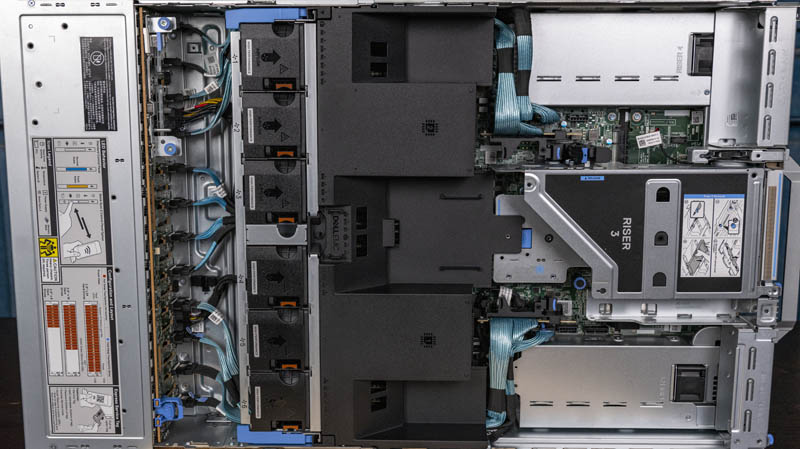

Here is a quick look at the system. We already discussed the front drive bays and backplane. We are going to move through the inside of the system from the front (pictured as left below) to the rear.

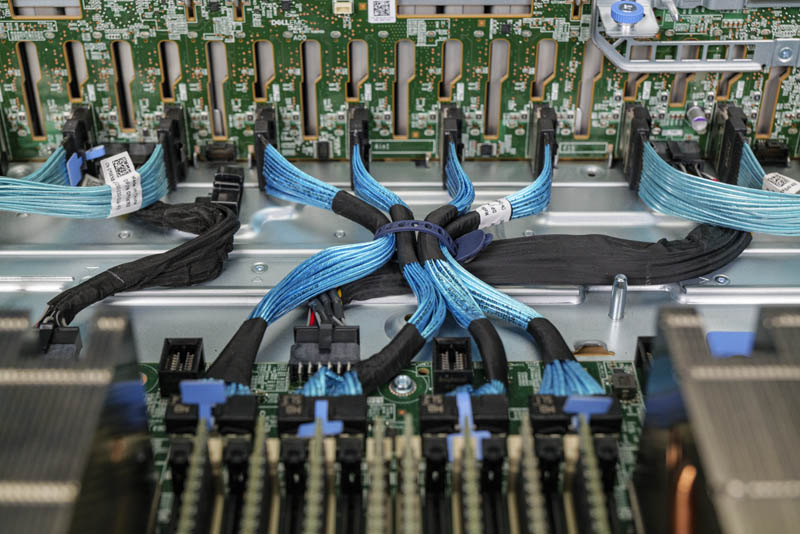

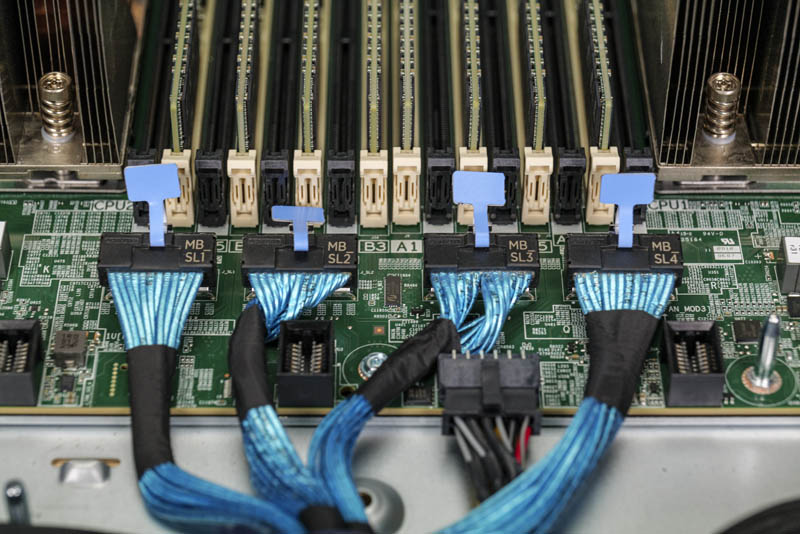

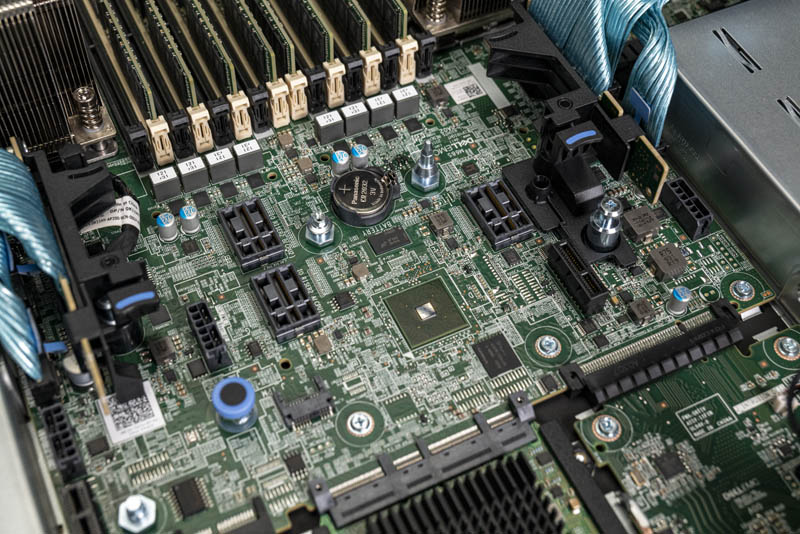

Behind the backplane, one will immediately notice the use of these blue cables. Each cable carries 8x PCIe Gen4 lanes, or enough for two PCIe x4 NVMe SSDs. A major impact of this design is that the PCIe lanes can be re-routed. If one is using a SAS controller and therefore does not need an extensive number of front-panel PCIe connectivity, these lanes can be re-purposed to provide connectivity elsewhere in the chassis.

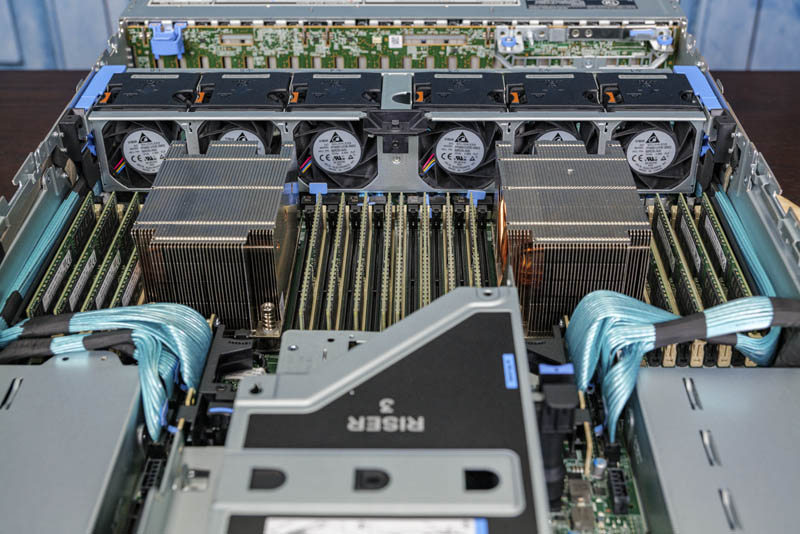

Behind the backplane is a fan partition. The fan partition has six fans that are hot swappable. As one can see, one can also remove the entire fan partition using two levers. This is demonstrated in the YouTube video briefly.

Airflow is directed by a molded guide. Behind the fans, and under that black airflow guide we get to the heart of the system.

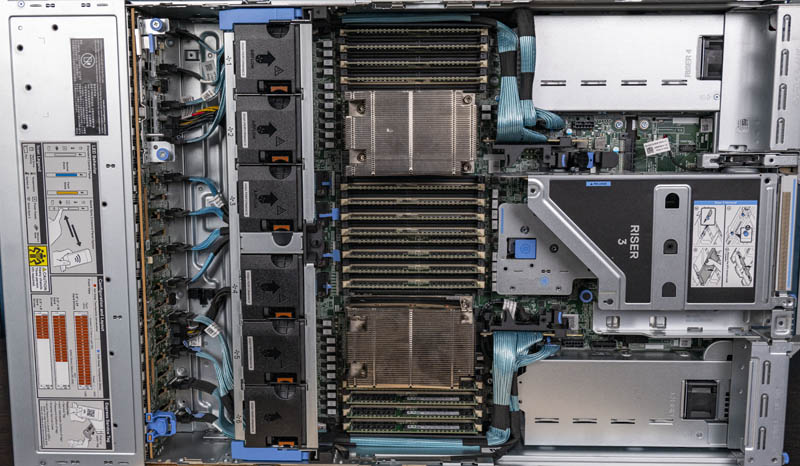

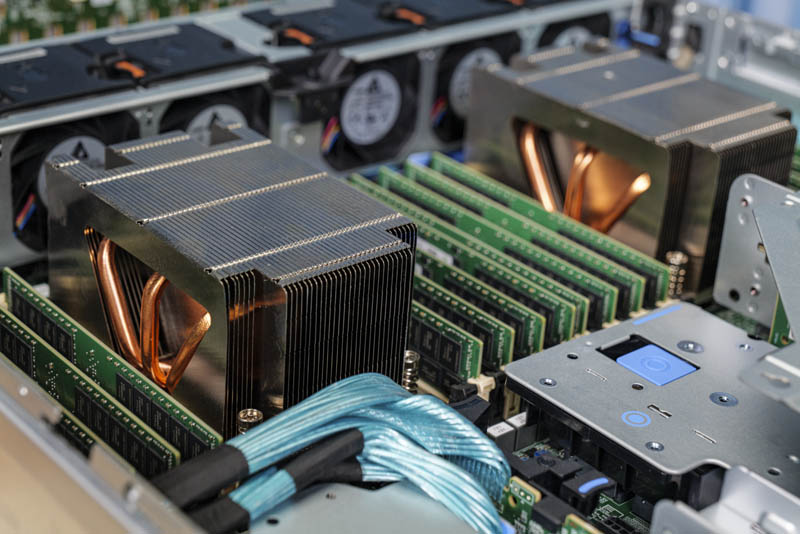

Inside the system we get two AMD EPYC 7002 series “Rome” processor sockets (SP3.) While we are reviewing the system during the EPYC 7002 generation, we expect this server to be capable of accepting the socket-compatible EPYC 7003 “Milan” generation as those launch, likely in Q1 2021.

One of the unique features of this server is that one can configure the system to either have four inter-socket XGMI Infinity Fabric links and 128x PCIe Gen4 lanes. Alternatively, one can take these lanes and configure three inter-socket XGMI Infinity Fabric links and get 160x PCIe Gen4 lanes as we have in our test system. This is a configurable option ad one can see the cabled headers here being routed to NVMe SSDs.

We did an entire piece and video on this feature if you want to learn more. For some context, next-generation Intel Xeon Scalable Ice Lake servers that will launch after the AMD EPYC 7003 series will only support 64x PCIe Gen4 lanes per socket for 128 total. This is an innovative way to put this configurable feature into a product by Dell that will make it more configurable than its next-generation of Intel Xeon servers.

With each AMD EPYC CPU we get 8-channel DDR4-3200 memory configurations with two DIMM per channel (2DPC) operation. As a result, 8-channels x 2 DIMMs per channel x 2 sockets gives us 32 DIMM slots. We have 16x 64GB ECC RDIMMs here, but each processor can technically support 128GB and 256GB LRDIMMs (Dell’s configuration options change and did not have larger DIMMs when we were writing this article, but we were assured larger capacities were coming.) That means one can get up to 4TB of memory per processor or 8TB in the server at its maximum. Unlike on the 2nd Generation Intel Xeon Scalable side, this does not incur an additional cost for a M or L SKU, although we covered that the 2nd Gen Intel Xeon Scalable M SKUs were discontinued and the Xeon L SKUs had lower prices due to this competition. We expect Ice Lake Xeons to re-think this memory capacity premium in large part due to this AMD EPYC competition. Ice Lake Xeons will also have 8-channel DDR4-3200 support reaching parity on that front.

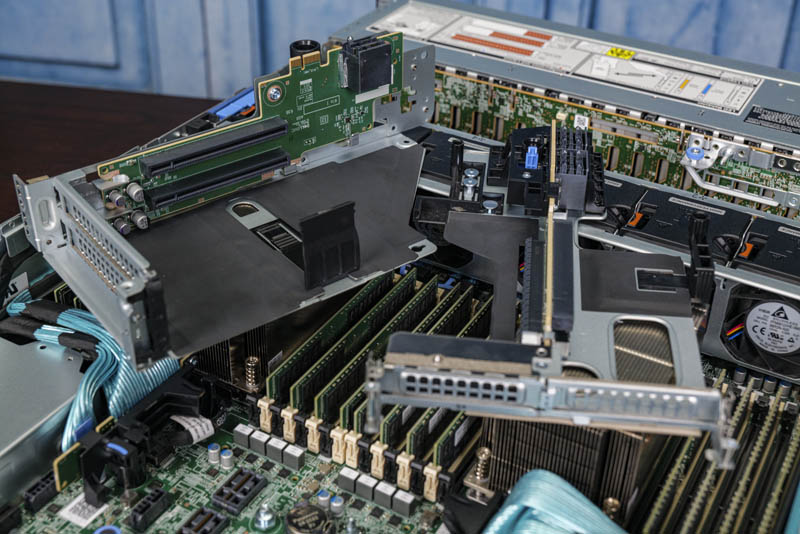

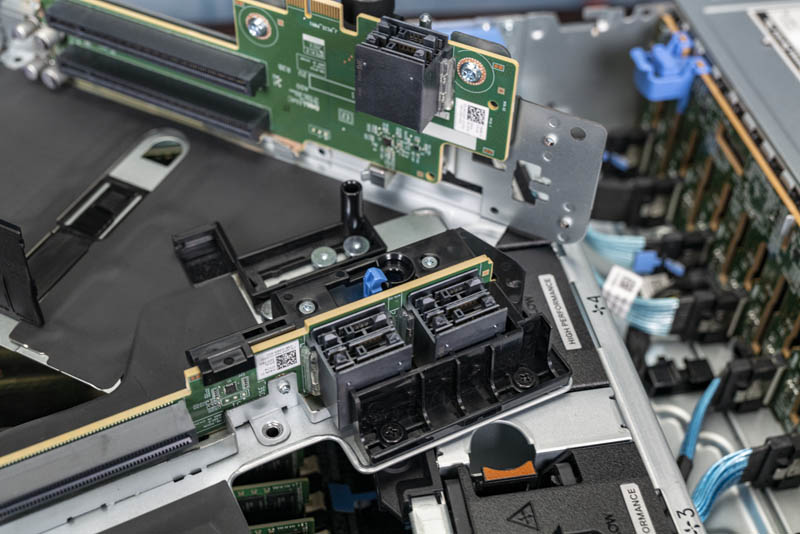

In terms of PCIe connectivity, we have two PCIe risers in our system offering both low-profile and full-height expansion slots. Even with the 24x front-panel NVMe SSDs, we get extra connectivity here. Next-generation systems have more PCIe connectivity as we already see with EPYC, we will see with Ice Lake soon, and we recently featured in our Ampere Altra Arm-powered Wiwynn Mt. Jade Server Review.

These risers are connected to the main PCB via high-density connectors. This deisign further adds to the flexibility of the platform. traditional x16 or x32 riser slots would likely not have fit on the motherboard.

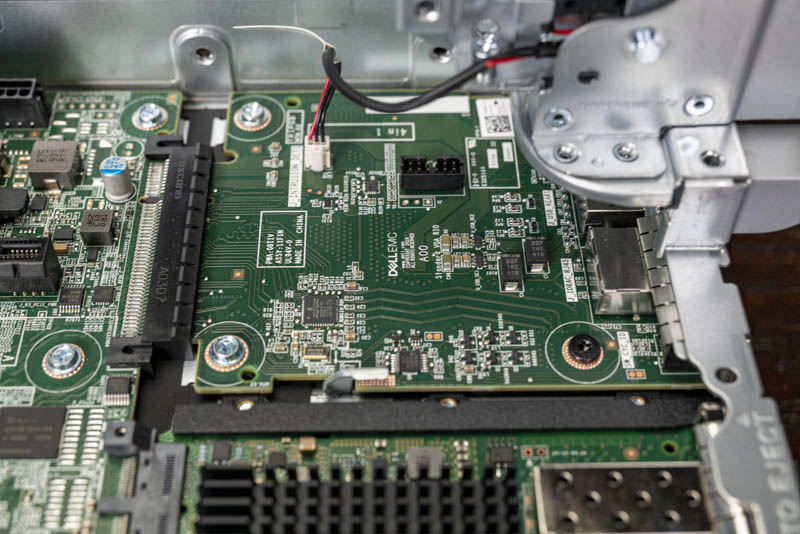

Here is a look at the motherboard. One can see the cabled connections at the outer edges. In our system these go to the NVMe SSDs, but they can be routed elsewhere for other connectivity based on configurations. The higher density connectors are inside that. We then have the battery and BMC inside of those features.

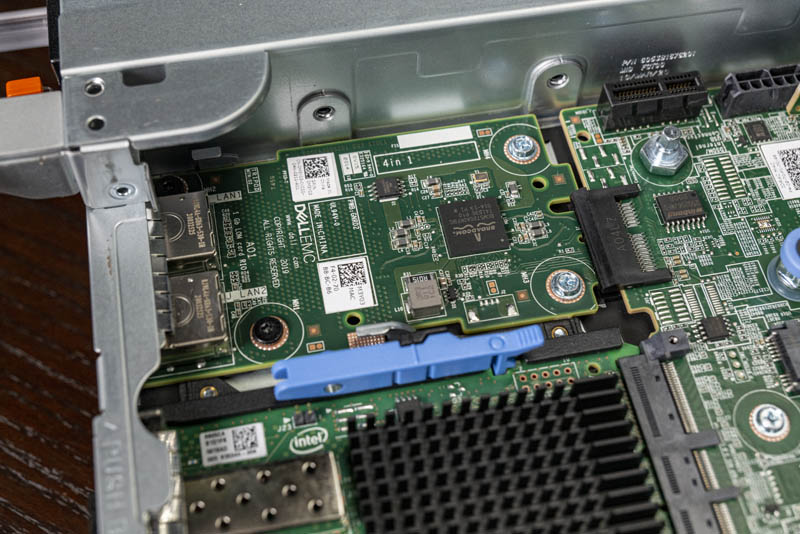

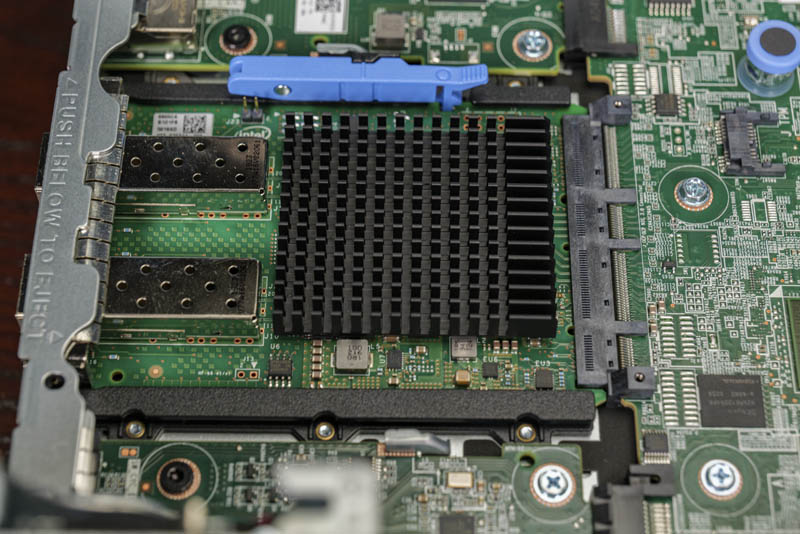

We mentioned this in our external overview, but one can see where the motherboard stops. It does not extend to the rear I/O portion of the chassis. Instead, all rear I/O is handled via customizable risers. For example, our base Broadcom networking is handled via its own PCB.

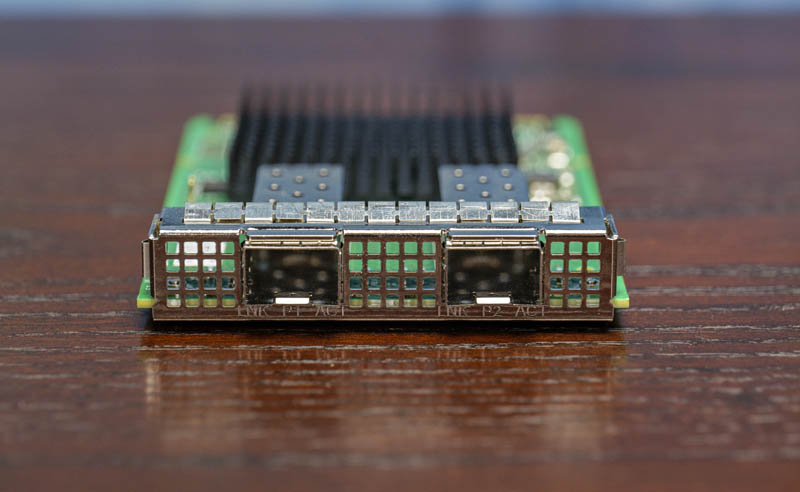

In the middle, we get the OCP NIC 3.0. This is a big deal in the PowerEdge ecosystem since this is the next-generation standard NIC form factor. Dell adopting this over a proprietary LOM is great to see.

Our particular unit is a dual 10GbE SFP+ Intel X710, but we have seen the OCP NIC 3.0 form factor with 100GbE NICs. We have a PCIe Gen4 x16 link here so there is plenty of bandwidth, but producing NICs that have low-enough power for 2x 100GbE/ 1x 200GbE connectivity is still a work-in-progress for the industry in this form factor.

Aside from the NICs, we also have the traditional rear I/O on its own flexible PCB. This PCB has features such as USB, VGA, the iDRAC management port, and chassis intrusion. Again, this is flexible so as to be customizable like the rest of the system.

While many of these may seem like small advances in server design, they add up to make an almost wildly configurable 2U server. With top-end core counts, memory capacity, and PCIe connectivity, this is effectively the flagship 2U dual-socket server in the PowerEdge line. Dell does not always market it as such, but this is clear from what we can see when looking at it.

Next, we are going to discuss the test configuration and management before getting into our performance testing.

Speaking of course as an analytic database nut, I wonder this: Has anyone done iometer-style IO saturation testing of the AMD EPYC CPUs? I really wonder how many PCIe4 NVMe drives a pair of EPYC CPUs can push to their full read throughput.

I should have first said this: I want a few of these!!!

Patrick, I’m curious when you think we’ll start seeing common availability of U.3 across systems and storage manufacturers? Are we wasting money buying NVMe backplanes if U.3 is just around the corner? Perhaps it’s farther off than I think? Or will U.3 be more niche and geared towards tiered storage?

I see all these fantastic NVMe systems and wonder if two years from now I’ll wish I waited for U.3 backplanes.

The only thing I don’t like about the R7525s (and we have about 20 in our lab) is the riser configuration with 24x NVMe drives. The only x16 slots are the two half-height slots on the bottom. I’d prefer to get two full height x16 slots, especially now that we’re seeing more full height cards like the NVIDIA Bluefield adapters.

We’re looking at these for work. Thanks for the review. This is very helpful. I’ll send to our procurement team

This is the prelude to the next generation ultra-high density platforms with E1.S and E1.L and their PCIe Gen4 successors. AMD will really shine in this sphere as well.

We would set up two pNICs in this configuration:

Mellanox/NVIDIA ConnectX-6 100GbE Dual-Port OCPv3

Mellanox/NVIDIA ConnectX-t 100GbE Dual-Port PCIe Gen4 x16

Dual Mellanox/NVIDIA 100GbE switches (two data planes) configured for RoCEv2

With 400GbE aggregate in an HCI platform we’d see huge IOPS/Throughput performance with ultra-low latency across the board.

The 160 PCIe Gen4 peripheral facing lanes is one of the smartest and most innovative moves AMD made. 24 switchless NVMe drives with room for 400GbE of redundant ultra-low latency network bandwidth is nothing short of awesome.

Excellent article Patrick!

Happy New Year to everyone at Serve The Home! :)

> This is a forward-looking feature since we are planning for higher TDP processors in the near future.

*cough* Them thar’s a hint. *cough*

Dear Wes,

This is focused towards the data IO for NAS/Databases/Websites. If you want full height x16 slots for AI, GPGPU or VM’s with vGPU then you’ll have to look elsewhere for larger cases. You could still use the R7525 to host the storage & non-GPU apps.

@tygrus

There are full height storage cards (think computational storage devices) and full height Smart NICs (like the Nvidia Bluefield-2 dual port 100Gb NIC) that are extremely useful in systems with 24x NVMe devices. These are still single slot cards, not a dual slot like GPUs and accelerators. I’m also only discussing half length cards, not full length like GPUs and some other accelerators.

The 7525 today supports 2x HHHL x16 slots and 4x FHHL x8 slots. I think the lanes should have been shuffled on the risers a bit so that the x16 slots are FHHL and the HHHL slots are x8.

@Wes

Actually R7525 can be configured also with Riser config allowing full length configuration (on my configurator I see Riser Config 3 allow that). I see that both when using SAS/SATA/Nvme and when using NVME only. I also see that on DELL US configurator NVME only configuration does not allow Riser Config 3. Looking at Technical manual, I see that such configuration is not allowed while looking on at the Installation and Service Manual such configuration is listed in several parts of the manual. Take a look.

I configured mine with the 16 x NVMe backplane for my lab. 3 x Optane and 12 by NVMe drives. 1 cache + 4 capacity, 3 disk groups per server. I then have 5 x16 full-length slots. 2 x Mellanox 100Gbe adapters, plus the OCP NIC and 2 x Quadro RTX8000 across 4 servers. Can’t wait to put them into service.

Hey There, what’s the brand to chipset?

Hi, can I ask the operational wattage of DELL R7525?

I can’t seem to find any way to make the dell configurator allow me to build an R7525 with 24x NVMe on their web site. Does anyone happen to know how/where I would go about building a machine with the configurations mentioned in this article?