NVIDIA has a new add-in card GPU accelerator. The NVIDIA A100 PCIe is an Ampere-generation GPU for the PCIe interface, and it is set to be a popular option in many markets. This move was widely expected, as PCIe GPUs are still extremely popular. While the company’s SXM modules, such as the ones we saw in the Inspur NF5488M5 and Gigabyte G481-S80 servers, can utilize GPU-to-GPU NVLink, PCIe GPUs are easier to integrate into existing servers.

NVIDIA A100 PCIe Add-in Card Launched

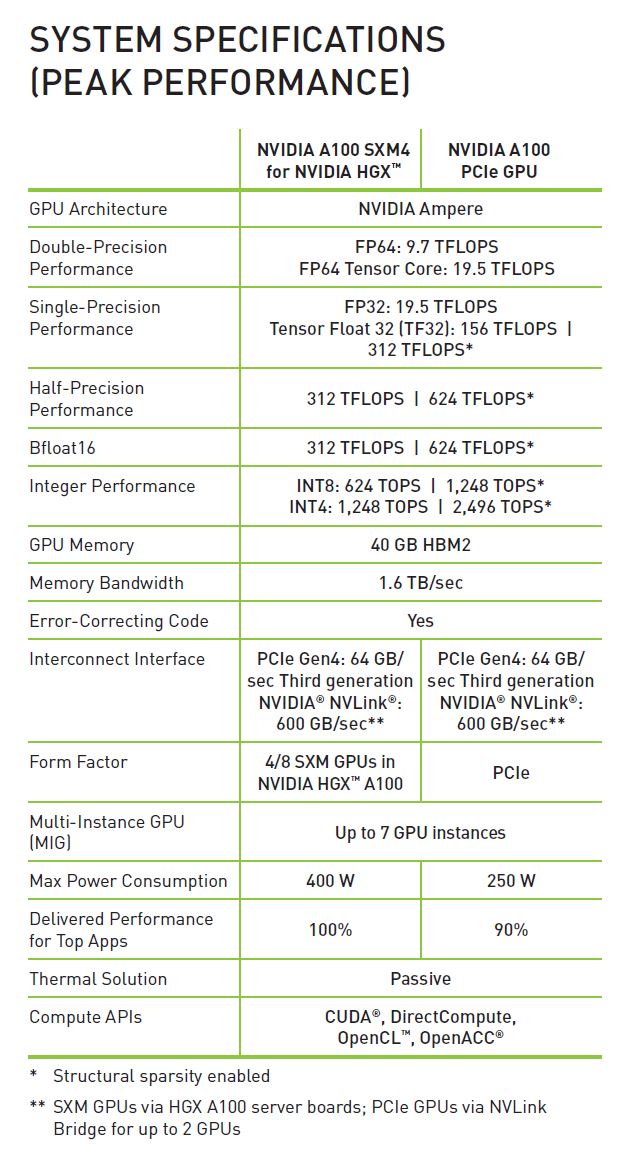

The new NVIDIA A100 PCIe GPUs are rated for up to 250W operation. They are also based on PCIe Gen4. They will work with current-generation Intel Xeon systems, but those platforms are limited to PCIe Gen3, so the cards need AMD EPYC 7002 Series “Rome” CPUs to realize their full host-facing bandwidth.
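To put the Gen3 versus Gen4 difference in numbers, here is a rough back-of-the-envelope sketch. It assumes a x16 link and uses the published per-lane transfer rates (8 GT/s for Gen3, 16 GT/s for Gen4) with 128b/130b encoding. The function name is our own, and the figures are theoretical per-direction maximums before protocol overhead, not measured throughput.

```python
# Theoretical per-direction PCIe bandwidth, before protocol overhead.
# Per-lane transfer rates (GT/s) for each generation.
TRANSFER_RATE_GT_S = {"Gen3": 8.0, "Gen4": 16.0}

# Gen3 and Gen4 both use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def pcie_bandwidth_gb_s(generation: str, lanes: int = 16) -> float:
    """Return the theoretical one-way bandwidth in GB/s (x16 assumed by default)."""
    usable_gbit_per_lane = TRANSFER_RATE_GT_S[generation] * ENCODING_EFFICIENCY
    return usable_gbit_per_lane * lanes / 8  # 8 bits per byte


for gen in ("Gen3", "Gen4"):
    print(f"PCIe {gen} x16: {pcie_bandwidth_gb_s(gen):.2f} GB/s per direction")
```

Under these assumptions, a Gen3 x16 slot tops out at roughly half the host bandwidth of a Gen4 x16 slot, which is why the platform choice matters for this card.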

NVIDIA is bringing a number of new technologies to this generation. With the NVIDIA A100 built on 7nm technology with 40GB of Samsung HBM2, the company was able to push transistor counts and add new acceleration for existing FP32/FP64 workloads as well as for new AI workloads. NVIDIA highlights a 20X gain in its slide, but that figure looks at some fairly specific areas where new accelerators were introduced.

With a 250W TDP, the card does not have the same power and thermal headroom as the SXM variants. With SXM power rising to 400W, there is a growing delta between the performance of PCIe and SXM-based solutions. In the official specs, peak performance is listed as the same, but we would expect sustained performance to differ because of the lower TDP.

Final Words

PCIe cards are more important for the reseller and VAR community in this generation. With the NVIDIA A100 4x GPU HGX Redstone Platform and the NVIDIA DGX/HGX A100 NVSwitch platform, server OEMs control the market for NVIDIA GPU servers more than in previous generations. With the PCIe cards, a VAR can purchase a system and integrate the GPUs separately. That is a subtle point, but it is one of the most important changes to the NVIDIA A100 data center sales and distribution model.
