Dell EMC PowerEdge XE8545 NVIDIA A100 4-GPU Redstone Platform

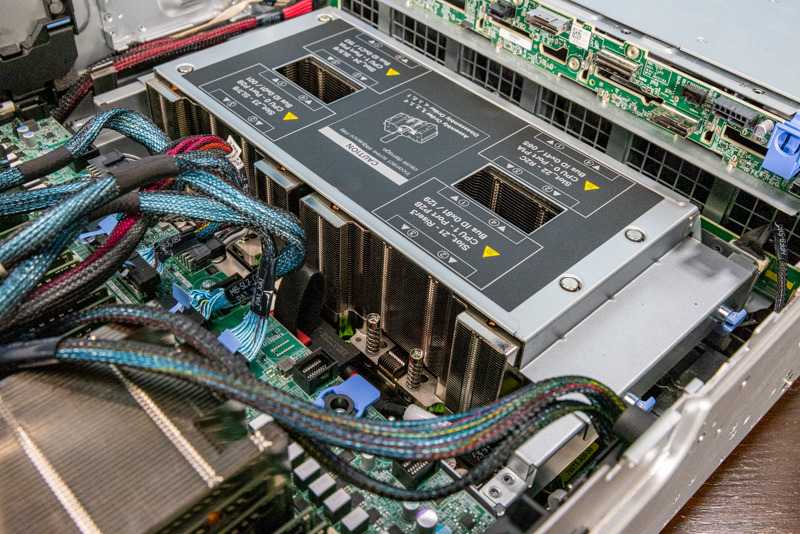

Let us now get to the big feature of this server, the NVIDIA Redstone platform. For those who are unaware, NVIDIA's top-end GPU is the NVIDIA A100. The A100 has two primary form factors: PCIe and SXM4. PCIe is the lower-end form factor, which prioritizes flexibility over performance; that is the version Dell uses in the PowerEdge R750xa. The XE8545, however, uses the higher-end SXM4 GPUs.

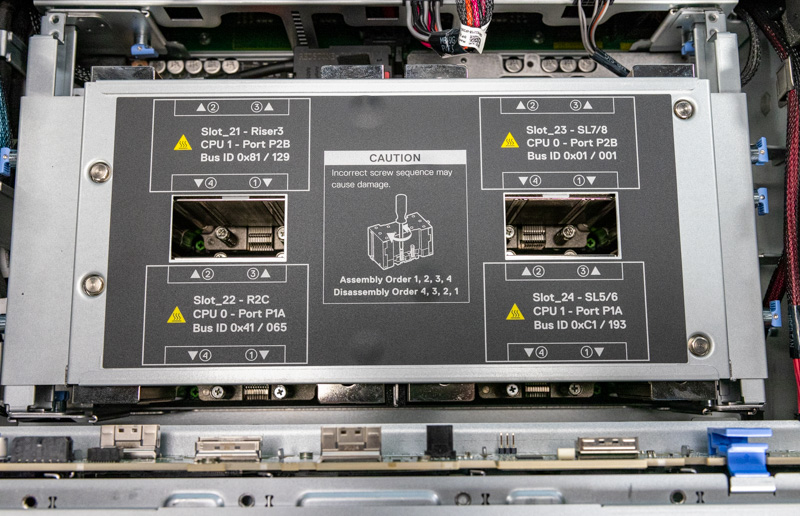

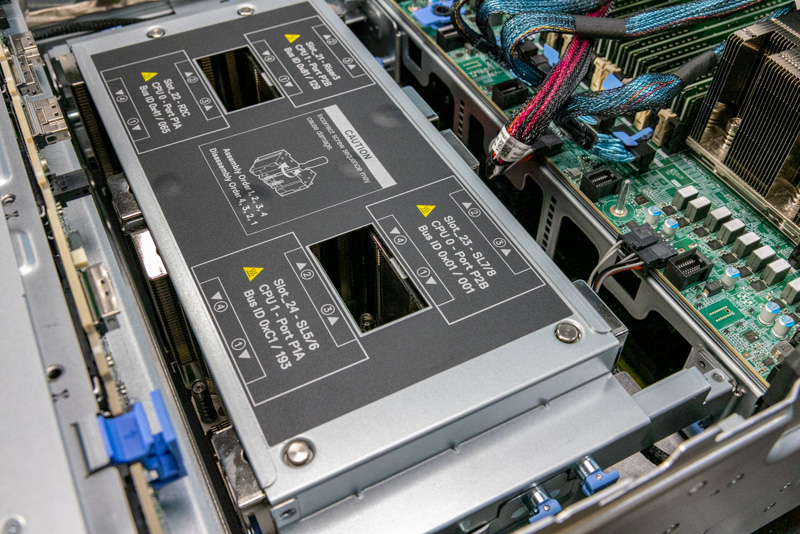

Within the SXM4 ecosystem, there are two main types of platforms: Redstone, which is used here, and Delta. Redstone places four SXM4 GPUs onto a PCB and is sold to vendors, like Dell, as a complete unit. Each of the four GPUs has direct NVLink connections to the other three GPUs. Each GPU also has a direct PCIe Gen4 link to a CPU, with two GPUs attached to each CPU. The higher-end Delta platform adds NVSwitch for a switched NVLink fabric, as well as PCIe Gen4 switches to handle twice as many GPUs (eight instead of four). Many applications benefit from the lower latency of not having switches in the CPU-to-GPU path, which is why Redstone is an important platform.
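
As a minimal sketch, the Redstone topology described above can be modeled as a small graph: a full NVLink mesh among four GPUs, plus one direct PCIe Gen4 uplink per GPU with two GPUs per CPU socket. Which GPU number attaches to which socket is an assumption for illustration, and NVLink connections are counted per GPU pair rather than per physical link.

```python
from itertools import combinations

NUM_GPUS = 4  # Redstone carries four SXM4 modules
NUM_CPUS = 2  # dual-socket host

# Full mesh: every GPU pair gets a direct NVLink connection (no NVSwitch).
nvlink_pairs = list(combinations(range(NUM_GPUS), 2))

# Assumed mapping: GPUs split evenly across sockets, two per CPU.
# The specific GPU-to-socket numbering is hypothetical.
pcie_host = {gpu: gpu // (NUM_GPUS // NUM_CPUS) for gpu in range(NUM_GPUS)}


def nvlink_peers(gpu: int) -> list[int]:
    """Return the GPUs that this GPU reaches over a direct NVLink connection."""
    return sorted({b if a == gpu else a for a, b in nvlink_pairs if gpu in (a, b)})


if __name__ == "__main__":
    print(f"NVLink GPU pairs: {len(nvlink_pairs)}")
    for gpu in range(NUM_GPUS):
        print(f"GPU{gpu}: NVLink peers {nvlink_peers(gpu)}, PCIe Gen4 to CPU{pcie_host[gpu]}")
```

A full mesh of n GPUs needs n(n-1)/2 point-to-point connections: 6 for four GPUs, but 28 for eight, which is one way to see why the eight-GPU Delta design moves to a switched NVSwitch fabric instead.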

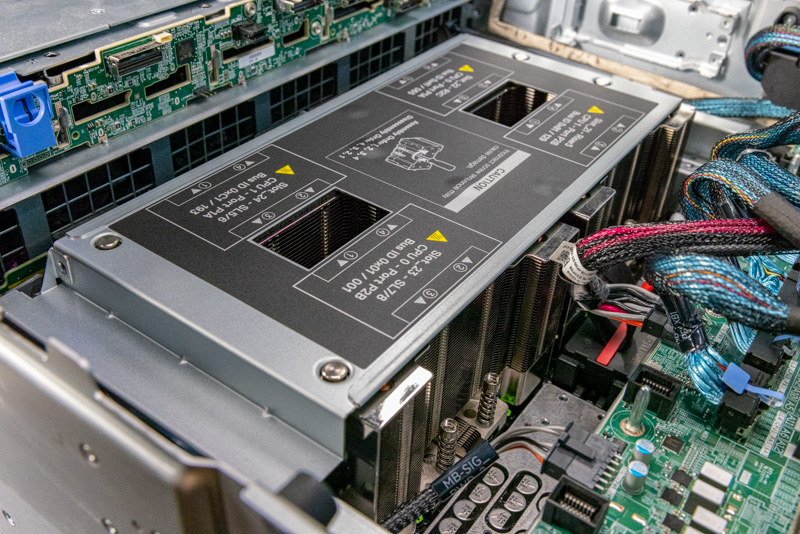

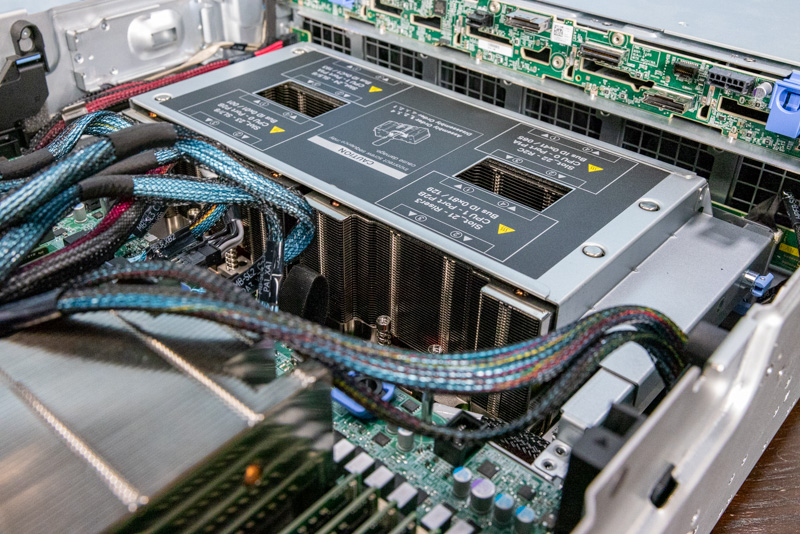

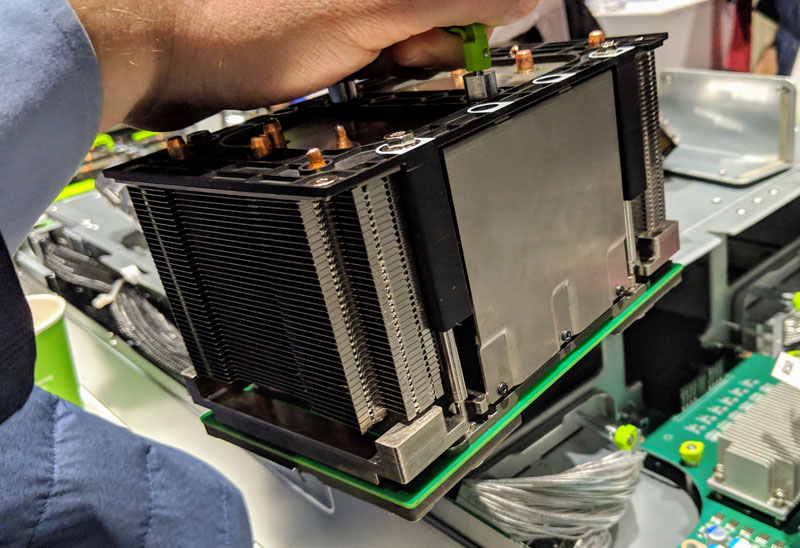

One can see the big Redstone assembly in the photos around this discussion. NVIDIA actually sells this entire assembly to Dell, and Dell integrates it into the PowerEdge XE8545. This is different from PCIe GPUs, where Dell purchases individual PCIe cards and integrates those. Redstone is instead purchased as a complete assembly, and Dell then needs to provide its data and power connectivity along with cooling.

One can see that the Redstone assembly sits in the lower part of the chassis so it can get direct airflow from the 3U fan wall.

We have mentioned “Delta” several times. You can see what one of those platforms looks like in our Inspur NF5488A5 8x NVIDIA A100 HGX platform review.

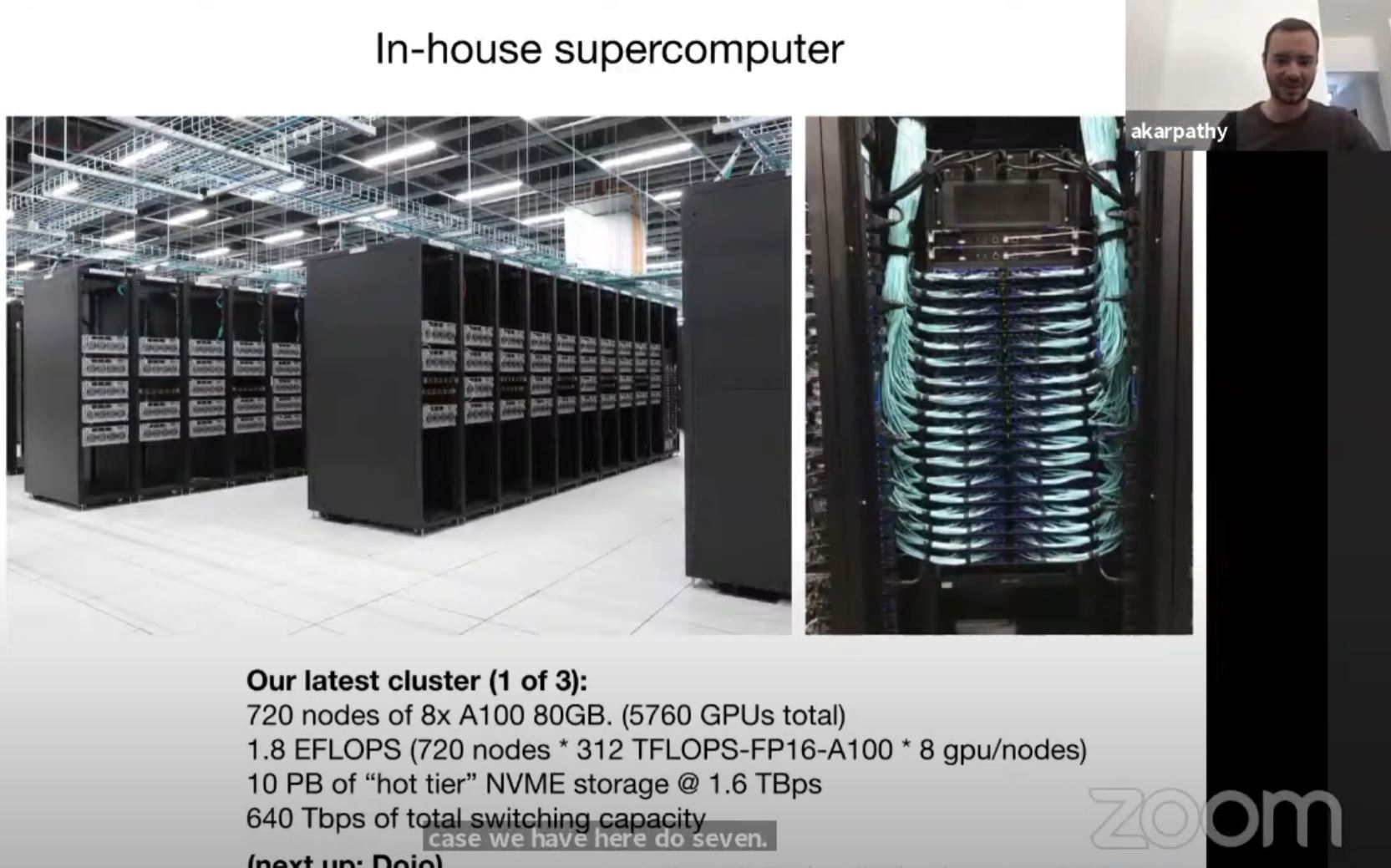

For those wondering, Redstone is often the better HPC-focused platform due to its lower latency, while Delta is favored by AI pioneers. Here is Tesla's autonomous driving AI cluster, which uses Supermicro Delta platforms.

Dell does not have a standard PowerEdge "NVIDIA Delta" platform for high-end AI training, but those systems tend to be sold in clusters with a different margin profile than Dell usually seeks, so perhaps that makes sense. For the time being, if you want the high-end SXM4 GPUs from Dell, the XE8545 is the system to get.

Another innovation is that Dell is able to air cool both the 400W 40GB and the 500W 80GB SXM4-based A100 modules, but there is a catch. The 400W 40GB modules support ambient data center temperatures of up to 35C, while the 500W 80GB modules require an ambient of no more than 28C. In our lab, we usually run at just over 27C, so we could air cool the 500W modules, but we would likely want to ask the data center operator for more cooling to the racks to get a bigger margin.
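
To make the margin concrete, here is a minimal sketch that compares a room's ambient temperature against the two limits quoted above. The 27.5C room value is a hypothetical stand-in for "just over 27C", and the function name is illustrative only.

```python
# Maximum ambient data center temperature (C) for air cooling each A100
# SXM4 module option in the XE8545, as quoted in this review.
MAX_AMBIENT_C = {
    "A100 40GB 400W": 35.0,
    "A100 80GB 500W": 28.0,
}


def thermal_headroom_c(module: str, ambient_c: float) -> float:
    """Degrees C between the room's ambient temperature and the module's limit.

    A negative result means the room is too warm to air cool that module.
    """
    return MAX_AMBIENT_C[module] - ambient_c


if __name__ == "__main__":
    lab_ambient_c = 27.5  # hypothetical stand-in for "just over 27C"
    for module in MAX_AMBIENT_C:
        print(f"{module}: {thermal_headroom_c(module, lab_ambient_c):+.1f} C headroom")
```

With a 27.5C room, the 500W module is left with only 0.5C of headroom, versus 7.5C for the 400W module.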

The future of these systems is liquid cooling. We recently looked at Intel Ponte Vecchio, a spaceship of a GPU that is coming in 2022. We expect 2022-generation GPUs to use 600W or more, at which point liquid cooling will become necessary. That is why, a few weeks ago, we did a piece looking at liquid cooling in the data center and three different options. Specifically, there we showed the power and performance difference between the air-cooled A100 40GB 400W and the liquid-cooled A100 80GB 500W on NVIDIA Delta platforms.

In this generation, Dell can still cool 500W GPUs on air, and there is a chance that lower-density 4x GPU configurations will continue to use air cooling. Facebook was looking at air cooling next-generation 500W-600W accelerators a few years ago.

Perhaps the point is that Dell is quickly running into the limits of air cooling in a platform like this. It is not just Dell; other vendors are as well. Our suggestion is that if you are a Dell customer planning GPU server deployments in 2022, reach out to your Dell sales rep now to find out whether, and what type of, cooling will be required for next year's purchases. In 2022, organizations that want high-end accelerators like those in the XE8545 but did not plan for the power and liquid cooling they will require will be at a disadvantage. If you are looking at the XE8545 today, remember that the industry has line-of-sight to 1kW accelerators and much higher-power CPUs. Power and cooling in this class of system will become a big deal and may require facility changes that take months to complete.
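
A quick back-of-the-envelope sketch shows how fast the GPU power of a four-module board grows. It multiplies the module TDPs mentioned in this review by four; the 1000W entry is a hypothetical stand-in for the "1kW accelerators" on the horizon, and the totals deliberately exclude CPUs, fans, NICs, and drives.

```python
# GPU module TDPs mentioned in this review (watts). The 1000W entry is a
# hypothetical stand-in for future "1kW accelerators".
MODULE_TDP_W = {
    "A100 40GB (air)": 400,
    "A100 80GB (air)": 500,
    "hypothetical 1kW accelerator": 1000,
}
GPUS_PER_BOARD = 4  # Redstone-style board with four SXM modules


def board_gpu_power_w(tdp_w: int, gpus: int = GPUS_PER_BOARD) -> int:
    """GPU-only power for one board; excludes CPUs, fans, NICs, and drives."""
    return tdp_w * gpus


if __name__ == "__main__":
    for name, tdp in MODULE_TDP_W.items():
        print(f"{name}: {board_gpu_power_w(tdp)} W of GPU TDP per system")
```

Going from 400W to a hypothetical 1kW module takes the GPUs alone from 1.6kW to 4kW per system, before any CPU or cooling overhead.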

Next, let us get to the basic topology, management, performance, and power before getting to our final words.
