Going through the June 2018 Top500 list, we saw a few trends. Instead of covering the entire list, we are going to focus this article on the 133 systems that are new to the June 2018 Top500 supercomputers list. Looking only at the new entries highlights what is being deployed now, even though 367 systems have been on the list since at least November 2017. Here are a few key trends that we saw.

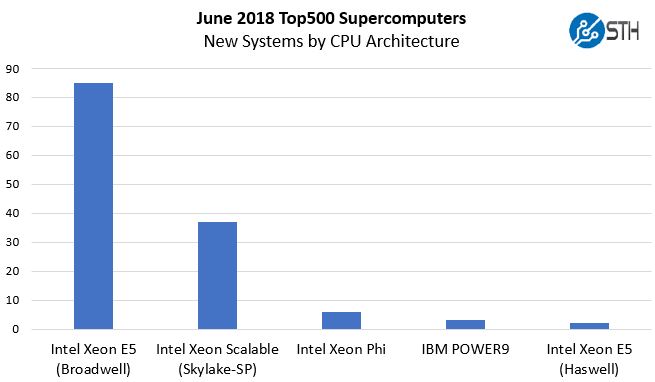

CPU Architectures

Intel Xeon Scalable (Skylake-SP) launched in July 2017. You can see STH’s coverage at Intel Xeon Scalable Processor Family (Skylake-SP) Launch Coverage Central. Almost a year later, one may expect that the new generation of CPUs has taken over. Instead, Intel Xeon Scalable is a distant second place on the new systems list.

Here is a breakdown based on CPU generation:

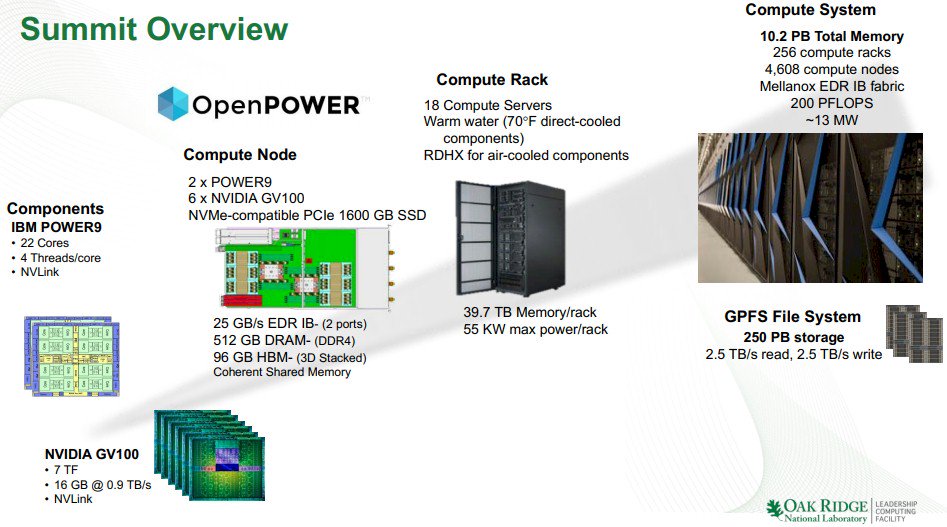

IBM POWER9 holds two of the top 3 spots. The number one spot is held by the CORAL program Summit supercomputer.

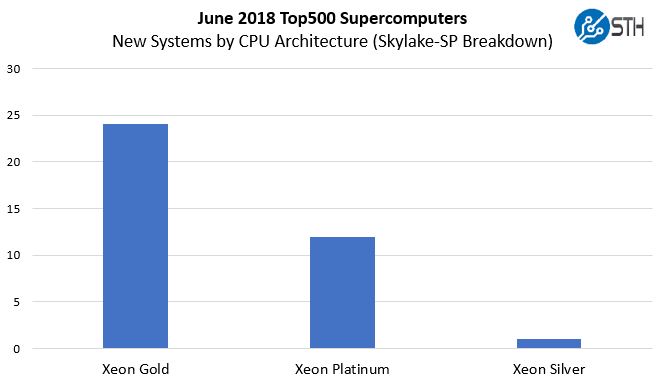

Beyond these two spots, the interesting part is the Intel Xeon breakdown. The vast majority are Intel Xeon E5 V3 / V4 generation parts. This is driven by hosting clusters that a few vendors get onto the list to boost their presence. For the new Intel Xeon Scalable systems, here is a breakdown of the CPUs by family:

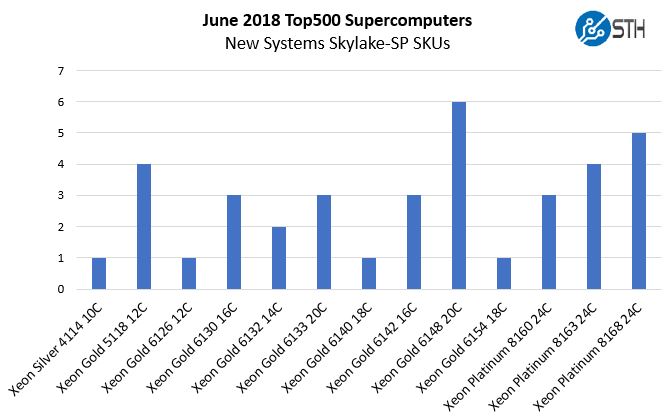

Here is the breakdown by SKU:

For those wondering about the Xeon Silver 4114 and the Xeon Gold 5118 systems, they are an interesting mix. The Intel Xeon Silver 4114 system is an Inspur system at #137, paired with NVIDIA Tesla V100 GPUs and FDR InfiniBand, installed at a Chinese Internet service provider. The Intel Xeon Gold 5118 systems are all Sugon systems installed in China, ranked between #328 and #485 on the Top500 list. Two of them are 10GbE clusters (more on those shortly) while the other two utilize Tesla P100 and Tesla V100 generation GPUs with FDR and EDR InfiniBand respectively. Three of those five Xeon Silver and Xeon Gold 5100 systems are among the 26 NVIDIA accelerated systems.

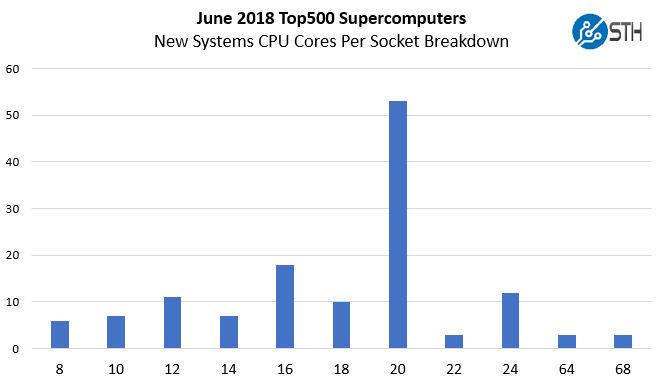

CPU Cores Per Socket

With today’s CPUs hitting core counts of 28 cores for Intel Xeon Scalable, 32 cores for AMD EPYC 7000 and Cavium ThunderX2, and even more for some of the more exotic architectures, one may assume that these systems top the list. Instead, we find a more modest core count.

The 22 core parts are all IBM POWER9. The 60+ core parts are the six Intel Xeon Phi 7200 series based machines. HPC workloads are often not CPU core bound, so it is no surprise that the lower core count parts are popular. In the STH lab, we have Intel Xeon Gold 6148F parts that we used for pieces like the Intel Skylake Omni-Path Fabric Does Not Work on Every Server and Motherboard buyer’s guide. Those are common chips with 20 cores and onboard Intel Omni-Path 100Gbps fabric (OPA100).

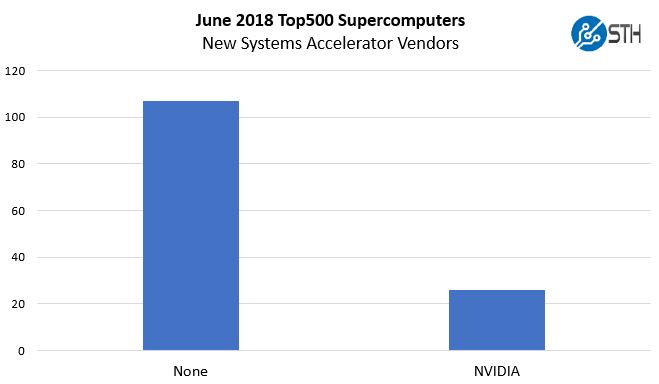

Accelerators or Just NVIDIA?

Outside of the 133 new systems, there are other accelerators on the list. Within the subset of 133 new systems, NVIDIA is the only accelerator vendor.

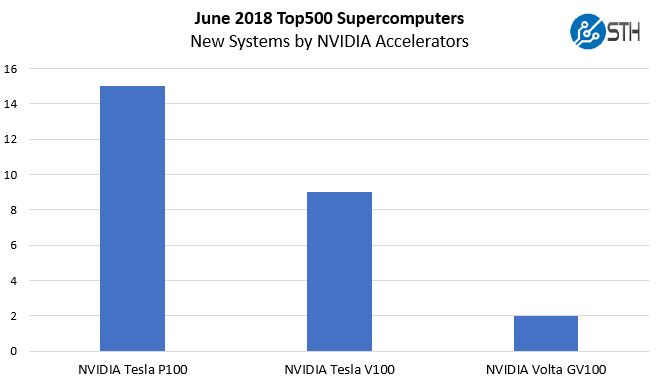

Although NVIDIA has been pushing the Tesla V100 for about a year, only 11 of the 133 new systems are “Volta” accelerated clusters, counting both V100 and GV100 parts. That number is less than one may have expected given how heavily NVIDIA is touting its Volta generation as the best HPC chip out there.

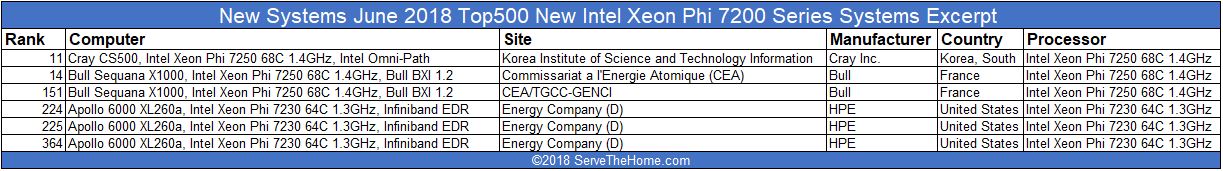

Intel Xeon Phi: Two New Top 15 Systems

The Intel Xeon Phi 7200 series was essentially killed after Knights Mill launched in late 2017. As we would expect, there are no new Knights Mill systems on the list, but there are Knights Landing systems that made it.

We did not see any PCIe accelerator Knights Landing systems on the new list, which makes sense. A major benefit of KNL is the ability to avoid PCIe data transfers and perform multi-core accelerated computing on the host chip.

On the other hand, AVX-512 has found its way to Intel Xeon Scalable CPUs and is competitive with Knights Landing, except for the lack of high speed dedicated memory. At STH we reviewed the Intel Knights Landing developer system over a year ago before the product line was effectively canceled.

Still, it is interesting that NVIDIA’s newest AI and HPC “Volta” architecture was found in only 11 new systems while the sunset Xeon Phi 7200 still notched six new systems.

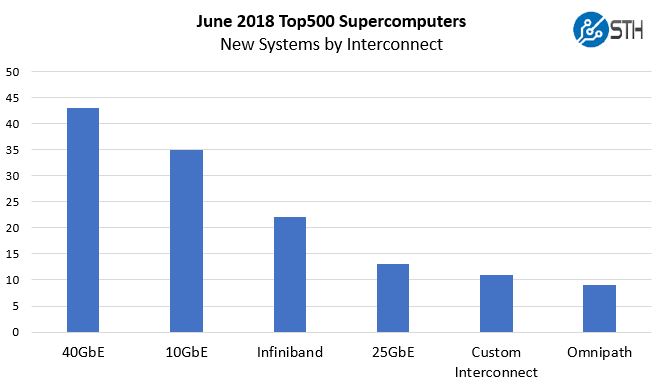

Fabric and Networking Trends

Most may logically assume that a technology like Mellanox InfiniBand or Intel Omni-Path is the top choice for fabric, but neither is, and it is not even close. The top interconnect technology is Ethernet, the same technology you are likely using to get to this content.

There is a nasty trend on the Top500 list to take a cluster, be it web hosting or otherwise, and run Linpack on it. These are not “supercomputers” in the sense that they run traditional HPC workloads. Instead, they are bragging rights entries.

We covered this topic last year in our piece Why 25GbE Now Claims 19 spots in the Top500 List. If you were wondering whether this happened yet again, it did.

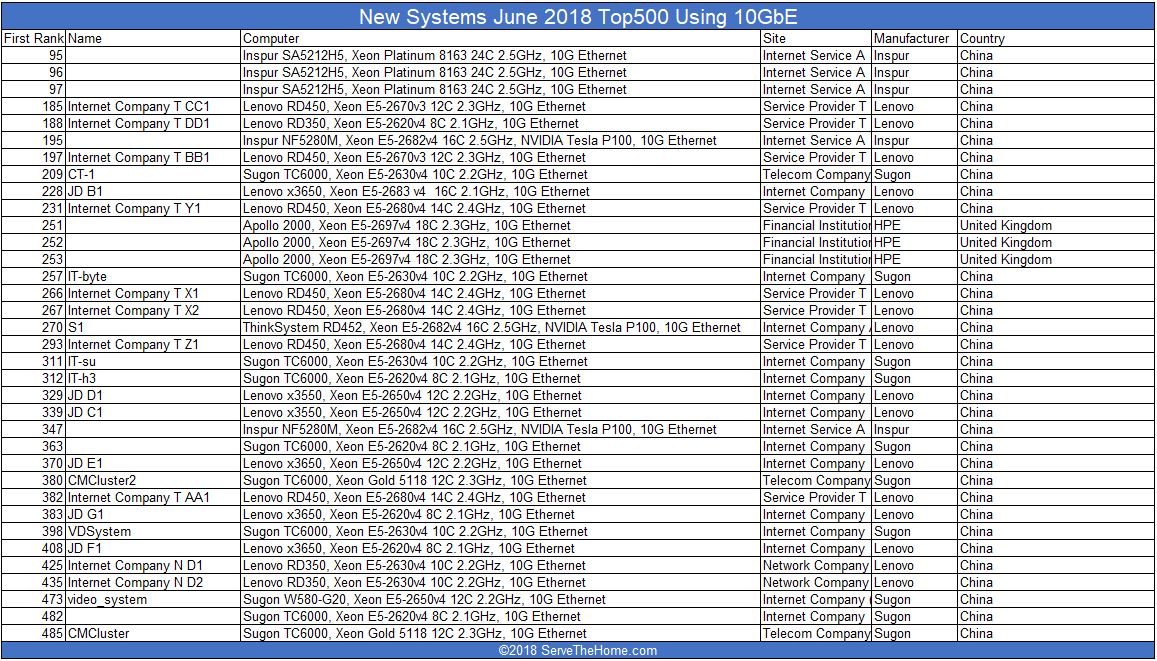

10GbE is essentially “free” in the server world today. Intel Xeon Scalable systems with their Lewisburg PCHs can get access to 10GbE. You can read about that in our Burgeoning Intel Xeon SP Lewisburg PCH Options Overview. Intel Xeon D systems have had 10GbE in both the Xeon D-1500 and Xeon D-2100 series generations. (As an aside, there are two Xeon D-1500 series Top500 supercomputers.) Even the “lowly” Intel Atom C3000 series has 10GbE built-in. If you think of a server node as costing thousands of dollars, adding 10GbE to a modern system is a sub 1% cost difference on most nodes. In the world of Ethernet, 10GbE is now the commodity low-end, and yet 35 of the 133 new Top500 systems use it. Here is the list:

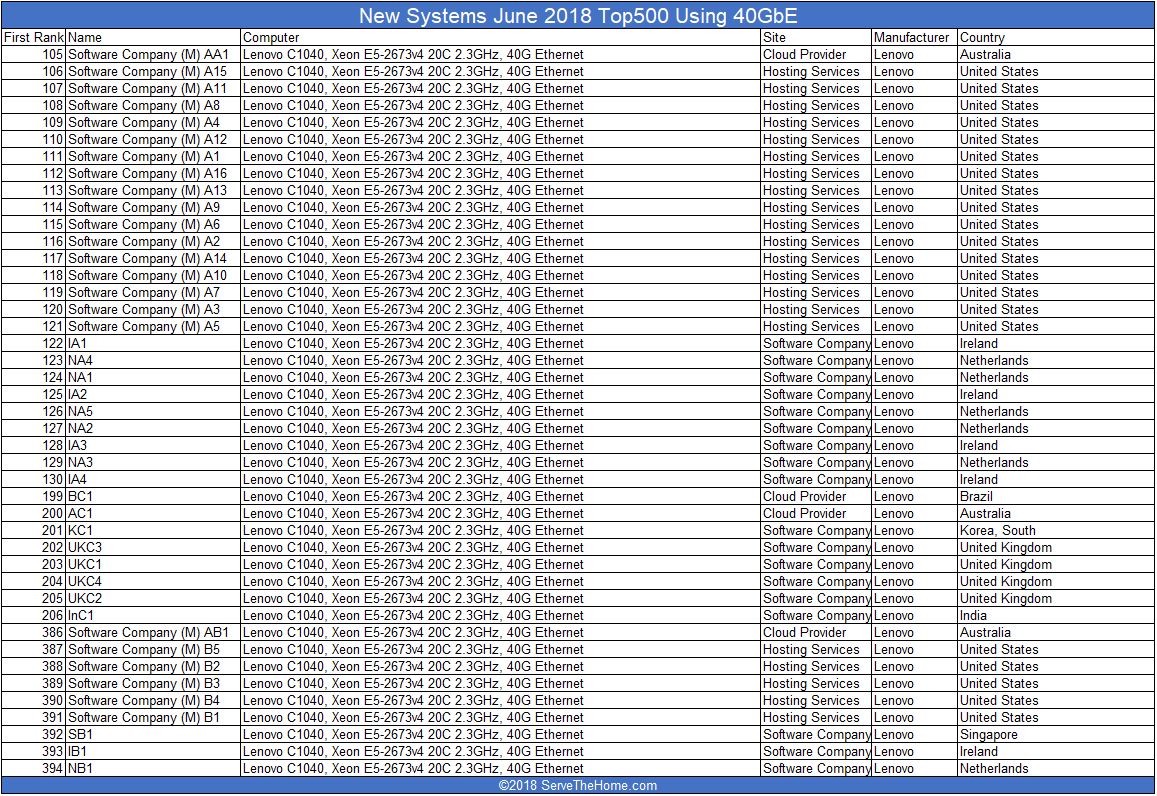

You may see a pattern emerging here. If you cannot, let us look at 40GbE. This is what the STH lab has begun to transition away from, as 25GbE and 100GbE are newer technologies. Here is the 40GbE list of 43 new supercomputers:

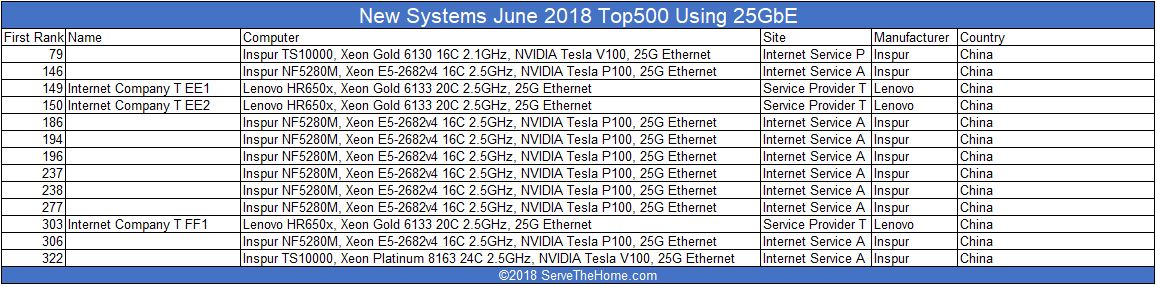

For newer Ethernet options, here are the new 25GbE systems on the list:

On the November 2017 list, there were 19 25GbE supercomputers. On the latest list, there are 13 new clusters using 25GbE. Of note, those include 8 of the 15 NVIDIA Tesla P100 based systems and 2 of the 9 NVIDIA Tesla V100 systems.
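To put the Ethernet numbers in one place, here is a minimal Python sketch that tallies the three counts quoted in this article (35 new 10GbE systems, 43 new 40GbE systems, and 13 new 25GbE systems) against the 133 new systems. The variable and function names are our own for illustration, and the total only covers these three speeds, so treat it as a rough tally rather than an official Top500 figure.

```python
# New June 2018 Top500 systems per Ethernet speed, as quoted in this article.
NEW_SYSTEMS = 133
ethernet_new = {"10GbE": 35, "25GbE": 13, "40GbE": 43}


def share(count, total=NEW_SYSTEMS):
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


# Sum only the three Ethernet speeds discussed in the article (an assumption:
# any other Ethernet speeds on the list are not counted here).
ethernet_total = sum(ethernet_new.values())

for speed, count in ethernet_new.items():
    print(f"{speed}: {count} systems ({share(count)}% of new systems)")
print(f"Total: {ethernet_total} of {NEW_SYSTEMS} ({share(ethernet_total)}%)")
```

Under those assumptions, the three Ethernet speeds add up to 91 of the 133 new systems, or roughly 68%.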

Final Words

Here is where things get a little strange. Lenovo claims the highest total, with 64 of the 133 systems making their debut on the list. Lenovo’s Ethernet systems, which are largely corporate hosting clusters, account for 63 of those 64 new entries. This is a trend that is frankly a shame to see. The hardest part about this is that we walk by corporate clusters of hundreds of GPUs on a daily basis in local data centers, and they are not on the Top500 list because they are primarily AI focused and privately owned. Then again, their system vendors are not pushing their customers to run Linpack and submit results.
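For a quick sanity check on the Lenovo figures, here is a small Python sketch using only the counts quoted above (133 new systems, 64 new Lenovo systems, 63 of them Ethernet based). The constant and function names are hypothetical and chosen just for this illustration.

```python
# Counts quoted in this article for the June 2018 Top500 list.
NEW_SYSTEMS = 133          # systems making their debut on the list
LENOVO_NEW = 64            # new systems credited to Lenovo
LENOVO_NEW_ETHERNET = 63   # new Lenovo systems using Ethernet


def pct(part, whole):
    """Return part as a percentage of whole, rounded to one decimal place."""
    return round(100 * part / whole, 1)


# Lenovo's share of all new systems.
lenovo_share_of_new = pct(LENOVO_NEW, NEW_SYSTEMS)
# Share of Lenovo's new systems that are Ethernet clusters.
ethernet_share_of_lenovo = pct(LENOVO_NEW_ETHERNET, LENOVO_NEW)
# New Lenovo systems that do not use Ethernet.
lenovo_non_ethernet = LENOVO_NEW - LENOVO_NEW_ETHERNET

print(f"Lenovo share of new systems: {lenovo_share_of_new}%")
print(f"Ethernet share of Lenovo's new systems: {ethernet_share_of_lenovo}%")
print(f"New Lenovo systems without Ethernet: {lenovo_non_ethernet}")
```

In other words, Lenovo accounts for about 48% of the new systems, and about 98% of those Lenovo entries are Ethernet clusters, leaving a single new Lenovo system on another interconnect.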

We hope that the November 2018 Top500 list has some interesting additions such as systems based on Cavium ThunderX2 and AMD EPYC. We also see that Fujitsu’s announcement of an ARM-based Post-K system likely spells trouble for SPARC architectures, as they are losing a key vendor and application.

A big question that the industry must face is whether a web hosting cluster should be considered a supercomputer, and how to treat such clusters on the Top500 list.

Lenovo is clearly just getting its customers to run Linpack on systems designed for web hosting to take the top vendor spot. It’s shady and not at all unlike Huawei paying for smartphone reviews. A cluster of servers intended to run MongoDB, Elasticsearch, Redis and nginx isn’t doing science as a single cluster. It’s just a load balancing cluster exercise for companies that aren’t big enough to build their own servers.