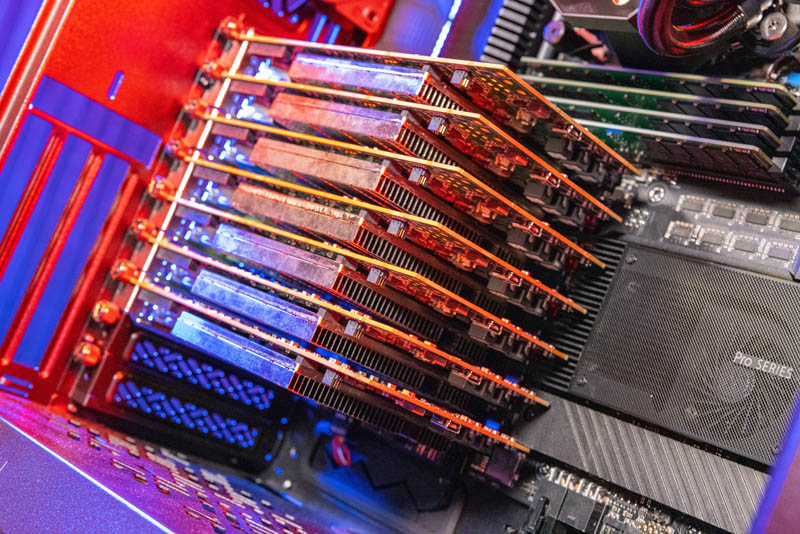

It is time for us to turn our attention to the other DPU option in the market for enterprises, the AMD Pensando DPU. In the realm of network adapters, perhaps nothing is as enchanting at the moment as DPUs. We recently looked at a 400GbE solution (video here), but DPUs are the new hotness. If you are a hyperscaler with teams that can build your own DPU, program FPGAs, or do magic with P4 pipelines, then there are a lot of options. If you are an enterprise running VMware, your choices are either the NVIDIA BlueField-2 or the AMD Pensando DSC2. We already did a deep dive into a hands-on lab with the NVIDIA BlueField-2 DPU and VMware vSphere. Now we are going to do the same with the AMD Pensando DPU and show you things like logging into VMware ESXio on the DPU and what the cards look like installed in servers. Let us get to it.

The AMD Pensando DPU Quick Background

If you saw our What is a DPU or 2021 NIC Continuum pieces, you are probably already familiar with DPUs. We saw a need years ago to define the differences between an offload adapter, a SmartNIC, a DPU, and an exotic solution like the Intel IPU Big Spring Canyon (video here). If you want to learn about a DPU versus other forms of adapters, we have a quick primer here:

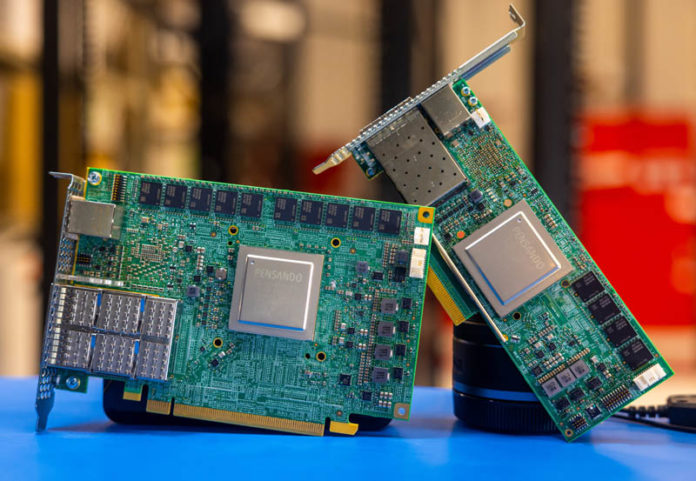

About a year ago, we covered AMD's acquisition of Pensando. Pensando had wins at places like Oracle Cloud, and one other big one: VMware. That gave AMD a foothold in the DPU market.

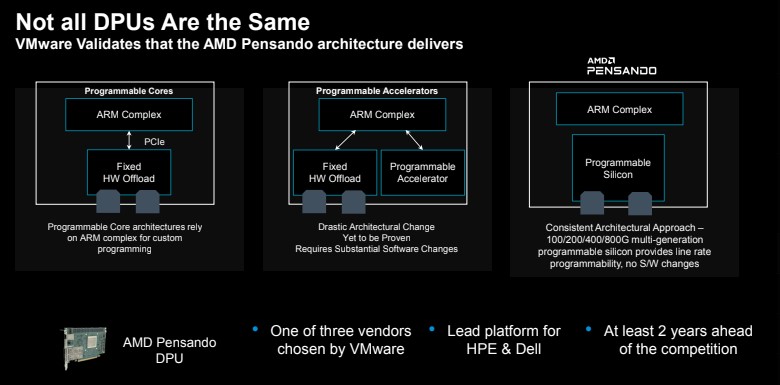

VMware Project Monterey (now just part of VMware vSphere) listed only three vendors for support: NVIDIA BlueField-2, AMD Pensando, and Intel. Despite the fact that VMware’s former chief is now running Intel, Intel Mt. Evans is not currently on the compatibility list. Instead, this is currently a two-horse race between the NVIDIA BlueField-2 DPUs that we showed earlier and AMD Pensando DPUs.

Although VMware is trying, to a large degree, to abstract away which vendor's DPU is installed, AMD has one major advantage over NVIDIA: speed. At the time we started writing this in May 2023, NVIDIA BlueField-2 was only supported on 25GbE cards, while AMD Pensando was supported on 100GbE cards.
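To put the 25GbE versus 100GbE gap in perspective, here is a rough sketch of the theoretical worst-case packet rate a DPU must sustain at each link speed. It assumes minimum-size 64-byte Ethernet frames plus the standard 8-byte preamble/SFD and 12-byte inter-frame gap on the wire; the function names are illustrative only, and real-world DPU throughput depends on far more than raw link speed.

```python
# Standard Ethernet per-frame overhead on the wire (bytes), assumed here
PREAMBLE_SFD_BYTES = 8
INTER_FRAME_GAP_BYTES = 12


def line_rate_pps(link_gbps: float, frame_bytes: int = 64) -> float:
    """Theoretical maximum frames per second at a given link speed."""
    wire_bits = (frame_bytes + PREAMBLE_SFD_BYTES + INTER_FRAME_GAP_BYTES) * 8
    return link_gbps * 1e9 / wire_bits


def ns_per_packet(link_gbps: float, frame_bytes: int = 64) -> float:
    """Time budget per frame, in nanoseconds, needed to keep up with line rate."""
    return 1e9 / line_rate_pps(link_gbps, frame_bytes)


for gbps in (25, 100):
    print(f"{gbps}GbE: {line_rate_pps(gbps) / 1e6:.1f} Mpps, "
          f"{ns_per_packet(gbps):.2f} ns per packet")
# 25GbE: 37.2 Mpps, 26.88 ns per packet
# 100GbE: 148.8 Mpps, 6.72 ns per packet
```

At 100GbE, the per-packet processing budget shrinks to roughly a quarter of what it is at 25GbE, which is why the data path matters so much for the higher-speed cards.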

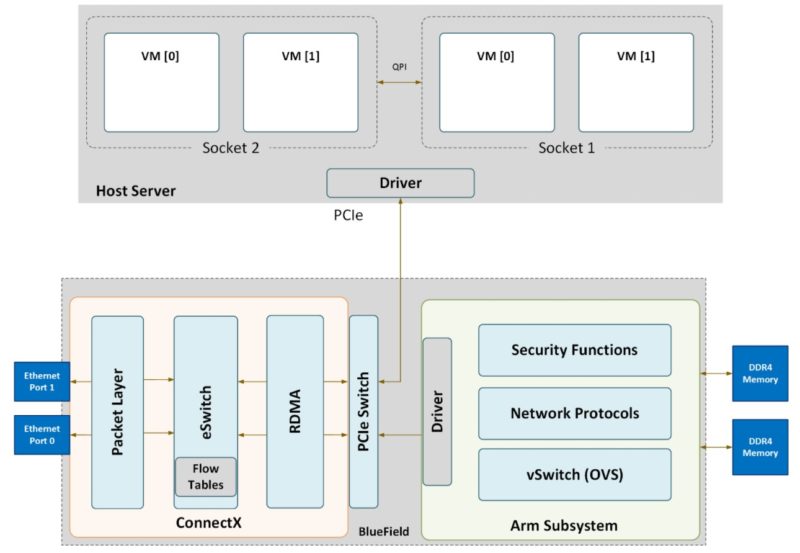

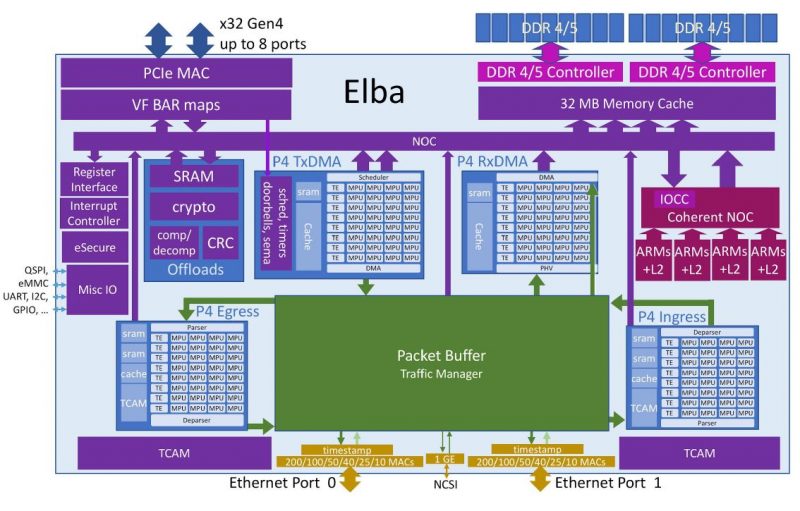

Pensando had its initial “Capri” architecture, which we covered at Hot Chips, and now has “Elba,” a 7nm part. Architecture is very important here. NVIDIA is effectively accelerating Open vSwitch, and its BlueField-2 DPU architecture pairs a ConnectX-6 with an Arm/accelerator co-processor, linked by a PCIe switch.

The AMD Pensando Elba, by contrast, has a fast NOC (network-on-chip) and runs traffic through its P4 engines, with some offload engines and its Arm complex as almost ancillary features.

The user experience is VERY different between NVIDIA and AMD. With NVIDIA, one logs into the DPU almost like a Raspberry Pi (using Ubuntu) with a giant 100GbE NIC attached.

With AMD Pensando, you can log into the DPU, but the part is really meant to be programmed through its P4 engines.

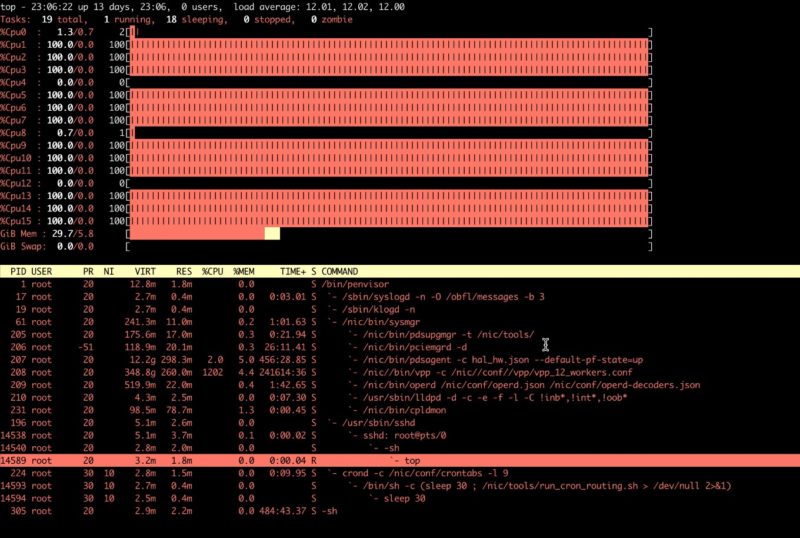

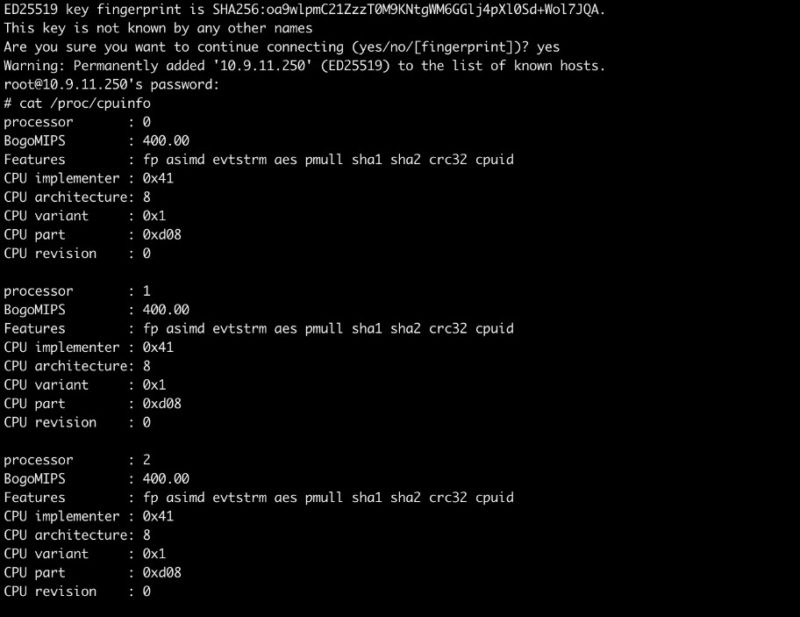

AMD has a lightweight Linux distribution at its base, with API tools on top that let one program the packet processing in P4.
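For readers who have not worked with P4, the core idea is the match-action table: header fields parsed from a packet form a key, the key is matched against table entries installed by a control plane, and the winning entry selects an action plus its parameters. The Python sketch below models a single longest-prefix-match table on the destination IPv4 address purely to illustrate that concept. It is not P4 code and does not reflect AMD's Pensando SDK or APIs; `Packet`, `LpmTable`, `forward`, and `drop` are hypothetical names.

```python
import ipaddress
from typing import Callable, List, Optional, Tuple


class Packet:
    """Hypothetical stand-in for parsed headers plus per-packet metadata."""

    def __init__(self, dst_ip: str) -> None:
        self.dst_ip = ipaddress.IPv4Address(dst_ip)
        self.egress_port: Optional[int] = None
        self.dropped = False


def forward(pkt: Packet, port: int) -> None:
    """Action: send the packet out of the given port."""
    pkt.egress_port = port


def drop(pkt: Packet) -> None:
    """Action: mark the packet to be discarded."""
    pkt.dropped = True


class LpmTable:
    """One match-action table keyed on destination IPv4 address (longest-prefix match)."""

    def __init__(self, default_action: Callable[..., None] = drop) -> None:
        self.entries: List[Tuple[ipaddress.IPv4Network, Callable[..., None], tuple]] = []
        self.default_action = default_action

    def add(self, prefix: str, action: Callable[..., None], *params) -> None:
        # Control plane installs an entry: key -> (action, action parameters)
        self.entries.append((ipaddress.IPv4Network(prefix), action, params))

    def apply(self, pkt: Packet) -> None:
        # Data plane: collect every matching prefix, then pick the most specific one
        matches = [e for e in self.entries if pkt.dst_ip in e[0]]
        if not matches:
            self.default_action(pkt)
            return
        _, action, params = max(matches, key=lambda e: e[0].prefixlen)
        action(pkt, *params)


ipv4_lpm = LpmTable()
ipv4_lpm.add("10.0.0.0/8", forward, 1)
ipv4_lpm.add("10.1.0.0/16", forward, 2)

p = Packet("10.1.2.3")
ipv4_lpm.apply(p)  # both prefixes match; the /16 wins, so p.egress_port == 2
q = Packet("192.168.0.1")
ipv4_lpm.apply(q)  # no entry matches, so the default action drops it
```

On real hardware, this lookup is performed by the packet-processing pipeline at line rate rather than by a general-purpose CPU loop, which is the point of steering traffic through the P4 engines instead of the Arm cores.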

Still, there is an Arm Cortex-A72 complex with its own memory and onboard storage.

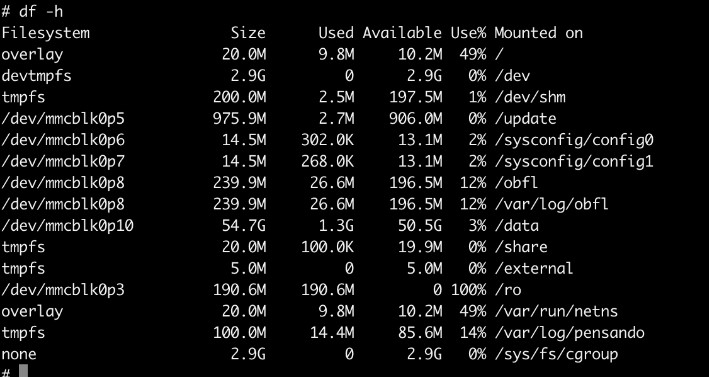

If this sounds a bit tricky, it is. To be clear, AMD is currently targeting larger customers, such as VMware or hyperscalers, that can enable many machines to use the silicon effectively. NVIDIA has a model that feels more like its Jetson boxes: if you have a card, you can log in and start playing. Indeed, we already did a piece on Building the Ultimate x86 and Arm Cluster-in-a-Box just for fun, because NVIDIA’s model allows for this.

That is why VMware is so important here. By bringing DPU support to its platform, VMware hides the complexity of learning P4: one buys a system with a Pensando DPU and VMware, and a single toggle enables high-performance UPT networking using the DPU. It is the same toggle whether the system has an NVIDIA BlueField-2 or an AMD Pensando DPU, so from a VMware end-user perspective, the process is very similar.

Next, let us take a look at VMware ESXio running directly on the DPUs.
