At STH, we have been covering DPUs for many years. Enough time to see some solutions flourish and others fail. While DPUs have been deployed in the cloud for many years, bringing that cloud-like deployment model to the Enterprise has been much slower, if not impossible. Now with VMware vSphere 8, organizations with VMware deployments can start taking advantage of DPUs. That project has gone from theory to deployment and we were able to check it out.

As a quick note, we normally would not be prioritized to get access for this NVIDIA LaunchPad demo. We are saying that NVIDIA is sponsoring this piece. As with all pieces on STH, NVIDIA and VMware did not get to review this piece before it went live, to maintain editorial independence. NVIDIA did help show us around the demo environment before we started our walk-through and testing. That was necessary in this case to ensure our first time setting this up was done correctly.

What is the NVIDIA BlueField-2 DPU?

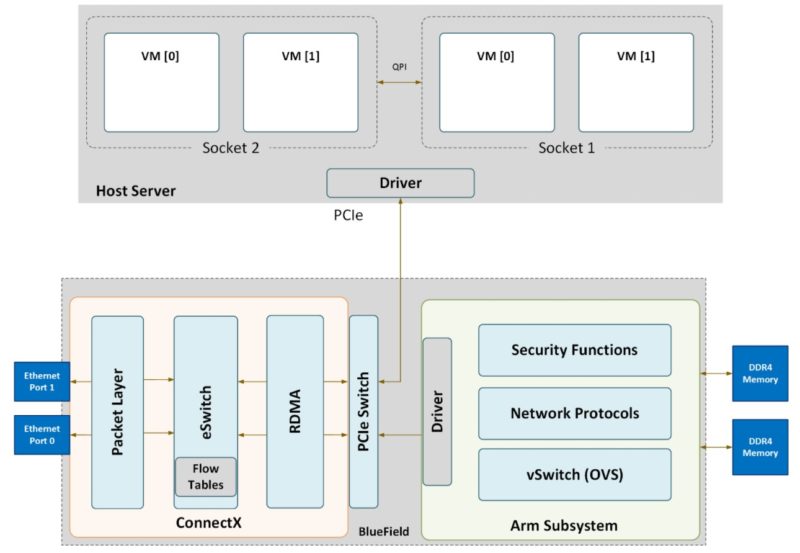

We have covered the NVIDIA BlueField-2 DPU on STH many times. One way to think about BlueField-2 is that it is an NVIDIA ConnectX-6 network interface with an Arm core complex, its own memory, storage, out-of-band networking, a PCIe switch, and accelerators.

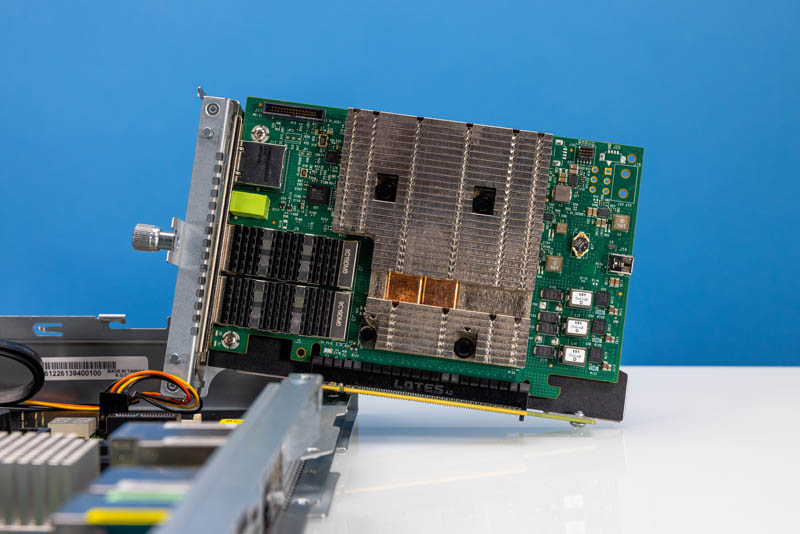

The BlueField-2 cards come in various form factors. Here is one from our recent AMD Ryzen server review, the ASRock Rack 1U4LW-B650/2L2T Review.

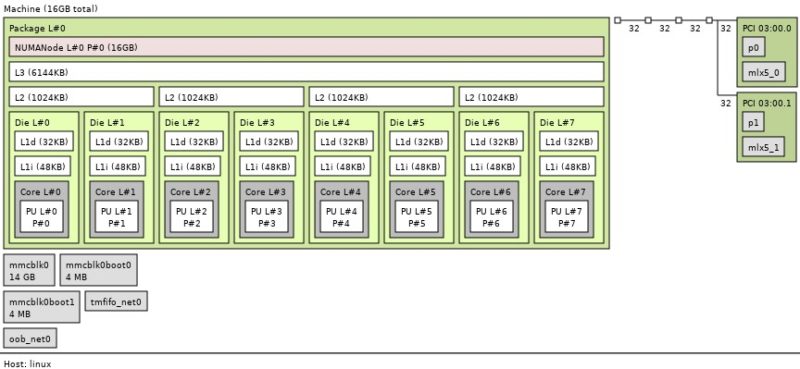

Here is the topology when logged directly into the card’s Arm complex.

In "ZFS without a Server Using the NVIDIA BlueField-2 DPU", we showed a fun demo with these cards running storage stacks on the Arm cores.

While the demo we built last year was fun, it was not meant for production. The VMware-NVIDIA networking integration is. That is the solution we are going to review today.

Next, we are going to show how this works and how we set up the demo environment. If you just want to see the “so what” performance and impact, that will be on the last page.

Yeah, I’ll just say it: I don’t understand all the fuss. Does it improve performance in area XYZ? Well yes, of course. It’s a computer. You have put a smaller computer in charge of a specialized group of functions so that your CPU does not have to do those functions. It would be like going nuts over a GPU. Yes, of course the GPU is going to GPU so the CPU does not have to. And no, ZFS was not run on "not a server", it was run on the computer that is the DPU. Some of them throw down 200W+ (not including PCIe passthrough). Well, I think that is a correct high number; NVIDIA actually publishes all of the specs EXCEPT the power usage. You have to go sign into the customer portal to get those. I kid you not, look: https://docs.nvidia.com/networking/display/BlueField2DPUENUG/Specifications

I mean, I know there has never been a computer of 200W that has ever been able to run ZFS with ECC memory……….atom. And as your article does show, as usual, it is not that you can put another computer somewhere, it’s putting it there and getting it to do what you want and talk with the computer playing host to it. So great. It’s a comp-ccelerator. It can offload my OVS, cool. Also, there is a certain "always around the corner" feel to so much these days. The future, great (maybe?), but if something is always around the corner, it’s not being used for anything they told us Sapphire Rapids was good for. Bazinga! Intel joke. AMD handed Zen 1 to China for a quick buck. AMD joke. All is balanced.

The article states all VMs were provisioned the same, but then doesn’t go on to provide details, or at least details I could find. Was the test sensitive to how many virtual CPUs and how much RAM were assigned to each VM? What are the details of the VMs?

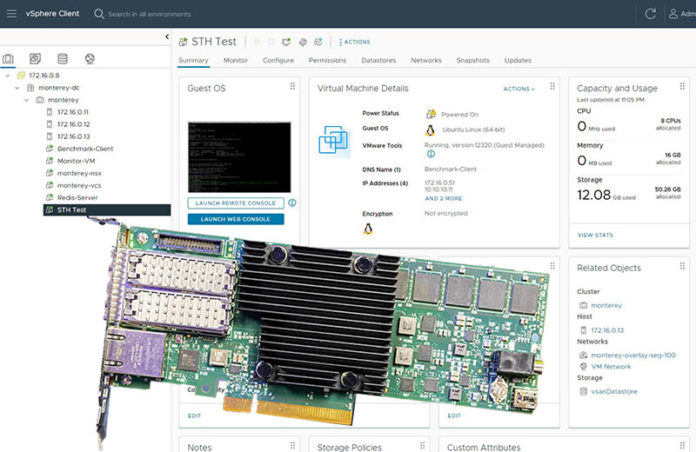

Hi Eric – We had screenshots of the VMs on Page 2 so my thought was folks could see the 8 vCPU/ 16GB configuration there.

Thanks for the quick reply. I was reading the article on my mobile phone. The screen images are blurry unless one clicks on an image and then pinches to zoom. I see the configuration now.

Thanks for the detailed analysis.

I wonder how close you could get to these figures using DPDK in Proxmox instead of using a DPU. The DPU performance difference, while nice, is not as big as I would have expected. AFAIK, AWS mentioned latency decreases and a bandwidth uplift of 50% when initially switching to their Nitro architecture.

What was the power consumption of the BF2?

WB

AMD committed something akin to treason, and someone lied to you about something something Intel. Run Along – you are not the target audience for this now deprecated DPU nor for anything in the server realm.

David

This is the previous-gen card – the BlueField-3 (400Gb/s) offers better performance.

No SPR servers to review (I have 13 dual SPR 1U servers) – no modern DPU to review, no DGX A100 or DGX H100 to review – yet all of those are in my datacenter…

If Patrick had actual journalistic skills and a site that attracts more than a couple dozen people I would let him review the equipment I have.

Ha! This DA troll. You’re realizing at some point they’ve got 130K subs on YT and they’re getting tens of thousands of views per video, not posting anywhere near as much on here. Based on their old Alexa rank they’re 3-5M pv/month so that’s like 100k pv/article.

13 SPR servers is small. We’re at thousands deployed in just the last 4 weeks and we’re looking at Pensando or BlueField for our VMware clusters going forward.

Go troll somewhere else.

DA, I strongly suspect that better hardware performance is not the missing key, but that the virtualization implementation is less than optimal. We already have numbers for DPU-to-DPU traffic running directly on the cards, and those are much better than the VM-level differences.

Right now, only a select number of cards are supported by VMware. These are the fastest BlueField-2 DPUs supported. BlueField-3s will be, but not yet.

To be fair to DA – we do only get several dozen people per minute on the main site.