Benchmarking BlueField-2 DPU Enabled UPT Performance Benefit with Redis

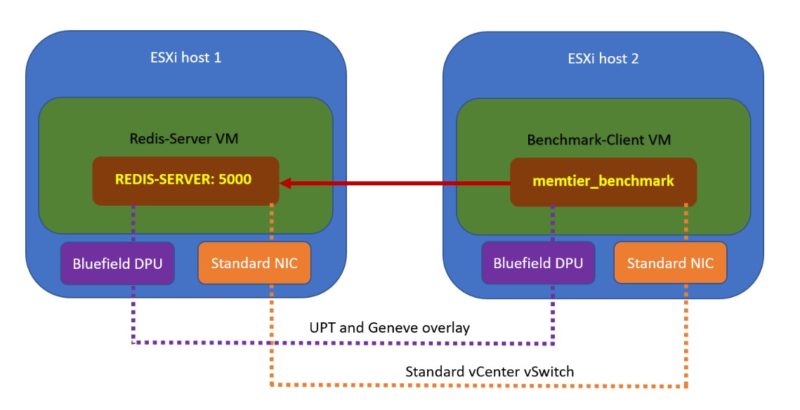

Here is a quick overview of the test we ran on the VMs in the environment above. We are going to run the memtier benchmark over standard NICs and over BlueField-2 DPUs. All of the VMs are configured identically except for networking, and our hosts are uniform.
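The article does not list the memtier parameters used. As a rough sketch of how one might keep the two runs comparable, the Python below builds one `memtier_benchmark` command per network path and varies only the target address. The thread and client counts, test duration, port, output file names, and IP addresses are hypothetical placeholders, not STH's actual settings; the flags themselves are standard memtier_benchmark options.

```python
import shlex
import subprocess


def memtier_argv(server: str, label: str, threads: int = 4,
                 clients: int = 50, test_time_s: int = 60) -> list[str]:
    """Build a memtier_benchmark command line for one network path.

    All parameter values are hypothetical placeholders, not the article's settings.
    """
    return [
        "memtier_benchmark",
        f"--server={server}",
        "--port=6379",                  # Redis default port (assumed)
        "--protocol=redis",
        f"--threads={threads}",
        f"--clients={clients}",         # connections per thread
        f"--test-time={test_time_s}",   # run for a fixed number of seconds
        "--hide-histogram",
        f"--json-out-file=memtier-{label}.json",
    ]


def paired_runs(vmxnet3_ip: str, upt_ip: str, **params) -> dict[str, list[str]]:
    """Same load parameters for both runs; only the target address differs."""
    return {
        "vmxnet3": memtier_argv(vmxnet3_ip, "vmxnet3", **params),
        "upt": memtier_argv(upt_ip, "upt", **params),
    }


def run_all(runs: dict[str, list[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for label, cmd in runs.items():
        print(f"[{label}] {shlex.join(cmd)}")
        if not dry_run:
            subprocess.run(cmd, check=True)  # requires memtier_benchmark on PATH


if __name__ == "__main__":
    # 192.0.2.0/24 is a documentation range (RFC 5737); substitute real VM IPs.
    run_all(paired_runs("192.0.2.10", "192.0.2.20", threads=4, clients=50))
```

Keeping every load parameter in one place and passing it to both runs is what makes the comparison about the network path rather than the benchmark settings.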

Since we are doing this on our own, NVIDIA and VMware are probably hoping that we configured everything properly and that we see better performance. They are seeing this at the same time STH readers are, so let us get to it.

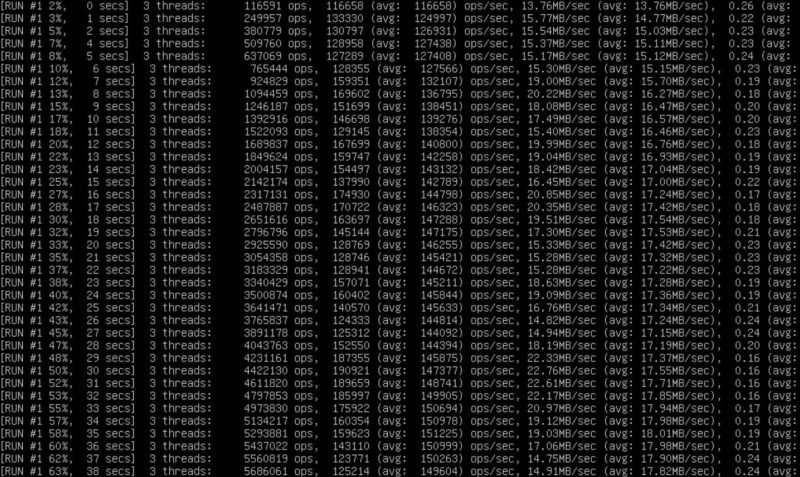

Here is what running the benchmark over standard NICs looks like in the terminal:

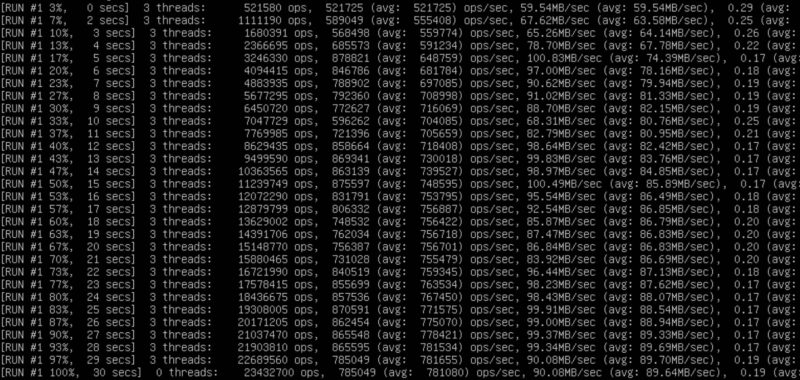

Here is the BlueField-2 DPU version using UPT:

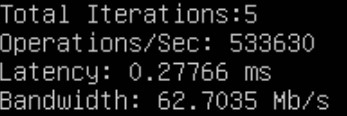

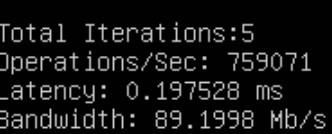

Here is what we got for operations/sec, latency, and bandwidth using the standard NICs:

Here is the same using BlueField-2 DPUs:

That is roughly a 42% increase in operations per second and a 29% decrease in latency. Both are solid gains and show the performance impact of DPU-enabled UPT versus standard vmxnet3 networking.
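The two figures are also consistent with each other. Assuming memtier ran as a closed loop, with a fixed number of connections that each wait for a reply before sending the next request, Little's law gives throughput X = N / R for N outstanding requests and mean latency R. Holding N fixed, a 29% latency drop implies throughput rises by 1/(1 - 0.29) - 1, or about 41%, close to the measured 42%. A minimal Python sketch of that arithmetic (the function names are our own):

```python
def pct_change(before: float, after: float) -> float:
    """Percent change from `before` to `after` (positive means an increase)."""
    return (after - before) / before * 100.0


def closed_loop_throughput_gain(latency_drop_pct: float) -> float:
    """Throughput gain implied by a latency drop at fixed concurrency.

    Little's law for a closed loop with N outstanding requests: X = N / R.
    With N held fixed, X_after / X_before = R_before / R_after.
    """
    ratio = 1.0 / (1.0 - latency_drop_pct / 100.0)
    return (ratio - 1.0) * 100.0


if __name__ == "__main__":
    # A 29% latency drop at constant concurrency implies about +40.8% ops/sec.
    print(f"{closed_loop_throughput_gain(29.0):.1f}%")  # prints 40.8%
```

This is a sanity check under the closed-loop assumption, not a measurement; the small gap to the reported 42% could come from the rounded figures or from effects this simple model ignores.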

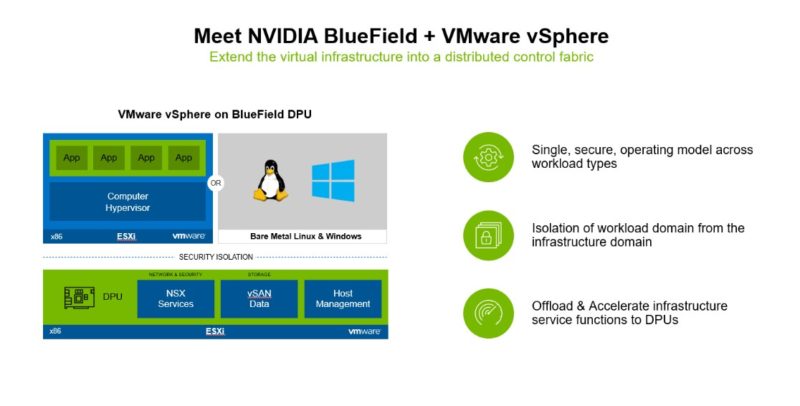

A Bit More on Using DPUs with VMware

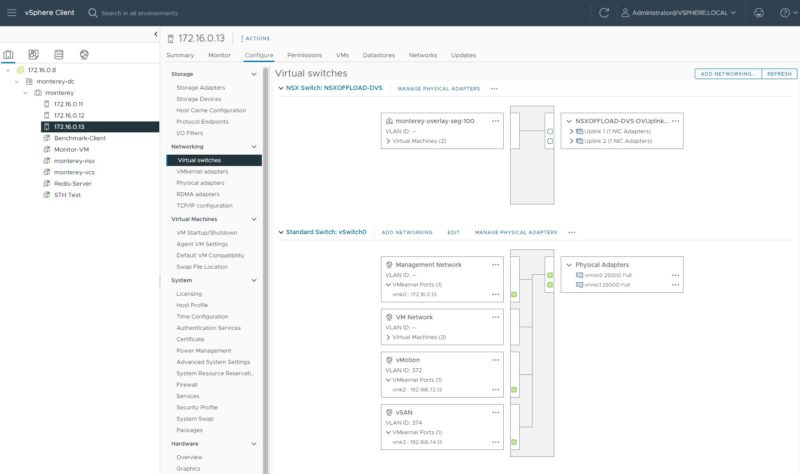

Since many have not seen this yet: the integration between the NVIDIA BlueField-2 DPUs and VMware went further than we were expecting. NVIDIA's (previously Mellanox's) BlueField-2 DPUs were focused on providing OVS offload, and our best guess is that this is what the VMware engineers are using under the hood. We thought folks might want to see the virtual switches.

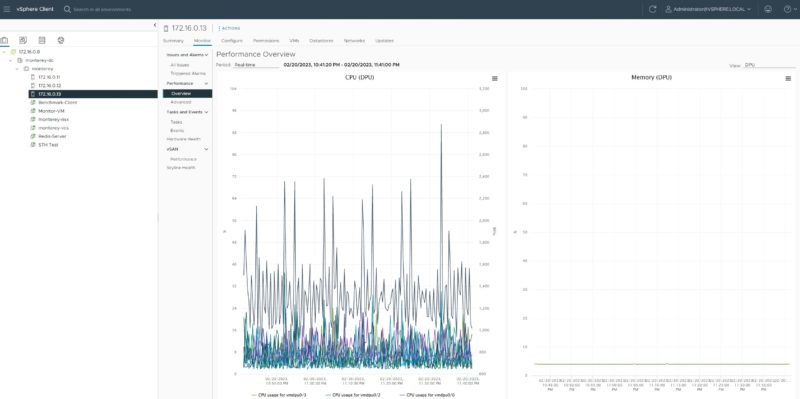

Also, things like viewing DPU performance work in VMware.

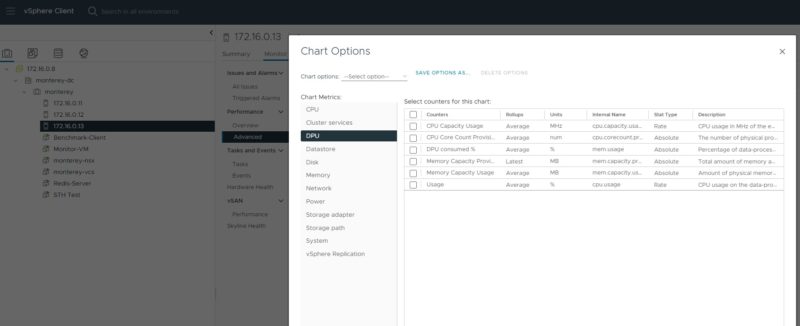

We can even chart different metrics for the DPU.

Still, it is important to understand what is going on here. The DPU is running a special version of ESXi called ESXio. In its current state, we are only using part of the envisioned functionality.

The next step is to use the DPU to allow for bare metal provisioning, VMware vSAN storage, and more. This will bring a much more cloud-like environment and operating model for VMware. Large cloud providers have had this capability for years. Still, bringing this capability to the VMware ecosystem is awesome. What will be more awesome is also being able to bring VMware vSAN and other services. VMware is building this new capability over time and there are a lot of interesting features coming.

Final Words

This was a really cool demo to get to see. Being able to use DPUs in a VMware environment with NSX and UPT showed the power of the solution. At the same time, it is really just the beginning. It is very evident that the base assumption in VMware is that one does not have a DPU installed, so there is still a decent amount of setup involved. Over time, hopefully, DPUs become more commonplace, and operating with things like UPT and DPUs becomes the default. Here, BlueField-2 DPUs are already giving us performance closer to a pass-through adapter while keeping an operating model closer to a standard vmxnet3 NIC. That is great.

What this has us really excited about is the future. Right now, there are only two working DPU options for VMware, and both are a bit specialized. Further, we showed the networking side today, but hopefully, in the future, vSAN and bare metal provisioning become just as easy to use. Oh, and there is new hardware coming that is even more capable. This is the closest to hands-on with a BlueField-3 DPU we have gotten, but hopefully, we get some in the lab soon.

If you are accustomed to VMware and want to give DPUs a try but do not have BlueField-2 hardware yet, you can apply for access on NVIDIA LaunchPad.

Yeah, I'll just say it: I don't understand all the fuss. Does it improve performance in area XYZ? Well, yes, of course. It's a computer. You have put a smaller computer in charge of a specialized group of functions so that your CPU does not have to do them. It would be like going nuts over a GPU. Yes, of course the GPU is going to do GPU work so the CPU does not have to. And no, ZFS was not run on "not a server"; it was run on the computer that is the DPU. Some of these cards draw 200W+ (not including PCIe passthrough). Well, I think that is a correct high number; NVIDIA publishes all of the specs EXCEPT the power usage. You have to sign into the customer portal to get those. I kid you not, look: https://docs.nvidia.com/networking/display/BlueField2DPUENUG/Specifications

I mean, I know there has never been a 200W computer that could run ZFS with ECC memory... Atom. And as your article does show, as usual, the hard part is not putting another computer somewhere; it is putting it there, getting it to do what you want, and getting it to talk with the computer playing host to it. So, great. It's a comp-ccelerator. It can offload my OVS, cool. Also, there is a certain "always around the corner" quality to so much these days. The future, great (maybe?), but if something is always around the corner, it's not being used for anything, like what they told us Sapphire Rapids was good for. Bazinga! Intel joke. AMD handed Zen 1 to China for a quick buck. AMD joke. All is balanced.

The article states that all VMs were provisioned the same, but then doesn't go on to provide details, or at least not details I could find. Was the test sensitive to how many virtual CPUs and how much RAM were assigned to each VM? What are the details of the VMs?

Hi Eric – We had screenshots of the VMs on Page 2, so my thought was folks could see the 8 vCPU/16GB configuration there.

Thanks for the quick reply. I was reading the article on my mobile phone. The screen images are blurry unless one clicks on an image and then pinches to zoom. I see the configuration now.

Thanks for the detailed analysis.

I wonder how close you could get to these figures using DPDK in Proxmox instead of using a DPU. The DPU performance difference, while nice, is not as big as I would have expected. AFAIK, AWS mentioned latency decreases and a bandwidth uplift of 50% when initially switching to their Nitro architecture.

What was the power consumption of the BF2?

WB

AMD committed something akin to treason, and someone lied to you about something something Intel. Run along – you are not the target audience for this now-deprecated DPU, nor for anything in the server realm.

David

This is the previous-gen card; the BlueField-3 (400Gb/s) offers better performance.

No SPR servers to review (I have 13 dual SPR 1U servers) – no modern DPU to review, no DGX A100 or DGX H100 to review – yet all of those are in my datacenter…

If Patrick had actual journalistic skills and a site that attracts more than a couple dozen people I would let him review the equipment I have.

Ha! This DA troll. You'll realize at some point that they've got 130K subs on YT and are getting tens of thousands of views per video; people just aren't posting anywhere near as much on here. Based on their old Alexa rank, they're at 3-5M pv/month, so that's something like 100k pv/article.

13 SPR servers is small. We’re at thousands deployed in just the last 4 weeks and we’re looking at Pensando or BlueField for our VMware clusters going forward.

Go troll somewhere else.

DA, I strongly suspect that better hardware performance is not the missing key; rather, the virtualization implementation is less than optimal. We already have numbers for DPU-to-DPU traffic running directly on the cards, and those are much better than the VM-level differences.

Right now, only a select number of cards are supported by VMware. These are the fastest BlueField-2 DPUs supported. BlueField-3s will be supported, but not yet.

To be fair to DA, we do only get several dozen people per minute on the main site.