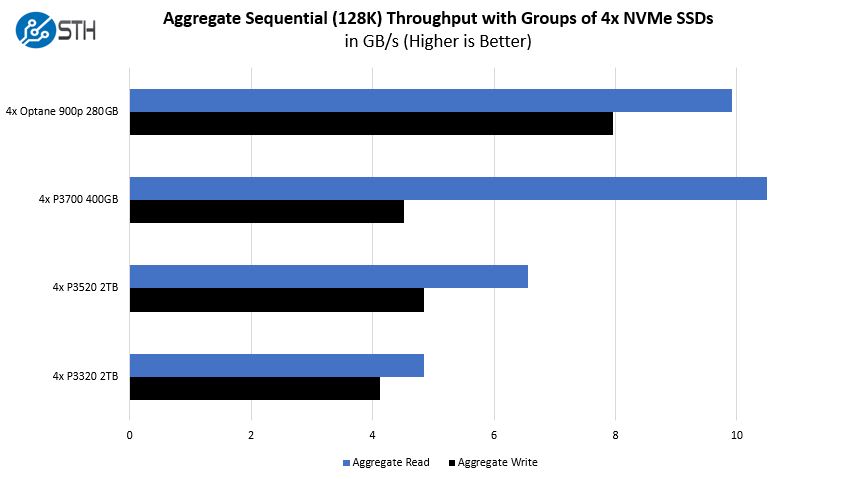

Gigabyte G481-S80 NVMe Storage Performance

We tested a few different NVMe storage configurations because NVMe support is one of the Gigabyte G481-S80’s key differentiators. Previous-generation servers often used a single NVMe storage device, if any at all. There are six SAS3/SATA bays available, but we are assuming those are being used for OS/bulk storage given the system’s design.

These numbers are about what we would expect. Here is the key point: if you are buying a DGX-1.5 class system like the Gigabyte G481-S80, using Intel Optane is an exceedingly interesting NVMe option. For local storage, it offers lower latency and great bandwidth even at lower queue depths. We are using smaller drives here, but Intel Optane 905P drives are now much larger.

Of course, the flip side to this is that with a Gigabyte G481-S80 or DGX-1.5 class system, when Intel Cascade Lake-SP is finally announced, one will be able to use Intel Optane Persistent Memory DIMMs for up to 3TB of memory channel Optane storage. This is something that the Intel Xeon E5 V4-based DGX-1 class systems cannot offer.

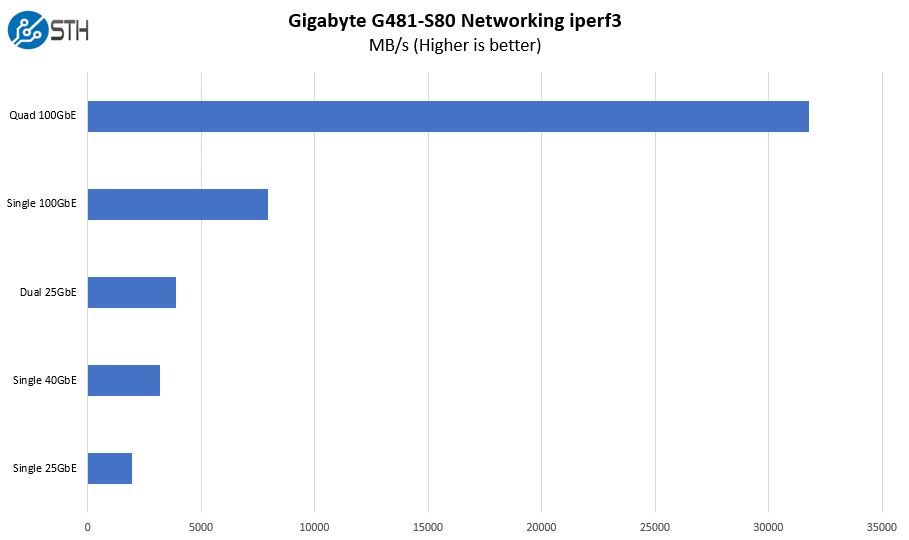

Gigabyte G481-S80 Networking Performance

We loaded the Gigabyte G481-S80 with a number of NICs. For the main networking NICs, we used Mellanox ConnectX-4 100GbE/EDR InfiniBand NICs. We want to note here that Gigabyte offers Omni-Path networking on this platform. We requested that our review sample come with OPA, but we were unable to secure the internal cables and bracket in time. As a result, we went solely with Mellanox. The primary reason we used Mellanox ConnectX-4 for the 100Gbps networking is that we had cards on hand and did not have ConnectX-5 available. We also used this opportunity to test our Dell Z9100-ON 100GbE switch. If you are deploying this system, just get 4x Mellanox ConnectX-5 NICs.

At one point, we had a ConnectX-3 card in the front PCIe x8 slot and used that for a single 40GbE connection. We also had our dual 25GbE Broadcom NIC installed in the OCP slot. With all of that installed, we fired everything up to see just how much bandwidth we could push.

This is one of the unique aspects to the DGX-1.5 class design of the Gigabyte G481-S80. Each Mellanox NIC is paired with two SXM2 NVIDIA Tesla GPUs. If you are not pushing data to the GPUs, then you have four 100GbE NICs connected through four PCIe switches each with a PCIe x16 uplink. That adds a slight amount of latency, but also means that there is a massive improvement in network bandwidth compared to our DeepLearning11 single root system build.
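To put the topology in perspective, here is a minimal Python sketch that adds up the nominal line rates of the NICs described above and compares one 100GbE port with the theoretical bandwidth of a PCIe x16 uplink. It assumes PCIe 3.0 signaling (8 GT/s per lane with 128b/130b encoding), which the review does not state explicitly, and the dictionary labels and function names are ours. These are paper numbers, not measured results.

```python
# Nominal per-port line rates in Gbps for the NICs named in the review.
NIC_PORTS_GBPS = {
    "Mellanox ConnectX-4 100GbE (four cards, one per PCIe switch)": [100, 100, 100, 100],
    "Mellanox ConnectX-3 40GbE (front PCIe x8 slot)": [40],
    "Broadcom dual 25GbE (OCP slot)": [25, 25],
}


def aggregate_line_rate_gbps(nic_ports):
    """Sum the nominal line rate of every port across all NICs."""
    return sum(sum(ports) for ports in nic_ports.values())


def pcie3_link_gbps(lanes):
    """Theoretical one-direction PCIe 3.0 bandwidth in Gbps.

    Assumption: 8 GT/s per lane with 128b/130b encoding. Protocol
    overhead (TLP headers, flow control) is ignored.
    """
    return lanes * 8 * 128 / 130


total_gbps = aggregate_line_rate_gbps(NIC_PORTS_GBPS)
uplink_gbps = pcie3_link_gbps(16)

print(f"Aggregate nominal NIC line rate: {total_gbps} Gbps")
print(f"Theoretical PCIe 3.0 x16 uplink: {uplink_gbps:.1f} Gbps")
print(f"Headroom over one 100GbE port:   {uplink_gbps - 100:.1f} Gbps")
```

Under these assumptions, each x16 uplink has enough theoretical bandwidth for its 100GbE NIC when the GPUs behind the same switch are idle, which is why four switches with four uplinks scale better than a single root design for pure network traffic.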

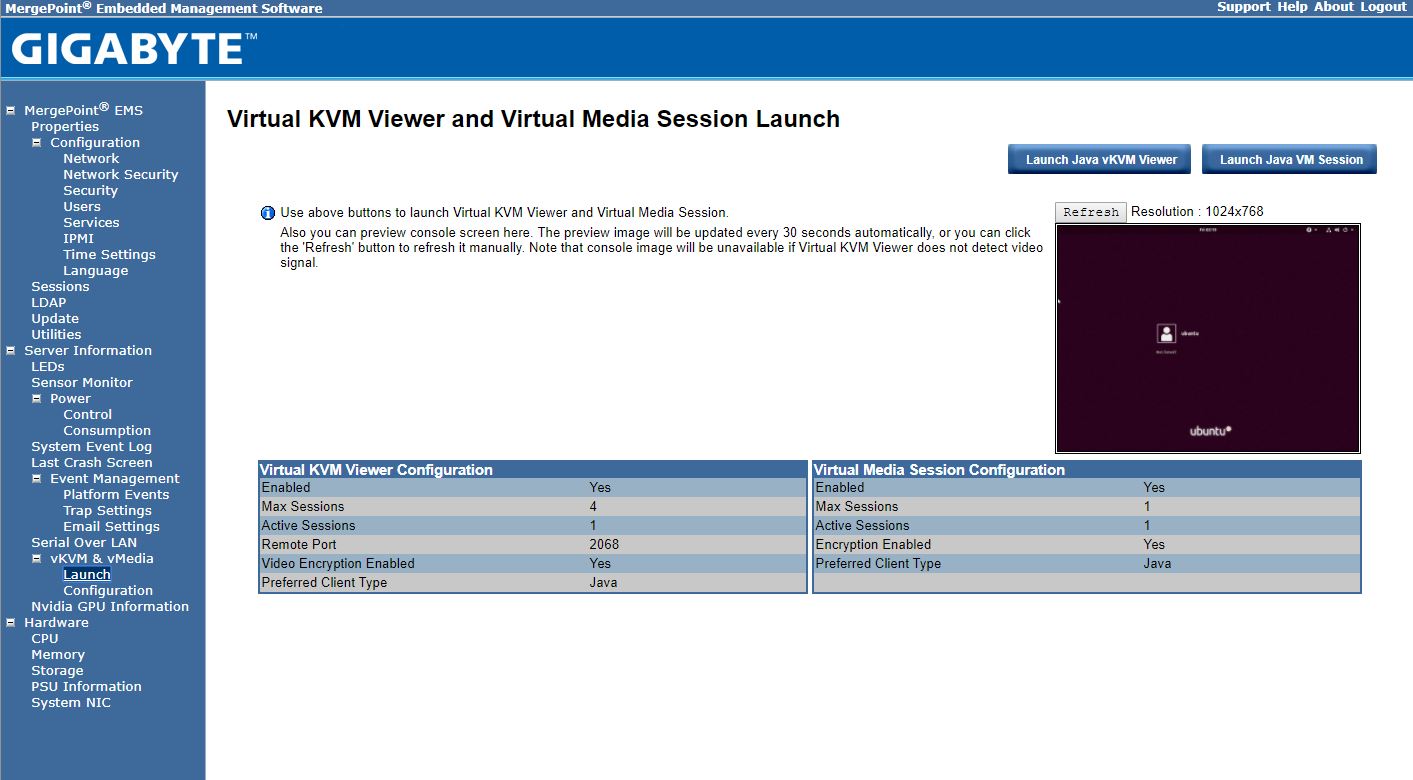

Gigabyte G481-S80 Management

These days, out of band management is a standard feature on servers. Gigabyte offers an industry standard solution for traditional management, including a Web GUI. This is based on the ASPEED AST2500 solution, a leader in the BMC field.

With the Gigabyte G481-S80 solution, one has access to the Avocent MergePoint based solution. This is a popular management suite that allows integration into many systems management frameworks.

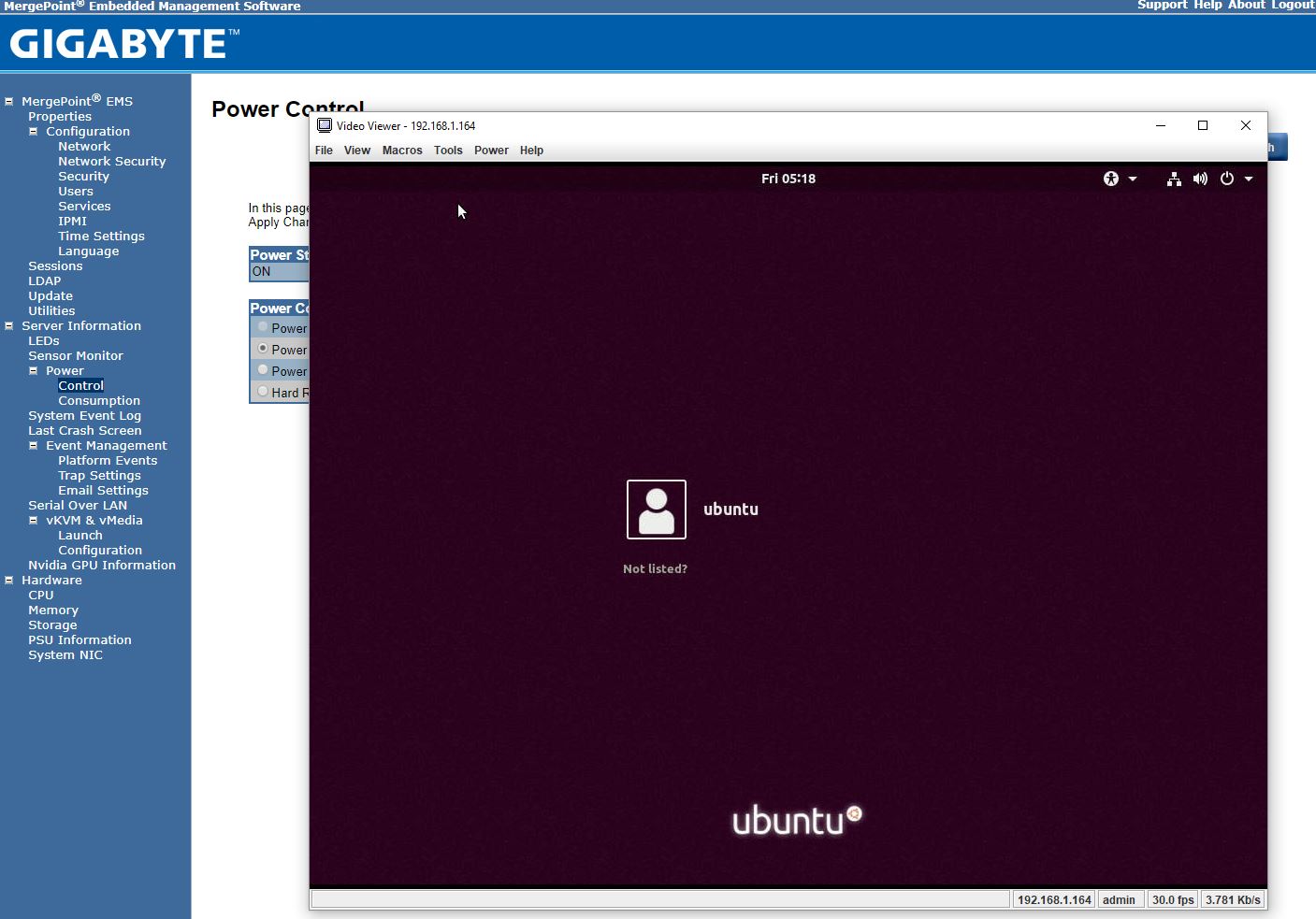

Gigabyte allows users to utilize Serial-over-LAN and iKVM consoles from before a system is turned on, all the way into the OS. Other vendors such as HPE, Dell EMC, and Lenovo charge for an additional license upgrade to get this capability (among others included in their higher license levels). That is an extremely popular feature because it makes remote troubleshooting simple.

At STH, we do all of our testing in remote data centers. Having the ability to remote console into the machines means we do not need to make trips to the data center to service the lab even if BIOS changes or manual OS installs are required.

Next, we are going to look at the Gigabyte G481-S80 power consumption. We are then going to present our server spider which will be followed by our final words on the platform.

Gromacs would be a nice benchmark to see.

Thanks for doing more than AI benches. I’ve sent you an e-mail through the contact form on a training set we use a Supermicro 8 GPU 1080 Ti system for. I’d like to see comparison data from this Gigabyte system.

Another thorough review

It’s too bad that NVIDIA doesn’t have a $2-3K GPU for these systems. Those P100’s you use start in the $5-6K each GPU range and the V100’s are $9-10K each.

At $6K per GPU that’s $48K for the eight GPUs, or two single root PCIe systems. Add another $6K for Xeon Gold, $6K for the Mellanox cards, $5K for RAM, $5K for storage and you’re at $70K as a realistic starting price.
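For reference, the tally in the comment above can be reproduced with a short Python sketch. All dollar figures are the rough estimates quoted in that comment, not vendor pricing, and the dictionary labels are ours.

```python
# Rough component estimates in USD, as quoted in the comment above.
GPU_COUNT = 8
GPU_PRICE_USD = 6_000  # low end of the quoted P100 price range

components_usd = {
    "GPUs": GPU_COUNT * GPU_PRICE_USD,
    "Xeon Gold CPUs": 6_000,
    "Mellanox NICs": 6_000,
    "RAM": 5_000,
    "Storage": 5_000,
}

# Sum every line item to get the estimated starting price.
total_usd = sum(components_usd.values())

for name, cost in components_usd.items():
    print(f"{name:<16} ${cost:>7,}")
print(f"{'Total':<16} ${total_usd:>7,}")
```

The GPUs account for most of the estimate, so the total moves quickly with the per-GPU price.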

Regarding the power supplies, when you said 4x 2200W redundant, does that mean two of the four power supplies can fail?

I’m asking because I might be running out of C20 power sockets in my rack and I want to know if I can plug in only two power supplies.

Sorry, the answer to my question is in the marketing video.

They are 2+N power supplies.

Interested if anyone has attempted a build using 2080 Tis? Or if anyone at STH would be interested. The 2080 Ti appears to show much greater promise in deep learning than its predecessor (the 1080 Ti), and some sources seem to state that the Turing architecture is able to perform better with FP16 without using as many Tensor Cores as the former Volta architecture. Training tests on TensorFlow done by server company Lambda also show great promise for the 2080 Ti.

Since the 2080 Ti supports 2-way 100Gb/sec bidirectional NVLink, I’m curious if there are any 4x, 8x (or more?) 2080 Ti builds that could be done by linking each pair of cards with NVLink, and using some sort of Mellanox GPUDirect ConnectX device to link the pairs. Mellanox’s new ConnectX-5 and -6 are incredibly fast as well. If a system like that is possible, I feel it’d be a real challenger in terms of both compute speed and bandwidth to the enterprise-class V100 systems currently available.

Cooling is a problem on the 2080 Ti’s. We have some in the lab, but the old 1080 Ti Founders Edition cards were excellent in dense designs.

The other big one out there is that you can get 1080 Ti FE cards for half the price of 2080 Ti’s, which in the larger 8x and 10x GPU systems means you are getting three systems for the price of two.

It is something we are working on, but we are not 100% ready to recommend that setup yet. NVIDIA is biasing features toward the Tesla cards versus GTX/RTX.