The Ice Elephant in the Room: Ice Lake Xeon Discussion

The challenging part about this review is that it is almost a rerun of our 2019 EPYC 7002 “Rome” review, just updated for the Zen 3 generation, with Intel's Cooper Lake added for niche segments and new Cascade Lake Refresh pricing. The platform features, capabilities, and core counts are largely the same. As we have seen, higher clock speeds, higher IPC, and other improvements only extend the per-socket dominance of the 64-core parts.

For its part, AMD can only compare the EPYC 7003 to the newest dual-socket Intel parts on the market, which are the 2017-2021 era Skylake/Cascade Lake generations. At STH, we are not allowed to go into details of the next-generation Intel Xeon Ice Lake chips or show you their performance, core counts, features, and so on. However, Intel has already disclosed a number of features publicly, so we can start the contextual discussion today. Frankly, not getting into the Ice Lake platform basics would make this EPYC 7003 review worthless, since we would not be discussing its main competitor in the market.

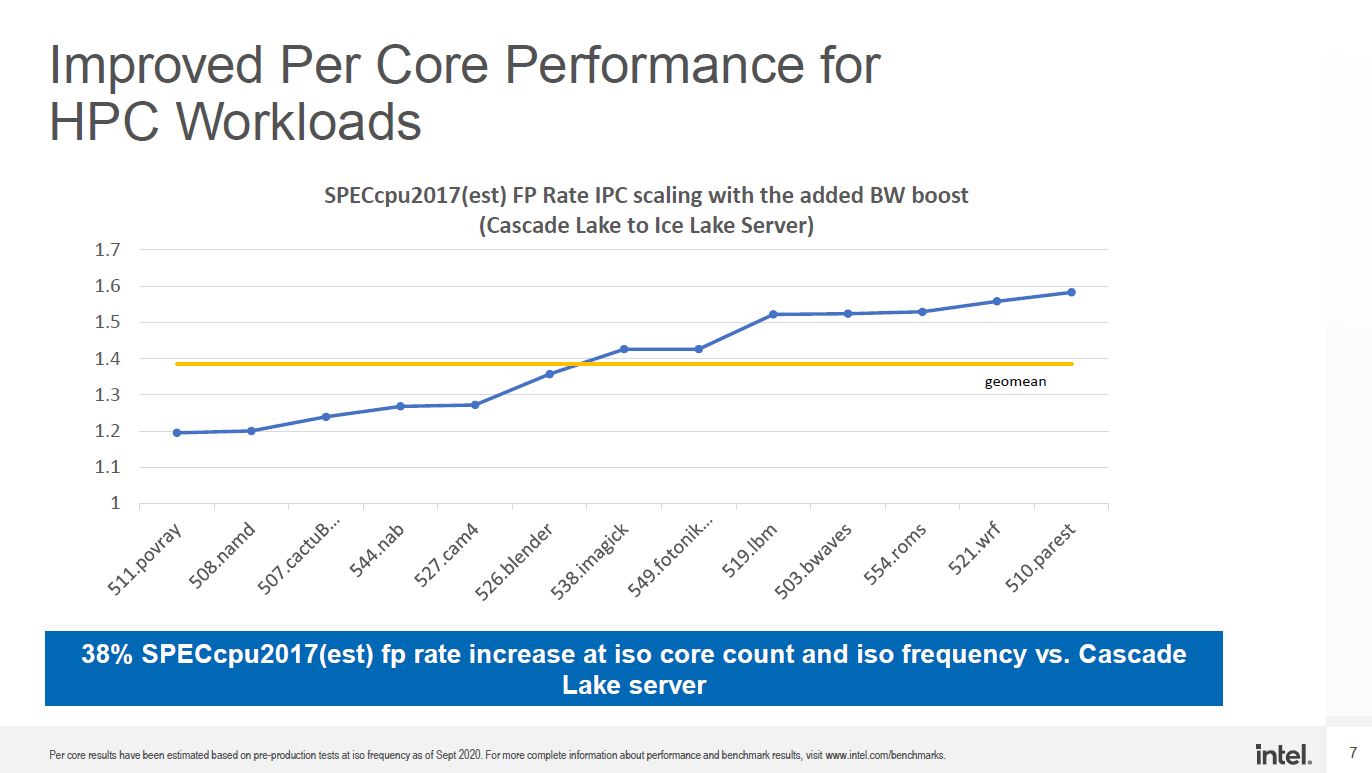

With the Ice Lake Xeon generation, Intel has shown that we are getting PCIe Gen4, and we are going to get more PCIe lanes as well. We already know this 10nm chip uses the newer Sunny Cove core design and will feature 8-channel DDR4 memory. As is customary with a new process node, we expect more cores. However, given Intel’s public challenges at 10nm and the fact that Ice Lake was designed in Intel’s monolithic-die era, being years delayed by fab issues means Intel is unlikely to try matching AMD’s (or Arm’s) cores-per-socket offerings.
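Since EPYC 7003 and, per Intel's public disclosures, Ice Lake both use 8-channel DDR4, theoretical peak memory bandwidth per socket comes down to channels × data rate × bus width. The sketch below assumes DDR4-3200 on both platforms and a standard 64-bit (8-byte) DDR4 channel; the Ice Lake memory speed is our assumption rather than a disclosed spec, and measured bandwidth will always land under this theoretical peak.

```python
def peak_dram_bandwidth_gbs(channels: int, mega_transfers_per_s: int, bus_bytes: int = 8) -> float:
    """Theoretical peak DRAM bandwidth per socket in decimal GB/s.

    channels: memory channels per socket
    mega_transfers_per_s: DDR data rate in MT/s (3200 for DDR4-3200)
    bus_bytes: data bytes per transfer per channel (a 64-bit DDR4 channel is 8)
    """
    # MT/s * bytes gives MB/s; divide by 1000 for GB/s.
    return channels * mega_transfers_per_s * bus_bytes / 1000


# Assumption: DDR4-3200 on both platforms, one DIMM per channel.
epyc_7003_gbs = peak_dram_bandwidth_gbs(channels=8, mega_transfers_per_s=3200)
ice_lake_gbs = peak_dram_bandwidth_gbs(channels=8, mega_transfers_per_s=3200)

print(f"EPYC 7003: {epyc_7003_gbs:.1f} GB/s per socket")
print(f"Ice Lake (assumed DDR4-3200): {ice_lake_gbs:.1f} GB/s per socket")
```

With equal channel counts and equal memory speeds, the theoretical peaks are identical, which is why the memory side stops being a clear AMD differentiator.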

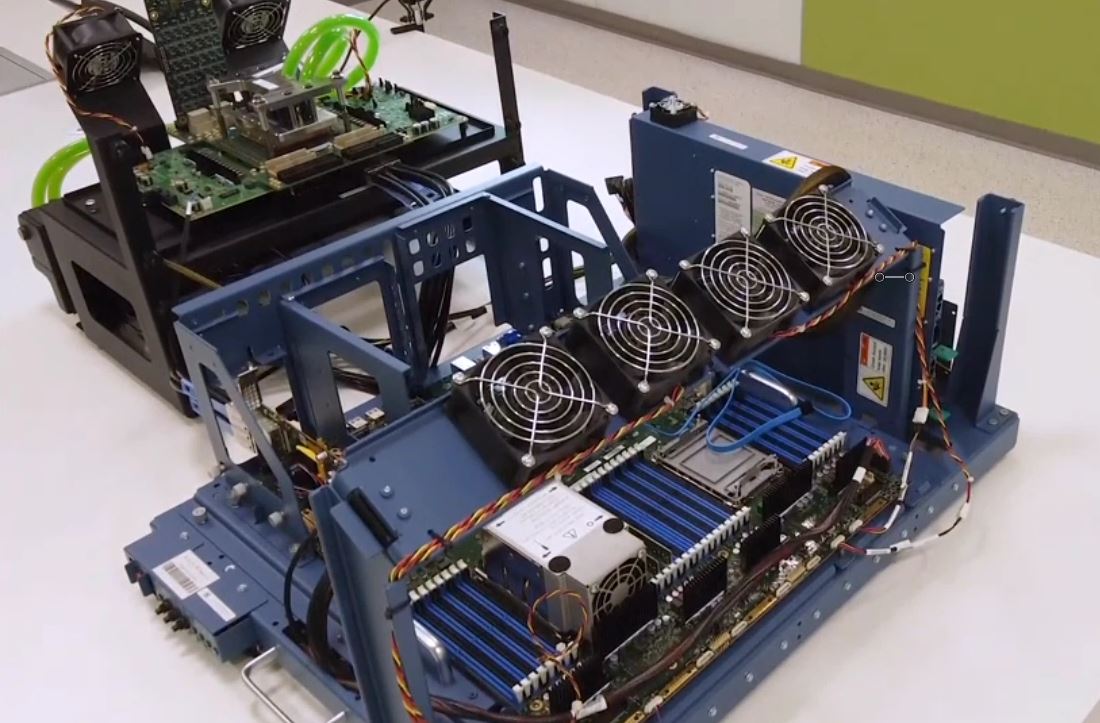

At the same time, as we saw in our Installing a 3rd Generation Intel Xeon Scalable LGA4189 CPU and Cooler piece, the new Intel socket is designed for higher TDPs. Between the 10nm power benefits and a higher TDP, we expect better performance per socket from Intel.

Intel has seen the rise of confidential computing and has had another generation to address side-channel vulnerabilities, so we expect features addressing both to be in Ice Lake Xeons.

Intel has stated that its Optane PMem 200 works in Memory Mode with the 3rd Generation Intel Xeon Scalable CPUs, but that is not a feature available with the 3rd Gen “Cooper Lake” parts, so it must be a feature of Ice Lake Xeons.

All told, the market for AMD will change with Ice Lake. Organizations intent on staying on an Intel platform will lose one of the biggest reasons to go AMD: the platform. When Intel matches or exceeds AMD on the memory side (with PMem 200 being an “exceed” example) and the PCIe situation becomes similar again, AMD will lose a major competitive advantage.

AMD rode the wave of having PCIe Gen4 roughly seven quarters before Intel. That meant a huge amount of PCIe Gen4 bring-up and validation work was done first on AMD rather than on Intel Xeon this generation. That has been a major draw for AMD, and that advantage will largely dissipate, save for the 160-lane PCIe Gen4 dual-socket (2P) and 128-lane single-socket configurations.
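The 160-lane 2P figure follows from simple lane accounting. A minimal sketch, assuming 128 lanes per EPYC socket and that each socket-to-socket xGMI link consumes an x16 lane group on each socket; how many xGMI links a board uses (3 or 4) is a platform design choice, and the function name here is hypothetical.

```python
LANES_PER_SOCKET = 128    # assumption: 128 PCIe Gen4-capable lanes per EPYC socket
LANES_PER_XGMI_LINK = 16  # assumption: each inter-socket link uses one x16 group per socket


def usable_pcie_lanes(sockets: int, xgmi_links: int = 0) -> int:
    """Hypothetical helper: lanes left for devices after inter-socket links."""
    if sockets == 1:
        # A single socket needs no xGMI links, so all lanes go to devices.
        return LANES_PER_SOCKET
    if sockets != 2:
        raise ValueError("EPYC 7003 supports one or two sockets")
    per_socket = LANES_PER_SOCKET - xgmi_links * LANES_PER_XGMI_LINK
    return sockets * per_socket


print("1P:", usable_pcie_lanes(1))
print("2P, 4 xGMI links:", usable_pcie_lanes(2, xgmi_links=4))
print("2P, 3 xGMI links:", usable_pcie_lanes(2, xgmi_links=3))
```

Dropping from four to three inter-socket links frees 16 lanes per socket, which is how a 2P system reaches 160 device lanes instead of 128, at the cost of some socket-to-socket bandwidth.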

Undoubtedly, if you want to consolidate sockets 2:1, AMD is still going to be the answer for the vast majority of the market. At the same time, competition for server parts with 8-32 cores is going to intensify greatly once Intel has a competitive platform around its cores.
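The 2:1 consolidation math is mostly core arithmetic. A rough sketch, assuming an existing pair of 2P servers with 28-core Cascade Lake parts being replaced by 64-core EPYC 7003 sockets; it counts cores only and ignores per-core performance, memory, and licensing differences, so treat it as a back-of-the-envelope check rather than a sizing tool.

```python
import math


def sockets_needed(total_cores: int, cores_per_socket: int) -> int:
    """Smallest number of sockets that provides at least total_cores cores."""
    return math.ceil(total_cores / cores_per_socket)


# Assumption: two existing 2P servers with 28-core Xeons (4 sockets total).
old_sockets = 4
total_cores = old_sockets * 28  # 112 cores to replace

# Assumption: replacement sockets are 64-core EPYC 7003 parts.
new_sockets = sockets_needed(total_cores, cores_per_socket=64)

ratio = old_sockets / new_sockets
print(f"{old_sockets} sockets -> {new_sockets} sockets ({ratio:.0f}:1)")
```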

For HPC applications, we have seen AMD win, and Milan’s memory and cache enhancements, rising TDPs and clocks, and simply having more cores than Intel’s Ice Lake Xeons mean that AMD will continue to do well there. Intel will always notch wins since it has long been a major supplier in the space, but with Xe HPC and Sapphire Rapids coming online in the next generation, Intel will have a completely different HPC value proposition than it has in the Milan vs. Ice Lake generation.

AMD is in a strong position because its servers have been in market for a long time, and that means this refresh cycle will focus on OEM offerings that did not previously exist for AMD platforms, increasing parity with Intel. Still, Intel will likely end up shipping more Ice Lake Xeon servers than AMD EPYC 7002 and 7003 servers combined, so we need to keep that in perspective.

Next, we want to give a framework for thinking about servers in 2021, and then we will have our final words.

Any reason why the 72F3 has such conservative clock speeds?

The 7443P looks as if it will become an STH subscriber favorite. Hopefully testing it is a prime objective :) Speed, per-core pricing, core count: that is where this CPU shines.

What are you trying to say with: “This is still a niche segment as consolidating 2x 2P to 1x 2P is often less attractive than 2x 2P to 1x 2P” ?

Have you heard of companies actually considering EPYC for virtualization, not just HPC? I’m seeing these more as HPC chips.

I’d like to send appreciation for your lack of brevity. This is a great mix of architecture, performance but also market impact.

The 28 and 56 core CPUs are because of the 8-core CCXs allowing better harvesting of dies with only one dead core. Previously, you couldn’t have a 7-core die because then you would have an asymmetric CPU with one 4-core CCX and one 3-core CCX. You would have to disable 2 cores to keep the CCXs symmetric. Now with the 8-core CCXs you can disable one core per die and use the 7-core dies to make 28-core and 56-core CPUs.
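The symmetry rule in that comment can be checked with a small helper. A sketch, assuming Zen 2 CCDs are two 4-core CCXs and Zen 3 CCDs are one 8-core CCX, and that every CCX on a die must keep the same number of active cores; the function name is illustrative only.

```python
def cores_per_ccd_options(ccx_per_ccd: int, cores_per_ccx: int) -> list[int]:
    """Active cores per CCD when every CCX on the die keeps the same core count."""
    return [ccx_per_ccd * active for active in range(1, cores_per_ccx + 1)]


# Zen 2 (Rome): two 4-core CCXs per CCD -> only even counts are symmetric.
zen2 = cores_per_ccd_options(ccx_per_ccd=2, cores_per_ccx=4)
# Zen 3 (Milan): one 8-core CCX per CCD -> any count from 1 to 8.
zen3 = cores_per_ccd_options(ccx_per_ccd=1, cores_per_ccx=8)

print("Zen 2 CCD options:", zen2)
print("Zen 3 CCD options:", zen3)
print("7-core CCD allowed? Zen 2:", 7 in zen2, "Zen 3:", 7 in zen3)
```

Under this rule, a die with one defective core can only be sold as a 7-core CCD on Zen 3; on Zen 2 it would have to drop to 6 cores (3+3).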

CCD count per CPU may be: 2/4/8

Core count per CCD may be: 1/2/3/4/5/6/7/8

So in theory these core counts per CPU are possible: 2/4/6/8/10/12/14/16/20/24/28/32/40/48/56/64
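That list can be reproduced by taking every product of a CCD count and a per-CCD core count. A sketch, assuming the CCD counts and per-CCD core counts listed in the comment and that every CCD in a package has the same number of active cores.

```python
from itertools import product

CCD_COUNTS = (2, 4, 8)       # CCDs per CPU, as listed in the comment
CORES_PER_CCD = range(1, 9)  # 1-8 active cores per Zen 3 CCD

# Assumption: all CCDs in a package have the same number of active cores.
core_counts = sorted({ccds * cores for ccds, cores in product(CCD_COUNTS, CORES_PER_CCD)})

print("/".join(map(str, core_counts)))
```

Running this prints the same sixteen values as the comment, from 2 up to 64 cores.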

I’m wondering if we’re going to see new EPYC Embedded solutions as well; these seem to be getting long in the tooth, much like the Xeon-D.

Is there any difference between 7V13 and 7763?