Today, as part of a “Big Week” at STH, we are taking a look at a system we have been working with that has 1.2PB of raw storage capacity, and that is not even the top-end configuration. The Dell EMC PowerEdge XE7100 is a 5U system that handles 100x 3.5″ HDDs, with flexible CPU, GPU, and SSD options that we were able to check out. This is going to be a longer review, but before we get into it, I want to address the challenged marketing of this server.

In a typical week, I get hands-on time with new servers that we are reviewing and many servers that we will never review on STH. Often these have cool designs. In addition to hands-on, I typically sit on 10+ hours of briefing calls every week with various vendors. After the briefing for the PowerEdge XE7100 for our launch coverage, most of our readers will have noticed that I was less than impressed. Then one arrived on a 410lb pallet and made me almost upset. I thought I was duped by Dell EMC marketing. The Dell EMC engineers behind this product did a fabulous job making an awesome box, but marketing is making it sound boring at best.

Today, we are fixing this situation and giving the Dell EMC XE7100 some proper collateral. The engineers and product team behind this deserve recognition, even if Dell EMC is trying not to promote it.

Dell EMC PowerEdge XE7100 Hardware Overview

This is a huge system. Just for some sense of scale, the pallet this review unit arrived on was 410lbs / 186kg.

As a result, we have a video so you can see the system, and hear about the impacts.

We suggest opening this in a new YouTube tab so you can listen along and see some B-Roll angles we are not covering here.

Since this is such a large system, we are going to break up the hardware overview into the chassis, and then compute options for the chassis.

Dell EMC PowerEdge XE7100 Chassis

The system itself is a 5U server. Many of the 90+ bay storage servers on the market are top-loading 4U units. Part of Dell’s offering is that this is actually a 5U unit that is shorter (911mm or ~36in) than many of the competitive units. We are going to start with the front and then take a look at the rest of the chassis.

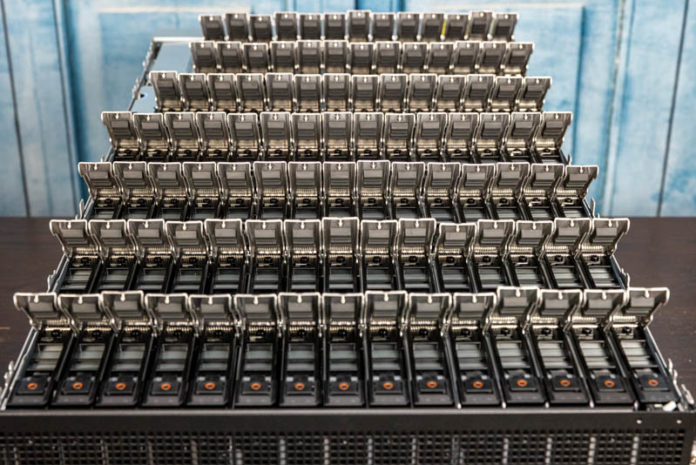

On the front, we can see the two expander / PERC controller / 2.5″ SSD bay units. These are actually quite important as they greatly impact the configurability of the system.

Each unit in our system has an expander and a PERC card, which can be either an HBA or a RAID unit. HBAs are popular with scale-out solutions that use replication and erasure coding instead of older RAID. This is a key feature of the solution versus competitive units. As one would expect, we do not get directly attached lanes to each drive since this is a 100-bay unit.

These bays have another feature. Each can house a 7mm 2.5″ drive. The trays are small, mostly plastic, and tool-less, which is a nice feature.

Dell is using high-density connectors to ensure these are easy to service. That may seem like a small feature, but many competitive solutions put HBAs and expanders on less robust connectors.

The rear of the system has three major components. These are the fans, power supplies, and compute nodes. We are going to discuss the fans and power supplies before moving to the compute nodes which are in their own section.

Everything on the rear is easy to service. A key part of the chassis is that it is designed to have most major components serviced inside a rack. There are a few components that are inside that we will talk about when we get to them.

Primary cooling for the drives is actually handled by six fans in redundant modules at the rear of the chassis. One can see that each fan has its own power and sensor connector. Some top-loading storage systems utilize front fans, while others have them in the interior. Placing them at the rear makes it easy to service the fans without having to open the chassis, which is great on a large and heavy system like this with so many rotating drives.

The power supplies are fairly standard Dell 80Plus Platinum units rated at 2.4kW. This is certainly a system where one can use a lot of power just given the number of components contained within, so large PSUs like this are needed.

Next, we are going to take a look at the 100x drive bays in this system.

Just out of curiosity, when a drive fails, what sort of information are you given regarding the location of said drive? Does it have LEDs on the drive trays, or are you told “row x, column y” or ???

On the PCB backplane and on the drive trays you can see the infrastructure for two status LEDs per drive. The system is also laid out to have all of the drive slots numbered and documented.

Unless you are into Ceph or the like and you are okay with huge failure domains (the only way to make Ceph economical for capacity), I just don’t understand this product. No dual path (HA) makes it a no-go. Perhaps there are places where a PB+ outage is no biggie.

Not sure what software you will run on this. Most clustered file systems or object storage systems that I know of recommend going for smaller nodes, primarily because of rebuild times. One vendor that I know recommends 10 – 15 drives per node, and drives of no more than 8TB.

I enjoyed your review of this system. Definitely cool features, and I love the flexibility with the GPU/SSD options. As you mentioned, this would not be an individual setup but rather integrated in a clustered setup. I’d also imagine it has the typical iDRAC functionality and all the Dell basics for monitoring.

After all the fun putting it together, do you get to keep it for a while and play with or once you reviewed it….just pack it up and ship it out?

Hans Henrik Hape, even with Ceph this is too big: losing 1.2 PB because one server fails means rebalancing plus network and CPU load on the nodes. It’s just a bad idea, not from a data loss point of view, but from the point of view of maintaining operations during a failure.
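To put a rough number on the rebalancing concern, here is a minimal sketch that estimates how long it would take to push one node's worth of data back through a single network link. It assumes the node was completely full (1.2 PB, decimal units), that one link is the only bottleneck, and that the link speeds used (25 and 100 Gbps) are merely illustrative. The function name `hours_to_move` and the `efficiency` parameter are hypothetical, and a real cluster spreads recovery across many nodes, so treat this as a pessimistic single-link bound rather than a prediction.

```python
def hours_to_move(capacity_pb: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Hours needed to move capacity_pb petabytes over one link.

    capacity_pb: data to re-replicate, in decimal petabytes (assumption: node was full).
    link_gbps:   nominal link speed in gigabits per second.
    efficiency:  fraction of the nominal link speed actually achieved (assumption).
    """
    total_bytes = capacity_pb * 1e15
    bytes_per_second = link_gbps * 1e9 / 8 * efficiency
    return total_bytes / bytes_per_second / 3600


if __name__ == "__main__":
    for gbps in (25, 100):
        print(f"{gbps} Gbps link: {hours_to_move(1.2, gbps):.1f} hours for 1.2 PB")
```

Under these assumptions a full 1.2 PB node is multiple days of traffic on a 25 Gbps link and still more than a day on 100 Gbps, which is the operational burden the comment above is pointing at.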

Great job STH. Patrick, you never stop surprising us with these kinds of reviews. Since you configured the system as a raw 1.2PB, can you check the internal bandwidth available using something like

iozone -t 1 -i 0 -i 1 -r 1M -s 1024G -+n

I would love to know: since there is no parity HDD, is it possible the bandwidth will be 250MBps x 100 HDDs, i.e. 25GBps? I’m sure there will be bottlenecks, maybe the PCIe bus speed for the HBA controller or a CPU limitation. But since you have it, it would be nice to know.

Again thanks for the great reviews

“250MBpsx100HDD i.e. 25GBps” Any 7200 RPM drive is capable of ~140 MB/sec sustained, so 100 of them would be ~14 GigaBYTES per second… Ergo, a 100 Gbps connection would then propel this server to hypothetical ~10 GB/sec transfers… As it is, 25 Gbps would limit it quite quickly.
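The back-of-the-envelope numbers in this exchange can be checked with a short sketch. It uses the two per-drive figures quoted in the comments (250 MB/s and 140 MB/s), 100 drives, and decimal units, and it ignores protocol overhead as well as any expander, HBA, or PCIe limits. The function names are made up for illustration.

```python
def aggregate_disk_gb_per_s(drives: int, mb_per_s_per_drive: float) -> float:
    """Ideal combined sequential throughput of all drives, in GB/s."""
    return drives * mb_per_s_per_drive / 1000.0


def link_gb_per_s(link_gbps: float) -> float:
    """Raw line rate of a network link in GB/s (8 bits per byte, no overhead)."""
    return link_gbps / 8.0


def bottleneck_gb_per_s(drives: int, mb_per_s_per_drive: float, link_gbps: float) -> float:
    """Whichever is lower: what the disks can deliver or what the link can carry."""
    return min(aggregate_disk_gb_per_s(drives, mb_per_s_per_drive),
               link_gb_per_s(link_gbps))


if __name__ == "__main__":
    for per_drive in (250, 140):
        disks = aggregate_disk_gb_per_s(100, per_drive)
        print(f"100 drives at {per_drive} MB/s: {disks:.1f} GB/s from disk")
        for gbps in (25, 100):
            limit = bottleneck_gb_per_s(100, per_drive, gbps)
            print(f"  behind a {gbps} Gbps link: {limit:.3f} GB/s")
```

With these inputs the disks come out at 25 GB/s or 14 GB/s, while the raw line rates are 3.125 GB/s for 25 Gbps and 12.5 GB/s for 100 Gbps before overhead, so in either case the network link, not the drives, is the first limit outside the chassis.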

A great review of this awesome server! I wish I had a few hundred K$ to spare, and, needed a couple of them!

Happy wise (wo)men who’ve got to play with such grownup toys.

There is a typo in the conclusion section compeition -> competition.

But otherwise a really cool review of a nice “toy” I sadly will never get to play with.

>AMD CPUs tend to have more I/O and core count which also means more power consumption

Ohrealy?

Perfect for my budget conscious Plex

James – if you look at Milan as an example, AMD does not even offer a sub 155W TDP CPU in the EPYC 7003 series. We discussed in that launch review why and it comes down to the I/O die using so much power.

While nothing was mentioned about prices, Dell typically charges at least a 100% premium for hard drives with its logo (none of them made by Dell), despite offering a warranty much shorter than the normal 5 years. So a combination of a 1U 2-CPU (AMD EPYC) server and a 102- to 106-bay 4U SAS JBOD from WD or Seagate will be MUCH cheaper, faster (in the right configuration), and much easier to upgrade if needed (the server part improves faster than SAS HDDs).

Color me unimpressed by this product despite impressive engineering.

Definitely getting one for my homelab

I just managed to purchase the 20 m.2 DSS FE1 card for my Dell R730xd. I did not do too much testing, but it works (both in ESXi and TrueNAS). And the cost for this card is an absolute bargain. Feel free to contact me for the card.