We received a lot of positive feedback from our Top500 June 2018 Edition New Systems Analysis and so we are going to use a similar format for this article. In the latest Top500 list, there were 153 newly added systems. That is a 15% uptick in the refresh rate over the June 2018 list. Since there are many ways of slicing the 500 data points, we are instead going to focus solely on the new systems that made the list for the first time. We will also discuss a practice that must stop, whereby companies are inflating their numbers without a real focus on the HPC community. The Top500 list is under siege and is in danger of becoming a web hosting cluster list if the trend continues.

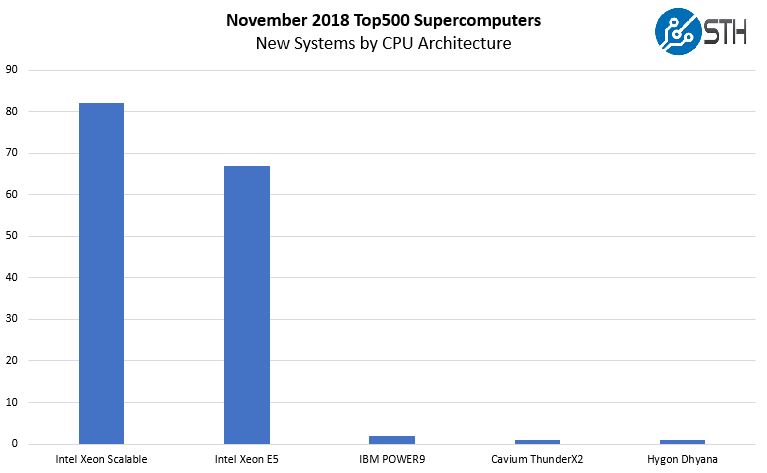

Top500 New System CPU Architecture Trends

Intel Xeon Scalable (Skylake-SP) launched in July 2017, over a year ago. You can see STH’s coverage at Intel Xeon Scalable Processor Family (Skylake-SP) Launch Coverage Central. More than a year later, one may expect that the new generation of CPUs has taken over. Instead, Intel Xeon Scalable is a distant second place on the new systems list.

Here is a breakdown based on CPU generation:

There are quite a few eyebrow-raising items on this list. First, Arm is now on the list! When I spoke at the Cavium ThunderX2 launch we had already seen announcements on HPC wins. Further, during our ThunderX2 review, one may have noticed a focus on the HPC space. Astra sees a #203 spot on the list, putting it firmly in the top half. This is a major accomplishment for the Marvell / Cavium, Arm, and ecosystem teams.

If you see the Hygon Dhyana and are wondering what that is, it is the AMD EPYC “inspired” Chinese version of AMD’s new x86 architecture that is sold only in China. Through a complex IP licensing scheme, AMD has partners in China that are building x86 CPUs based on its IP. You can read this Sugon PreE system debut as AMD EPYC with an asterisk. If someone says AMD EPYC is missing from the top 50, this is the system to look at. It is a 32-core, 2.0GHz CPU, so you can equate it to an AMD EPYC 7501.

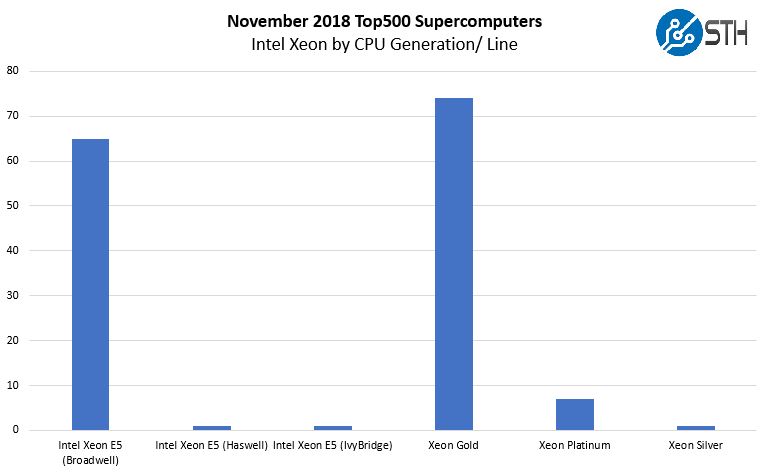

Intel Xeon CPUs by Generation and Line

Looking at the Intel architectures, we see that they completely dominate the list, and the broader market as a whole. We wanted to see two things: what the Intel Xeon E5 systems were, and what SKU levels organizations are using for Xeon Scalable after over a year on the market (see Intel Xeon Scalable Processor Family (Skylake-SP) Launch Coverage Central).

We know Intel Xeon E5 V4 “Broadwell” chips are still being sold in the market. Indeed, earlier in 2018 we saw a shortage of them as Intel pushed customers to Xeon Scalable. In the Intel Xeon Scalable line, the Intel Xeon Gold lineup is the most common, and indeed, more common than even the Intel Xeon E5 V4 generation. There are a few points we wanted to call out, since you may have looked at the above chart, as we did, and thought that it looks strange.

First, the Intel Xeon E5 Ivy Bridge system is a Sugon E5-2658v2 based system (10 cores per CPU) with no co-processors and connected via 10GbE. It is installed at an Internet company in China. Our sense is that a 5-year-old CPU is probably not being deployed in a new large-scale cluster in the second half of 2018. This seems to be Sugon following a trend of getting customers to run Linpack on their clusters and claiming them as Top500 systems.

The Haswell system is installed at the Institute of Atmospheric Physics, Chinese Academy of Sciences. This may not be a new system, but we are going to give that Sugon system a pass since it is installed for HPC research.

Intel Xeon Silver is used in a new system as well. This is a Lenovo system based around the 8-core Intel Xeon Silver 4110. This is a cluster based on 10GbE and installed at a Chinese service provider. With 9600 CPUs that is a sizable installation.
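To put "sizable" into numbers, here is a minimal sketch that multiplies the two figures quoted above. The variable names are hypothetical, and it assumes every one of the 9600 CPUs is the 8-core Xeon Silver 4110 named in the text.

```python
# Figures quoted in the article for the Lenovo Xeon Silver cluster.
# Assumption: all 9600 CPUs are the 8-core Intel Xeon Silver 4110.
cpus = 9600
cores_per_cpu = 8

# Total CPU cores is simply sockets times cores per socket.
total_cores = cpus * cores_per_cpu
print(f"{cpus:,} CPUs x {cores_per_cpu} cores = {total_cores:,} cores")
```

Under that assumption, the cluster works out to 76,800 CPU cores, all built from a low-end SKU and connected over 10GbE.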

Heading to the top end, five of the seven Intel Xeon Platinum systems use the Intel Xeon Platinum 8160 and two use the Intel Xeon Platinum 8174. Also interesting here, two of the Platinum systems use Omni-Path and no accelerator, including the new #8 SuperMUC-NG from Germany. Five are using the NVIDIA Tesla V100, including the DGX-2 based Circe (#61).

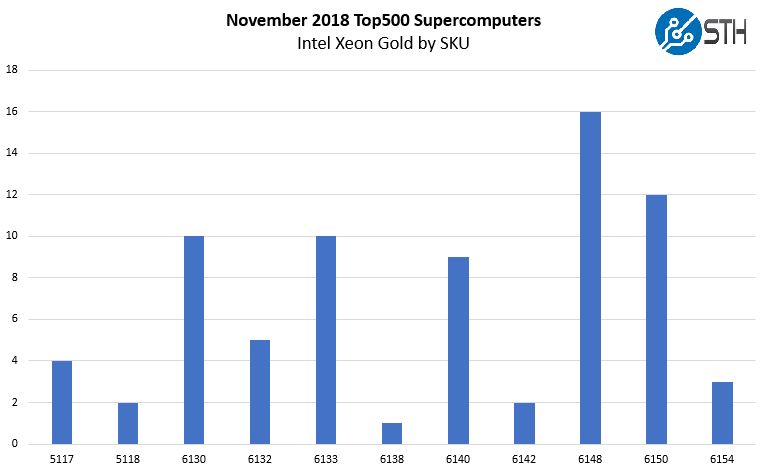

Delving Into New Intel Xeon Gold Deployments

Since the most common CPU family was Intel Xeon Gold, we wanted to show which SKUs are being deployed. One item you will notice immediately is that 10 of the new systems are using the Intel Xeon Gold 6133, a 2.5GHz, 20-core part.

As was the case last time, the Intel Xeon Gold 6148 remains immensely popular. In our Intel Xeon Gold 6148 Review, we called it the “go-to HPC chip.” We also called the Intel Xeon Gold 6130 “a great SKU” in our review. The Gold 6150 was one of the first CPUs we looked at in this generation immediately after launch and the Gold 6140 also seems to be popular. You will also notice that over 90% of these new Intel Xeon Scalable Gold series deployments are Xeon Gold 6100 series over Gold 5100 series.

With the November 2018 list, the Intel Xeon Gold family was a big winner. We only saw 24 new Intel Xeon Gold systems in the June 2018 list so the momentum is picking up.

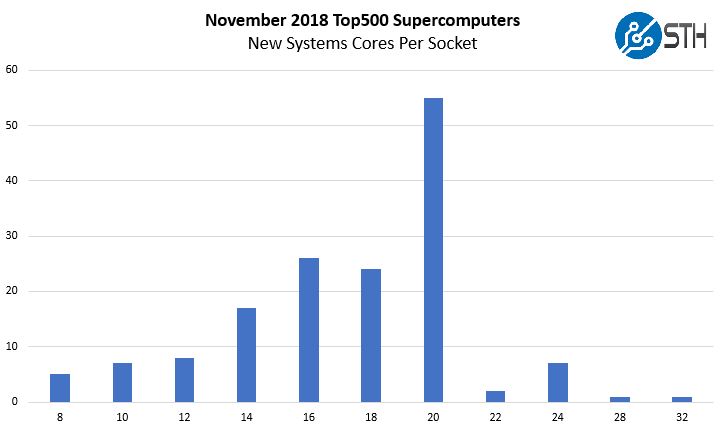

CPU Cores Per Socket

Here is an intriguing chart, looking at the new systems and the number of cores they have per socket.

Over a third of the systems use 20 cores per socket, but there is an enormous drop off after that. Twenty was also the favorite in the June 2018 list. The thirty-two core per socket maximum seen here did not appear on the June 2018 list, while new 60+ core per socket systems are absent from the November list. The range has narrowed considerably now that AMD (and variants), Arm with Marvell’s ThunderX2, and Intel all offer similar ranges of per-core performance, resulting in a fairly narrow band.

We also wanted to note quickly that this shows Knights Landing/Knights Mill is not being deployed, as Intel Xeon Scalable’s AVX-512 has tempered the need for that platform.

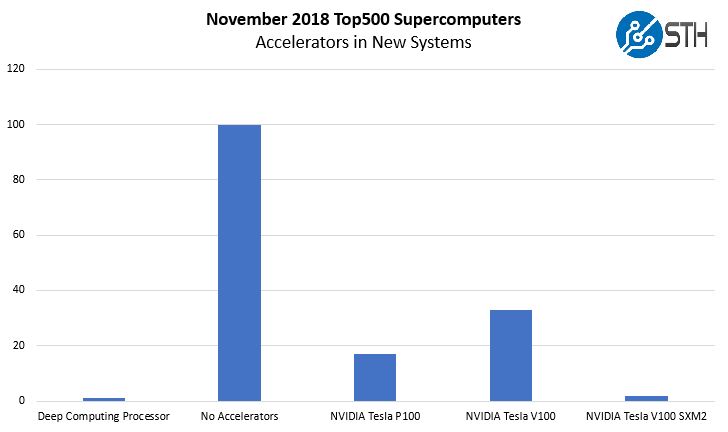

Accelerators or Just NVIDIA?

Unlike in the June 2018 list, NVIDIA is not the only accelerator vendor for the new systems. They are, however, the overall leaders in the space. Here is a breakdown:

The Deep Computing Processor is the accelerator paired with the AMD EPYC based Hygon Dhyana system. The rest are primarily NVIDIA Volta systems. At the same time, 100 of the 153 systems do not use accelerators. While NVIDIA is popular, it is also safe to say they are nowhere near 100% saturation.
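As a quick check on the "nowhere near 100% saturation" point, the split can be worked out from the two counts quoted above. This is a minimal sketch; the constant and function names are hypothetical, and the only inputs are the 153 and 100 figures from the text.

```python
NEW_SYSTEMS = 153      # new entries on the November 2018 list (from the article)
NO_ACCELERATOR = 100   # new systems without an accelerator (from the article)


def share(count: int, total: int) -> float:
    """Return count as a percentage of total."""
    return 100.0 * count / total


# Everything that is not accelerator-free uses some accelerator.
with_accelerator = NEW_SYSTEMS - NO_ACCELERATOR

print(f"No accelerator:   {NO_ACCELERATOR} ({share(NO_ACCELERATOR, NEW_SYSTEMS):.1f}%)")
print(f"With accelerator: {with_accelerator} ({share(with_accelerator, NEW_SYSTEMS):.1f}%)")
```

That leaves 53 accelerated systems, roughly a third of the new entries, and that figure covers all accelerator vendors rather than NVIDIA alone.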

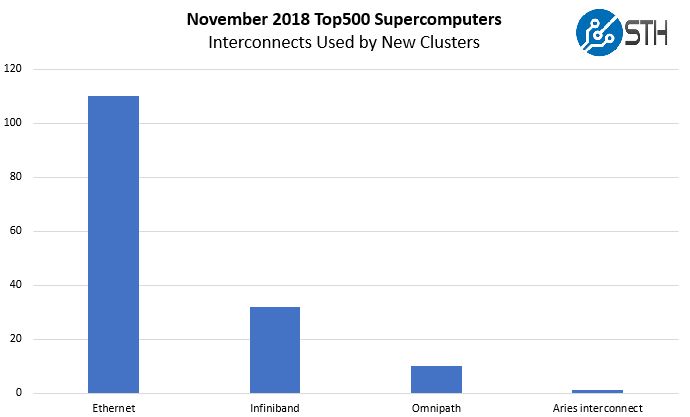

Fabric and Networking Trends

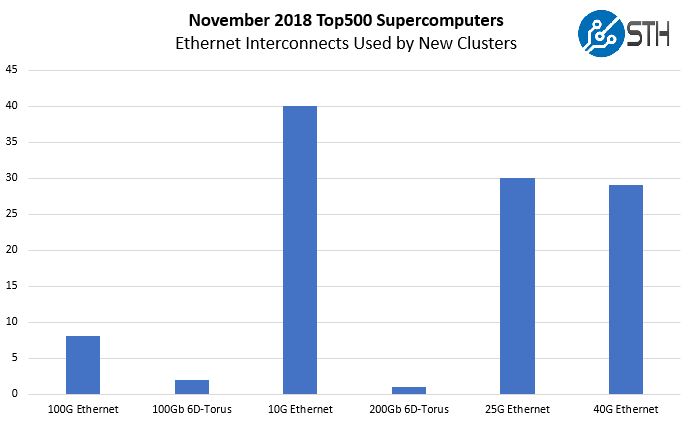

One may think that custom interconnects, InfiniBand, and Omni-Path are the top choices on the Top500 list’s new systems. Instead, we see 110 of the 153 new systems, over 70%, using Ethernet.

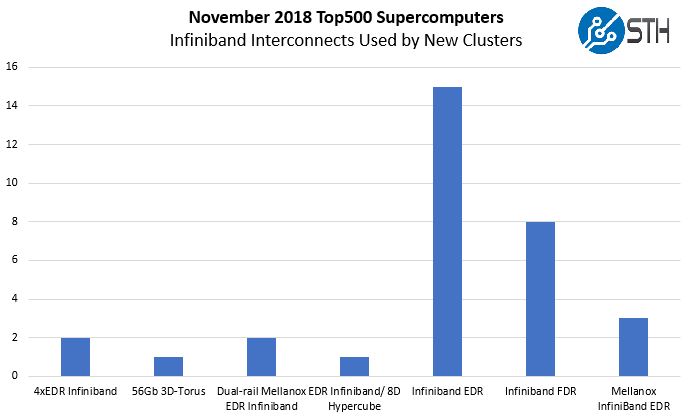

Omni-Path notches a decent ten new systems. The Aries interconnect also gets a single win. It is still a heavily InfiniBand and Ethernet world. For InfiniBand, here is the breakout:

As you can see, 56Gbps InfiniBand is losing out to the 100Gbps generation. We will soon see 200Gbps generation infrastructure, but for this list, EDR is king.
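The interconnect counts quoted in the text can be tallied with a short sketch. The dictionary and variable names are hypothetical. Only the Ethernet, Omni-Path, and Aries counts are stated in prose, so the remainder is labeled as InfiniBand plus anything else shown only in the charts, not as an InfiniBand count.

```python
new_systems = 153  # new entries on the November 2018 list (from the article)

# Counts stated in the article text; InfiniBand is only shown in the chart.
quoted = {"Ethernet": 110, "Omni-Path": 10, "Aries": 1}

# Assumption: whatever is left is InfiniBand plus any other interconnects.
remainder = new_systems - sum(quoted.values())

for name, count in quoted.items():
    print(f"{name:<22} {count:>3}  {100 * count / new_systems:5.1f}%")
print(f"{'InfiniBand and other':<22} {remainder:>3}  {100 * remainder / new_systems:5.1f}%")
```

Ethernet alone comes out at just under 72% of new systems, which is the "over 70%" figure, while everything else combined accounts for the remaining 43 systems.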

Here is the Ethernet break out, and it deserves some discussion.

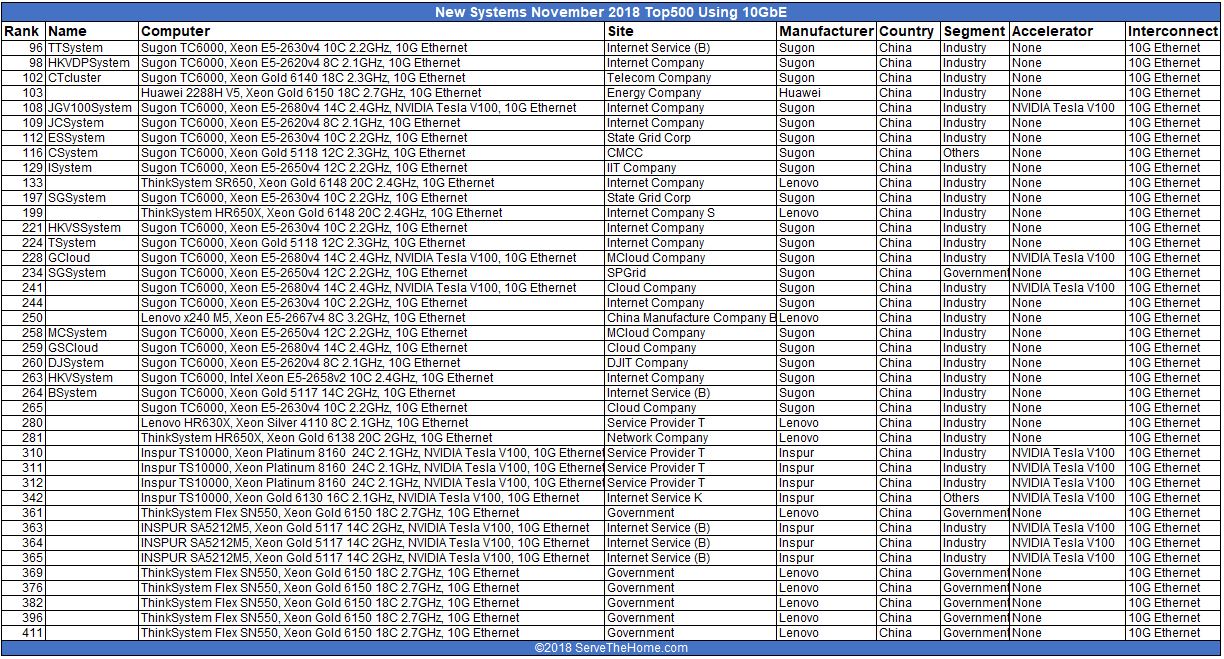

Let us discuss this for a moment. The 10GbE category is made up of systems using a relatively low speed interconnect by modern standards. Here is the list of 10GbE systems.

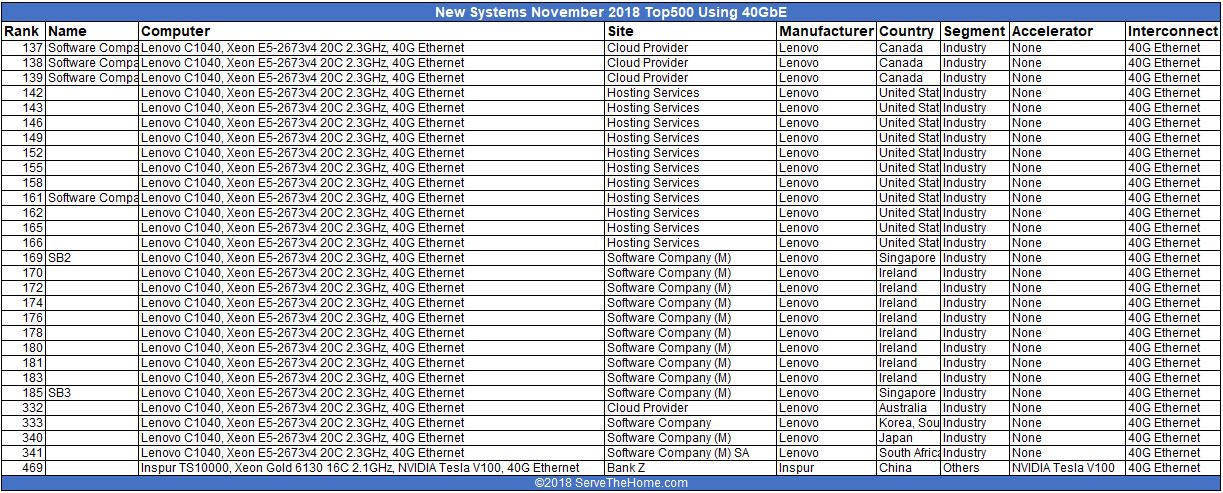

40GbE is the higher bandwidth Ethernet interconnect, but it is still a higher latency interconnect than today’s 100GbE standard. Here are the 40GbE systems on the list:

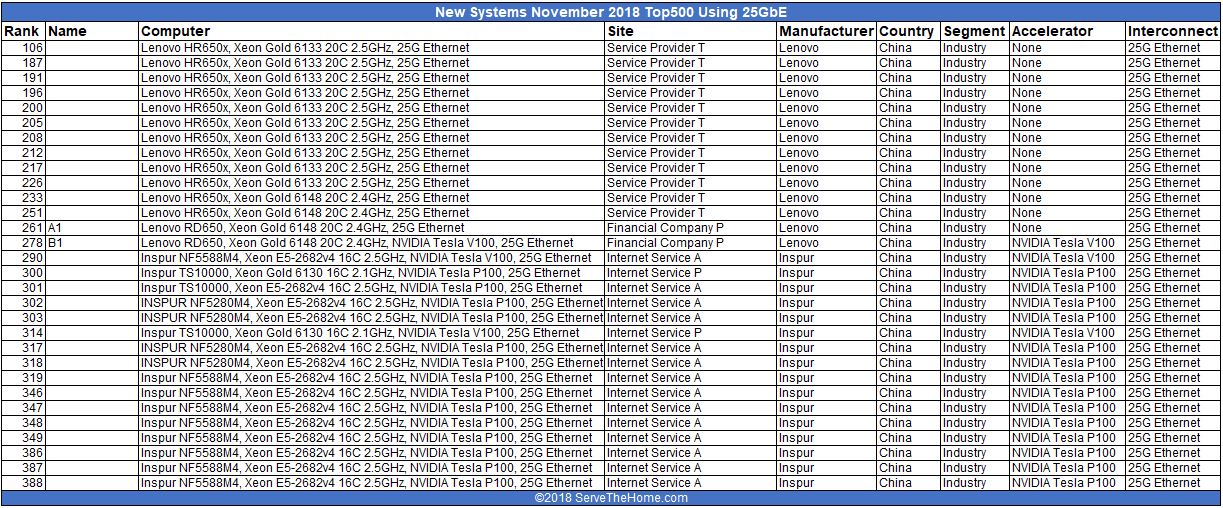

In the data center, 25GbE is becoming extremely popular. Here are the systems.

These systems are for service providers, hosting companies and the like. There are several NVIDIA Tesla P100/V100 systems, which make sense here. These may be cloud clusters or similar installations doing machine learning work.

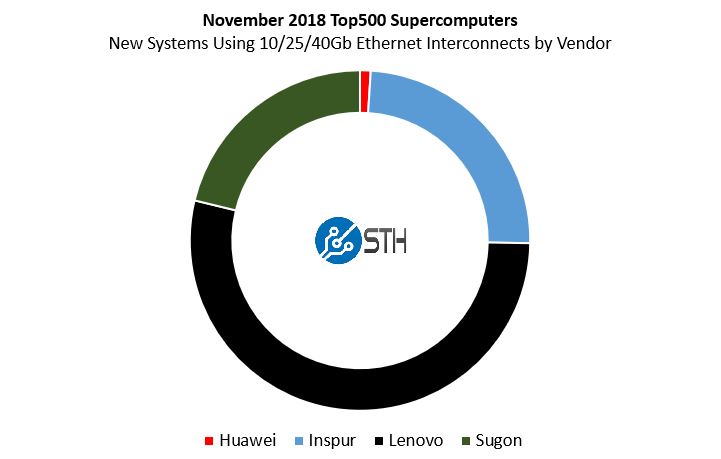

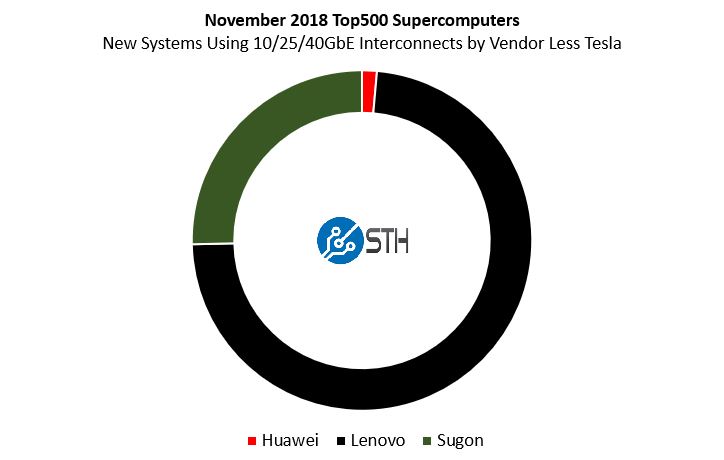

The NVIDIA Tesla P100 and V100 systems that use Ethernet are mostly from Inspur, with a few systems from Sugon and a single system from Lenovo. We removed the NVIDIA accelerator systems, and this is what the view looks like for system vendors that are submitting results for systems using lower-speed (non-100GbE) Ethernet and no NVIDIA Tesla accelerators.

Lenovo boasts that it is the biggest vendor by number of new systems, but 52 of its 69 new Top500 systems seem to be installed at the likes of “Hosting Services” providers (11 of the 69).

This practice of submitting results from non-HPC clusters must stop. We know there are companies with massive infrastructures but they are not running Linpack just to make the list.

Final Words

A few points stuck out. Mellanox is doing well in the HPC space, and Intel needs to do something in the next-generation OPA to change the game. We expect, or at least hope, that Intel OPA200 also has the ability to run Ethernet, since it would be a game changer in networking. Intel is doing well with great overall share and growth of Intel Xeon Scalable, and the Intel Xeon Gold is the clear favorite in this space. NVIDIA has another list with a stranglehold on new system accelerators but still has some way to go for complete adoption.

At the end of the day, the practice of running Linpack on hosting clusters that are not doing HPC research devalues the list. We hope the Top500 folks stop allowing vendors to submit results for systems that are clearly being used for non-HPC style applications as part of a single cluster.