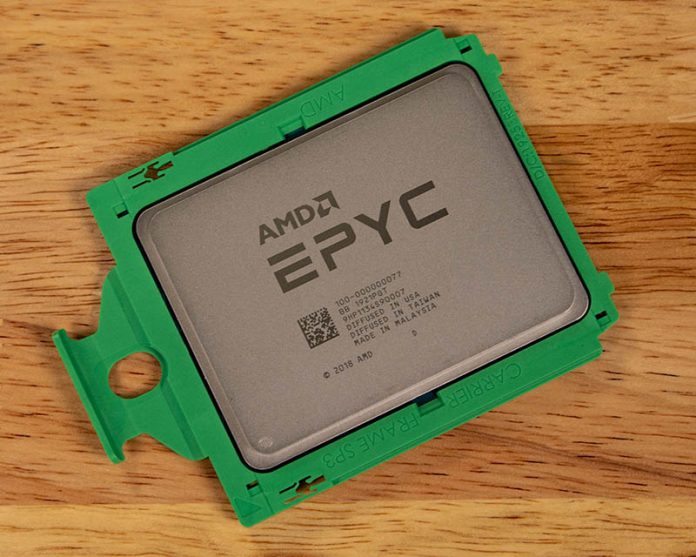

Microsoft Azure has a team that is passionate about high-performance computing, with backgrounds from places like supercomputer centers. The company has been building a supercomputer in the cloud that lets customers consume HPC without having to build out a site. Unlike some other cloud vendors, Microsoft is building HPC-specific infrastructure: its Azure HBv2 instances use AMD EPYC 7002 series CPUs with SMT turned off and Mellanox interconnects.

Microsoft Azure HBv2 HPC Instances

The Microsoft Azure HBv2 instances are designed for HPC, not general-purpose workloads. Each HBv2 virtual machine includes 120 AMD EPYC 7002 series cores at up to 3.3GHz. That aligns with the AMD EPYC 7H12 HPC part as well as the EPYC 7642. Rumor has it that these are actually the AMD EPYC 7742 CPUs that we reviewed. Microsoft says these VMs are capable of 4 TFLOPs (double-precision) and 8 TFLOPs (single-precision).

Each VM also gets 480GB of RAM and 480MB of L3 cache. These are far from small nodes. That large L3 cache is something Intel has tried to partially address with its Big 2nd Gen Intel Xeon Scalable Refresh, but that solution is still limited to 38.5MB per 28 cores, or 77MB of L3 cache in a 56-core 2P server. In addition, HBv2 VMs can hit 340GB/s of memory bandwidth thanks to higher-speed DDR4 and 8-channel memory.
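To put those totals in per-core terms, here is a minimal Python sketch using only the figures quoted above. The dictionary names and the `per_core` helper are ours for illustration, and the result is a simple average rather than a statement about how cache or bandwidth is actually partitioned in hardware.

```python
# Figures as quoted in the article; per-core values are plain averages.
HBV2 = {"cores": 120, "ram_gb": 480, "l3_mb": 480, "mem_bw_gbs": 340}
XEON_2P = {"cores": 56, "l3_mb": 77}  # 2x 28-core parts with 38.5MB L3 each


def per_core(total: float, cores: int) -> float:
    """Average amount of a resource available per core."""
    return total / cores


print(f"HBv2 RAM per core:       {per_core(HBV2['ram_gb'], HBV2['cores']):.2f} GB")
print(f"HBv2 L3 per core:        {per_core(HBV2['l3_mb'], HBV2['cores']):.2f} MB")
print(f"HBv2 bandwidth per core: {per_core(HBV2['mem_bw_gbs'], HBV2['cores']):.2f} GB/s")
print(f"Xeon 2P L3 per core:     {per_core(XEON_2P['l3_mb'], XEON_2P['cores']):.3f} MB")
```

On these numbers, an HBv2 VM averages 4GB of RAM and 4MB of L3 per core, versus roughly 1.4MB of L3 per core on the 56-core Xeon configuration.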

Networking is also a big story. The Azure HBv2 includes Mellanox HDR InfiniBand, which runs at 200Gbps. The HPC team at Azure built a high-speed InfiniBand network just to service the HPC clusters. This is not re-purposed 25GbE from general-purpose machines. Mellanox HDR requires PCIe Gen4 to run at full speed in a single x16 slot. One of the AMD EPYC 7002 series advantages is that the CPUs have had PCIe Gen4 for over a year before the Intel Ice Lake Xeon series arrives in 2H 2020, assuming it stays on schedule. Using this AMD EPYC feature is why the Azure HPC team is able to build networks that Intel CPUs cannot use as easily. Users of the VMs will use standard Mellanox OFED drivers with all RDMA verbs and MPI variants, which makes HPC in the cloud easy to use.
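The PCIe Gen4 requirement comes down to simple arithmetic, sketched below in Python. The per-lane rates (8 GT/s for Gen3, 16 GT/s for Gen4) and the 128b/130b line encoding are standard PCIe figures; the function name is ours, and the estimate ignores packet and protocol overhead, so real-world throughput is somewhat lower.

```python
# Rough one-direction PCIe link bandwidth, accounting only for line encoding.
GT_PER_LANE = {"gen3": 8.0, "gen4": 16.0}  # giga-transfers per second per lane
ENCODING = 128 / 130                       # 128b/130b encoding efficiency
HDR_GBPS = 200.0                           # Mellanox HDR InfiniBand line rate


def pcie_gbps(gen: str, lanes: int) -> float:
    """Approximate link bandwidth in Gbps for a PCIe generation and lane count."""
    return GT_PER_LANE[gen] * ENCODING * lanes


for gen, lanes in [("gen3", 16), ("gen4", 16), ("gen3", 32)]:
    bw = pcie_gbps(gen, lanes)
    print(f"{gen} x{lanes}: {bw:.1f} Gbps, enough for HDR: {bw >= HDR_GBPS}")
```

A Gen3 x16 slot tops out at about 126Gbps, well short of HDR's 200Gbps, while a Gen4 x16 slot offers about 252Gbps. On a Gen3 platform, matching that would take 32 lanes, which means spanning two x16 slots.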

In Microsoft’s blog post on the new instances, they highlight case studies of weather and CFD tasks scaling to between 640 and 672 nodes for HPC applications. That is 76,800 to 80,640 cores being utilized per workload, which amounts to racks of machines.
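Those core counts follow directly from the 120 cores per HBv2 VM. A quick Python check, where the `total_cores` helper is ours for illustration:

```python
CORES_PER_VM = 120  # cores exposed by each HBv2 virtual machine


def total_cores(nodes: int) -> int:
    """Total cores across a job spanning the given number of HBv2 VMs."""
    return nodes * CORES_PER_VM


for nodes in (640, 672):
    print(f"{nodes} nodes -> {total_cores(nodes):,} cores")
```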

Storage has always been one of the challenges of HPC in the cloud. The new Azure HBv2 VMs include NVMeDirect technology and RDMA networking to provide high-speed parallel storage. Microsoft is seeing 763GB/s read and 352GB/s write performance (peak) using the BeeOND filesystem.

Final Words

One thing is clear: the AMD EPYC 7002 series is making an impact in the HPC space. Microsoft Azure’s selection of the EPYC 7002 series for its HBv2 HPC infrastructure is a major validation of the design. Microsoft Azure teams are generally privy to roadmaps and get early access to technology from every major vendor, as well as hyper-scale pricing. As a result, the Azure HPC team has the ability to choose what it believes is best for its customers and to scale its business to a larger customer base. Make no mistake, this is a significant validation of the AMD EPYC 7002 series from multiple points of view, including Azure as the service provider and its customers.

We have seen Cray and AMD win big for the next-generation exascale supercomputers, so the outlook seems bright here.

Microsoft has had a team dedicated to cloud HPC for years. The team is driving innovation in the space and is poised to take additional market share from on-prem deployments since it offers cloud benefits in a very on-prem focused ecosystem. From what we understand, this is not just one cluster of a few hundred machines to support the new HBv2 instance types. Instead, it is starting in the Central and South Central US regions with GA today, and is scheduled to expand to several other regions including West Europe, West US 2, and Japan East in Q2 2020 according to Azure’s tracker. Since this is a more expansive roll-out than a single small cluster in one region, it is a fairly strong indicator that the Azure HPC team is seeing success with its offering and that there is customer demand for these new HBv2 instances. Azure is bringing hyper-scale to the cloud HPC market and we hope that the team (some of whom are STH readers) has continued success in the space.

Editor’s note: we are going live with this piece but are still awaiting a few images from the Azure team. It will likely be updated with additional images in the near future.

This is very cool stuff, but a bit disappointed that pricing wasn’t mentioned. To save others time, the South Central US region pricing is:

Pay as you go: $3.96/hour

1 year reserved: $2.9700/hour (~25%)

3 year reserved: $1.9800/hour (~50%)

Spot pricing: $0.9002/hour (~77%)

Honestly, the pricing seems pretty good for all the features being offered.

@ssnseawolf

Are these prices for renting a single HBv2 virtual machine (all 120 cores and 480GB of RAM)?

Can you rent these Azure nodes if you are located in a different geographical region?

I don’t understand why does the Mellanox pic say x32 Gen3 lanes. How does it connect to two slots?