The Inspur NF5280M6 is the company’s mainstream dual-socket Intel Xeon server and the generational update to the Inspur NF5280M5 we reviewed previously. With this update come support for 3rd Generation Intel Xeon Scalable processors (codenamed Ice Lake), PCIe Gen4, and a host of other new features. In our review, we are going to get into the details as we normally do. Let us get to it.

Inspur NF5280M6 Hardware Overview

As our reviews have gone into more depth, we have split the hardware overview section into two parts: the external overview and then the internal overview. We will continue that tradition here. We also have a video for this review.


Inspur NF5280M6 External Overview

The NF5280M6 is Inspur’s 2U offering. The 2U form factor does not have the same CPU and memory density as 1U systems, but it offers much more storage and PCIe card expandability.

Starting with the front of the server, we have the standard LED indicators and the power button on one rack ear. Placing these controls on the rack ear leaves the rest of the front panel free for airflow and a flexible front storage area.

On the right rack ear, we get a VGA port and two USB 3 ports, so one can manage this server locally from the cold aisle.

The front of our system is in a 24x 2.5″ SFF configuration. One can see the large airflow vents between the blocks of drives, as has become standard in modern servers. We will briefly go over some of the other options later in this section.

One fun item is that the drives are labeled 0-23, not 1-24. The drive bays can be set up for SAS, SATA, or NVMe. As we are seeing with many new servers, the drive carriers are tool-less, which makes for fast drive swaps.

The front of the system is very modular. Each block of eight drives is serviced by its own backplane, and each backplane is held in place by a single thumbscrew.
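Because the bays are numbered from 0 and grouped eight to a backplane, translating a bay label into its backplane is simple integer division. This is a minimal sketch under an assumption we have not verified against Inspur’s documentation: that labels run contiguously, eight per backplane (0-7, 8-15, 16-23). The `bay_to_backplane` helper is hypothetical and is not an Inspur tool.

```python
# Hypothetical helper for the 24x 2.5" SFF front configuration.
# ASSUMPTION: bay labels run contiguously, eight per backplane
# (0-7 on backplane 0, 8-15 on backplane 1, 16-23 on backplane 2).

BAYS_PER_BACKPLANE = 8
TOTAL_BAYS = 24


def bay_to_backplane(label: int) -> tuple[int, int]:
    """Return (backplane_index, slot_within_backplane), both 0-indexed."""
    if not 0 <= label < TOTAL_BAYS:
        raise ValueError(f"bay label must be 0-{TOTAL_BAYS - 1}, got {label}")
    # divmod gives the quotient (which backplane) and remainder (which slot).
    return divmod(label, BAYS_PER_BACKPLANE)


if __name__ == "__main__":
    for label in (0, 7, 8, 23):
        backplane, slot = bay_to_backplane(label)
        print(f"bay {label}: backplane {backplane}, slot {slot}")
```

Zero-based labels make this mapping free of the off-by-one adjustments a 1-24 scheme would need.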

Our system is in what is called the 8x PCIe configuration, with space for eight full-height PCIe cards when it is fully populated with risers. We only have one of the three risers installed here.

On the left side, we can see the chassis with the LFF HDD and PCIe riser spots marked for when those options are installed. On the bottom, there is an OCP NIC 3.0 slot populated with a Mellanox NVIDIA MCX566A-CDAB, which is a 100GbE ConnectX-5 EN card.

In the middle, we can see another stack designed for risers or LFF options. Below that are a few features. First, there is space for two SFP+ cages. This is for the Intel X710 dual 10GbE SFP+ option, which is not present in our system; we will point out where it would be populated on the motherboard in our internal overview. There are then two USB 3.0 ports, a VGA port, and an out-of-band management IPMI port. Note that without the dual X710 option, the server does not have onboard networking.

On the right side of the rear, we have two riser slots that sit above the CPUs. This side also holds the rear storage and the power supplies. Let us look at those three features next.

First, we have a dual-slot riser that sits above the power supplies. This riser holds both our storage controller and our 10/25GbE networking for the system. That leaves the other expansion areas open for those who want to add accelerators or other cards.

Next to this are two SSD slots. Each has its own carrier, and there are options for both SATA and NVMe here. Our system has the M.2 setup, but the server also offers EDSFF options, which makes sense given how this area is built. Placing boot drives at the rear instead of inside the chassis is a feature we see on many servers these days because it improves serviceability.

On the power supply side, we have two 80 PLUS Platinum power supplies rated at 1.6kW each. Inspur offers options ranging from 500W to 2kW, spanning Platinum and Titanium efficiency ratings.

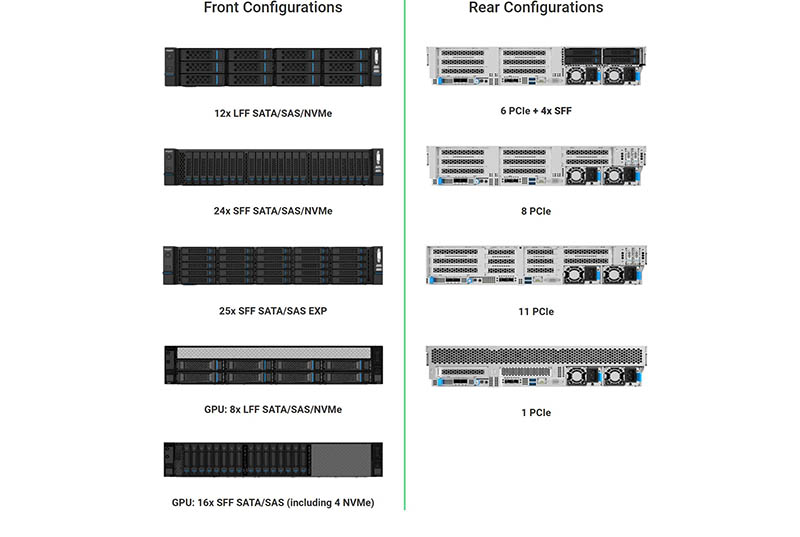

In terms of front and rear configurations, we found five front options and four rear options listed. The front options span 3.5″ and 2.5″ form factors, along with options that allow more airflow for GPU cooling.

On the rear, while our system uses the 8x PCIe configuration, there is also an 11x PCIe configuration, and the system can support up to eight NVIDIA T4 GPUs. There is also a 2.5″ rear storage option. We saw mentions of HDDs in the rear left and center riser sections, but those were not listed on this chart.

Next, we are going to get inside the NF5280M6.
