Today we have a review of the Inspur NF5180M6. This is Inspur’s mainstream 1U server designed to support two Ice Lake generation Xeons. We previously looked at the M5 generation of this server in our Inspur NF5180M5 Flexible 1U Server Review. Now it is time to look at the newest iteration, which offers a fairly large generational leap. We were also able to do some extra testing with this machine and compare it to its 2U counterpart. Let us get to the review.

Inspur NF5180M6 Hardware Overview

As our reviews have gone into more depth, we have split the hardware overview section into two parts, the external and then the internal overview. We will continue that tradition here. Also, we have a video for this review that you can find here:

We always suggest opening this in a new browser tab or the YouTube app for the best viewing experience.

Inspur NF5180M6 External Hardware Overview

The Inspur NF5180M6 is a 1U server that, as we go through it, will remind many of our readers of the Inspur NF5280M6 2U server we reviewed. Inspur’s methodology is to build one base motherboard platform and then customize the I/O and chassis for 1U density or 2U expandability. That drives volume, and with more systems deployed, quality.

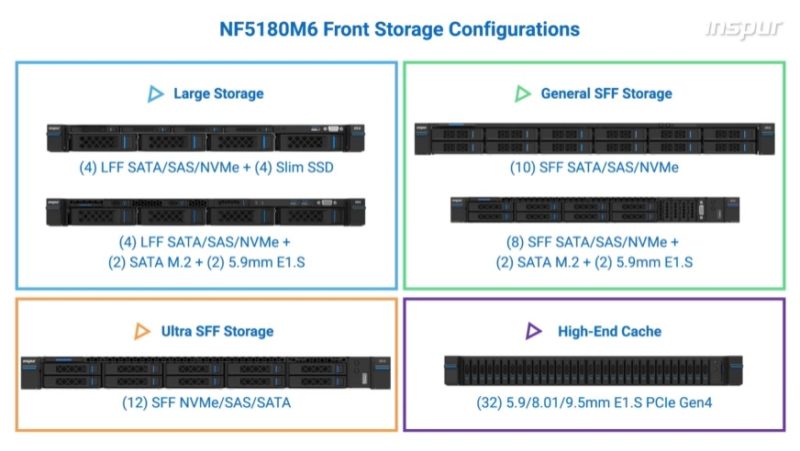

The front of the NF5180M6 has twelve 2.5″ drive bays. These bays can be SAS, SATA, or NVMe depending on the configuration. We should quickly note that Inspur offers a number of other front storage options, including a solution with up to 32x E1.S EDSFF SSDs for high-end storage.

On the Ultra SFF option that we have, the drive bays take up almost the entire 1U front faceplate.

As a result, the power button and LEDs are very small and sit just above the SSDs. Small buttons normally make us nervous, but these worked reasonably well, and they were not easy to press inadvertently.

On the rear of the system, we have the usual block of two USB ports, a management port, and a VGA port. There is also a small serial port. Let us get into some of the details.

On the left side, we have an OCP NIC slot.

In our unit, the OCP NIC 3.0 slot holds an NVIDIA ConnectX-5 adapter, but a variety of cards are available for this slot. This is an industry-standard NIC form factor, and Inspur is using what is probably the most popular faceplate design as well.

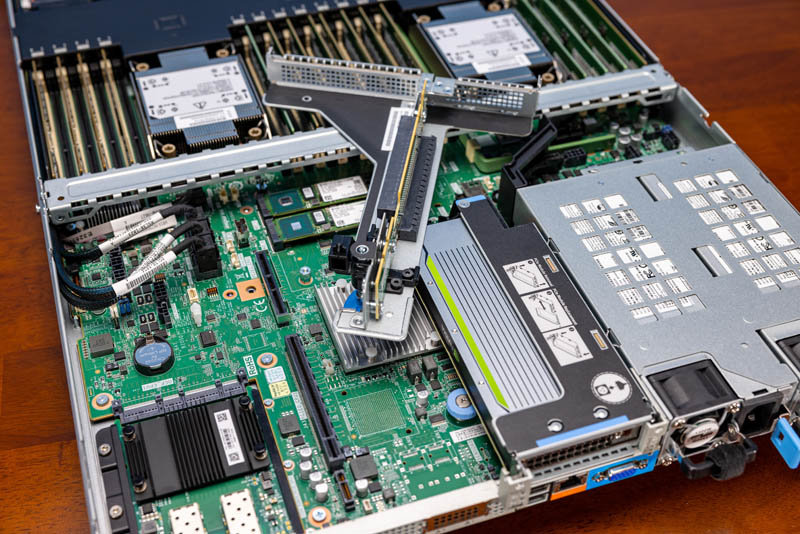

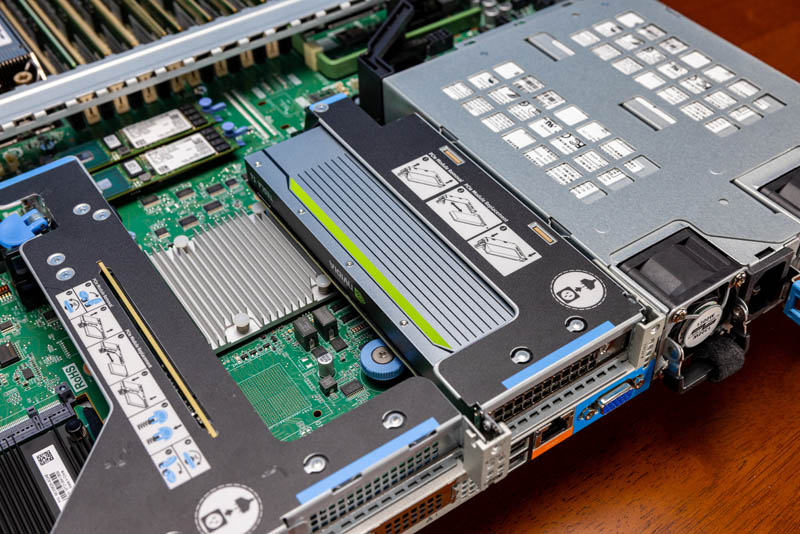

Next, let us discuss the risers. First, we have a dual PCIe Gen4 x16 riser with one full-height slot and one low-profile slot.

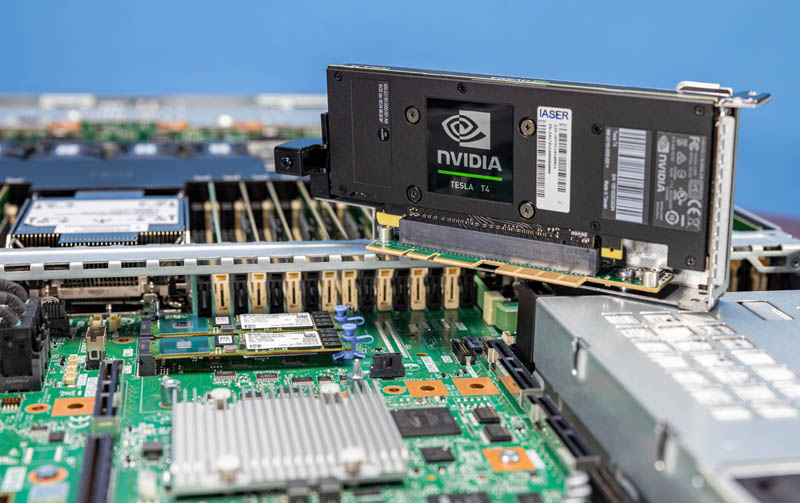

You will see that we have an NVIDIA T4 in the middle PCIe slot. We also tried other accelerators, up to full-height FPGAs with HBM2, and this server handled those configurations as well.

This is also a PCIe Gen4 riser. In total, one gets the OCP NIC 3.0 slot along with three PCIe Gen4 x16 slots, which is quite a bit of expandability for a 1U platform.

As with the front, the rear I/O is also configurable, and Inspur offers options for rear drive bays and more.

In terms of power supplies, we get two redundant, hot-swappable 1.3kW units.

Next, let us get inside the system.


