The HPE ProLiant DL325 Gen10 is a 1U single-socket AMD EPYC server that we have a lot of experience with. While we are going to review a 64-core AMD EPYC 7002 edition of this server, our experience goes well beyond this one test unit. We run around a dozen of these servers, each equipped with an AMD EPYC 7401P and SSDs, in the STH lab and in the data centers we use. In our review, we are going to go over the hardware and show what we have learned running these servers for several quarters.

HPE ProLiant DL325 Gen10 Overview

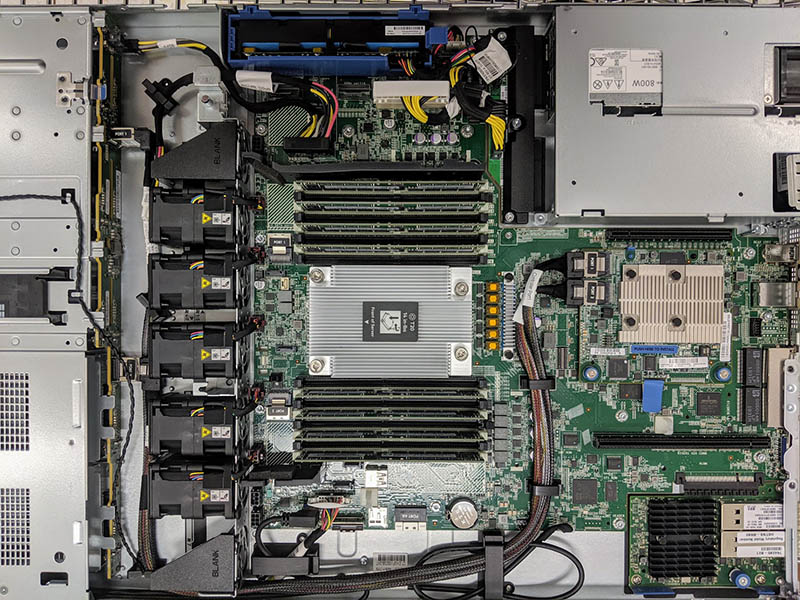

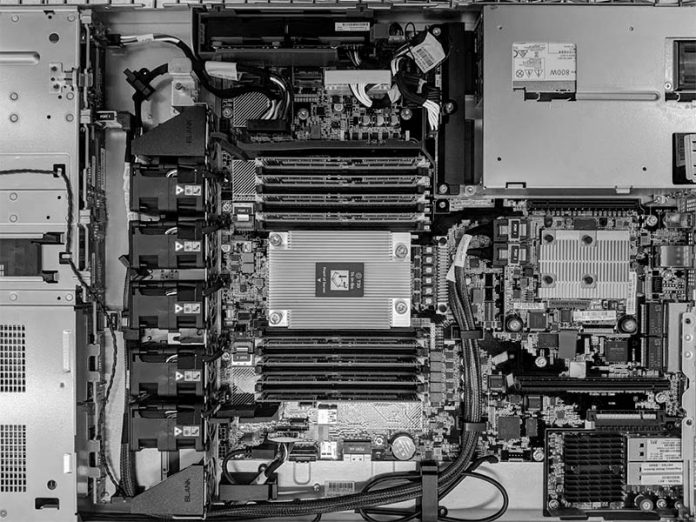

The HPE ProLiant DL325 Gen10 represents a new class of 1U platform. On one hand, it retains compatibility with other HPE platforms by using common FRUs and features such as drive trays, PSUs, SmartArray SAS, FlexLOM NICs, tool-less rails, and iLO 5. On the other hand, it now provides up to 64 cores and 128 threads and up to 2TB of memory in a single socket, making it a true dual-socket Xeon replacement. HPE consciously crafted the DL325 Gen10 to take advantage of the AMD EPYC platform and provide a real choice in the market.

The standout feature of the HPE ProLiant DL325 Gen10 is the single-socket AMD EPYC 7000 series processor. First-generation parts ranged from the 8-core AMD EPYC 7251 to the 32-core AMD EPYC 7601. With the second generation, that range goes from 8 cores up to 64-core SKUs such as the one in our test system (EPYC 7702). AMD has “P” series SKUs that provide lower pricing for single-socket-only parts. HPE sells many models with these SKUs, and they provide great value over Intel Xeon alternatives from a price-per-core perspective.

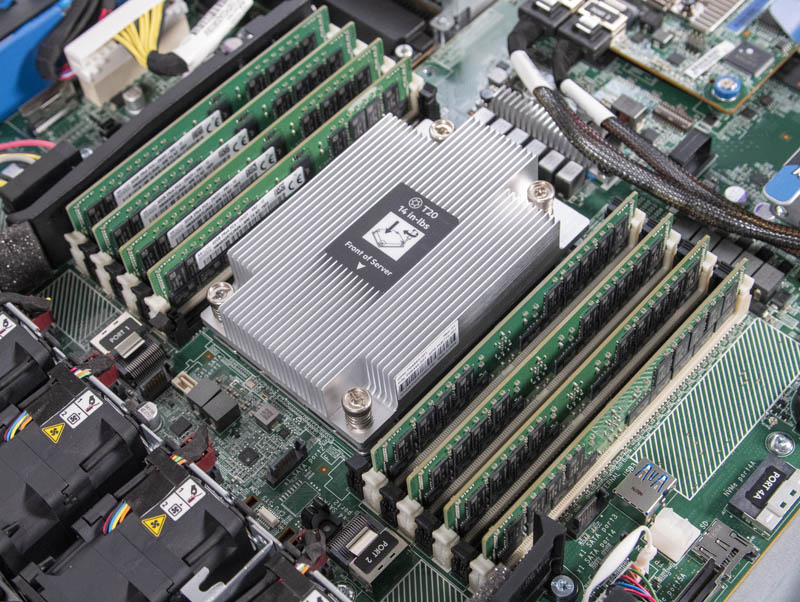

The CPU is flanked by 16x DDR4 slots that allow for eight-channel memory at up to DDR4-2933 with 2nd gen EPYC CPUs or DDR4-2666 with 1st gen parts. Practically, that means that a single AMD EPYC 7002 series CPU has more memory bandwidth than two Intel Xeon E5 V4 CPUs combined. Using 128GB LRDIMMs, one can hit up to 2TB of memory capacity in the server as well, or as much as two Intel Xeon Platinum 8280 CPUs.
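
The bandwidth and capacity figures above come down to simple arithmetic. Here is a minimal sketch, assuming the theoretical peak per channel is the transfer rate times 8 bytes (a 64-bit DDR4 channel), and assuming the Xeon E5 V4 comparison point is four channels of DDR4-2400 per socket, which is not stated in the text above. The function name is our own for illustration, and sustained real-world bandwidth will be lower than these peaks.

```python
def peak_bandwidth_gbs(channels: int, mt_per_s: int, sockets: int = 1) -> float:
    """Theoretical peak memory bandwidth in GB/s (decimal gigabytes)."""
    bytes_per_transfer = 8  # one 64-bit DDR4 channel moves 8 bytes per transfer
    return sockets * channels * mt_per_s * bytes_per_transfer / 1000

# Single-socket EPYC: eight channels at the speeds quoted in the review
epyc_7002 = peak_bandwidth_gbs(channels=8, mt_per_s=2933)
epyc_7001 = peak_bandwidth_gbs(channels=8, mt_per_s=2666)

# Assumption: dual Xeon E5 V4, four channels of DDR4-2400 per socket
dual_e5_v4 = peak_bandwidth_gbs(channels=4, mt_per_s=2400, sockets=2)

# Capacity: 16 slots populated with 128GB LRDIMMs
max_capacity_gb = 16 * 128

print(f"EPYC 7002 @ DDR4-2933: {epyc_7002:.1f} GB/s")   # 187.7 GB/s
print(f"EPYC 7001 @ DDR4-2666: {epyc_7001:.1f} GB/s")   # 170.6 GB/s
print(f"2x Xeon E5 V4 @ DDR4-2400: {dual_e5_v4:.1f} GB/s")  # 153.6 GB/s
print(f"Max capacity: {max_capacity_gb} GB")  # 2048 GB, i.e. 2TB
```

Under those assumptions, even a first-generation EPYC at DDR4-2666 edges out the dual Xeon E5 V4 figure on paper.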

The ProLiant DL325 Gen10 has particularly strong expansion capability for a 1U server. Although it is a PCIe Gen3 platform, it has dedicated expansion slots for an HPE SmartArray SAS HBA or RAID controller as well as an HPE FlexLOM networking module. You can see those features all installed above.

As standard, the server has two PCIe slots, an x8 and an x16, on the riser between the FlexLOM and the SmartArray. One can get another PCIe x16 slot with an optional riser that none of our systems came with. We ordered HPE Part Number P04849-B21 for the corresponding third slot riser. We have been told that one cannot use this riser with the SmartArray card installed.

All told, one can get up to three PCIe cards installed, while also installing high-speed networking in its dedicated slot, or two PCIe plus networking and storage, for a total of four devices. If you compare that to some of the other 1U EPYC offerings we have seen, generally, those have only 1-3 available slots.

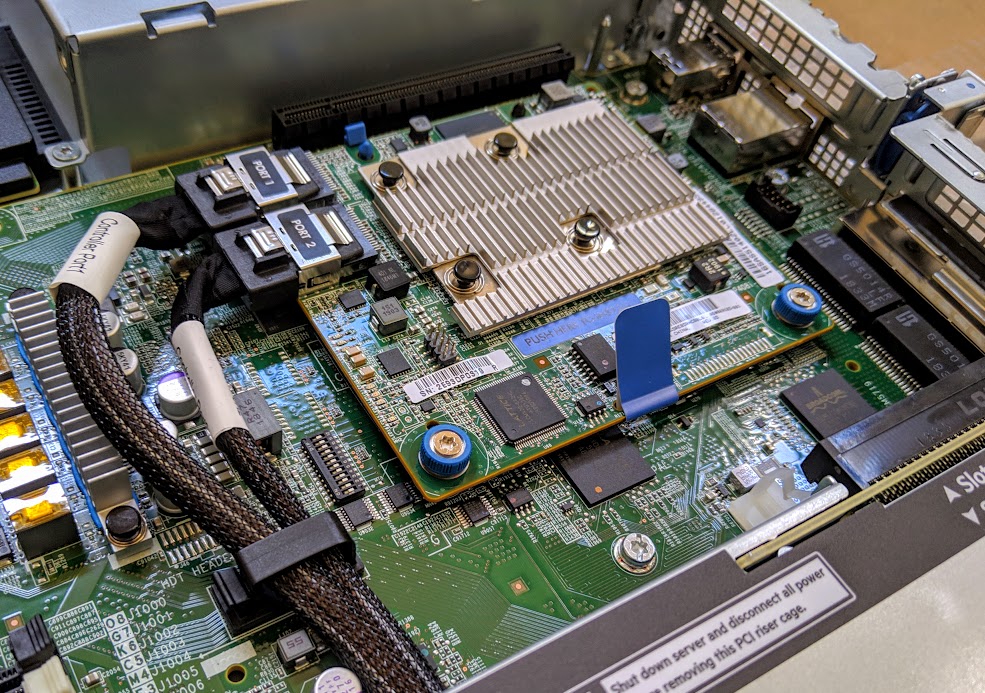

Storage is provided via the SmartArray option. We have had both E208i-a and P408i-a controllers in the DL325 Gen10s we have used. Generally, we suggest the P408i-a for its higher-end performance.

There is even a spot for the SmartArray’s power backup unit.

One quick note here is that you technically do not need a SmartArray adapter for SATA drives. If you are just running SATA SSDs, the onboard SATA will work. We moved the SFF-8087 cables from the SmartArray to the motherboard ports, and everything worked aside from HPE’s nice SmartArray management suite. For those who want SATA with software RAID or JBOD, this solution is lower power as well.

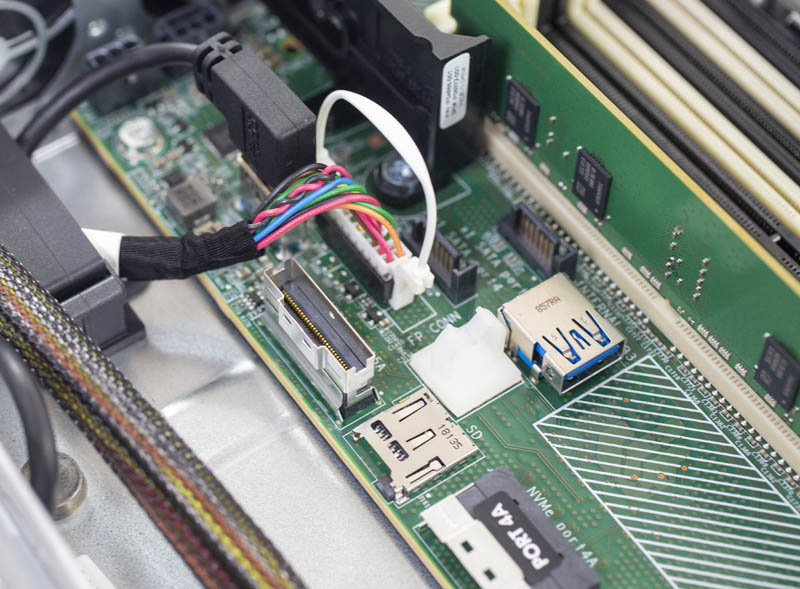

Inside the system, there are additional headers. HPE has headers for its NVMe option, as well as a few others. There are four SATA III 7-pin ports on the motherboard which may be needed for the 10x 2.5″ configurations. One can also find an internal USB 3.0 port for license key fobs or recovery media. There is even an SD card slot, which has become more common on servers.

HPE offers a number of DL325 Gen10 storage configurations. These include 10x 2.5″ SAS/SATA or 10x 2.5″ NVMe SSD configurations. There is an 8x 2.5″ plus optical bay option which is what most of our chassis are. One can even get a 4x 3.5″ hard drive configuration for storage. That configuration flexibility is great.

Rear I/O has a legacy VGA port, two USB 3.0 ports, and an out-of-band iLO management port. We are going to focus on the iLO 5 remote management capabilities later in this review.

Perhaps the most unusual feature is the quad-port 1GbE NIC. It is based on the Broadcom NetXtreme BCM5719, whose drivers are widely available, so the ports are compatible with many different operating systems. Having 4x 1GbE is likely overkill for many applications, but it offers a lot of built-in commodity networking for everything from provisioning to management networks.
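
If you want to confirm which driver each port is bound to on a running system, here is a minimal sketch for Linux only, assuming the standard sysfs layout where each physical interface exposes a `device/driver` symlink. The function name `nic_drivers` is our own for illustration. On Linux, BCM5719 ports are typically handled by the in-kernel `tg3` driver, though your output will depend on the NICs actually installed.

```python
import os

def nic_drivers(sysfs_net="/sys/class/net"):
    """Map each physical network interface to its kernel driver name."""
    drivers = {}
    if not os.path.isdir(sysfs_net):
        return drivers  # not Linux, or sysfs is not mounted
    for iface in sorted(os.listdir(sysfs_net)):
        # Virtual interfaces such as "lo" have no device/driver link and are skipped
        driver_link = os.path.join(sysfs_net, iface, "device", "driver")
        if os.path.islink(driver_link):
            drivers[iface] = os.path.basename(os.readlink(driver_link))
    return drivers

if __name__ == "__main__":
    for iface, driver in nic_drivers().items():
        print(f"{iface}: {driver}")
```

This only reads sysfs, so it needs no extra packages or root privileges.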

Update 2019-10-20: We posted “An Important HPE ProLiant DL325 Gen10 Change Since Our Review,” where we found that the BCM5719 is being de-populated on many models and replaced with a quad-port Intel i350-based solution that takes the FlexLOM slot, leaving no onboard 1GbE networking.

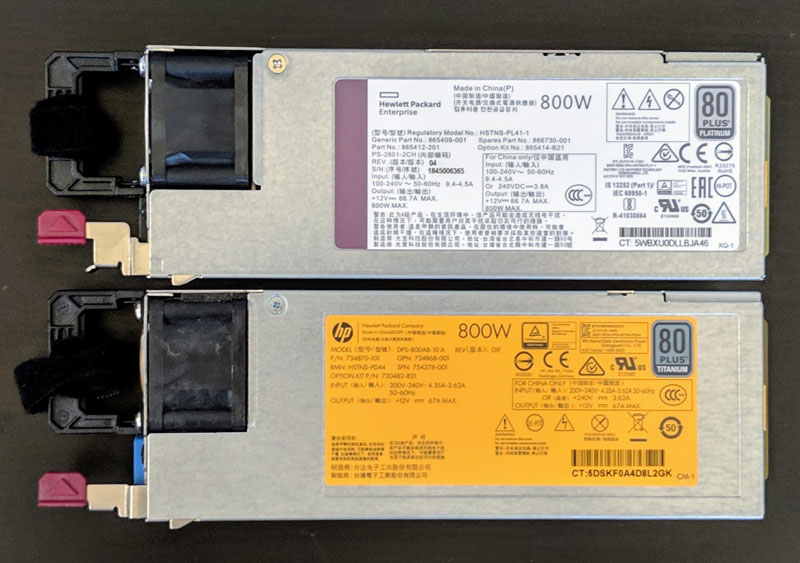

Power is provided via an 80Plus Platinum PSU. Our test unit came with redundant 500W units. HPE has several different configurations.

These power supplies work from 100-240V, which is a wider range than some of HP’s older 80Plus Titanium 800W power supplies. In our “Testing HPE ProLiant 800W 80Plus Platinum and Titanium PSUs” article, we found that even the legacy PSUs work in the DL325 Gen10. If you have on-site spare PSUs from your Gen9/Gen10 Xeon HPE ProLiants, HPE is using the same standard PSUs here.

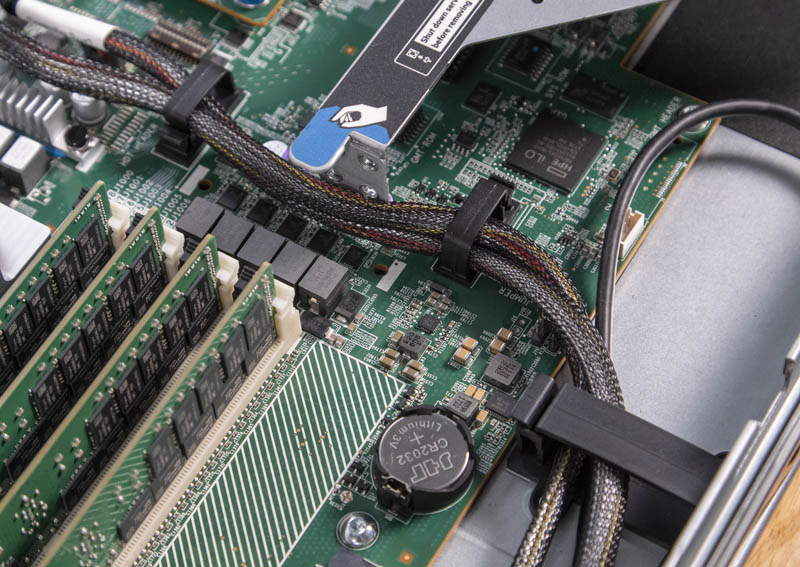

One other item we quickly wanted to note is cable management. Even though there are a lot of cost optimizations in this platform, HPE still has some distinctly ProLiant engineering here. There are a number of cable retention devices in the chassis as well as on the motherboard to ensure proper routing and airflow even after shipping and racking the servers. This is something that we have found missing from several white box offerings.

Overall, the HPE ProLiant DL325 Gen10 is well-built. One can see a few cost optimizations that HPE made on the platform, but they have worked very well for us in the lab.

Next, we are going to look at the system topology, iLO 5 management, and our test configuration before moving to performance and power consumption.

That’s a freaking thorough review if I’ve ever seen one. I wish you did the 10 by 2.5in NVMe U.2 config tho.

Any plans to review the DL385 G10?

I’m gonna pass this along to our team and we’ll probably get a test cluster going if our rep gives us a good price.

We bought a bunch of these based on reading some on the STH forums. Two things if you want to upgrade using non HPE parts –

Drives are ultra picky. Way more than Dell’s. We had some Toshibas that didn’t have HPE markings and parts but were the same drives and they caused 100% fan spin.

Memory has been a challenge. It is really picky and sometimes it’ll take 7 of 8 Samsung’s then not like the last one.

Sucks that HPE is actively pushing away this route

Thanks for the review. You wrote “We mentioned this previously, but the HPE ProLiant DL325 Gen10 we have is running DDR4-2933 memory speeds, not DDR4-3200. This has a slight impact on performance.” Based on your benchmarks, I cannot see how much this impact would be in a virtualization environment, when you compare the same CPU once with DDR4-2933 and once with DDR4-3200. So would it be wise to wait until HPE delivers a real 7002 EPYC system with a new board (PCIe Gen4), or would the difference be very minor?

To compare the memory bandwidth impact they would need to test with a different system that supported the higher clock, then test at 3200 before locking it down to 2933 in the BIOS to see how much of an impact there is.