HPE ProLiant DL325 Gen10 Power Consumption

For power consumption, we wanted to present two sets of numbers: one using the AMD EPYC 7702 64-core part without storage being used, and a maximum-effort run with both storage and networking being hammered along with the CPU. We thought it was important to give a range.

- Idle: 0.11kW

- STH 70% CPU Load: 0.29kW

- 100% Load: 0.31kW

- Maximum Recorded: 0.33kW
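To put these figures in operational terms, here is a minimal sketch that converts each measured draw into annual energy use. It assumes the server sits at a single load level around the clock for a full year, which is our simplification for illustration and not something we measured; real duty cycles will land somewhere between the idle and maximum figures.

```python
# Rough annual energy estimate from the measured power draw figures above.
# Assumption (not measured): the server holds each load level 24/7 for a year.

HOURS_PER_YEAR = 24 * 365  # 8760 hours, ignoring leap years

# Measured draw in kW, copied from the list above.
measured_kw = {
    "Idle": 0.11,
    "STH 70% CPU Load": 0.29,
    "100% Load": 0.31,
    "Maximum Recorded": 0.33,
}


def annual_kwh(power_kw: float, hours: float = HOURS_PER_YEAR) -> float:
    """Energy in kWh for a constant draw of power_kw over the given hours."""
    return power_kw * hours


annual = {label: annual_kwh(kw) for label, kw in measured_kw.items()}

for label, kwh in annual.items():
    print(f"{label}: {kwh:.0f} kWh/year")
```

Under that constant-load assumption, the range works out to roughly 960 kWh per year at idle and roughly 2,900 kWh per year at the maximum recorded draw. Multiply by your own per-kWh rate and facility overhead to get a cost figure.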

The configuration we used was one of the highest-end configurations this box supports. Choosing lower-power SSDs, skipping the 40GbE NIC and the RAID controller, and making similar hardware choices can save a lot of power.

We also use the HPE ProLiant DL325 Gen10s in our lab for Testing HPE ProLiant 800W 80Plus Platinum and Titanium PSUs. There is a benefit to the Titanium PSUs. We had another configuration with the 500W PSUs, which are more than sufficient for most configurations.

Note these results were taken using a 208V Schneider Electric / APC PDU at 17.5C and 71% RH. Our testing window shown here had a +/- 0.3C and +/- 2% RH variance.

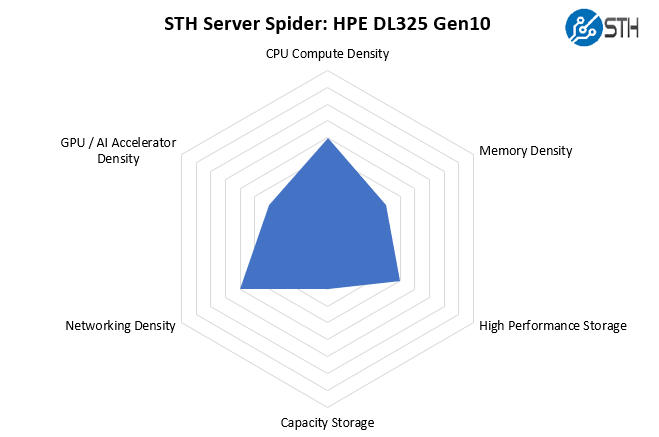

STH Server Spider: HPE ProLiant DL325 Gen10

In the second half of 2018, we introduced the STH Server Spider as a quick reference to where a server system’s aptitude lies. Our goal is to start giving a quick visual depiction of the types of parameters that a server is targeted at.

The HPE ProLiant DL325 Gen10 is designed to be a general-purpose 1U server. As such, it gets solid scores along a number of fronts and does not have an extreme focus on an area such as providing the most GPU compute or most 3.5″ storage per U. That is exactly what the DL325 Gen10 is designed to do, and in that role, it is a great fit.

Final Words

Our clusters of HPE ProLiant DL325 Gen10s with the AMD EPYC 7401P have worked very well for us. The opportunity to upgrade these machines to a second-generation platform is exciting as that effectively doubles the potential CPU power. At the same time, for those paying licensing fees per socket, the HPE ProLiant DL325 Gen10 offers a unique ability to rationalize sockets. We have seen 6-8 sockets, or 3-4 dual Intel Xeon E5-2630 V4 systems, replaced by a single 64-core EPYC part. That has an enormous TCO impact.
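The socket math behind that consolidation claim can be sketched as below. This is an illustration under stated assumptions, not a sizing tool: the 10 cores per Xeon E5-2630 V4 socket comes from Intel's published specifications rather than from our testing, `HYPOTHETICAL_LICENSE_COST` is a placeholder and not a real price, and raw core count is only a crude proxy for delivered performance.

```python
import math

EPYC_CORES = 64             # single-socket AMD EPYC 7702
XEON_CORES_PER_SOCKET = 10  # Intel Xeon E5-2630 V4, per Intel's published spec (assumption)
HYPOTHETICAL_LICENSE_COST = 1000.0  # placeholder per-socket fee, not a real price


def consolidation(old_sockets: int, license_per_socket: float) -> dict:
    """Compare a fleet of dual-socket Xeon systems against one single-socket EPYC."""
    return {
        "old_cores": old_sockets * XEON_CORES_PER_SOCKET,
        "old_systems": math.ceil(old_sockets / 2),  # dual-socket chassis
        "licenses_saved": old_sockets - 1,          # one socket remains after consolidation
        "license_savings": (old_sockets - 1) * license_per_socket,
    }


for sockets in (6, 8):
    result = consolidation(sockets, HYPOTHETICAL_LICENSE_COST)
    print(f"{sockets} sockets ({result['old_cores']} cores) vs {EPYC_CORES} cores: {result}")
```

With those assumptions, the 6-8 socket range covers 60 to 80 Xeon cores against 64 EPYC cores, and per-socket licensing drops from six to eight licenses down to one.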

Overall, we like the ProLiant DL325 Gen10 enough to have purchased more than a dozen of them for our internal uses. A lot of that has to do with the pricing we get from our HPE reseller on them. Make no mistake, HPE needs to get a 2nd generation platform with PCIe Gen4 and DDR4-3200 support into the market as companies like Dell EMC, Lenovo, and Supermicro have gone to market with theirs. At the same time, if you are not able to utilize PCIe Gen4, the DL325 Gen10 offers a great value in the market. That is really the bottom line with this platform. The HPE ProLiant DL325 Gen10 offers great capability, expandability, and value in the market, making it a top pick.

That’s a freaking thorough review if I’ve ever seen one. I wish you did the 10 by 2.5in NVMe U.2 config tho.

Any plans to review the DL385 G10?

I’m gonna pass this along to our team and we’ll probably get a test cluster going if our rep gives us a good price.

We bought a bunch of these based on reading some on the STH forums. Two things if you want to upgrade using non HPE parts –

Drives are ultra picky. Way more than Dell’s. We had some Toshibas that didn’t have HPE markings and parts but were the same drives and they caused 100% fan spin.

Memory has been a challenge. It is really picky and sometimes it’ll take 7 of 8 Samsung’s then not like the last one.

Sucks that HPE is actively pushing away this route

Thanks for the review. You wrote “We mentioned this previously, but the HPE ProLiant DL325 Gen10 we have is running DDR4-2933 memory speeds, not DDR4-3200. This has a slight impact on performance.” Based on your benchmarks, I cannot see how big this impact would be in a virtualization environment when you compare the same CPU once with DDR4-2933 and once with DDR4-3200. So would it be wise to wait until HPE delivers a real 7002 EPYC system with a new board (PCIe Gen4), or would the difference be very minor?

To compare the memory bandwidth impact, they would need to test with a different system that supports the higher clock: test at 3200 first, then lock it down to 2933 in the BIOS to see how much of an impact there is.