HPE ProLiant DL325 Gen10 Topology

With AMD EPYC 7001 servers, topology was a big deal. Each AMD EPYC 7001 chip had four NUMA nodes per socket, which had implications for applications. With the AMD EPYC 7002 series, by default, each socket is a single NUMA node.

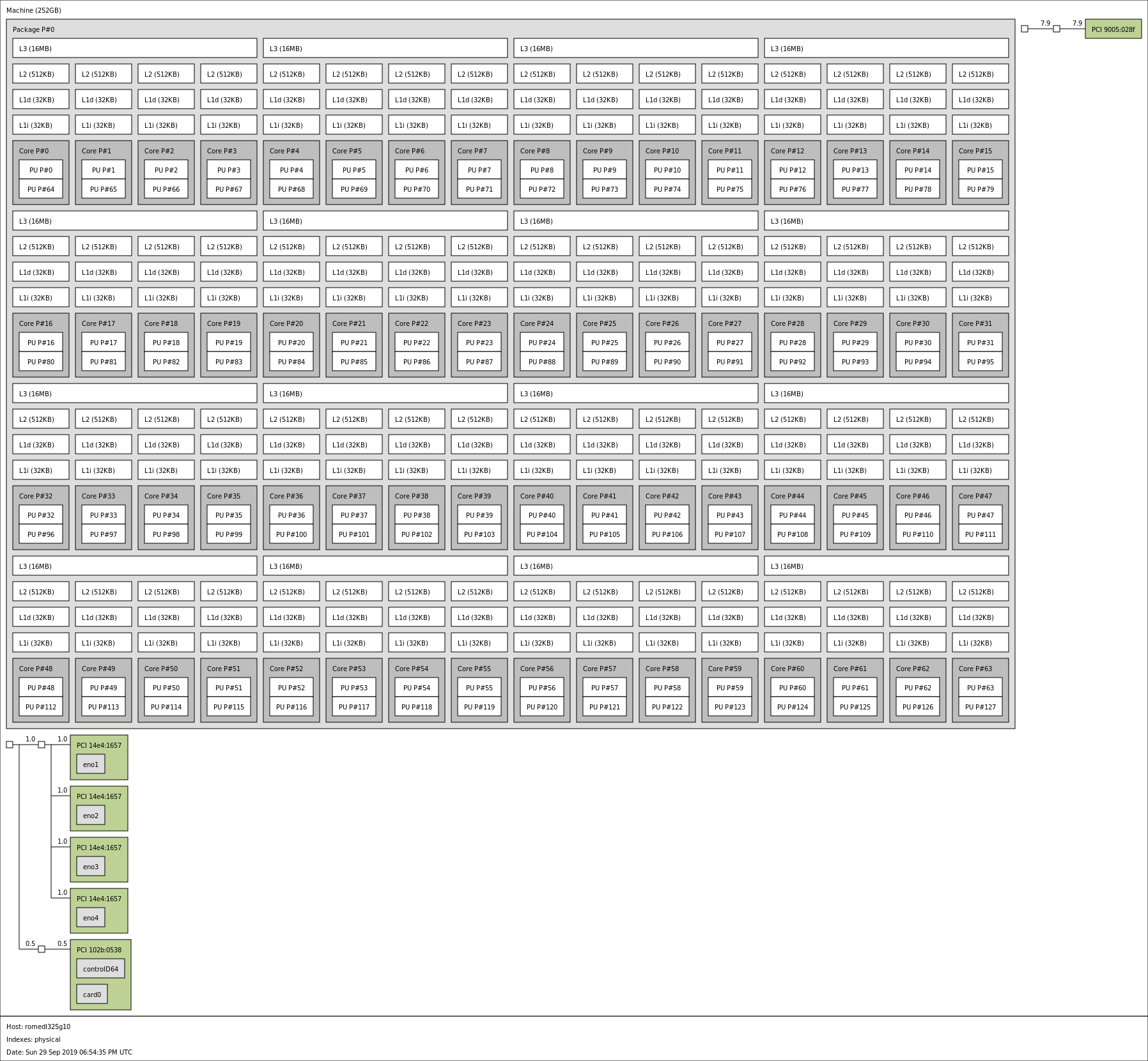

Here, we see a full 64 cores and 256MB of L3 cache in a single NUMA domain. We can also see the PCIe devices that directly attach to that NUMA node.

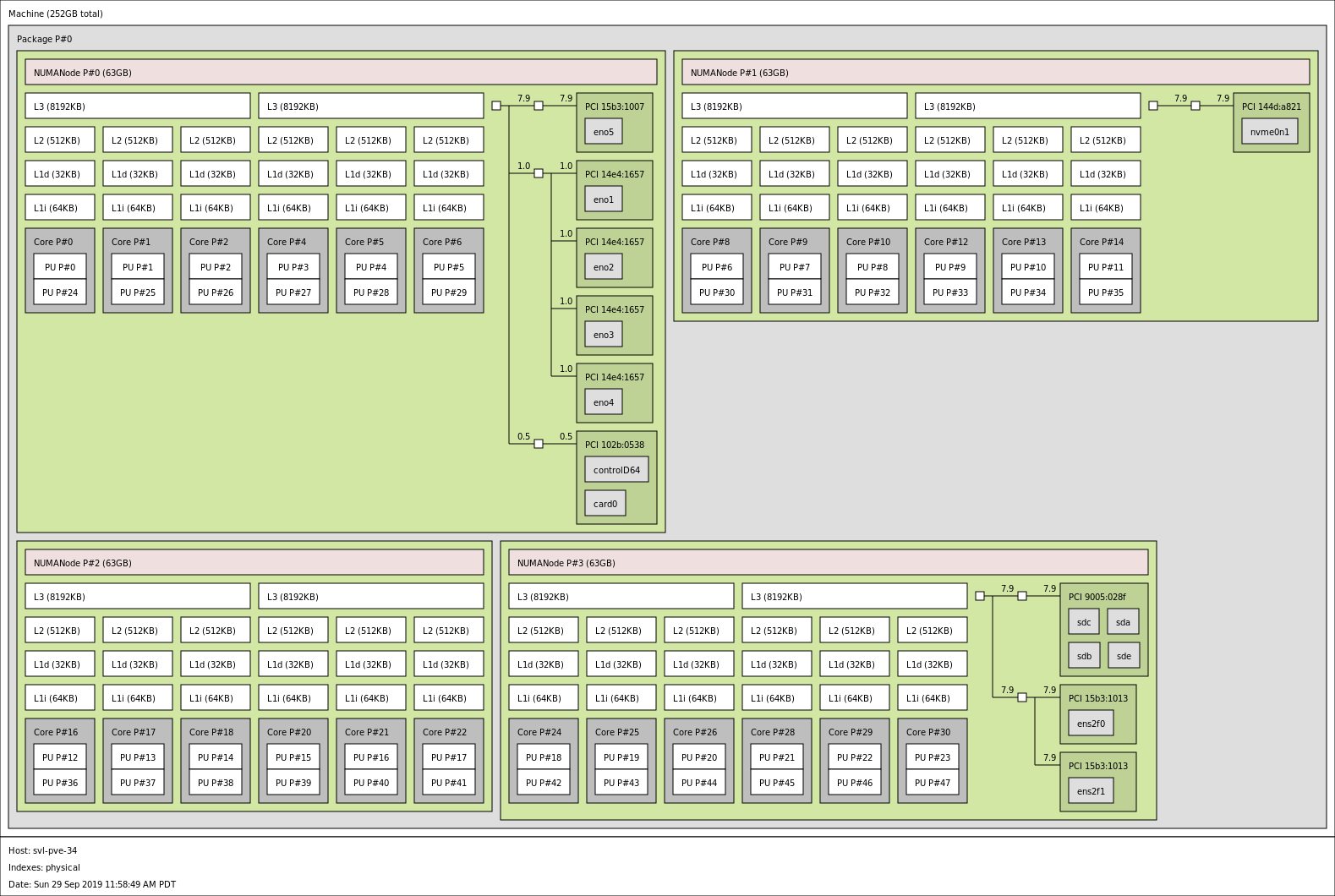
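For readers who want to check the NUMA layout on their own Linux host, here is a minimal sketch. It assumes the standard Linux sysfs layout under `/sys/devices/system/node`; the helper names `parse_cpulist` and `numa_nodes` are our own for illustration and are not part of any HPE or AMD tool.

```python
from __future__ import annotations

from pathlib import Path


def parse_cpulist(text: str) -> list[int]:
    """Expand a Linux cpulist string such as '0-3,8-11' into CPU ids."""
    cpus = []
    for part in text.strip().split(","):
        if not part:
            continue  # an empty cpulist (e.g. a memory-only node) yields no CPUs
        lo, _, hi = part.partition("-")
        cpus.extend(range(int(lo), int(hi or lo) + 1))
    return cpus


def numa_nodes(sysfs_root: str = "/sys/devices/system/node") -> dict[int, list[int]]:
    """Map NUMA node id -> logical CPU ids; returns {} if sysfs is unavailable."""
    nodes = {}
    root = Path(sysfs_root)
    if not root.is_dir():
        return nodes
    for node_dir in sorted(root.glob("node[0-9]*")):
        cpulist = node_dir / "cpulist"
        if cpulist.is_file():
            # Directory names look like 'node0', 'node1', ...
            nodes[int(node_dir.name[4:])] = parse_cpulist(cpulist.read_text())
    return nodes


if __name__ == "__main__":
    for node, cpus in numa_nodes().items():
        print(f"node{node}: {len(cpus)} logical CPUs")
```

On a default-configured single-socket AMD EPYC 7002 system one would expect a single node entry, while an AMD EPYC 7001 system would be expected to list four.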

As a quick contrast, here is the topology of one of our AMD EPYC 7401P HPE DL325 Gen10’s while we were testing the machine:

You can see the difference beyond the simple core count. The older generation of CPUs had four NUMA nodes and PCIe devices attached to multiple NUMA nodes. For many workloads, this is fine. For most of today’s workloads, we would recommend the new AMD EPYC 7002 Series as it results in a topology that is actually superior to even dual-socket Intel Xeon Scalable systems.

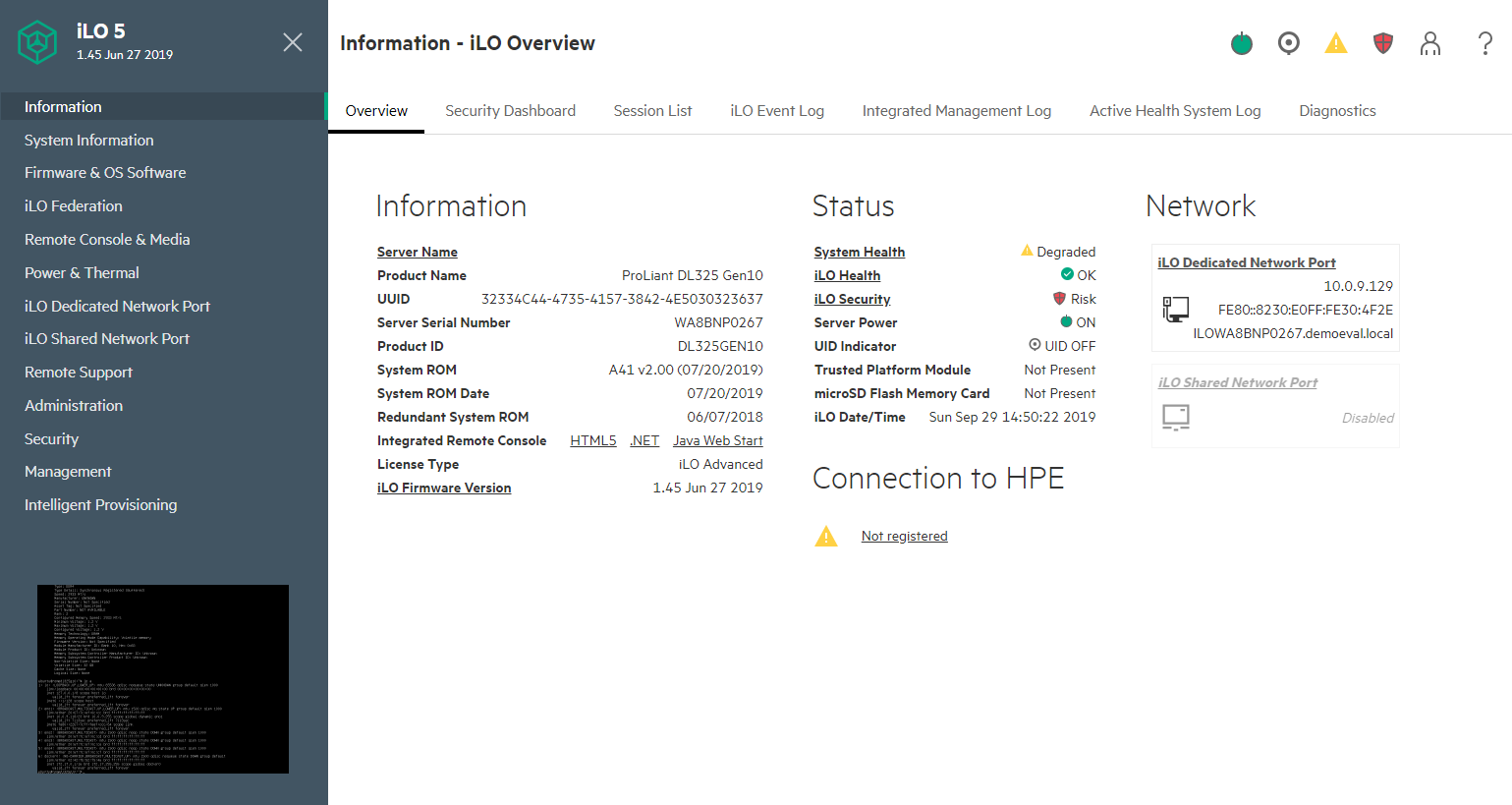

HPE ProLiant DL325 Gen10 Management Overview

HPE Integrated Lights-Out (iLO) management has been an industry staple for generations. Modern servers are meant to be deployed in data centers and rarely, if ever, visited by an administrator unless a part has failed. In its most basic form, iLO 5 allows administrative tasks such as editing BIOS and firmware settings, changing boot order and one-time boot settings, getting system inventory and event logs, and powering the server on and off.

Here is the blurb from HPE iLO 5 on iLO Advanced features:

Licensing iLO Advanced enables true Lights-Out Management by enabling many features:

- Authentication: Directory integration, Kerberos with Two-Factor authentication, CAC Smartcard Authentication

- Remote Console: Virtual KVM (Integrated Remote Console), Console capture, replay, and share, Text Console, Virtual Serial Port record and playback

- Virtual Media: Image file (.iso or .img), CD/DVD, floppy, USB-key, scripting, folder

- Power: Power-related reporting, power capping, thermal capping on some systems

- Scalable Manageability: Support for Federation Management commands to update firmware, control server power, use virtual media, and more

- Other: Email alerting, Remote syslog, and support for HPE Smart Array Secure Encryption

Visit the following website to learn more about iLO licensing and to download a free trial license key: www.hpe.com/info/ilo. (Source: HPE iLO 5)

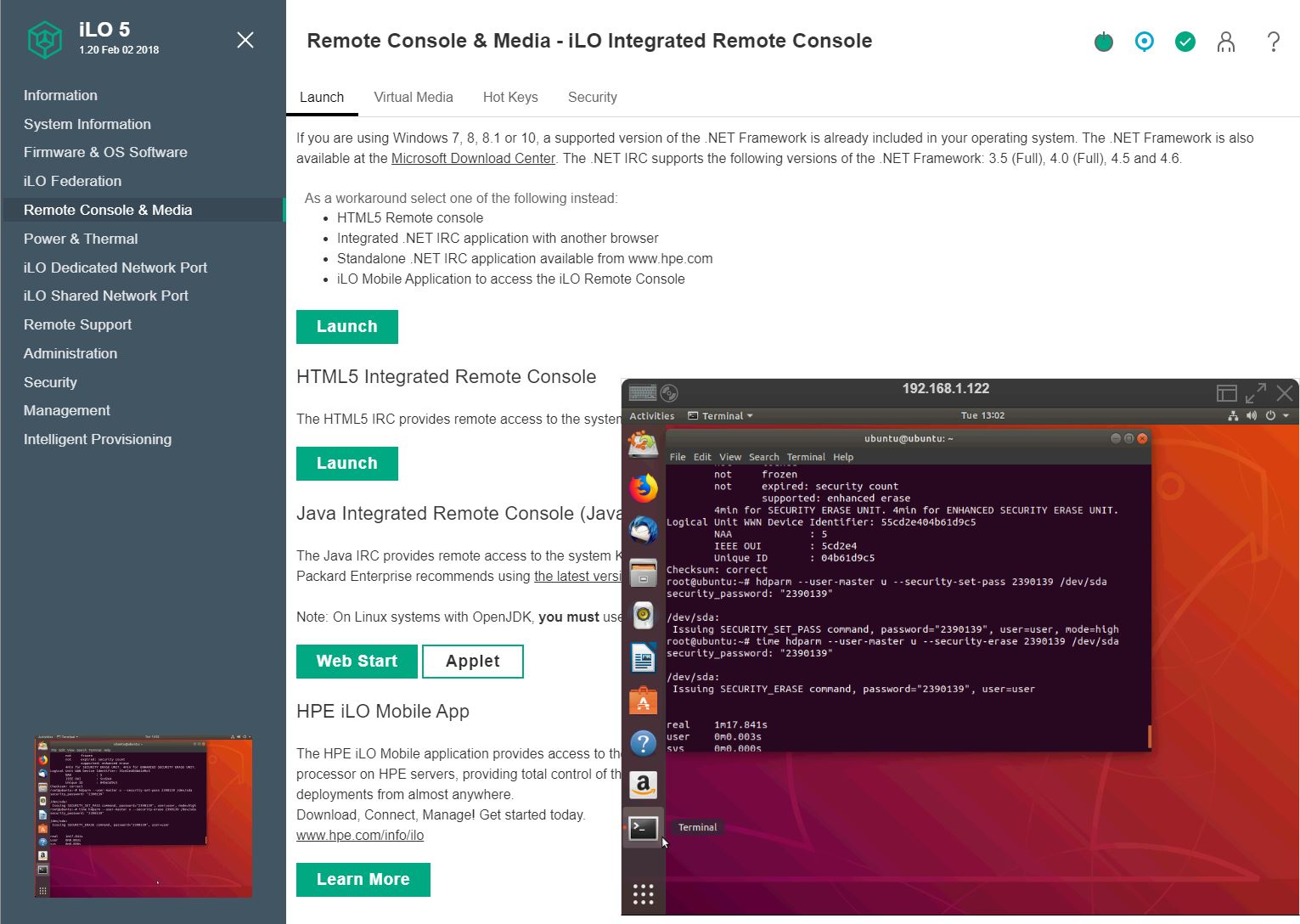

We made a video of the HPE ProLiant DL325 Gen10 management and its iLO 5. We used iLO 5 Standard as the baseline and iLO Advanced on another machine. Here is the video tour:

Without iLO Advanced, one can do some fairly basic configuration. With iLO Advanced one gets not just what we see as industry-standard features like iKVM and media, but also HPE’s advanced integrations and scalable management features. Every server that passes through the STH lab ends up getting the iLO Advanced functionality license.

Perhaps the feature that is going to be most important to HPE ProLiant DL325 Gen10 buyers is iKVM. This is the feature that allows for remote terminal access and remote media mounting. Without the iLO Advanced license, full iKVM is conspicuously absent on the HPE ProLiant DL325 Gen10: one can use iKVM during POST and BIOS setup, but not once the server boots to the OS. We see this as an almost mandatory feature. HPE’s white box competition gives iKVM functionality for free in their base offerings.

HPE’s management solution is designed to manage large clusters of servers, with more automation and more information exposed to administrators. HPE iLO 5 is significantly more advanced than what a low-cost white box server offers in this space. HPE also has a silicon root of trust to help ensure BMC firmware and hardware security, a topic that is coming up more often. For organizations managing large numbers of servers under a single pane of glass, HPE iLO 5 may work well.

Smaller edge deployments, say 1-10 servers, often have administrators that just want iKVM. On a tight budget, an iLO Advanced license on the HPE ProLiant DL325 Gen10 means a small customer pays a hefty premium to get iKVM, and that premium may come at the expense of adding hardware to the configuration.

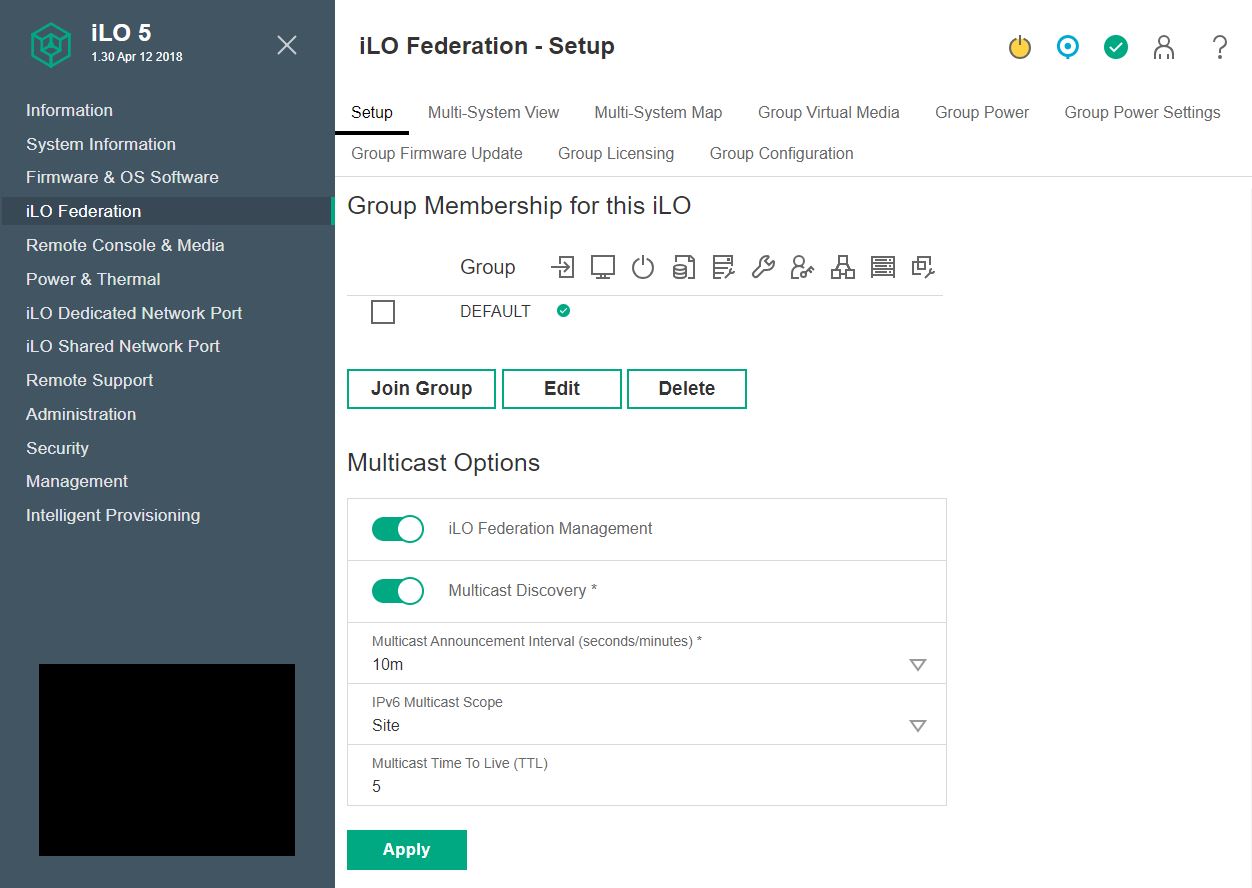

HPE iLO 5 has some really great features. You can see how the solution is designed for managing clusters of servers even without HPE Insight with features like iLO Federation. This is the type of feature that white-box vendors, and even other large server vendors, do not have.

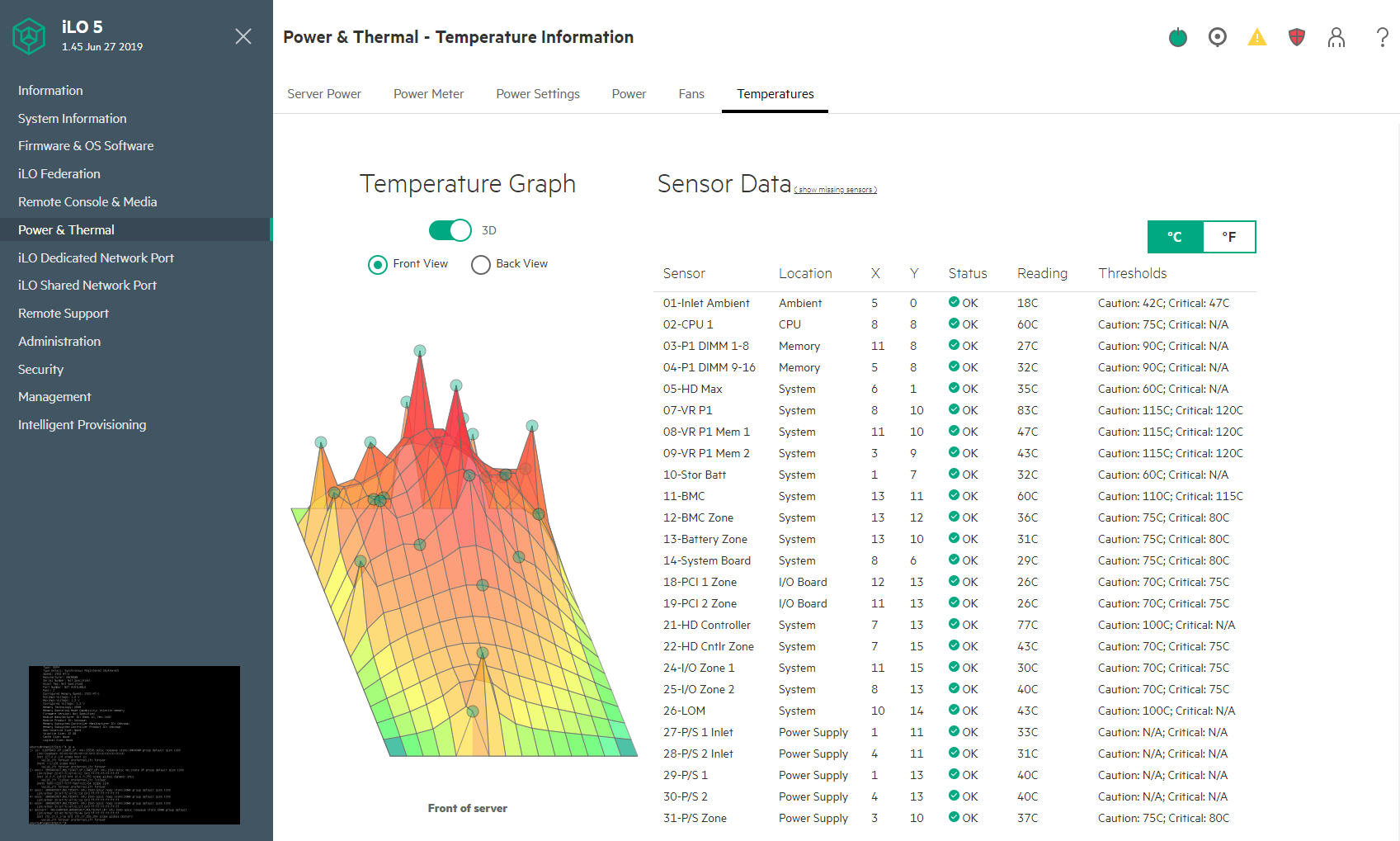

There is also a certain “cool” factor to HPE iLO 5’s interface. Here is a great example of the 3D temperature graph that can help you diagnose hot spots in the server.

HPE can also display heat maps in 2D fashion and below this view, there is a list of temperature monitors that are labeled with their locations. Most management solutions have a table with temperature sensor readings, but few have the 3D temperature graph eye candy.

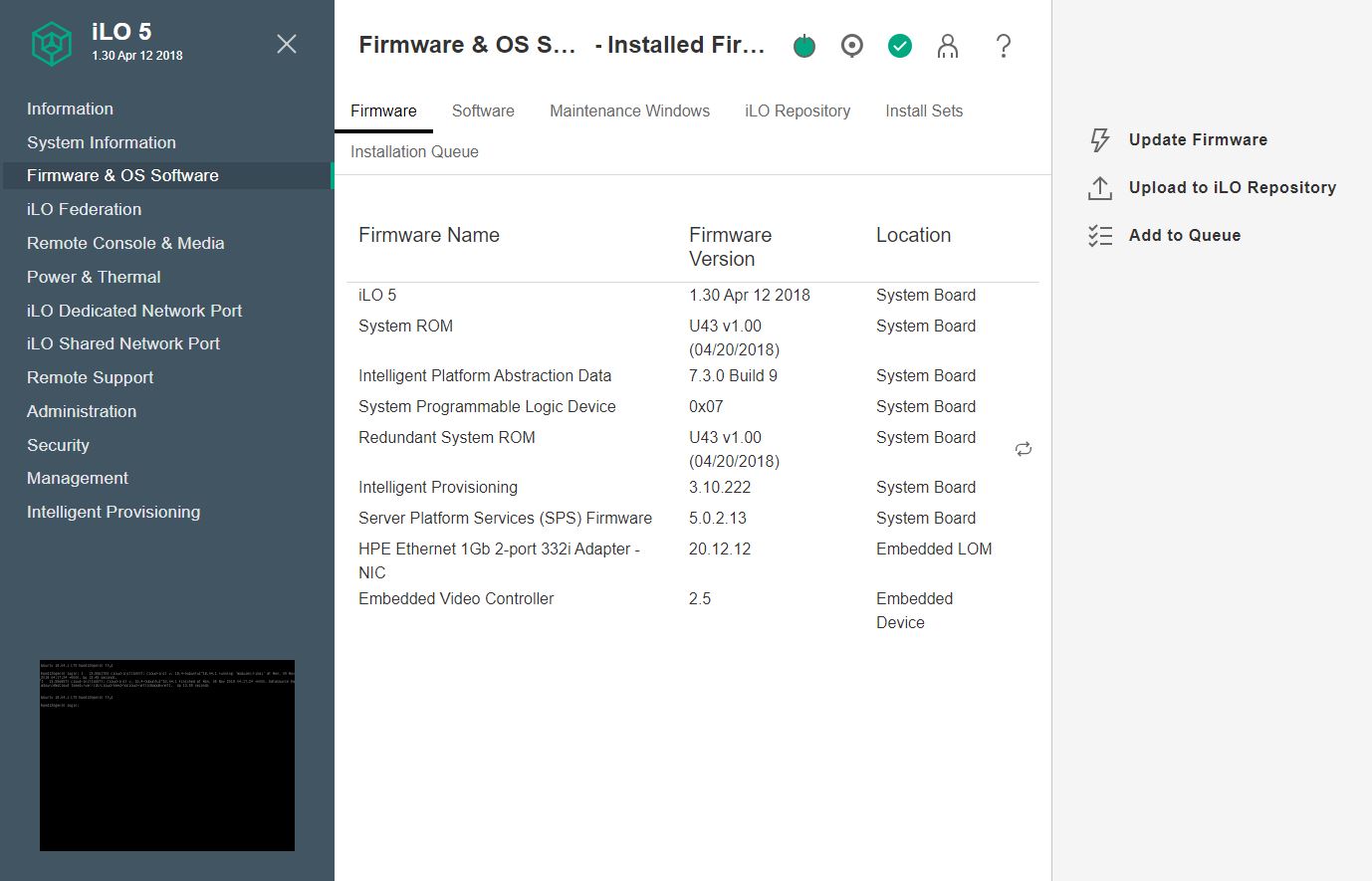

HPE iLO 5 has other features that are extremely useful for managing large numbers of servers. A simple example of this is that iLO 5 can show firmware versions not just of the UEFI firmware and BMC firmware, but it can show the firmware revisions of the complete system. That allows one to easily diagnose which servers in a large cluster need to be updated. Also, HPE has an easy manner to update this firmware from the management interface.

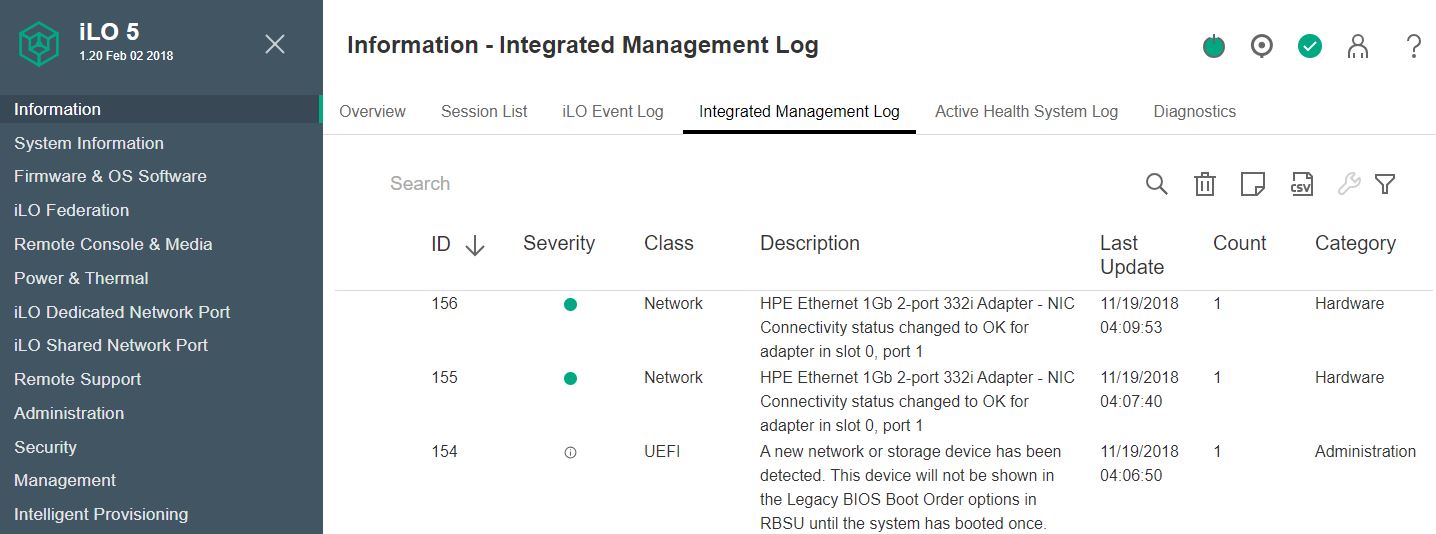
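To illustrate the idea of spotting which servers in a cluster need firmware updates, here is a minimal sketch of the comparison logic. It is not how iLO 5 implements this, and it does not call any HPE API. The function name `outdated_components`, the server names, the component names, and the version strings are all hypothetical placeholders.

```python
def outdated_components(inventory, baseline):
    """Return {server: {component: (installed, wanted)}} for every mismatch.

    inventory: {server_name: {component_name: installed_version}}
    baseline:  {component_name: wanted_version}
    """
    report = {}
    for server, components in inventory.items():
        stale = {
            name: (components.get(name), wanted)
            for name, wanted in baseline.items()
            if components.get(name) != wanted
        }
        if stale:
            report[server] = stale
    return report


# Hypothetical inventory, as one might export from a management interface.
baseline = {"system_rom": "v2", "bmc": "v5", "storage_controller": "v3"}
inventory = {
    "node-a": {"system_rom": "v2", "bmc": "v5", "storage_controller": "v3"},
    "node-b": {"system_rom": "v1", "bmc": "v5", "storage_controller": "v3"},
    "node-c": {"system_rom": "v2", "bmc": "v4"},  # controller not reported
}

for server, stale in outdated_components(inventory, baseline).items():
    for name, (installed, wanted) in stale.items():
        print(f"{server}: {name} is {installed}, expected {wanted}")
```

A component missing from a server's inventory is reported with an installed version of `None`, so gaps in reporting are surfaced rather than silently skipped.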

As one may expect, HPE iLO 5 provides standard features such as system logging.

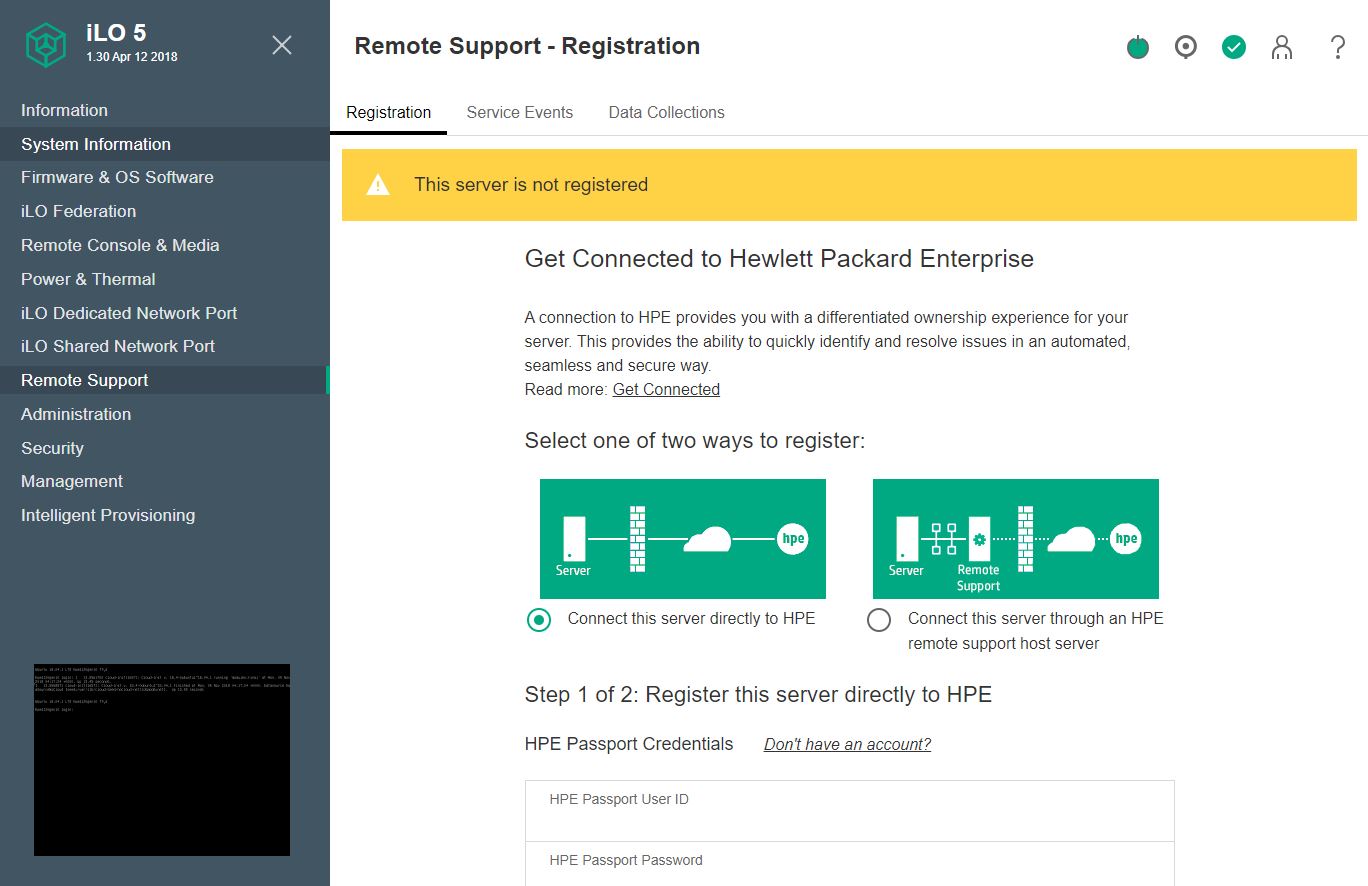

Another advanced feature is that HPE Remote Support integration allows automated support for hardware failure and replacement. This is a feature white box vendors do not have, and only some of the large server vendors deliver.

Overall, we like HPE iLO 5. If the iKVM functionality were included with the server, or were a nominal upgrade ($20 or so), then it would be superior to white-box implementations in just about every area. Certainly, iLO Advanced goes beyond standard remote management implementations, and HPE is trying to capture some of that value.

HPE ProLiant DL325 Gen10 Test Configuration

Our test configuration is as follows:

- Server: HPE ProLiant DL325 Gen10

- CPU: AMD EPYC 7702 64 core / 128 thread

- RAM: 8x 32GB DDR4-2933

- SSDs: 6x 1.6TB Toshiba SAS3

- SAS Controller: HPE SmartArray P408i-a

- NIC: HPE FlexLOM Mellanox ConnectX-3 Pro VPI 40GbE

- PSU: Redundant 2x HPE 500W 80Plus Platinum PSUs

A quick note about this test configuration. Since this is a first-generation platform with an AMD EPYC 7002 second-generation CPU, it can only handle up to DDR4-2933 speeds despite the AMD EPYC 7702 being able to go up to DDR4-3200. We expect that feature on HPE’s second-gen systems.
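As a rough back-of-the-envelope check on what the DDR4-2933 limit means, here is a short sketch of theoretical peak memory bandwidth. It assumes all eight memory channels of the socket are populated (as with the 8x 32GB configuration above) and a 64-bit (8-byte) data path per channel. These are theoretical peaks, not measured results, and the function name `peak_bandwidth_gbs` is our own.

```python
def peak_bandwidth_gbs(mt_per_s, channels=8, bytes_per_transfer=8):
    """Theoretical peak bandwidth in GB/s for a given DDR transfer rate (MT/s)."""
    return mt_per_s * 1e6 * bytes_per_transfer * channels / 1e9


bw_2933 = peak_bandwidth_gbs(2933)
bw_3200 = peak_bandwidth_gbs(3200)
deficit_pct = (1 - bw_2933 / bw_3200) * 100

print(f"DDR4-2933: {bw_2933:.1f} GB/s")
print(f"DDR4-3200: {bw_3200:.1f} GB/s")
print(f"Theoretical deficit at 2933: {deficit_pct:.1f}%")
```

Under these assumptions the gap in theoretical peak bandwidth is roughly 8%. Real application impact depends on how memory-bound the workload is and is typically smaller than the theoretical gap.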

We also wanted to note that our readers configuring a similar system should get an AMD EPYC 7702P CPU. AMD has a lower-cost CPU option designed specifically for this type of single-socket system. It runs at the same speeds as the 7702 but costs less.

Next, we are going to look at the DL325’s performance, followed by power consumption and our final words.

That’s a freaking thorough review if I’ve ever seen one. I wish you did the 10 by 2.5in NVMe U.2 config tho.

Any plans to review the DL385 G10?

I’m gonna pass this along to our team and we’ll probably get a test cluster going if our rep gives us a good price.

We bought a bunch of these based on reading some on the STH forums. Two things if you want to upgrade using non HPE parts –

Drives are ultra picky. Way more than Dell’s. We had some Toshibas that didn’t have HPE markings and parts but were the same drives and they caused 100% fan spin.

Memory has been a challenge. It is really picky and sometimes it’ll take 7 of 8 Samsung’s then not like the last one.

Sucks that HPE is actively pushing away this route

Thanks for the review. You wrote “We mentioned this previously, but the HPE ProLiant DL325 Gen10 we have is running DDR4-2933 memory speeds, not DDR4-3200. This has a slight impact on performance.” Based on your benchmarks, I cannot see how much this impact would be in a virtualization environment, when you compare the same CPU once with DDR4-2933 and once with DDR4-3200. So would it be wise to wait until HPE delivers a real 7002 Epyc system with a new board (PCIe-Gen4) or would the difference be very minor?

To compare the memory bandwidth impact they would need to test with a different system that supported the higher clock, then test at 3200 before locking it down to 2933 in the BIOS to see how much of an impact there is.