Gigabyte G242-Z10 Storage Performance

For this exercise, we are using our legacy Linux-Bench scripts, which give us the cross-platform “least common denominator” results we have been using for years, as well as several results from our updated Linux-Bench2 scripts. Starting with our 2nd Generation Intel Xeon Scalable benchmarks, we are adding a number of our workload testing features to the mix as the next evolution of our platform.

At this point, our benchmarking sessions take days to run and we are generating well over a thousand data points. We are also running workloads for software companies that want to see how their software works on the latest hardware. As a result, this is a small sample of the data we are collecting and can share publicly. Our position is always that we are happy to provide some free data but we also have services to let companies run their own workloads in our lab, such as with our DemoEval service. What we do provide is an extremely controlled environment where we know every step is exactly the same and each run is done in a real-world data center, not a test bench.

We are going to show off a few results, and highlight a number of interesting data points in this article.

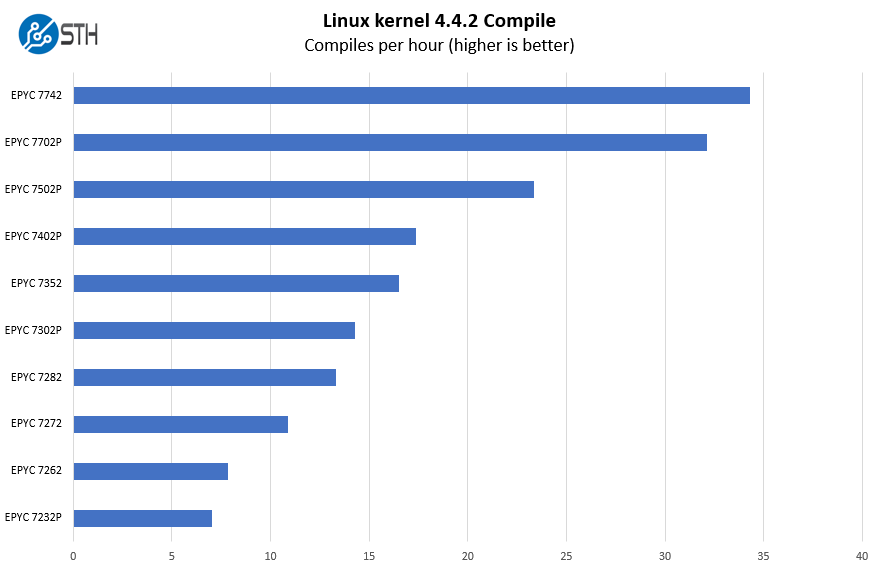

Python Linux 4.4.2 Kernel Compile Benchmark

This is one of the most requested benchmarks for STH over the past few years. The task is simple: we take the Linux 4.4.2 kernel from kernel.org along with a standard configuration file and build the standard auto-generated configuration utilizing every thread in the system. We are expressing results in terms of compiles per hour to make the results easier to read:

Part of our process is to try different processors to give our readers a sense of configuration options. We tested up to the $6,950 AMD EPYC 7742. Technically this system is supposed to support 280W TDP SKUs like the AMD EPYC 7H12 for the HPC market, but we do not have that SKU to test as it is reserved for private HPC deals.
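For readers who want to reproduce the compiles-per-hour metric used in this section, here is a minimal sketch of the conversion from a measured wall-clock build time. The function name and the 90-second example time are assumptions for illustration only; they are not part of Linux-Bench and not a measured result.

```python
def compiles_per_hour(compile_seconds: float) -> float:
    """Convert one kernel build's wall-clock time (seconds) to compiles per hour."""
    if compile_seconds <= 0:
        raise ValueError("compile time must be positive")
    return 3600.0 / compile_seconds


# Hypothetical build time, chosen only to show the arithmetic.
example_seconds = 90.0
print(f"{compiles_per_hour(example_seconds):.1f} compiles/hour")
```

A higher number is better with this metric, which is why it is easier to read on a chart than raw seconds per build.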

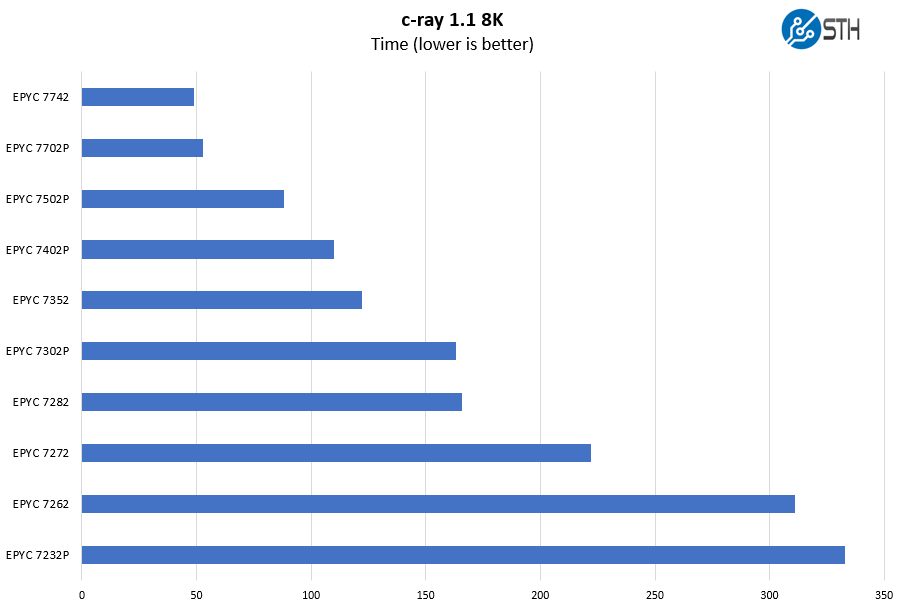

c-ray 1.1 Performance

We have been using c-ray for our performance testing for years now. It is a ray tracing benchmark that is extremely popular to show differences in processors under multi-threaded workloads. We are going to use our 8K results which work well at this end of the performance spectrum.

With the AMD EPYC 7002 series, there are special “P” SKUs that range from 8 to 64 cores. Some of the lower-end SKUs offer a lot of performance but are optimized for 4-channel memory configurations. C-ray rendering does not show much impact, but many GPU tasks will. We discuss that more in AMD EPYC 7002 Rome CPUs with Half Memory Bandwidth.

We suggest not using these lower-end SKUs and starting your SKU deliberation at the AMD EPYC 7302P level or higher for this server.

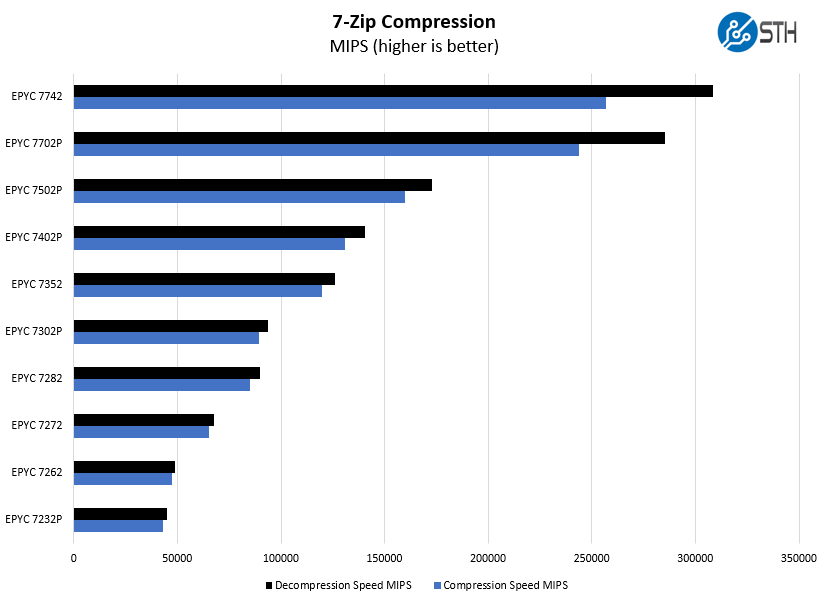

7-zip Compression Performance

7-zip is a widely used compression/decompression program that works cross-platform. We started using the program during our early days with Windows testing. It is now part of Linux-Bench.

For a system like the Gigabyte G242-Z10, we see the AMD EPYC 7402P as perhaps the lower end of what we think users will want to configure, as it offers an outstanding price/performance ratio at 24 cores.

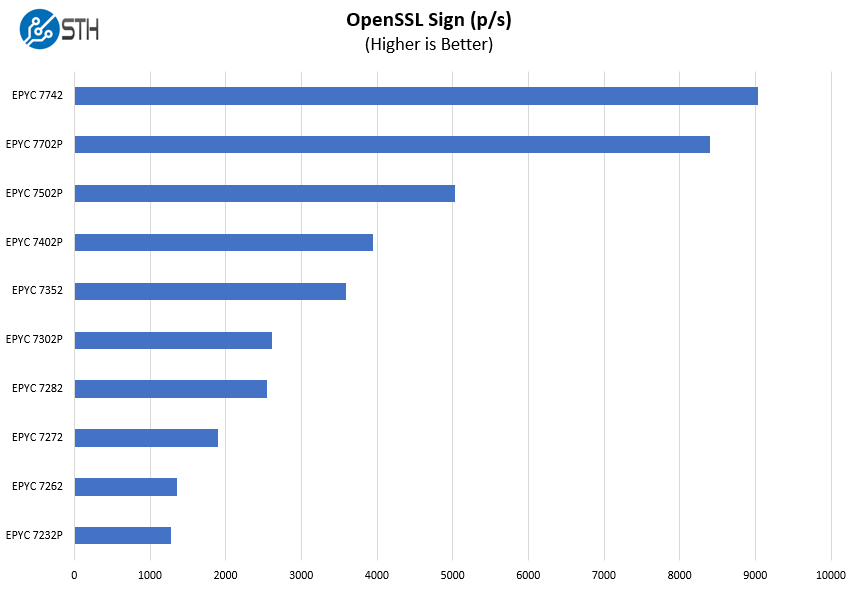

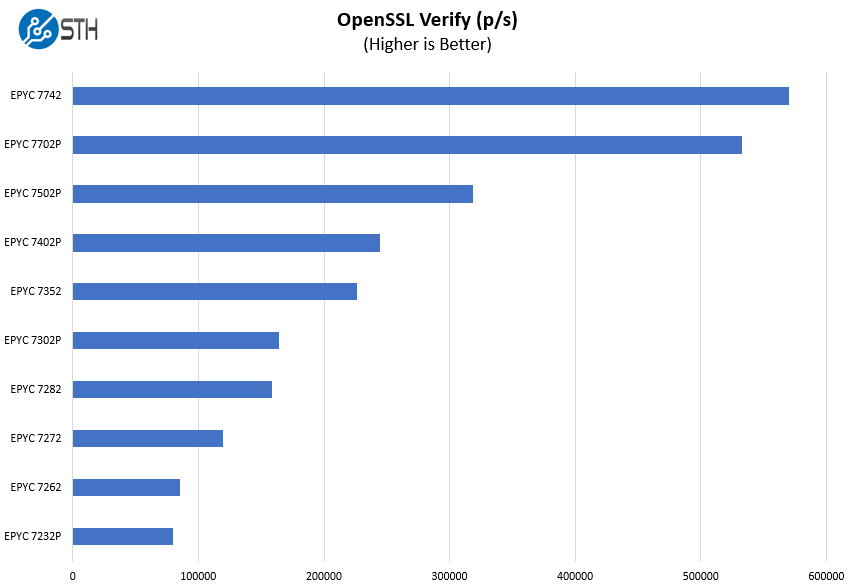

OpenSSL Performance

OpenSSL is widely used to secure communications between servers. This is an important protocol in many server stacks. We first look at our sign tests:

Here are the verify results:

One other area to consider is that a chip like the AMD EPYC 7702P can offer 64 cores of performance at a lower TDP in this GPU server. That gives 16 CPU cores per GPU, all in the same socket, which some applications may find attractive.

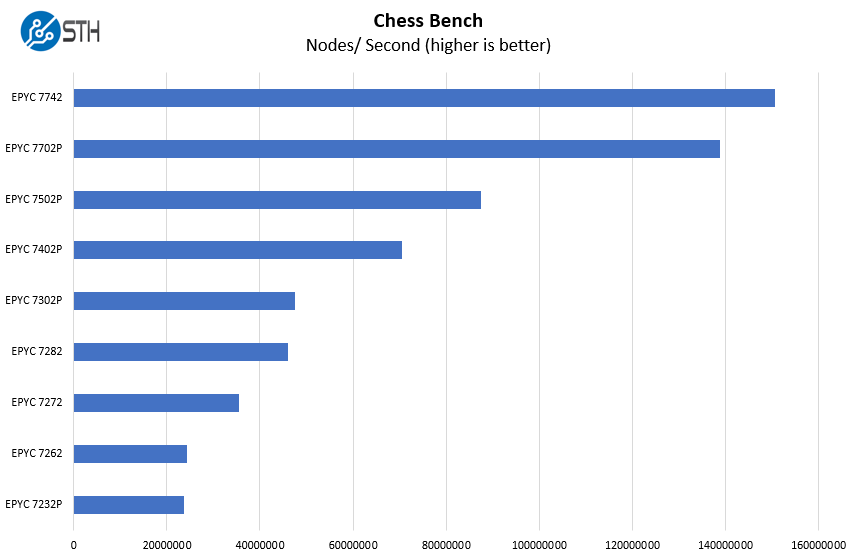

Chess Benchmarking

Chess is an interesting use case since it has almost unlimited complexity. Over the years, we have received a number of requests to bring back chess benchmarking. We have been profiling systems and are ready to start sharing results:

The other option we can recommend is the AMD EPYC 7502P. Costs are higher than with the EPYC 7402P, but the advantage is an 8:1 core-to-GPU ratio, which can help in data preparation tasks. Also, if you are running virtual machines for VDI applications, having a higher core count is helpful. Here, the 32-core part is sized exactly for the new VMware 32-core per socket license pack we discussed in Licenseageddon Rages as VMware Overhauls Per-Socket Licensing.
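The core-to-GPU ratios mentioned for the single-socket SKUs in this review are simple divisions, sketched below. The core counts come from the text above, and the GPU count of four follows from the 64-core, 16-cores-per-GPU figure. The helper name is an assumption for illustration.

```python
GPU_COUNT = 4  # implied by 64 cores at 16 cores per GPU

# Core counts as stated in this review.
cpu_cores = {
    "AMD EPYC 7402P": 24,
    "AMD EPYC 7502P": 32,
    "AMD EPYC 7702P": 64,
}


def cores_per_gpu(cores: int, gpus: int = GPU_COUNT) -> float:
    """Return how many CPU cores are available per installed GPU."""
    return cores / gpus


for sku, cores in cpu_cores.items():
    print(f"{sku}: {cores_per_gpu(cores):g} cores per GPU")
```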

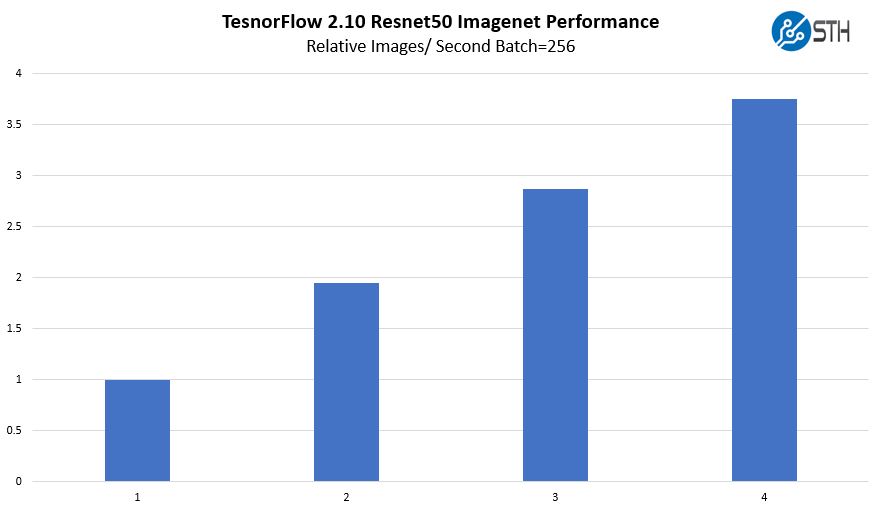

Gigabyte G242-Z10 TensorFlow Resnet-50 GPU Scaling

We wanted to give some sense of performance using one of the TensorFlow workloads that we utilized. Here, we are increasing the number of GPUs used while training Resnet50 on Imagenet data.

We were only able to borrow four Tesla V100s for an afternoon to use in this system, but the performance was acceptable with reasonable scaling between GPUs.
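One common way to put a number on "reasonable scaling" is scaling efficiency: throughput at N GPUs divided by N times the single-GPU throughput. The sketch below shows that calculation. The function name is hypothetical, and the images-per-second values are made-up placeholders, not our measured ResNet-50 results.

```python
from typing import Dict


def scaling_efficiency(throughput_by_gpus: Dict[int, float]) -> Dict[int, float]:
    """Map GPU count -> efficiency relative to perfect linear scaling from 1 GPU."""
    base = throughput_by_gpus[1]  # single-GPU throughput is the reference point
    return {
        gpus: throughput / (gpus * base)
        for gpus, throughput in sorted(throughput_by_gpus.items())
    }


# Placeholder images/sec values, for illustration only (not measurements).
placeholder = {1: 100.0, 2: 190.0, 4: 360.0}
for gpus, eff in scaling_efficiency(placeholder).items():
    print(f"{gpus} GPU(s): {eff:.0%} of linear scaling")
```

An efficiency of 1.0 means perfectly linear scaling; values below that show how much throughput is lost to communication and data-feeding overhead as GPUs are added.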

For other GPU options, we have more complete reviews such as our PNY GeForce RTX 2080 Ti Blower GPU Review and the NVIDIA Tesla T4 AI Inferencing GPU Benchmarks and Review. Performance followed those reviews; however, we did not want to add another 80 charts to this review.

Next, we are going to look at power consumption, our STH Server Spider, and give our final words.

Thanks for being at least a little critical in your recent reviews like this one. I feel like too many sites say everything’s perfect. Here you’re pushing and finding covered labels and single width GPU cooling. Keep up the work STH

As it's using less than the total power of a single PSU, does this then mean you can actually have true redundancy set for the PSUs?

Also, I could have sworn that I saw pictures of this server model (maybe from a different manufacturer) that had non-blower type GTX/RTX desktop GPUs installed (maybe because of the large space above the GPUs). I don't suppose you tried any of these (as they run higher clock speeds)?

https://www.asrockrack.com/photo/2U4G-EPYC-2T-1(L).jpg

https://www.asrockrack.com/general/productdetail.asp?Model=2U4G-EPYC-2T#Specifications

I am looking forward to seeing similar servers with PCIe 4.0!

Been waiting for a server like this ever since Rome was released. Gonna see about getting one for our office!

For my application I need display output from one of the GPUs. Can't even the rear GPU be set up so that I can plug in a display?

To Vlad: check the G482-Z51 (rev. 100)

Has anyone tried using Quadro or GeForce GPUs with this server? They aren’t on the QVL, but they are explicitly described in the review and can be specc’d out on some system builders’ websites.