The AMD EPYC 7502P offers a value proposition that will resonate with many of our readers. Although it has only half the core count of the AMD EPYC 7702P, it may be the better value for many scenarios. To be clear, with 32 cores, 64 threads, 8-channel DDR4-3200 memory supporting up to 4TB of capacity, and 128 lanes of PCIe Gen4, it is a thoroughly high-end part in 2019. From a competitive standpoint, Intel’s best competing 28-core products, the Intel Xeon W-3275 and Intel Xeon Platinum 8280, lack the core counts, cache, PCIe expandability, and memory capacity to compete, even at list prices roughly 2x-4x higher. Although we tend to focus on the flashy high-end parts, the AMD EPYC 7302P, EPYC 7402P, and this EPYC 7502P are where AMD is going to make headway in its quest to make single-socket servers more relevant than ever.
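For a sense of scale, the 8-channel DDR4-3200 memory configuration can be turned into a theoretical peak bandwidth figure. The sketch below assumes all eight channels are populated at DDR4-3200 and uses the standard 64-bit (8-byte) data path per channel, ignoring ECC bits. It is back-of-the-envelope arithmetic, not a measured result; sustained bandwidth in practice will be lower.

```python
# Theoretical peak DRAM bandwidth for the EPYC 7502P memory configuration.
# Assumptions: all 8 channels populated at DDR4-3200, 64-bit (8-byte) data
# bus per channel, ECC bits not counted. Not a measured figure.

CHANNELS = 8
TRANSFERS_PER_SEC = 3200e6  # DDR4-3200 = 3200 MT/s
BYTES_PER_TRANSFER = 8      # 64-bit channel width


def peak_bandwidth_gbs(channels: int, mts: float, bus_bytes: int) -> float:
    """Return theoretical peak bandwidth in GB/s (10^9 bytes per second)."""
    return channels * mts * bus_bytes / 1e9


if __name__ == "__main__":
    per_channel = peak_bandwidth_gbs(1, TRANSFERS_PER_SEC, BYTES_PER_TRANSFER)
    total = peak_bandwidth_gbs(CHANNELS, TRANSFERS_PER_SEC, BYTES_PER_TRANSFER)
    print(f"per channel: {per_channel:.1f} GB/s")  # 25.6 GB/s
    print(f"8 channels:  {total:.1f} GB/s")        # 204.8 GB/s
```

Populating fewer channels scales the figure down linearly, which is one reason to fill all eight when memory bandwidth matters.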

Key stats for the AMD EPYC 7502P: 32 cores / 64 threads with a 2.5GHz base clock and 3.35GHz turbo boost. It has 128MB of onboard L3 cache and a 180W TDP. The list price is $2300.
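Since value is the theme here, normalizing the $2300 list price by core and thread count gives a handy reference point when comparing quotes. This is simple arithmetic on the list price only; street pricing and discounts will move the numbers, and the helper name is just illustrative.

```python
# Normalize the EPYC 7502P list price by core and thread count.
# Uses the $2300 list price; actual quoted prices will differ.

LIST_PRICE_USD = 2300
CORES = 32
THREADS = 64


def price_per_unit(price_usd: float, units: int) -> float:
    """Return the price divided evenly across `units` (cores or threads)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return price_usd / units


if __name__ == "__main__":
    print(f"${price_per_unit(LIST_PRICE_USD, CORES):.2f} per core")      # $71.88 per core
    print(f"${price_per_unit(LIST_PRICE_USD, THREADS):.2f} per thread")  # $35.94 per thread
```

The same function can be pointed at any competing quote to compare parts on a per-core basis.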

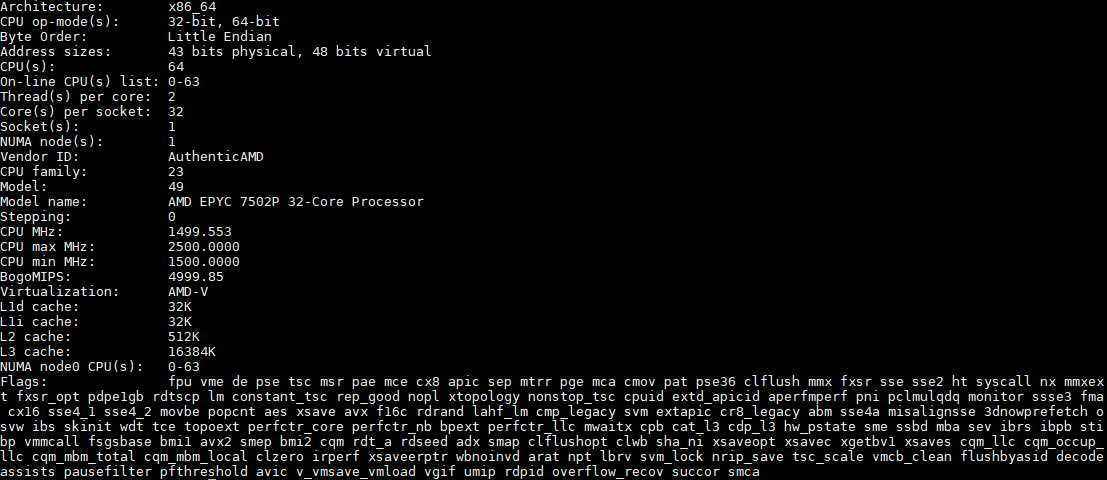

Here is what the lscpu output looks like for an AMD EPYC 7502P:

Before we get too far into this review, note that the AMD EPYC 7502P has 128 lanes of PCIe Gen4. That is an absolutely enormous amount of connectivity. A great example of how it can be used, and of where the EPYC 7502P excels, is our Gigabyte R272-Z32 review. Many accelerator applications and newer hyper-converged infrastructure deployments can make good use of 32 cores. Key to AMD’s value proposition is delivering this platform in a single package. The “P” in the SKU means that this is a single-socket-only part, priced at a discount to the standard AMD EPYC 7502, which is the dual-socket-capable version of this chip.
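To put 128 lanes in perspective, here is the raw bandwidth arithmetic. It uses the PCIe 4.0 signaling rate of 16 GT/s per lane with 128b/130b line encoding and ignores packet and protocol overhead, so real-world throughput will be somewhat lower. The function names are illustrative.

```python
# Raw PCIe Gen4 bandwidth per direction, before protocol (TLP/DLLP) overhead.
GEN4_GT_PER_SEC = 16e9           # 16 GT/s per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def lane_gbs() -> float:
    """Usable bits per second on one lane, converted to GB/s (10^9 bytes)."""
    return GEN4_GT_PER_SEC * ENCODING_EFFICIENCY / 8 / 1e9


def link_gbs(lanes: int) -> float:
    """Aggregate raw bandwidth for `lanes` lanes in one direction."""
    return lanes * lane_gbs()


if __name__ == "__main__":
    print(f"1 lane:    {link_gbs(1):.2f} GB/s")    # 1.97 GB/s
    print(f"x16 slot:  {link_gbs(16):.1f} GB/s")   # 31.5 GB/s
    print(f"128 lanes: {link_gbs(128):.0f} GB/s")  # 252 GB/s
```

PCIe links are full duplex, so these per-direction figures apply to each direction simultaneously.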

Single-socket EPYC 7002 reverses the NUMA node structure of the previous generation. By default, we now have a single NUMA node for these 32 cores. Intel Xeon requires two NUMA nodes to reach 32 cores, since the largest Intel CPU available at up to $10,000 is the 28-core Intel Xeon Platinum 8280. In the previous generation, AMD needed four NUMA nodes while Intel still needed two. If you read older articles discussing the Intel Xeon advantage of using fewer NUMA nodes, that advantage is now reversed with the current generation.
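On Linux, one way to confirm the NUMA layout is `lscpu`, whose `NUMA node(s):` line should read 1 on a single-socket EPYC 7002 system left at the default BIOS NUMA-per-socket setting. The sketch below runs `lscpu` and pulls out a few fields. It assumes the util-linux field names listed in `FIELDS`, which can vary slightly between versions, and the helper names are hypothetical.

```python
# Read the NUMA layout reported by lscpu (Linux, util-linux).
# LC_ALL=C keeps the field names in English so the keys below match.
import os
import shutil
import subprocess

FIELDS = ("Model name", "Socket(s)", "Core(s) per socket",
          "Thread(s) per core", "CPU(s)", "NUMA node(s)")


def parse_lscpu(text: str) -> dict:
    """Turn 'Key:   value' lines into a dict, splitting on the first colon."""
    info = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            info[key.strip()] = value.strip()
    return info


def summarize(info: dict) -> dict:
    """Keep only the fields of interest; missing ones are marked 'n/a'."""
    return {field: info.get(field, "n/a") for field in FIELDS}


def main() -> None:
    if shutil.which("lscpu") is None:
        print("lscpu not found; it ships with util-linux on Linux")
        return
    env = {**os.environ, "LC_ALL": "C"}
    result = subprocess.run(["lscpu"], capture_output=True, text=True, env=env)
    if result.returncode != 0:
        print(result.stderr.strip())
        return
    info = parse_lscpu(result.stdout)
    for field, value in summarize(info).items():
        print(f"{field:20} {value}")
    # Per-node CPU lists appear as e.g. "NUMA node0 CPU(s):".
    for key, value in info.items():
        if key.startswith("NUMA node") and key.endswith("CPU(s)"):
            print(f"{key:20} {value}")


if __name__ == "__main__":
    main()
```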

A Word on Power Consumption

We tested these in a number of configurations. The lowest-spec configuration we used is a Supermicro AS-1014S-WTRT with two 1.2TB Intel DC S3710 SSDs and 8x 32GB DDR4-3200 DIMMs. There were no add-in cards, although one could get power consumption a bit lower, since this system uses an onboard Broadcom BCM57416-based 10GBase-T connection.

Even so, here are a few data points for the AMD EPYC 7502P in this configuration, with the sliders pushed all the way to performance mode and a 180W cTDP:

- Idle Power (Performance Mode): 101W

- STH 70% Load: 204W

- STH 100% Load: 223W

- Maximum Observed Power (Performance Mode): 257W

As a 1U server, this system does not have the most efficient cooling; still, we are seeing absolutely great power figures here. The impact is simple: if one can consolidate smaller nodes onto an AMD EPYC 7502P system, there are power efficiency gains to be had as well.
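For anyone estimating consolidation savings, the measured figures above can be converted into energy per year and a rough per-core number. This sketch assumes the readings are whole-system power for the 1U configuration described, held constant around the clock. Dividing system watts by 32 cores is only a crude normalization, since the total also includes the SSDs, memory, NIC, and fans.

```python
# Convert the measured EPYC 7502P system power figures into rough
# per-core and annual-energy numbers. Assumes constant draw, 24x365.
HOURS_PER_YEAR = 24 * 365  # 8760, ignoring leap years
CORES = 32

MEASURED_WATTS = {
    "Idle (Performance Mode)": 101,
    "STH 70% Load": 204,
    "STH 100% Load": 223,
    "Maximum Observed": 257,
}


def annual_kwh(watts: float) -> float:
    """Energy in kWh if `watts` were drawn continuously for a year."""
    return watts * HOURS_PER_YEAR / 1000


if __name__ == "__main__":
    for label, watts in MEASURED_WATTS.items():
        print(f"{label:24} {watts:4d} W  "
              f"{watts / CORES:5.2f} W/core  "
              f"{annual_kwh(watts):7.1f} kWh/yr")
```

Multiplying the kWh column by a local electricity rate gives a cost estimate, and the same function can be run on the power readings of the smaller nodes being consolidated.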

Next, let us look at our performance benchmarks before getting to market positioning and our final words.

That’s a cool cover photo. We’re going to get quotes for these vs. Intel Xeon, but even with the discounts, we’re being pitched the 8260s as competitive. Based on this, we’ll ask for an update.

What motherboard gives PCIe 4.0 instead of PCIe 3.0 like the Supermicro I mostly use?

We went with the 7502 for a new vSphere cluster. The SuperServer 1114S includes PCIe 4.0, so we can test new Mellanox 100G cards.

Hey dpf, where did you purchase your 1114s from? We have one on order and it’s taking forever to come in.