The ASUS RS500A-E9-RS4-U is one of those server designs straddling the line between a fully customized server and a channel server made of off-the-shelf components. As such, this 1U single-socket AMD EPYC platform has a lot going for it. In our ASUS RS500A-E9-RS4-U review, we are going to look at what makes this platform unique in the market.

ASUS RS500A-E9-RS4-U Hardware Overview

The ASUS RS500A-E9-RS4-U is a 1U server with quite a different view about front I/O. Most 1U servers we see these days come with either four 3.5″ SATA/ SAS drive bays or a set of 8 or 10 2.5″ SATA/ SAS/ NVMe bays. ASUS is using 4x 3.5″ SATA/ SAS but with a twist. The 3.5″ bays are also NVMe capable. While that may be one of the standout features, perhaps the most intriguing is that the ASUS RS500A-E9-RS4-U retains a slim optical bay even in the 1U form factor. Many vendors have sought to add SSDs above or below 3.5″ drives, but this is one of the few shipping AMD EPYC 1U NVMe servers with an optical drive bay (the drive is optional).

We wanted to quickly note here that the server is not terribly deep. It is only 24.3″ or 616mm deep, which means it fits into many racks. ASUS has had a number of products over the years that are shorter depth than expected. For those looking for an industry comparison, this is virtually the same as the HPE ProLiant DL325 Gen10 at 24.21″ or 615mm deep. Indeed, installed side-by-side, they are very close.

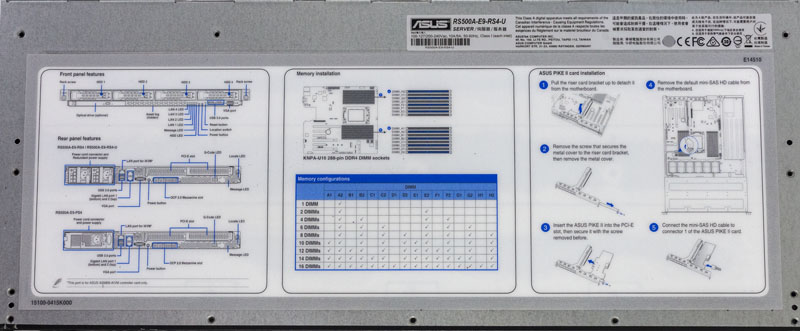

Atop the front of the chassis, ASUS has a service guide. We really like this in ASUS servers and it is a feature that high-end vendors all have, but many smaller OEMs overlook. When one needs to utilize remote hands in the field, these simple diagrams and instructions are important.

The rear of the 1U chassis has a fairly typical layout. The standard rear I/O consists of two 1GbE ports, two USB 3.0 ports, a VGA port, and an out-of-band management port. The 1GbE LAN utilizes an Intel i350 NIC which is compatible with every major OS, and even most smaller distributions.

Power supplies are redundant 770W 80Plus Platinum units.

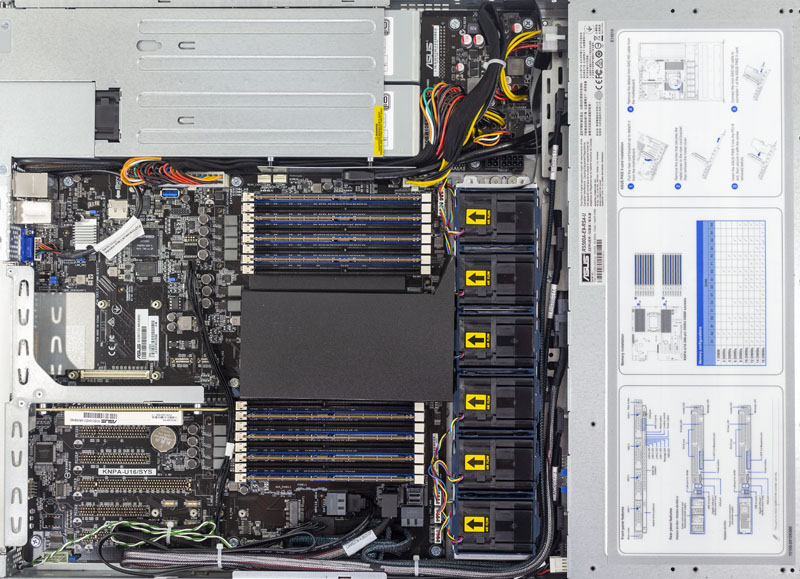

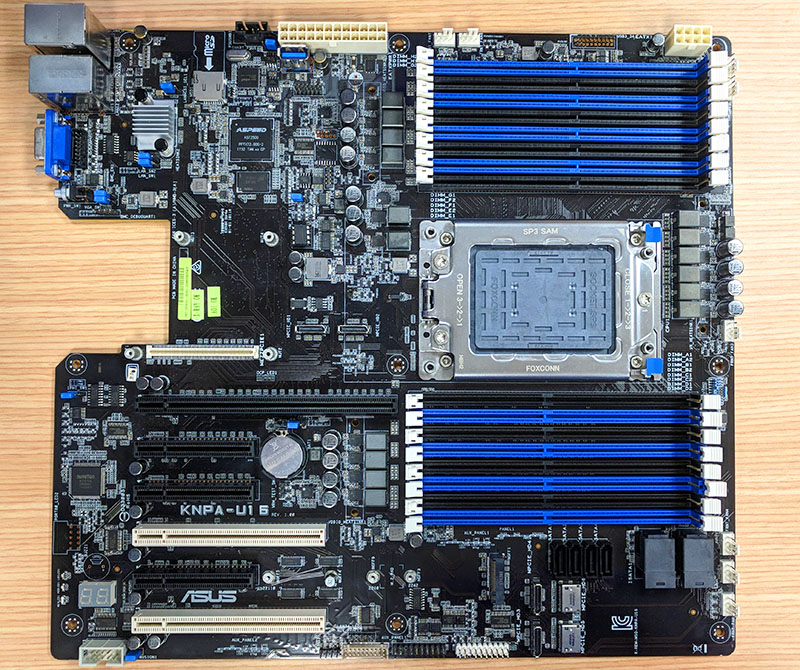

Inside the ASUS server, one can see a general layout that we have seen from many similar systems. Headlining the system is the AMD EPYC SP3 socket flanked by a total of 16x DDR4 DIMM slots. That means a single socket AMD EPYC CPU has the memory capacity and bandwidth to rival a dual-socket Intel Xeon E5 V3/ V4 system.
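To put rough numbers on the bandwidth comparison, here is a minimal sketch of theoretical peak DDR4 bandwidth (channels × transfer rate × 8 bytes per transfer). The channel counts and memory speeds are our assumptions based on the top officially supported speeds for each platform (8-channel DDR4-2666 for first-generation EPYC, 4-channel DDR4-2400 and DDR4-2133 per socket for Xeon E5 V4 and V3). They are not measurements from this system, and real-world speeds can drop with two DIMMs per channel.

```python
# Theoretical peak DDR4 bandwidth: channels x transfer rate (MT/s) x 8 bytes.
# Channel counts and speeds below are assumptions, not figures from this review.

def peak_bandwidth_gbs(sockets: int, channels_per_socket: int, mts: int) -> float:
    """Return theoretical peak memory bandwidth in GB/s (10^9 bytes per second)."""
    bytes_per_transfer = 8  # each DDR4 channel has a 64-bit data bus
    return sockets * channels_per_socket * mts * bytes_per_transfer / 1000

configs = {
    "1x AMD EPYC 7001 (8ch DDR4-2666)": peak_bandwidth_gbs(1, 8, 2666),
    "2x Intel Xeon E5 V4 (4ch DDR4-2400 each)": peak_bandwidth_gbs(2, 4, 2400),
    "2x Intel Xeon E5 V3 (4ch DDR4-2133 each)": peak_bandwidth_gbs(2, 4, 2133),
}

for name, gbs in configs.items():
    print(f"{name}: {gbs:.1f} GB/s")
```

Under these assumptions, the single EPYC socket comes out slightly ahead of either dual-socket Xeon E5 configuration on paper, which is the sense in which it can "rival" them.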

The AMD EPYC CPU heatsink uses an airflow shroud to direct the airflow from multiple fans through its passive heatsink. This shroud is secured by one screw. The design works, but we wish this was a sturdier solution. It is a fairly common industry practice to use this type of solution, but sturdier plastic airflow guides hold up better to field service.

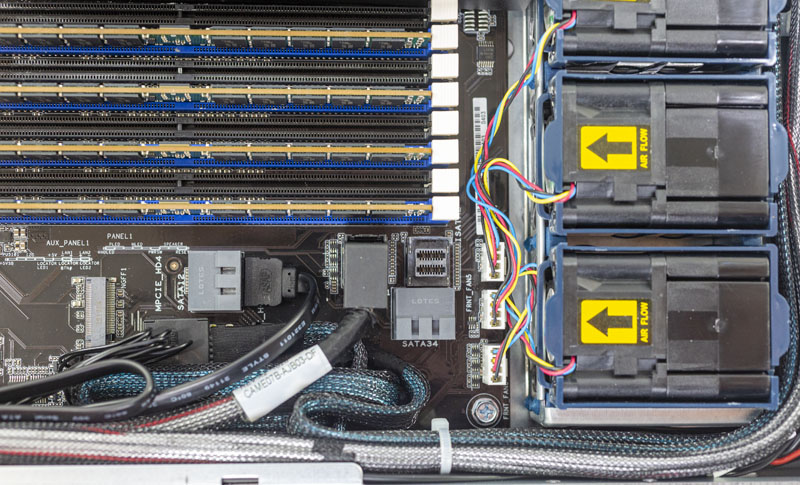

On the cooling front, ASUS has 1U fans that are easy to service. At the same time, these fans are directly wired to PWM headers, which makes field replacement slightly more challenging than with some of the hot-swap fan designs we see in the market. We recently addressed this type of design in Are Hot-Swap Fans in Servers Still Required? and found that today’s fans are so reliable that hot-swap capability matters less than being easy to service.

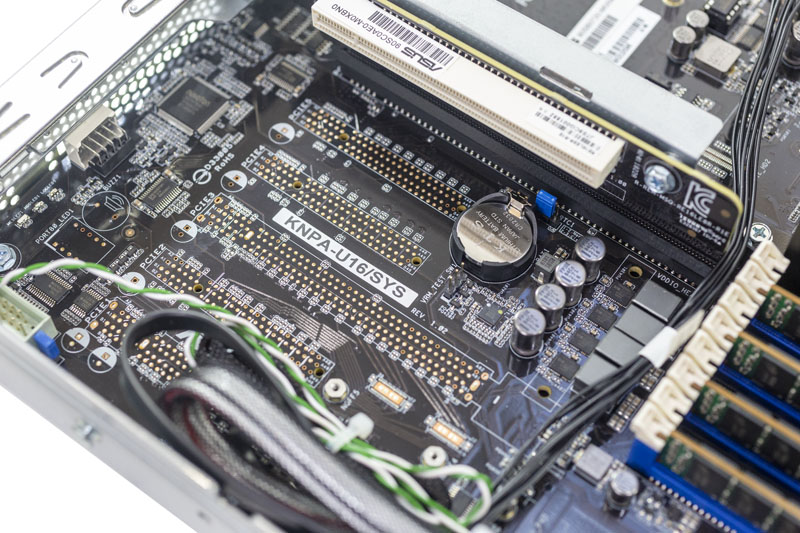

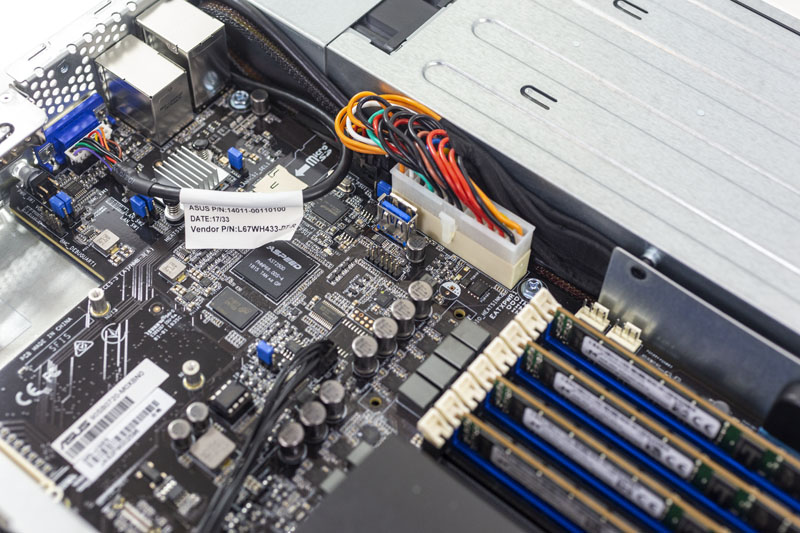

If the motherboard looks familiar, it should. We actually saw the motherboard in this system almost a year ago when we visited ASUS Server at Computex 2018.

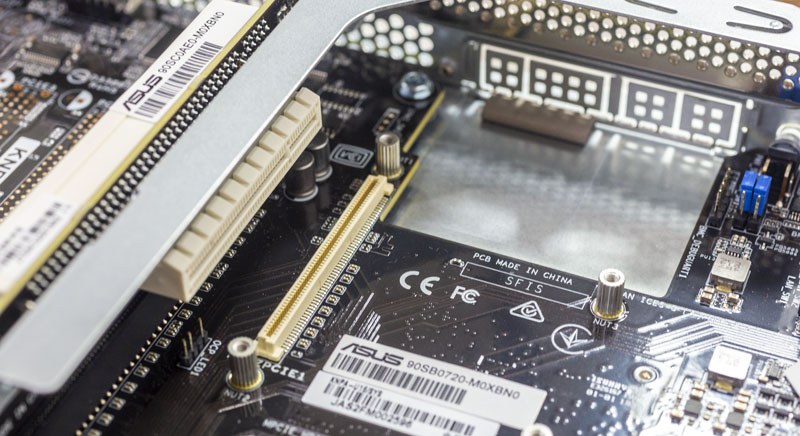

As one can see, the version in the ASUS RS500A-E9-RS4-U is slightly different, as ASUS de-populated many of the PCIe slots, which is desirable in 1U configurations. De-populating the unused slots on the EATX motherboard leaves more room for cards installed on the PCIe riser.

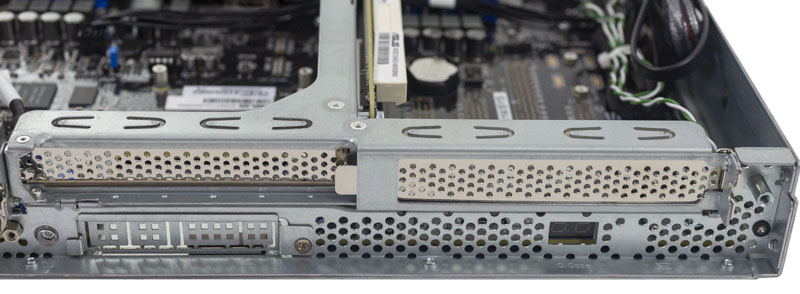

The ASUS RS500A-E9-RS4-U has two PCIe slots, one on either side of the riser. These are PCIe x16 and x8. Expansion does not stop there. ASUS is adopting the OCP 2.0 NIC form factor with the RS500A-E9-RS4-U. We like this as there is a broad ecosystem for OCP NICs. It is much better for end-users and systems integrators that ASUS is adopting an industry standard instead of making its own NIC form factor.

For some frame of reference, here are the two PCIe riser slots on the rear of the chassis and the OCP cutout.

One will also see that this, like other ASUS servers, includes the Q-Code feature. When the system is booting, this LED display shows the POST code. That can be helpful to see if a server is in a reboot loop, if there is a hardware error preventing POST from completing, or even just that everything is working well. Most servers can display this via IPMI, but sometimes it is easier to simply see it when you are in the hot aisle and diagnosing many servers.

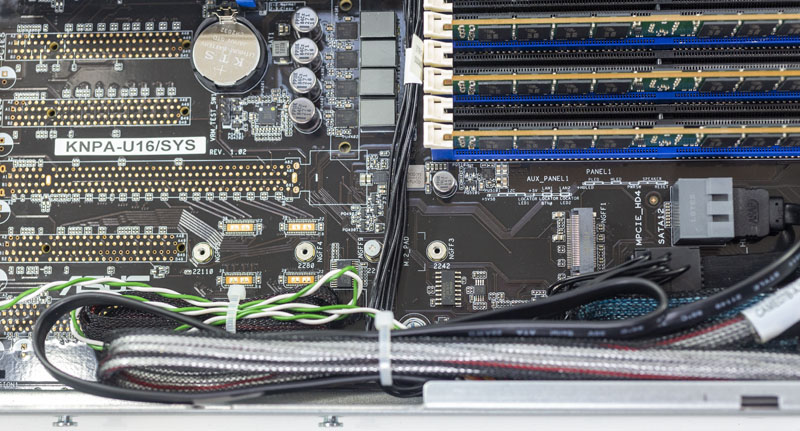

Beyond what we have already shown, there is an M.2 slot that can take up to 22110 (110mm) SSDs. We were a bit surprised to see that this is a SATA M.2 solution. As such, this is more of a boot drive option.

We should probably mention that there are a lot of cables in this chassis. That is part of the process when adapting a channel EATX motherboard to a 1U chassis. Cables cross the PCB and are tied down, but there are still many more cables than you would see in some other solutions on the market.

We are going to cover the out-of-band management in the next section, but the ASPEED AST2500 is an industry-standard Arm-based BMC. The server also includes a MicroSD slot and an internal USB 3.0 Type-A header. Many organizations use these slots to add low-cost flash.

Overall, the ASUS RS500A-E9-RS4-U is fairly easy to work on and has a lot of functionality onboard. From a hardware perspective, the one glaring weak point is PCIe connectivity. In this configuration, only about 48 of the AMD EPYC single socket’s 128 PCIe/ high speed I/O lanes are used for PCIe devices. That is lower than some of the other solutions we have seen. On the other hand, there is a market for lower-cost devices and many of these servers will be deployed with one or two drives and one or no add-on cards. For a range of use cases, the ASUS RS500A-E9-RS4-U has everything one may need.
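As a rough illustration of where a figure of about 48 lanes could come from, here is a minimal lane-budget sketch. The x16 and x8 slot widths and the 128-lane total come from the discussion above. The OCP 2.0 width (x8) and the per-bay NVMe width (x4) are our assumptions for illustration, and the actual lane allocation on this motherboard may differ.

```python
# Rough PCIe lane budget for the configuration described in this review.
# Slot widths (x16, x8) and the 128-lane total come from the text;
# the OCP and NVMe widths are assumptions made for illustration only.

TOTAL_LANES = 128  # single-socket AMD EPYC PCIe / high-speed I/O lanes

lane_consumers = {
    "riser slot (x16)": 16,
    "riser slot (x8)": 8,
    "OCP 2.0 mezzanine (assumed x8)": 8,
    "4x NVMe bays (assumed x4 each)": 4 * 4,
}

used = sum(lane_consumers.values())

for device, lanes in lane_consumers.items():
    print(f"{device}: {lanes} lanes")
print(f"{used} of {TOTAL_LANES} lanes used ({used / TOTAL_LANES:.1%})")
```

With these assumed widths the total is 48 lanes, or 37.5% of what the socket offers, which leaves well over half of the platform's I/O capability unexposed to PCIe devices.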

Next, we are going to take a look at the ASUS RS500A-E9-RS4-U ASMB9 management. We will then get to our performance testing followed by our final thoughts.

I have an RS500a-E10-RS12 in front of me right now (the 12x SFF model of the same system, while the RS4 above is the 4x LFF model). Asus’s website and docs aren’t exactly clear on the differences between the two models, other than (obviously) using different drive sizes. The only real difference I saw in the spec sheet was a mention that you could only use LP cards in the RS12.

That’s not actually strictly accurate. The right x16 riser comes occupied with a 2x oculink card, connected to the 11th and 12th NVMe bays. There’s also a 3rd “riser” in the system, in the far left motherboard x16 slot. Instead of a PCIe slot, the riser has 4x oculink ports. Mechanically, this means that you can’t use *full*-height cards in the middle x16 riser, but you still have to use a full-height bracket. The card can be a bit taller than LP, though; the limit is around 1/2″ shorter than the FH bracket.

So, on the RS12, you have room for 1 x16 PCIe 4.0 card and 1 x16 OCP 2.0 PCIe 4.0 card, down one slot from the RS4. I had a spare 2x 10GbE OCP card on hand; it fits under the 2x oculink card but there isn’t a ton of clearance.

Thanks for sharing! It would be interesting to see a review of the RS500A-E10-RS12U model, too!