ASUS RS500A-E9-RS4-U ASMB9 Management

With this generation of the ASUS out-of-band management implementation, the company is using standard MegaRAC firmware. That brings a number of new features including a responsive web layout.

One of the other big features is the ability to use an HTML5 iKVM. We previously had a number of ASUS servers in the lab where the Java iKVM client would constantly require firmware updates to function due to Java security certificates. This is a big win for the company.

At STH, we recently posted a video tour of the management interface.

Overall, this is a more modern experience. At the same time, ASUS is focused on providing more of the standard MegaRAC firmware rather than adding an enormous set of proprietary features. Many customers want exactly this. Others prefer the proprietary features that vendors such as Dell EMC, HPE, and Lenovo provide to their customers.

Next, we are going to look at the test configuration before moving on to our performance testing.

ASUS RS500A-E9-RS4-U Topology

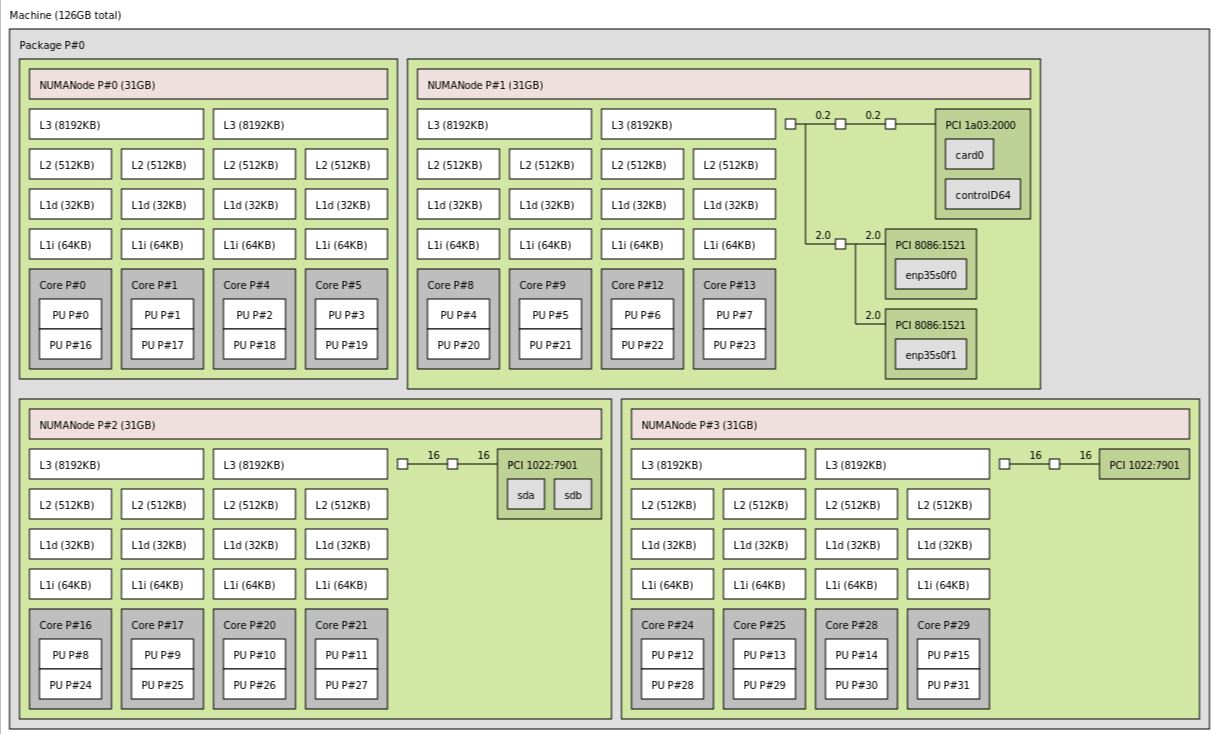

One area that we are keenly aware of today, and will be increasingly so with future multi-chip packages, is system topology.

With a single socket, the AMD EPYC platform has everything connected to a single CPU. At the same time, we can see that ASUS is using I/O from different dies for its onboard devices. Since there is no second socket here, that generally increases die-to-die communication needs within the package.
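For readers who want to check which die a given device hangs off on their own system, here is a minimal sketch, assuming a Linux host where each die is exposed as its own NUMA node. It reads the standard sysfs `numa_node` attribute for each PCI device and groups device addresses by node. The function name `pci_devices_by_numa_node` is our own for illustration, and this is not the tool used to produce the topology map above.

```python
from collections import defaultdict
from pathlib import Path


def pci_devices_by_numa_node(sysfs_root="/sys/bus/pci/devices"):
    """Group PCI device addresses by the NUMA node the kernel reports.

    Assumes the Linux sysfs layout, where each device directory may contain
    a numa_node file. A value of -1 means the kernel has no affinity
    information for that device.
    """
    root = Path(sysfs_root)
    if not root.is_dir():
        return {}  # not Linux, or sysfs is not mounted at this path

    groups = defaultdict(list)
    for dev in sorted(root.iterdir()):
        try:
            node = int((dev / "numa_node").read_text().strip())
        except (OSError, ValueError):
            continue  # attribute missing or unreadable, skip this device
        groups[node].append(dev.name)
    return dict(groups)


if __name__ == "__main__":
    for node, devices in sorted(pci_devices_by_numa_node().items()):
        label = "no affinity reported" if node < 0 else f"NUMA node {node}"
        print(f"{label}: {len(devices)} device(s)")
        for address in devices:
            print(f"  {address}")
```

If onboard NICs and NVMe devices show up under different nodes, traffic between them has to cross dies, which is the effect described above.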

ASUS RS500A-E9-RS4-U Test Configuration

We tested the ASUS RS500A-E9-RS4-U in a number of configurations. Here is the set of hardware we used:

- Server: ASUS RS500A-E9-RS4-U

- CPUs: AMD EPYC 7251, EPYC 7281, EPYC 7301, EPYC 7351P, EPYC 7401P, EPYC 7451, EPYC 7501, EPYC 7551P, EPYC 7601

- Memory: 8x 16GB Micron DDR4-2666

- SSDs: 2x Samsung 960GB SATA and 2x 1.92TB NVMe U.2

- NICs: Mellanox 10GbE OCP, Mellanox ConnectX-5 100GbE

We also installed an AMD EPYC 7371, although we did not see it on the HCL when we started testing. It worked, and the topology map above was actually generated using that CPU.

Next, we are going to look at CPU performance of the ASUS RS500A-E9-RS4-U.

I have an RS500A-E10-RS12 in front of me right now (the 12x SFF model of the same system, while the RS4 above is the 4x LFF model). ASUS’s website and docs aren’t exactly clear on the differences between the two models, other than (obviously) using different drive sizes. The only real difference I saw in the spec sheet was a mention that you could only use LP cards in the RS12.

That’s not strictly accurate. The right x16 riser comes occupied with a 2x OCuLink card, connected to the 11th and 12th NVMe bays. There’s also a 3rd “riser” in the system, in the far left motherboard x16 slot. Instead of a PCIe slot, that riser has 4x OCuLink ports. Mechanically, this means that you can’t use *full*-height cards in the middle x16 riser, but you still have to use a full-height bracket. The card can be a bit taller than LP, though; the limit is around 1/2″ shorter than the FH bracket.

So, on the RS12, you have room for 1 x16 PCIe 4.0 card and 1 x16 OCP 2.0 PCIe 4.0 card, down one slot from the RS4. I had a spare 2x 10GbE OCP card on hand; it fits under the 2x OCuLink card, but there isn’t a ton of clearance.

Thanks for sharing! It would be interesting to see a review of the RS500A-E10-RS12U model, too!