At STH, we review a ton of server CPUs, but very few of them change the narrative of the industry like the part in this AMD EPYC 7371 review. A few weeks ago, we covered the AMD EPYC 7371 Frequency Optimized Processor Launch during SC18. At the time, we thought that, given the timing alongside Supercomputing 2018, it was an HPC part. It may find legs in the HPC industry, but where it is going to change the industry’s narrative is in per core performance. A great example of a community that should clamor for the AMD EPYC 7371 is the Windows Server community. The base license for Windows Server 2019 Standard and Datacenter covers 16 cores, which aligns perfectly with the 16-core EPYC 7371. With the release of the AMD EPYC 7371, AMD will have a chip with more performance than the Intel Xeon Scalable family at 16 cores. It will also have more memory capacity and PCIe lanes than the Intel Xeon Scalable family. That will change the industry’s narrative on AMD EPYC v. Intel Xeon.

Getting Started with the AMD EPYC 7371

Let us get a few key points out of the way before we delve into this part. First, the expected availability of the AMD EPYC 7371 is Q1 2019. We do not have a price on the part. There is no official product page at this point, but we used retail marked chips. We have dual socket numbers, and the parts are dual socket capable. Even so, we wanted to focus this piece on single socket configurations because, when we looked at the data, this is a game-changer.

To put it into perspective, STH runs our own hosting infrastructure. If you are reading this article on its release date, you are being served portions of STH from AMD EPYC 7351P and AMD EPYC 7551 based servers. There is Intel Xeon in the infrastructure as well. We use a mix since we believe in using what we recommend. The AMD EPYC 7371 is the chip that fixes AMD’s Achilles heel: clock speed. Until now, Intel has had an advantage in clock speed while AMD has had an advantage in core counts with their current generations. With a 3.6GHz all core turbo and a 3.8GHz 8-core turbo, the AMD EPYC 7371 now matches or exceeds virtually every public Intel Xeon Scalable SKU.

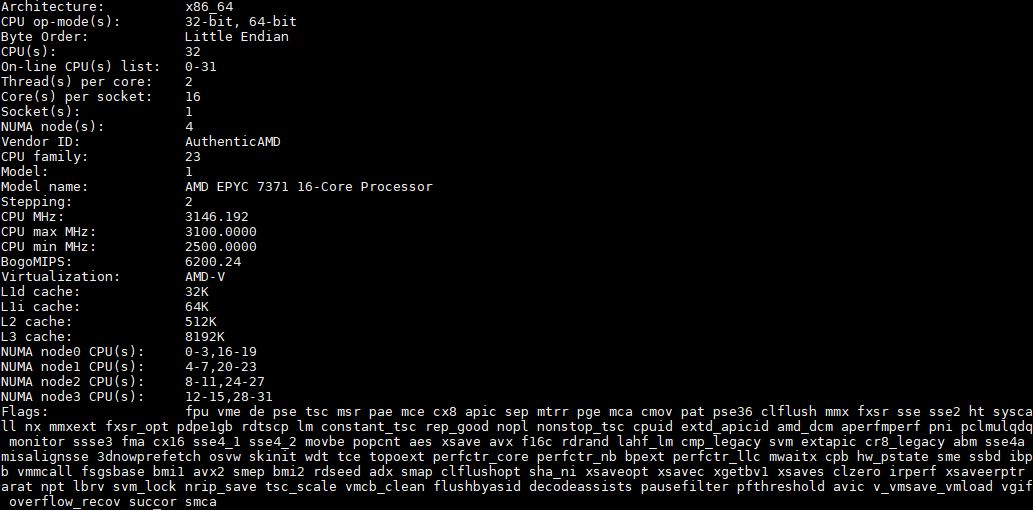

Key stats for the AMD EPYC 7371: 16 cores / 32 threads, 3.1GHz base, and 3.6GHz all core turbo. With 8 cores active, the AMD EPYC 7371 hits 3.8GHz turbo boost clocks. Feeding these high-speed cores is a whopping 64MB L3 cache, or 4MB per core. The CPU features a 200W TDP, which makes it the hottest AMD EPYC 7001 series CPU we have seen in the lab. Here is the lscpu output for the processor in single socket mode:

Intel has a long history of parts that have fewer cores and more cache per core. To put this into some context, all of Intel’s current public 16 core Skylake-SP generation Xeons have 22MB L3 cache plus 1MB of L2 cache per core. One can say that they have combined L2 and L3 caches of 38MB on their 16 core parts. The 8 core frequency optimized Intel Xeon Gold 6134 and Gold 6144 parts have 8MB L2 plus 24.75MB L3 cache, or around 32.75MB per CPU or 65.5MB in dual socket configurations.

By contrast, if you want high cache levels, each AMD EPYC 7371 has 8MB L2 cache plus 64MB L3 cache per socket. Intel has always marketed the extra cache in their frequency optimized SKUs as a major selling point. Here, AMD simply has more.
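As a quick sanity check on the 16 core comparison, here is a minimal Python sketch that totals L2 and L3 cache per socket. The cache figures are the ones quoted in the text; the helper name `total_cache_mb` and the part labels are purely illustrative.

```python
# Per-socket cache totals for the 16 core parts discussed above.
# Figures from the text: 1MB L2 per core and 22MB L3 on 16 core Xeon Scalable,
# 8MB L2 in total (0.5MB per core) and 64MB L3 on the AMD EPYC 7371.

CORES = 16


def total_cache_mb(cores, l2_per_core_mb, l3_mb):
    """Return combined L2 + L3 cache in MB for one socket."""
    return cores * l2_per_core_mb + l3_mb


parts = {
    "Xeon Scalable (16 core)": total_cache_mb(CORES, 1.0, 22.0),
    "AMD EPYC 7371": total_cache_mb(CORES, 0.5, 64.0),
}

for name, mb in parts.items():
    print(f"{name}: {mb:.0f}MB L2+L3 per socket, {mb / CORES:.2f}MB per core")
```

On these numbers, the EPYC 7371 carries a little under twice the combined cache of a 16 core Xeon Scalable part.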

AMD EPYC 7001 Series Background

Given that this is a first generation AMD EPYC part, codenamed “Naples”, there is a key architectural feature that users must be aware of. A single socket AMD EPYC 7371 uses a four NUMA node implementation. You can read more about why in our AMD EPYC 7000 Series Architecture Overview for Non-CE or EE Majors article or in the accompanying video.

For those still unfamiliar with the AMD EPYC 7001 series, here are AMD’s key value proposition bullets:

- More cores. AMD EPYC 7001 series parts range from 8 to 32 cores. From 16 to 32 cores, AMD EPYC 7001 SKUs are less expensive than Intel Xeon Scalable counterparts at the same core counts.

- More DRAM capacity. Each AMD EPYC has 8 channel memory (Xeon Scalable has 6 channel). With 16 DIMMs per socket, the AMD EPYC 7000 series can support up to 2TB of RAM per socket. The “M” series Xeon Scalable SKUs can only hit 1.5TB per socket, up from 768GB on a standard CPU, and carry a $3000 price tag for the privilege.

- 128 high speed I/O (PCIe / SATA III) lanes in either single or dual socket mode. In single socket mode, AMD has up to 128 I/O lanes while Intel essentially has 48. You can learn more in our Single Socket AMD EPYC 7000 FAQ Answers to Common Questions.

- EPYC 7001 series is x86. You can get an Intel alternative architecture without needing major code ports or special support. Enterprise software will just work.
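The memory capacity figures in the list above fall out of simple multiplication. Here is a rough Python sketch of that arithmetic. The channel counts and resulting capacities come from the text, while the 2 DIMMs per channel and the 64GB / 128GB DIMM sizes are our assumptions, chosen because they reproduce the quoted capacities. The function name `max_memory_gb` is only for illustration.

```python
# Per-socket memory capacity = channels x DIMMs per channel x DIMM size.
# Channel counts are from the text. The 2 DIMMs per channel figure and the
# DIMM sizes are assumptions picked to reproduce the capacities quoted above.


def max_memory_gb(channels, dimms_per_channel, dimm_gb):
    """Return the maximum memory per socket in GB."""
    return channels * dimms_per_channel * dimm_gb


epyc_gb = max_memory_gb(8, 2, 128)      # 16 DIMMs per socket
xeon_std_gb = max_memory_gb(6, 2, 64)   # standard Xeon Scalable SKU
xeon_m_gb = max_memory_gb(6, 2, 128)    # "M" series Xeon Scalable SKU

configs = [
    ("AMD EPYC 7001", epyc_gb),
    ("Xeon Scalable", xeon_std_gb),
    ("Xeon Scalable M", xeon_m_gb),
]

for label, gb in configs:
    print(f"{label}: {gb}GB ({gb / 1024:g}TB) per socket")
```

The two extra memory channels are what let EPYC reach 2TB per socket with the same size DIMMs that take an “M” series Xeon Scalable to 1.5TB.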

The AMD EPYC 7001 series also has a few single socket only SKUs that have extremely low pricing. You can read our 16-core AMD EPYC 7351P review, 24-core AMD EPYC 7401P Review, and 32-core AMD EPYC 7551P Review to learn more. The AMD EPYC 7371 is a dual socket capable chip that will sit above the AMD EPYC 7351 in the SKU stack.

Next, we are going to look at our test setup and configuration before we move on to benchmarks, power consumption, and our market positioning analysis.

On page 4 how do I know which terminal is for which processor?

I started reading and I was like whoa baby this is a 5 page CPU review why? Then I got into it at lunch and I know why. You’re right on the impact. That per-core performance is why we haven’t moved our Windows Hyper-V cluster to EPYC or even started to test it there.

You didn’t really mention it, but the clock speed also helps licenses in VMs. Maybe that’s obvious, but maybe to some it isn’t.

For SQL server you’re right that there’s other chips that might be better but those are extremely targeted products. It’s like EPYC’s first parts covered 75% of the market. These get them 20% more. Then there’s 5% that Intel still has better parts for.

Randy Bostrom if you look at the prompt text in those screenshots you’ll kick yourself. It’s a long review. It’s taken me 40 minutes to read.

CAN SOMEONE GET THESE GUYS GOLD 6142 CHIPS PLEASE

It can’t be that hard! Make it happen. @Intel if you don’t we’re gonna say you’re chicken.

I read STH reviews for “it is not released in a vacuum” phrase. What a peculiar feeling it triggers… Especially today, when it got paraphrased. And with a typo!

Patrick,

Why would you not have included the 7351P CPU into the “16 Core CPU Market” grid?

It is ~$400 cheaper than the 7351 CPUs, while providing (IIRC) the same performance. When talking about core-based licensing optimization it seems that this is the CPU model that is the “one to beat”.

{So much so that AMD really needs to make sure that they offer a 7371P variant, to help lock up that licensing market}

I’m with BinkyTO when is there going to be a 7371P version? I know what I want for Christmas. I’ll pay the power man.

It’s good to see AMD’s finally getting serious about frequency optimized. Maybe it’s better called performance per core optimized but that’s why we couldn’t use the EPYC that’s out there. Chips looked cheap but they’d cost too much for us to license servers for our environments. Your analysis is spot on.

6142 max all-core turbo is 3.3 GHz on 16 cores at around $2,950

Typo: Standard instead of Stanard

The base Windows Server 2019 license for Windows Server 2019 Stanard and Datacenter are 16 cores which

We were having this exact conversation in the office last week. We have about 40 Windows DC hosts. We’re RAM limited at 16C and 768GB. We want to EPYC but the single core is too low. This will fix

When in Q1?

On the p5 SPEC CPU2017 numbers, here’s the official data since I didn’t get it on the first read through

2x Gold 6130 164 https://www.spec.org/cpu2017/results/res2017q4/cpu2017-20171114-00735.html

2x EPYC 7351 165 https://www.spec.org/cpu2017/results/res2018q4/cpu2017-20180918-08912.html

2x Gold 6142 178 https://www.spec.org/cpu2017/results/res2017q4/cpu2017-20171211-01573.html

If Gold 6130 to 6142 is up to 500MHz more, and EPYC 7351 to EPYC 7371 is 700MHz more, and more at the top single thread speed, then EPYC 7371 is going to do 185+. That wasn’t clear from the article on p5 but when I read the words and then looked at Cisco’s official results it makes perfect sense. Maybe that’ll help someone else out

If they can do 4GHz at 225w crank it up. Intel’s been on this low power stuff for years but our data centers sell us metered power plus a cooling adder. 1 less server per rack would pay for 50-60w per server real quick.

If this comes in under $2k it’ll be a great value but you still have to get people to welcome AMD again

Hopefully sth to go against the 6144 next.

I love STH for this review and analyst piece combined in one. They’re like analysts who have hands-on experience not just theoretical exposure.

For us, this is an important launch but not because we’re going to buy it. Our IT org will only buy second gen products. Rome would’ve been considered first gen for our Windows cluster. I’m going to put these in a RFP draft when they’re on the Dell site so I can get them nixed for being first gen. That’ll give me documentation to show Rome is a second gen product later in 2019. Next-level thinking here.

I was hoping for some 7nm EPYC by end of Q1/2019.

AMD has a once in a lifetime chance against Intel with Milan, they better hurry up to enjoy it as long as possible.

I bought some EPYC 7371 today for around 1550 USD. This price is absolutely fantastic and Intel has nothing competitive.

What cooler did you guys use? The Tyan heatsink says it doesn’t support the 200 watt processor.