Bonus Workloads with the AMD EPYC 7371

As it became clear that this was a hotrod CPU, we wanted to validate our assumptions a little more thoroughly before concluding that the AMD EPYC 7371 is now the CPU to get. We wanted to show three different views of the gauntlet we put the chips through.

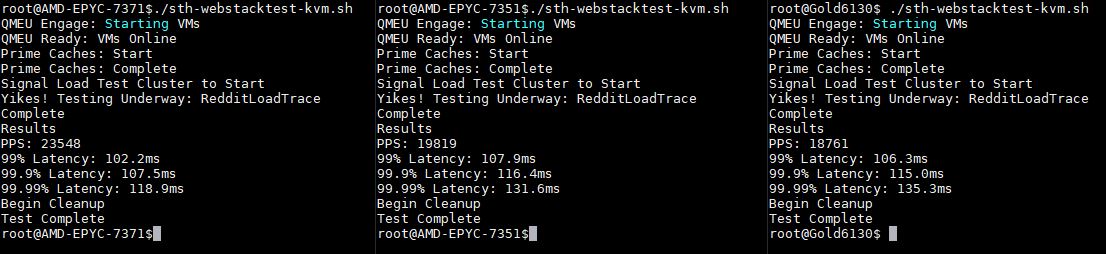

STH Hosting Analysis

One area that immediately piqued our interest was web hosting. At STH, we use both Intel Xeon E5 and Xeon Scalable CPUs, as well as AMD EPYC. Specifically, for our production cluster, we use AMD EPYC 7351P chips for our single-socket servers and AMD EPYC 7551 chips in our dual-socket servers. Let us be clear, everything runs absolutely fine on our current infrastructure. If we did not review these chips and believe in using what we recommend, we would not even think about upgrading what we have. At the same time, we have been working on introducing a containerized version of our production infrastructure in the lab for our 2019 test suite. This is a work in progress, and it is very different from a real web hosting scenario since we have 100GbE connectivity from the systems under test to our load generation nodes and everything is being served from a single machine. This is the reason we added the Intel Optane 480GB SSDs to the test beds, just to give us something comparable for our own purposes.

That is fairly impressive, and a large upgrade over both the AMD EPYC 7351(P) and the Intel Xeon Gold 6130. There are certainly software tuning opportunities, but this is a real-world example for us using an extremely lightly modified version of the STH main site. What this tells us is that we can deploy AMD EPYC 7371 and see direct benefits to our hosting infrastructure by simply replacing chips.

AMD EPYC 7371 Under Duress

We spent a lot of time in the lab watching the AMD EPYC 7371 and trying to push it to lower clock speeds. As some context, the Intel Xeon Silver 4116 is the top-end Xeon Silver SKU for Skylake-SP and it tops out at 3.0GHz. The Intel Xeon Gold 5100 range, outside of the quad-core Gold 5122, tops out at 3.2GHz maximum turbo frequency. We wanted to see if we could push the AMD EPYC 7371 below its 3.1GHz base clock, since that base clock is about as fast as the turbo clocks of the Intel Xeon Silver and Gold 5100 ranges.

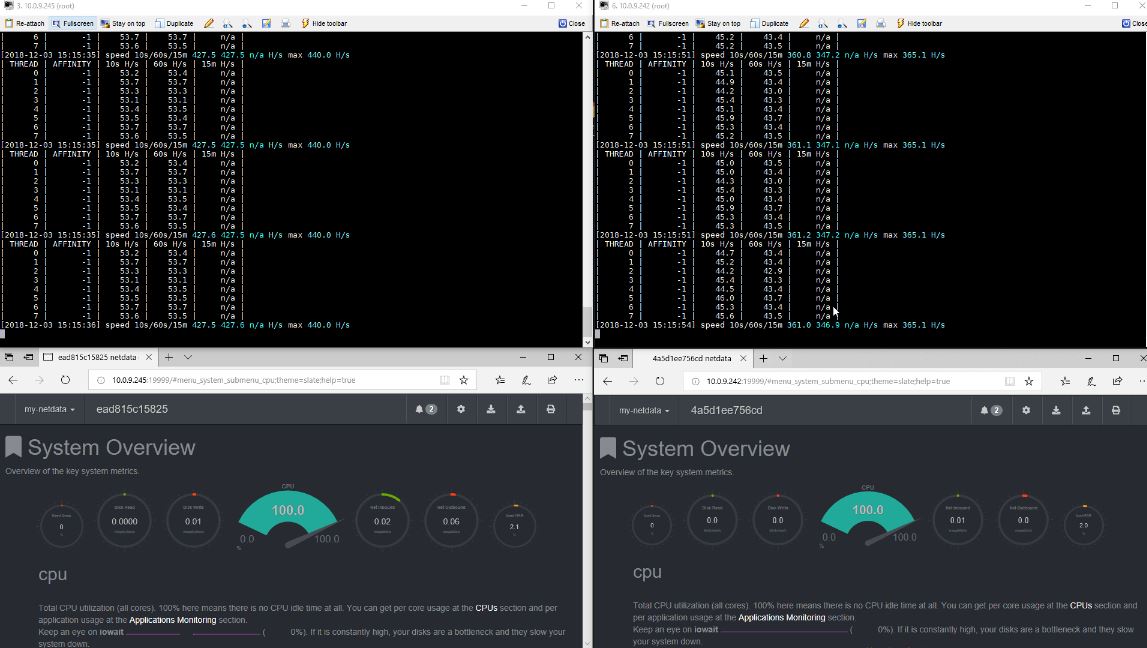

We took several of our benchmarks a step further and put the AMD EPYC 7371 “under duress.” We set up the AMD EPYC 7371 system side-by-side with an AMD EPYC 7351 server, then launched Docker containers pinned to each NUMA node on the CPUs to do Monero mining. These containers use around 2MB per thread, which matches the L3 cache available per thread on each AMD EPYC 73x1 CPU: 16MB of L3 cache and 8 threads per die, or 64MB total with 32 threads. This is a really nice way on AMD EPYC 7001 series chips to heavily stress the cores and caches. We also launched a netdata container on each.
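For readers who want to try something similar, here is a minimal sketch of the per-thread L3 arithmetic and of building one pinned container command per NUMA node. It is not the exact setup used in the lab. The CPU-to-node mapping in `NODE_CPUS`, the image name `example/miner`, and the `stress` container name prefix are all hypothetical placeholders. Only the `--cpuset-cpus` and `--cpuset-mems` options of `docker run` are real Docker flags. Check your own topology (for example with `lscpu`) before pinning anything.

```python
import shlex


def l3_per_thread_mb(l3_mb, threads):
    """L3 cache available per hardware thread, in MB."""
    return l3_mb / threads


def pinned_run_command(node, cpus, image, name_prefix="stress"):
    """Build a `docker run` command confined to one NUMA node's CPUs and memory.

    `image` and `name_prefix` are placeholders, not names from the review.
    """
    args = [
        "docker", "run", "-d",
        "--name", f"{name_prefix}-node{node}",
        f"--cpuset-cpus={cpus}",   # restrict the container to these logical CPUs
        f"--cpuset-mems={node}",   # allocate memory only from this NUMA node
        image,
    ]
    return shlex.join(args)


# ASSUMPTION: a hypothetical logical CPU layout for a 16-core / 32-thread
# part with four NUMA nodes. Real numbering varies by platform and BIOS.
NODE_CPUS = {
    0: "0-3,16-19",
    1: "4-7,20-23",
    2: "8-11,24-27",
    3: "12-15,28-31",
}

if __name__ == "__main__":
    # Figures from the text: 16MB L3 and 8 threads per die, 64MB and 32 threads total.
    print("per die  :", l3_per_thread_mb(16, 8), "MB per thread")
    print("per chip :", l3_per_thread_mb(64, 32), "MB per thread")
    for node, cpus in NODE_CPUS.items():
        print(pinned_run_command(node, cpus, "example/miner"))
```

Building the command as a list and joining it with `shlex.join` keeps the output safe to paste into a shell even if a placeholder is later swapped for a name containing spaces.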

We could watch the 100% CPU loading and pin frequencies to 3.1GHz and keep the chips out of their turbo frequencies. You can see that the AMD EPYC 7371 generally has a 20% performance advantage in this crypto workload, but we wanted to let the systems thrash.

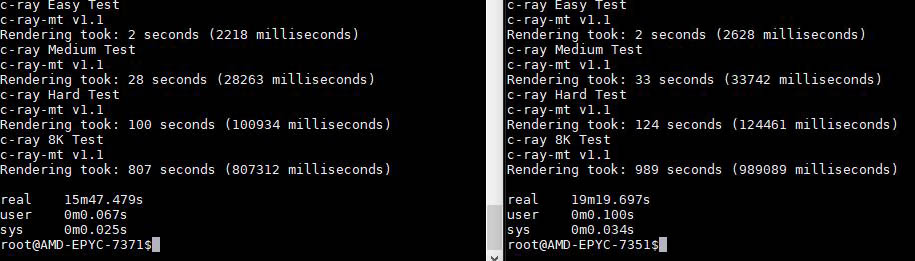

c-ray Under Duress

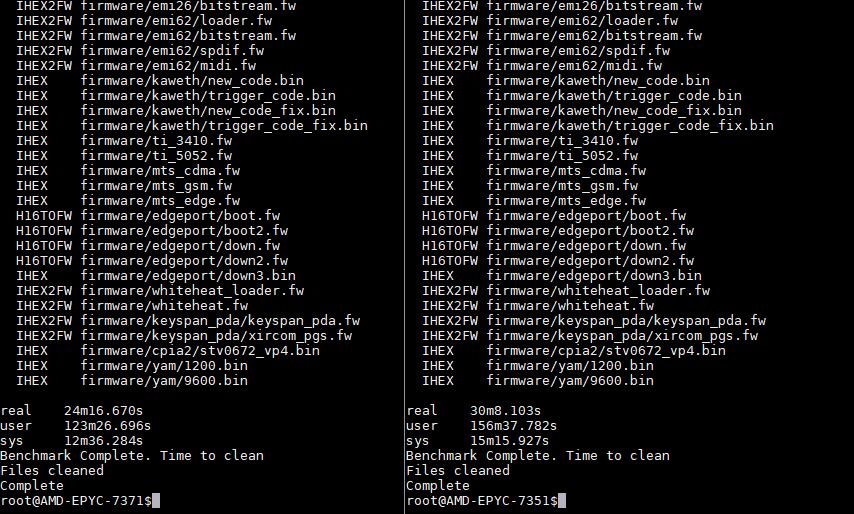

We went one step further. We wanted to see what would happen to c-ray when we started to thrash the processors. With the CPUs already being thrashed, we re-ran a c-ray container side-by-side on both systems while the cryptonight activity and the monitoring container were still running.

Side-by-side, one can see the impact of higher clock speeds. We have a 19-24% improvement in performance.

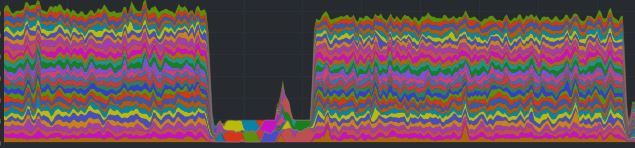

Linux Kernel Compile Under Duress

Whereas the c-ray benchmark is highly constrained to caches and scales extremely well with cores, compiling the Linux kernel uses more of the system and has some parts that are not as well threaded. Here is an example of CPU utilization, by thread, you may see during the compile.

We also used this to see the difference between the AMD EPYC 7371 and AMD EPYC 7351 under duress. Instead of running at maximum boost during the less threaded parts, the CPUs are pushed to their base frequencies during the test.

As you can see, the AMD EPYC 7371 is completing the task around 19.5% faster than the AMD EPYC 7351. Or said another way, the AMD EPYC 7351 takes around 24% more time to complete the task. This is expected given the similar architectures yet higher clock speeds for the AMD EPYC 7371.
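The two percentages describe the same gap from opposite directions, which is easy to mix up. A small sketch of the conversion, assuming “19.5% faster” means the AMD EPYC 7371 needs 19.5% less wall-clock time (the function names are ours, purely for illustration):

```python
def extra_time_pct(time_saved_pct):
    """Given that the faster chip needs `time_saved_pct` percent less time,
    return how much MORE time (in percent) the slower chip needs."""
    fast_time = 1.0 - time_saved_pct / 100.0   # faster chip's time, slower chip = 1.0
    return (1.0 / fast_time - 1.0) * 100.0


def time_saved_pct(extra_pct):
    """Inverse: given that the slower chip needs `extra_pct` percent more time,
    return how much LESS time (in percent) the faster chip needs."""
    slow_time = 1.0 + extra_pct / 100.0        # slower chip's time, faster chip = 1.0
    return (1.0 - 1.0 / slow_time) * 100.0


if __name__ == "__main__":
    print(f"19.5% less time  -> {extra_time_pct(19.5):.1f}% more time for the slower chip")
    print(f"24% more time    -> {time_saved_pct(24.0):.1f}% less time for the faster chip")
```

Under that reading, the two figures in the paragraph above agree with each other to within rounding.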

That is an absolutely enormous gain within the same generation. During the Intel Xeon E5 era, we sometimes saw 100MHz increments for “refresh” mid-cycle chips that would add 3-5% more performance in a line. AMD is giving us 20%.

A Word on SPECrate2017_int_base AMD EPYC 7371

We seldom publish the results from our SPEC CPU2017 testing. Server vendors and CPU vendors have teams that optimize their platforms using AOCC, icc, and other compilers for their official runs. We understand this. We typically use gcc, which yields lower results, and companies do not want those results to be found in searches and compared to the official results of their competitors.

On the other hand, teams that buy 16 core Windows Servers often use SPECrate2017_int_base as a metric for RFP criteria. We are going to address this by simply giving a percentage delta. The AMD EPYC 7371 was about 19% faster than the AMD EPYC 7351 in our gcc SPECrate2017_int_base runs. Putting that number into context, it is in line with our other results, so we feel that when official AMD EPYC 7371 numbers are published, they will show a significant speedup versus the AMD EPYC 7351.

Given what we have seen with our gcc numbers, and how they correlate to vendors’ official runs, we expect official SPECrate2017_int_base AMD EPYC 7371 figures will be in the 96 +/- 8% per socket range. That will put it above the Intel Xeon Gold 6142 and Gold 6142M (generally 85-90 per CPU) competition as the fastest 16 core CPUs on the market by a safe margin.
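As a rough sanity check on that range, here is a small sketch of the arithmetic. It makes three assumptions that are ours, not official figures: that “96 +/- 8%” means 8% of 96 on either side, that the 165 two-socket AMD EPYC 7351 result cited by a reader in the comments below can simply be halved to get a per-socket figure, and that the 19% gcc delta carries over unchanged to vendor-optimized runs.

```python
def pct_range(center, pct):
    """Return (low, high) for center +/- pct percent of center."""
    delta = center * pct / 100.0
    return center - delta, center + delta


def scale_by_pct(value, pct):
    """Increase value by pct percent."""
    return value * (1.0 + pct / 100.0)


if __name__ == "__main__":
    low, high = pct_range(96.0, 8.0)
    print(f"expected per-socket range: {low:.1f} to {high:.1f}")

    # ASSUMPTION: halve the published 2x EPYC 7351 result cited in the comments.
    epyc_7351_per_socket = 165.0 / 2.0
    # ASSUMPTION: apply the 19% gcc delta directly to the official figure.
    projected = scale_by_pct(epyc_7351_per_socket, 19.0)
    print(f"7351 per socket: {epyc_7351_per_socket:.1f}, projected 7371: {projected:.1f}")
    print("projection inside range:", low <= projected <= high)
```

This is only a back-of-the-envelope projection. Official submissions depend on compiler, platform, and tuning choices that a flat percentage cannot capture.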

Again, if you are issuing an RFP, you should wait for official numbers from systems vendors, but in Q1 2019 as vendors release their official benchmark figures for the AMD EPYC 7371, the 16 core hierarchy will change with AMD atop all of today’s x86 16-core per socket offerings.

Next, we are going to discuss power consumption before giving some of our market positioning thoughts and concluding our review.

On page 4 how do I know which terminal is for which processor?

I started reading and I was like whoa baby this is a 5 page CPU review why? Then I got into it at lunch and I know why. You’re right on the impact. That per-core performance is why we haven’t moved our Windows Hyper-V cluster to EPYC or even started to test it there.

You didn’t really mention it, but the clock speed also helps licenses in VMs. Maybe that’s obvious, but maybe to some it isn’t.

For SQL server you’re right that there’s other chips that might be better but those are extremely targeted products. It’s like EPYC’s first parts covered 75% of the market. These get them 20% more. Then there’s 5% that Intel still has better parts for.

Randy Bostrom if you look at the prompt text in those screenshots you’ll kick yourself. It’s a long review. It’s taken me 40 minutes to read.

CAN SOMEONE GET THESE GUYS GOLD 6142 CHIPS PLEASE

It can’t be that hard! Make it happen. @Intel if you don’t we’re gonna say you’re chicken.

I read STH reviews for “it is not released in a vacuum” phrase. What a peculiar feeling it triggers… Especially today, when it got paraphrased. And with a typo!

Patrick,

Why would you not have included the 7351P CPU into the “16 Core CPU Market” grid?

It is ~$400 cheaper than the 7351 CPUs, while providing (IIRC) the same performance. When talking about core-based licensing optimization it seems that this is the CPU model that is the “one to beat”.

{So much so that AMD really needs to make sure that they offer a 7371P variant, to help lock up that licensing market}

I’m with BinkyTO when is there going to be a 7371P version? I know what I want for Christmas. I’ll pay the power man.

It’s good to see AMD’s finally getting serious about frequency optimized. Maybe it’s better called performance per core optimized but that’s why we couldn’t use the EPYC that’s out there. Chips looked cheap but they’d cost too much for us to license servers for our environments. Your analysis is spot on.

6142 max all-core turbo is 3.3 GHz on 16 cores at around $2,950

Typo: Standard instead of Stanard

The base Windows Server 2019 license for Windows Server 2019 Stanard and Datacenter are 16 cores which

We we’re having this exact conversation in the office last week. We have about 40 Windows DC hosts. We’re RAM limited at 16C and 768GB. We want to EPYC but the single core is too low. This will fix

When in Q1?

That p5 SPEC CPU2017 here’s official data since I didn’t get it on the first read through

2x Gold 6130 164 https://www.spec.org/cpu2017/results/res2017q4/cpu2017-20171114-00735.html

2x EPYC 7351 165 https://www.spec.org/cpu2017/results/res2018q4/cpu2017-20180918-08912.html

2x Gold 6142 178 https://www.spec.org/cpu2017/results/res2017q4/cpu2017-20171211-01573.html

If Gold 6130 to 6142 is up to 500mhz more. EPYC 7351 to EPYC 7371 is 700MHz more, and more at the top single thread speed, then EPYC 7371 is going to do 185+. That wasn’t clear from the article on p5 but when I read the words then I looked at Cisco’s official results it make perfect sense. Maybe that’ll help someone else out

If they can do 4GHz at 225w crank it up. Intel’s been on this low power stuff for years but our data centers sell us metered power plus a cooling adder. 1 less server per rack would pay for 50-60w per server real quick.

If this comes in under $2k it’ll be a great value but you still have to get people to welcome AMD again

Hopefully sth to go against the 6144 next.

I love STH for this review and analyst piece combined in one. They’re like analysts who have hands-on experience not just theoretical exposure.

For us, this is an important launch but not because we’re going to buy it. Our IT org will only buy second gen products. Rome would’ve been considered first gen for our Windows cluster. I’m going to put these in a RFP draft when they’re on the Dell site so I can get them nixed for being first gen. That’ll give me documentation to show Rome is a second gen product later in 2019. Next-level thinking here.

I was hoping for some 7nm EPYC by end of Q1/2019.

AMD has a once in a lifetime chance against Intel with Milan, they better hurry up to enjoy it as long as possible.

I buy some EPYC 7371 today for around 1550 USD. This price is absolutely fantastic and intel have nothing competitive.

What cooler did you guys use? The Tyan heatsink says it doesn’t support the 200 watt processor.