ASUS RS500A-E9-RS4-U Power Consumption

We test rackmount power in our data center using 208V 30A Schneider Electric APC PDUs. We measured power using the AMD EPYC 7401P, 128GB of RAM, and dual SSDs, and saw some great figures:

- Idle: 82W

- 70% Load: 219W

- 100% Load: 253W

- Peak: 288W

If you are comparing this to a dual-socket Intel Xeon Silver system, as an example, you will have lower power consumption and only need a single socket in the ASUS RS500A-E9-RS4-U. That lowers operating costs and helps service providers maximize rack density.
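To put the density and operating-cost point in rough numbers, here is a minimal Python sketch that budgets servers per PDU circuit from the peak figure and estimates annual energy use from the 70% load figure. Only the wattages and the 208V 30A rating come from our testing. The 80% continuous-load derating, the single-phase circuit, the unity power factor, the PUE parameter, and the electricity price are illustrative assumptions, and the function names are hypothetical.

```python
import math

# Measured figures from this review, in watts.
MEASURED_W = {"idle": 82, "load_70": 219, "load_100": 253, "peak": 288}


def servers_per_circuit(peak_w, volts=208.0, amps=30.0, derate=0.8):
    """Servers that fit on one circuit when budgeting for peak draw.

    Assumptions (not from the review): a single-phase circuit, a power
    factor close to 1, and an 80% continuous-load derating.
    """
    usable_w = volts * amps * derate
    return math.floor(usable_w / peak_w)


def annual_kwh(avg_w, hours=8760):
    """Energy used in one year at a constant average draw."""
    return avg_w * hours / 1000.0


def annual_cost(avg_w, price_per_kwh, pue=1.0):
    """Yearly electricity cost; pue scales for cooling and other overhead."""
    return annual_kwh(avg_w) * pue * price_per_kwh


if __name__ == "__main__":
    print("Servers per circuit:", servers_per_circuit(MEASURED_W["peak"]))
    print("kWh per year at 70% load:", round(annual_kwh(MEASURED_W["load_70"]), 2))
    # 0.12 is a placeholder price per kWh, not a quoted rate.
    print("Cost per year at 70% load:", round(annual_cost(MEASURED_W["load_70"], 0.12), 2))
```

Under those assumptions, a single 208V 30A circuit budgets for 17 of these servers at the 288W peak. A three-phase PDU or a different derating policy would change that count, so treat it as a starting point rather than a deployment plan.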

Note that these results were taken using a 208V Schneider Electric / APC PDU at 17.8°C and 71% RH. Our testing window shown here had a ±0.3°C and ±2% RH variance. The figures are certainly higher than those of the single-socket Intel Xeon Silver line, but with that extra power consumption, ASUS and AMD are delivering a more expandable platform and more performance.

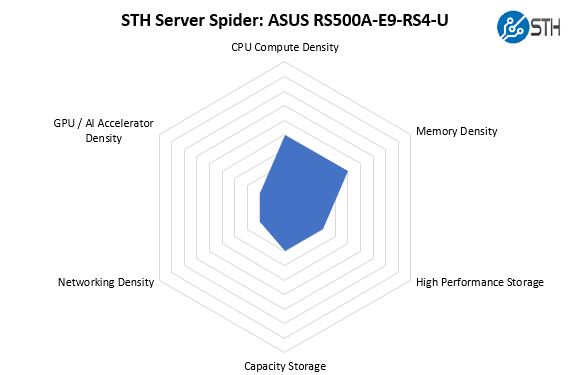

STH Server Spider: ASUS RS500A-E9-RS4-U

In the second half of 2018, we introduced the STH Server Spider as a quick reference to where a server system’s aptitude lies. Our goal is to start giving a quick visual depiction of the types of parameters that a server is targeted at.

The ability to house four 3.5″ drives gives the 1U platform more total storage capacity than some of its competitors. On the other hand, having only four NVMe drive bays limits its high-performance storage capabilities. The OCP networking helps the ASUS RS500A-E9-RS4-U, while the single PCIe x16 slot limits accelerator density.

Final Words

Overall, the ASUS RS500A-E9-RS4-U is a good first-generation AMD EPYC 7001 platform. For now, the ASUS RS500A-E9-RS4-U is showing the company’s continued commitment to a platform that incorporates industry-standard features to make it easy to deploy. We also appreciate that ASUS removes unused PCIe slots since that helps lower cost and gives more room for expansion cards. At the same time, we wish that ASUS put more of the PCIe lanes to use in the platform.

I have an RS500A-E10-RS12 in front of me right now (the 12x SFF model of the same system, while the RS4 above is the 4x LFF model). ASUS’s website and docs aren’t exactly clear on the differences between the two models, other than (obviously) using different drive sizes. The only real difference I saw in the spec sheet was a mention that you could only use LP cards in the RS12.

That’s not actually strictly accurate. The right x16 riser comes occupied with a 2x OCuLink card, connected to the 11th and 12th NVMe bays. There’s also a 3rd “riser” in the system, in the far left motherboard x16 slot. Instead of a PCIe slot, the riser has 4x OCuLink ports. Mechanically, this means that you can’t use *full*-height cards in the middle x16 riser, but you still have to use a full-height bracket. The card can be a bit taller than LP, though; the limit is around 1/2″ shorter than the FH bracket.

So, on the RS12, you have room for 1 x16 PCIe 4.0 card and 1 x16 OCP 2.0 PCIe 4.0 card, down one slot from the RS4. I had a spare 2x 10GbE OCP card on hand; it fits under the 2x OCuLink card, but there isn’t a ton of clearance.

Thanks for sharing! It would be interesting to see a review of the RS500A-E10-RS12U model, too!