At Hot Chips 33, Intel featured Mount Evans shortly after its Intel IPUs and Mount Evans ASIC presentation at Architecture Day 2021. Hopefully, we can cover a few new details on the new chip. As with other talks, we are covering this live, so please excuse typos.

Intel Mount Evans DPU IPU Arm Accelerator at Hot Chips 33

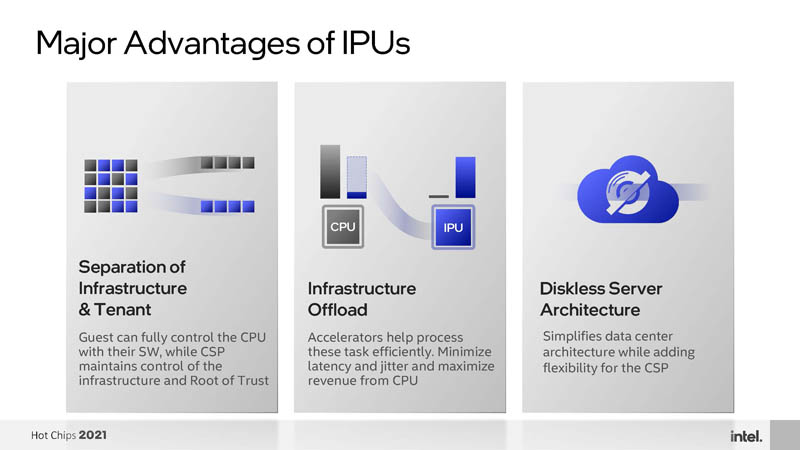

Intel cites three main advantages for its DPU IPUs: the separation of the infrastructure and application planes, the offload of infrastructure functions, and the ability to enable diskless server architectures.

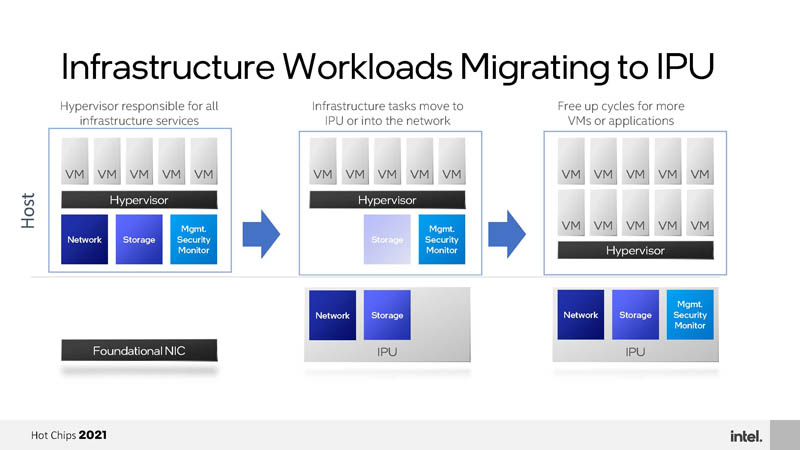

Part of the goal of Mount Evans is moving more infrastructure workloads to the ASIC rather than running them on the CPU. That frees up CPU cores that cloud service providers can sell or use for other higher-value purposes.

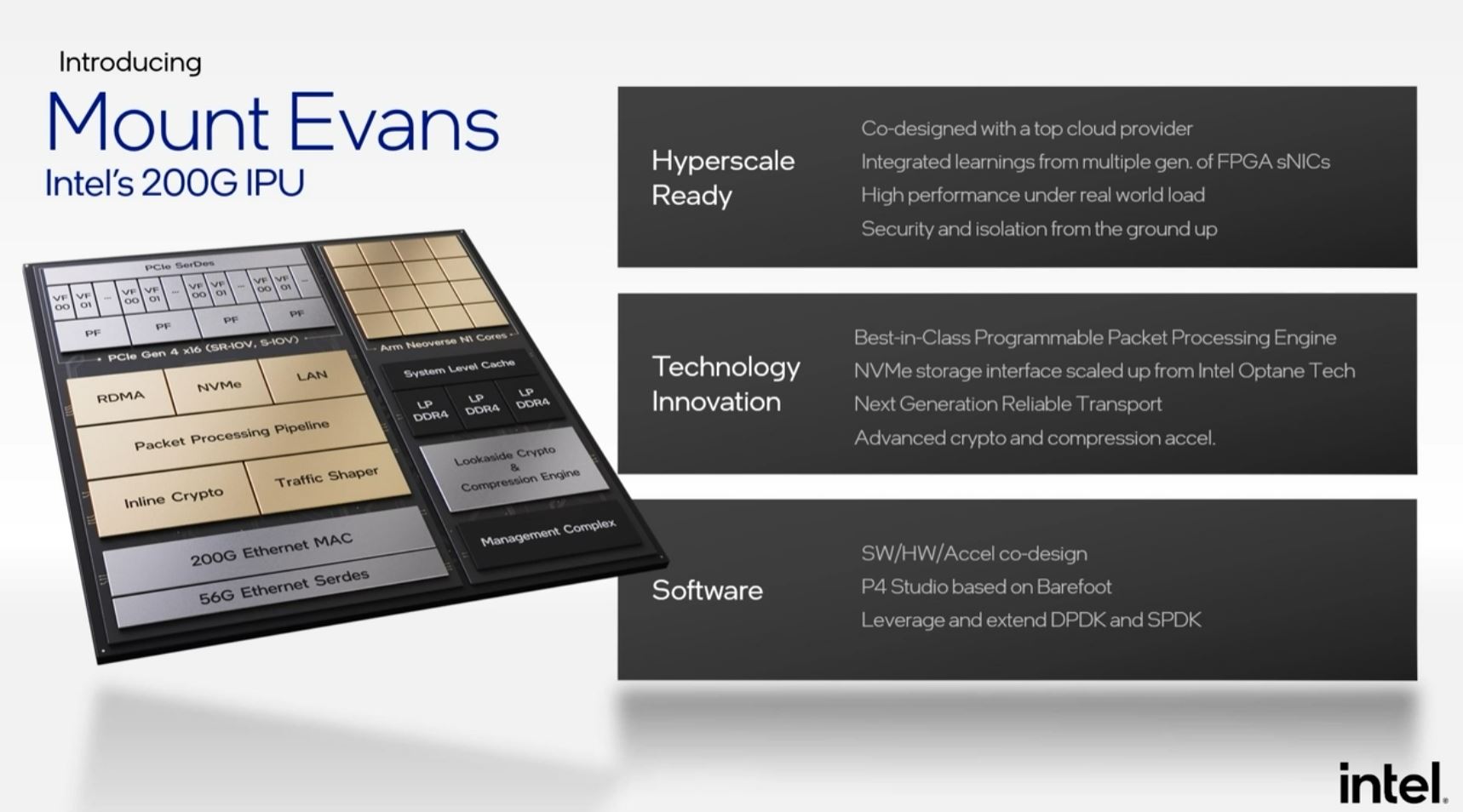

Here is the Mount Evans overview from Architecture Day 2021.

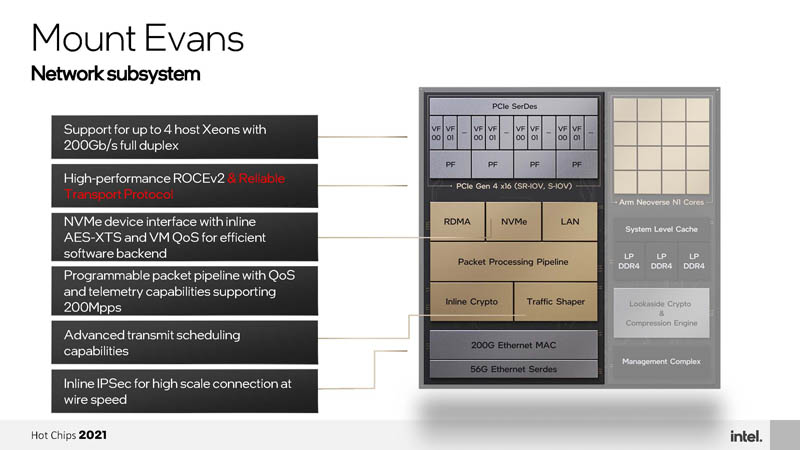

Intel has a number of new disclosures around the network subsystem (NSS), which is the data plane processing unit. As an example, the reliable transport protocol is designed to reduce long-tail latency on the network and was developed with a CSP customer.

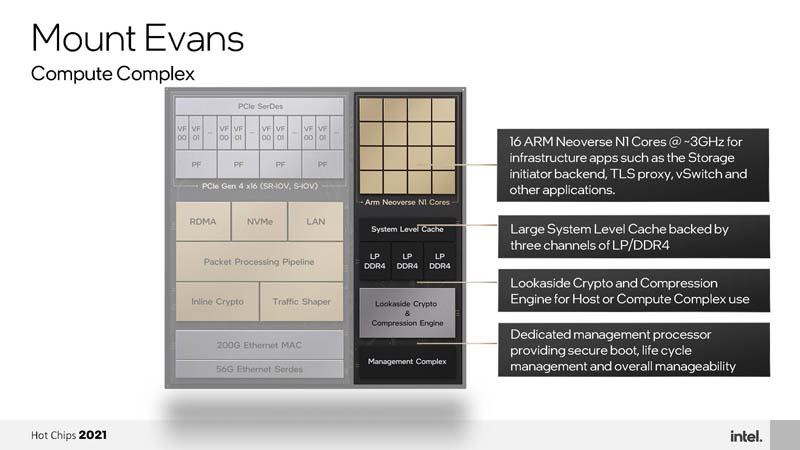

Intel also has some new disclosures on the compute complex, just a few days after Architecture Day 2021. One can see, for example, that Intel is using Arm Neoverse N1 cores at around 3.0GHz. CPU is one area where the current BlueField-2 could use a bit more performance. The 2022 NVIDIA BlueField-3 DPU will have 16x A78 cores, while the Marvell Octeon 10 will have up to 36 Neoverse N2 cores that Arm says are 40% faster on an IPC basis than N1.

Quickly here, the Management Complex has dual Arm A53 cores. These are really management processors, not designed to be application processors. A53s are commonly used for management applications.

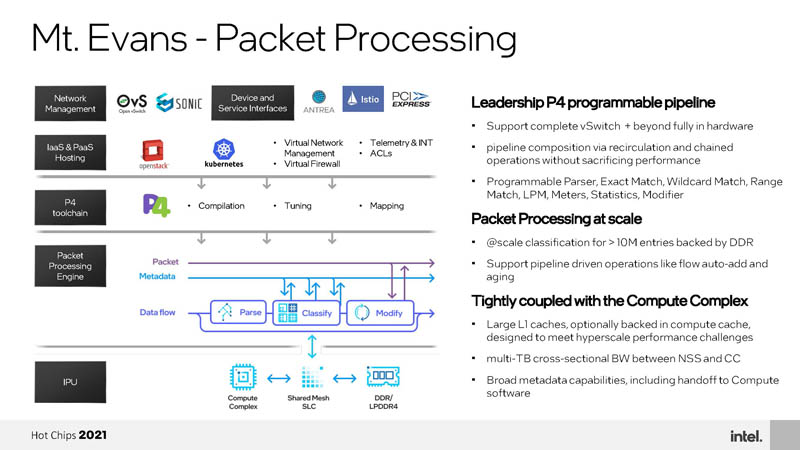

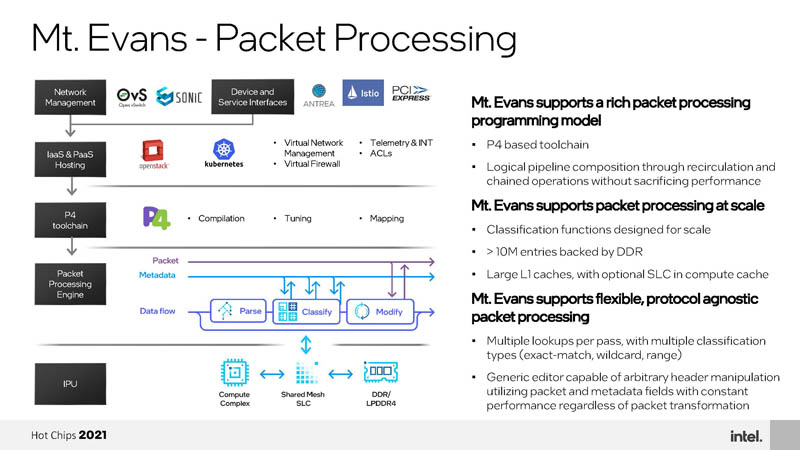

Intel has P4 from its Barefoot acquisition and uses it to offer a programmable pipeline for a full-featured vSwitch.

It can also run multiple P4 programs simultaneously, each with its own control plane.
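To make the idea of a programmable pipeline with separate control planes a bit more concrete, here is a minimal Python sketch of a match-action table, which is the core abstraction behind P4. This is a conceptual toy, not P4 syntax and not Intel's API. The names `MatchActionTable`, `forward_to`, and `drop`, along with the MAC addresses and port names, are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

Packet = Dict[str, str]
Action = Callable[[Packet], Packet]


def drop(pkt: Packet) -> Packet:
    """Hypothetical action: mark the packet as dropped."""
    return {**pkt, "egress": "drop"}


def forward_to(port: str) -> Action:
    """Hypothetical action factory: send the packet to a named port."""
    def action(pkt: Packet) -> Packet:
        return {**pkt, "egress": port}
    return action


@dataclass
class MatchActionTable:
    """Toy exact-match table: the data plane looks up a key, the control plane fills entries."""
    key_field: str
    default_action: Action = drop
    entries: Dict[str, Action] = field(default_factory=dict)

    def add_entry(self, key: str, action: Action) -> None:
        # Control-plane operation: install a rule.
        self.entries[key] = action

    def apply(self, pkt: Packet) -> Packet:
        # Data-plane operation: match on the key field, run the matching action,
        # and fall back to the default action on a miss.
        action = self.entries.get(pkt.get(self.key_field, ""), self.default_action)
        return action(pkt)


# Two independent "programs", each populated by its own control plane.
tenant_a = MatchActionTable(key_field="dst_mac")
tenant_b = MatchActionTable(key_field="dst_mac")
tenant_a.add_entry("aa:bb:cc:00:00:01", forward_to("vf0"))
tenant_b.add_entry("aa:bb:cc:00:00:01", forward_to("vf7"))

hit_a = tenant_a.apply({"dst_mac": "aa:bb:cc:00:00:01"})
hit_b = tenant_b.apply({"dst_mac": "aa:bb:cc:00:00:01"})
miss_a = tenant_a.apply({"dst_mac": "ff:ff:ff:ff:ff:ff"})
print(hit_a["egress"], hit_b["egress"], miss_a["egress"])
```

The point of the sketch is only that the same packet can be handled differently by two tables whose rules are installed independently. A real P4 pipeline adds parsers, multiple table stages, and hardware constraints that this toy ignores.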

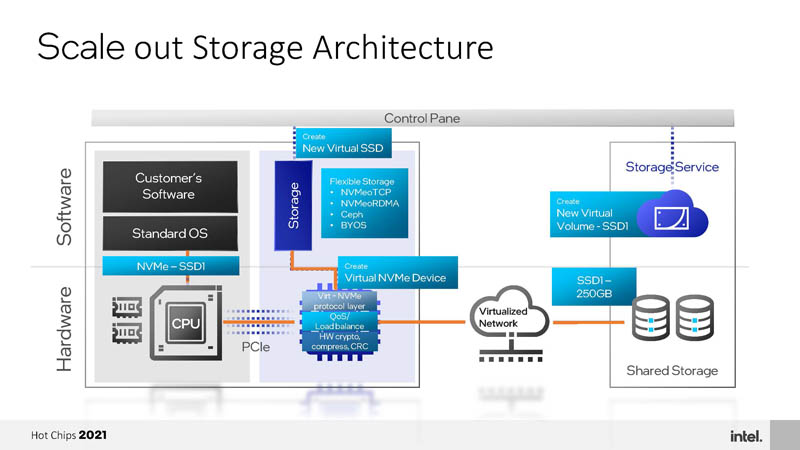

One of the key goals of Mount Evans is innovating on the storage initiator side. Intel discusses this as part of the idea of diskless servers. The goal is that the Mount Evans DPU IPU presents a virtual volume that is backed by storage on the infrastructure back-end across the network. To the server, it looks like a local NVMe device. It can be managed by the infrastructure provider, so one can do things such as manage snapshots, capacity expansion, and so forth without having to run those functions on the host CPU. The other aspect is that Mount Evans can compress data at the ASIC before pushing it over the network. Compressing and encrypting data before it goes over the network speeds up back-end transfers and reduces bandwidth load.
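As a rough software analogy for why compressing before the data leaves the card helps, here is a small Python sketch using the standard `zlib` module. It is not how Mount Evans implements compression. The 4096-byte block size and the sample data are assumptions for illustration, and random bytes are used as a stand-in for already-encrypted data.

```python
import os
import zlib

BLOCK_SIZE = 4096  # hypothetical block size, chosen only for illustration


def wire_bytes(block: bytes) -> int:
    """Return how many bytes would cross the network after zlib compression."""
    return len(zlib.compress(block, level=6))


# Repetitive data standing in for a compressible storage block.
compressible = (b"log line: status=ok\n" * 256)[:BLOCK_SIZE]
# Random bytes standing in for data that has already been encrypted.
ciphertext_like = os.urandom(BLOCK_SIZE)

compressible_size = wire_bytes(compressible)
ciphertext_like_size = wire_bytes(ciphertext_like)

print(f"compressible block:    {BLOCK_SIZE} -> {compressible_size} bytes")
print(f"ciphertext-like block: {BLOCK_SIZE} -> {ciphertext_like_size} bytes")
```

The compressible block shrinks substantially, while the random block does not shrink at all. That is the usual reason to compress first and encrypt second: once data looks random, there is little left for a compressor to remove.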

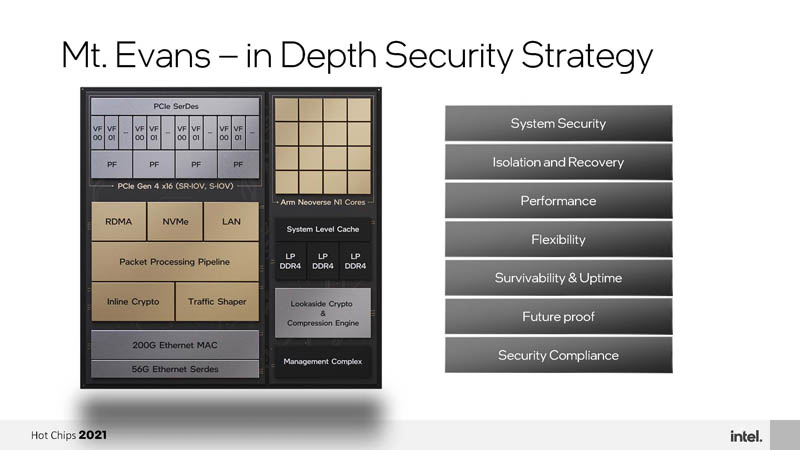

Mount Evans has six locations on the die to process cryptography. Intel also has features such as malicious host driver detection. Overall, Intel has put a lot of thought into security.

There is clearly a lot going on here.

Final Words

Mount Evans is designed to work within the PCIe power envelope, but Intel did not give power figures. Still, we are very excited to see Mount Evans hands-on. We have stacks of NVIDIA BlueField-2 DPUs here at STH, so it would be great to get these online as well. Intel has a bit of an advantage using P4 and QAT, the latter of which has been around since 2013 (albeit upgraded for Mount Evans). There is something to be said for using well-known programming models in a new class of devices.

Was there info on power consumption? There is 75W from the slot, but judging from the BF2s, you may need an additional power connector. Our projects limit us to using the slot only, but it would be nice to see where power consumption and cooling requirements are going for these cards.