NVIDIA is making Multipath Reliable Connection (MRC), a next-generation RDMA transport protocol proven at scale on NVIDIA Spectrum-X Ethernet hardware, available to the broader industry through the Open Compute Project. The move gives NVIDIA a more open story around Spectrum-X Ethernet as AI training clusters push into larger multi-rack and gigascale deployments.

Why MRC on Spectrum-X Ethernet Matters

MRC enables a single RDMA connection (RoCEv2) to distribute traffic across multiple network paths simultaneously, giving large-scale AI training fabrics improved throughput, better load balancing, and higher availability. MRC finds the fastest available path and switches dynamically when congestion or failures appear, giving operators more control over data flow between GPUs. Packet spraying, combined with path-aware failure handling, helps ensure data can traverse large cluster networks quickly and reliably.
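To make the idea concrete, here is a minimal Python sketch of one logical connection spraying packets across several paths while skipping any path that is marked failed or congested. It is a toy model, not MRC's actual algorithm: the `Path` and `MultipathConnection` classes, the 0-to-1 congestion scale, the `congestion_limit` threshold, and the round-robin selection are all assumptions made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Path:
    """One network path between two endpoints (hypothetical model)."""
    path_id: int
    congestion: float = 0.0  # assumed scale: 0.0 = idle, 1.0 = saturated
    healthy: bool = True


@dataclass
class MultipathConnection:
    """Toy model of a single connection that sprays packets over many paths."""
    paths: list[Path]
    congestion_limit: float = 0.8  # assumed threshold, not from any spec
    _cursor: int = field(default=0, init=False)

    def usable_paths(self) -> list[Path]:
        # Skip failed paths and paths at or above the congestion limit.
        return [p for p in self.paths
                if p.healthy and p.congestion < self.congestion_limit]

    def pick_path(self) -> Path:
        usable = self.usable_paths()
        if not usable:
            raise RuntimeError("no usable path")
        # Plain round-robin over whatever is usable right now.
        path = usable[self._cursor % len(usable)]
        self._cursor += 1
        return path

    def spray(self, num_packets: int) -> dict[int, int]:
        """Return how many packets were sent on each path id."""
        counts = {p.path_id: 0 for p in self.paths}
        for _ in range(num_packets):
            counts[self.pick_path().path_id] += 1
        return counts


conn = MultipathConnection([Path(i) for i in range(4)])
before = conn.spray(8)          # all four paths share the load

conn.paths[1].healthy = False   # simulate a link failure
conn.paths[2].congestion = 0.9  # simulate a congested path
after = conn.spray(8)           # traffic shifts to the two remaining paths
```

In this sketch the path decision is re-evaluated for every packet, so a failure or congestion signal takes effect on the very next send rather than waiting for the connection to be re-established.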

At the scale of modern AI factories, even brief network disruptions can slow or interrupt an entire training job. MRC addresses this through hardware-accelerated load balancing across all available paths, dynamic congestion avoidance that sustains high bandwidth by rerouting traffic in real time, intelligent retransmission for rapid recovery from data loss, and microsecond-level failure bypass that detects network path failures at hardware speed. Fine-grained traffic visibility gives administrators control over routing, simplifying operations at scale.
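The retransmission and failure-bypass ideas can be sketched in a few lines of Python. The example below assumes a sender that remembers which path carried each packet and resends only the unacknowledged ones, steering them away from failed paths. The function name `plan_retransmits`, its arguments, and the round-robin choice are hypothetical; the article does not describe how MRC implements this internally.

```python
from __future__ import annotations


def plan_retransmits(
    sent_on: dict[int, int],   # sequence number -> path id used originally
    acked: set[int],           # sequence numbers the receiver confirmed
    path_ids: list[int],       # every path the connection knows about
    failed: set[int],          # paths currently marked as failed
) -> dict[int, int]:
    """Map each unacknowledged sequence number to a path for its resend."""
    healthy = [p for p in path_ids if p not in failed]
    if not healthy:
        raise RuntimeError("no healthy path left")

    plan: dict[int, int] = {}
    turn = 0
    for seq in sorted(sent_on):
        if seq in acked:
            continue  # delivered already, nothing to resend
        # Prefer a path other than the one that lost the packet;
        # fall back to any healthy path if there is no alternative.
        candidates = [p for p in healthy if p != sent_on[seq]] or healthy
        plan[seq] = candidates[turn % len(candidates)]
        turn += 1
    return plan


# Six packets sprayed over three paths; path 1 fails and drops packets 1 and 4.
sent_on = {0: 0, 1: 1, 2: 2, 3: 0, 4: 1, 5: 2}
plan = plan_retransmits(sent_on, acked={0, 2, 3, 5},
                        path_ids=[0, 1, 2], failed={1})
```

Only the two lost packets are resent, and neither goes back onto the failed path, which is the behavior the paragraph above describes at a high level.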

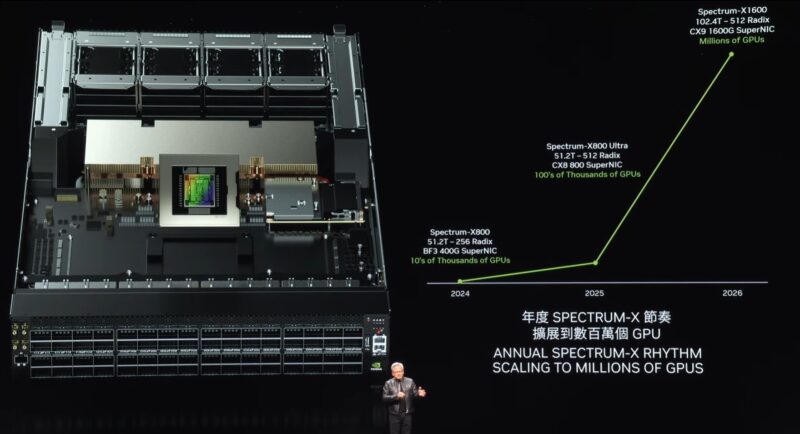

Another key innovation tied to MRC on Spectrum-X Ethernet is support for multiplane network architectures. A multiplane network consists of multiple independent network fabrics, or “planes,” each providing an alternate communication path between GPUs. Spectrum-X Ethernet’s multiplane capability adds accelerated load balancing across these planes, boosting resiliency and scale without sacrificing performance. This architecture keeps latencies predictably low while scaling to hundreds of thousands of GPUs, a requirement that is becoming standard as frontier LLM training runs grow larger and more complex. Multiplane designs are now common in large-scale clusters.
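As a rough illustration of why independent planes help with resiliency, the Python sketch below splits a transfer evenly across whichever planes are currently up. The function `split_across_planes` and the even-split policy are assumptions for this example; real load balancing across planes would also weigh congestion and link speed.

```python
from __future__ import annotations


def split_across_planes(total_bytes: int, plane_up: list[bool]) -> list[int]:
    """Return the number of bytes to send on each plane (0 for planes that are down)."""
    active = [i for i, up in enumerate(plane_up) if up]
    if not active:
        raise RuntimeError("all planes are down")

    base, extra = divmod(total_bytes, len(active))
    shares = [0] * len(plane_up)
    for rank, plane in enumerate(active):
        # Hand out the remainder one byte at a time to the first planes.
        shares[plane] = base + (1 if rank < extra else 0)
    return shares


all_up = split_across_planes(1000, [True, True, True, True])
one_down = split_across_planes(1000, [True, False, True, True])
```

With four planes up, each carries a quarter of the transfer. When one plane goes down, the same transfer completes over the remaining three at a lower per-plane share of the total capacity, rather than stalling.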

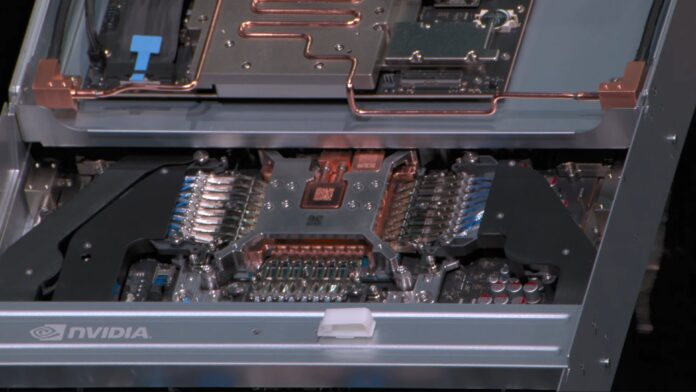

MRC matters most when operators control the infrastructure themselves, or when they want deeper control over hardware running elsewhere. Running on dedicated or owned hardware gives operators the ability to use custom protocols, shape routing behavior, and deploy telemetry that matches their specific cluster architecture. If the network is just a leased black box behind rented capacity, there is less room for that kind of deep optimization. Spectrum-X Ethernet already uses RoCEv2 and runs across Spectrum-4 and Spectrum-5 generations, so these capabilities are not only a future standards discussion. They are already deployed and operating at scale.

Spectrum-X Ethernet and MRC are already deployed with OpenAI at major hyperscalers, including Oracle and Microsoft. That is what makes this more than just a whitepaper. MRC is being discussed as a capability already operating in some of the largest AI infrastructure environments.

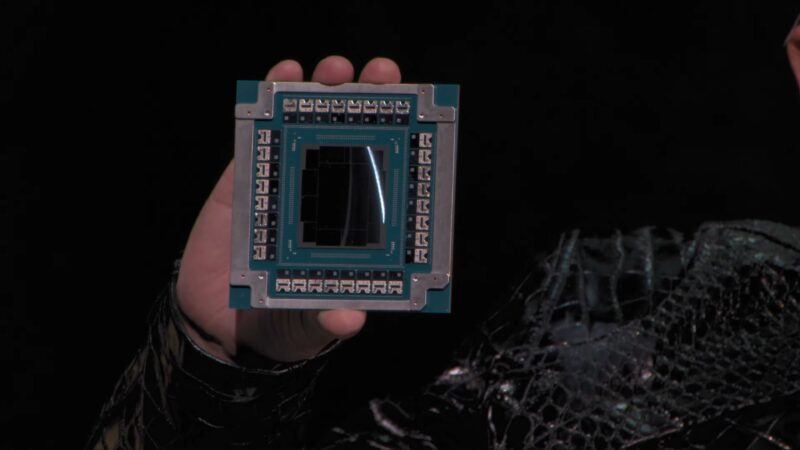

MRC is not a closed NVIDIA technology. NVIDIA collaborated on its development with AMD, Broadcom, Intel, and major cloud providers. The protocol is now released as an open specification through the Open Compute Project, allowing the broader industry to build interoperable, Spectrum-X-Ethernet-compatible networking stacks. Both Spectrum-X Ethernet Adaptive RDMA and MRC run natively across NVIDIA SuperNICs and Spectrum-X Ethernet switches, giving customers the flexibility to choose the transport protocol that best fits their workload.

On the competitive front, the Ultra Ethernet Consortium has generated significant industry buzz around open AI networking standards. Spectrum-X Ethernet, meanwhile, is already using RoCEv2 with MRC deployed across multiple generations of Spectrum-X Ethernet switches. NVIDIA’s push to open the protocol specification is another step in its broader open strategy, while still keeping Spectrum-X Ethernet as the optimized hardware and software platform for deploying it. Opening up the protocol suggests that NVIDIA’s networking team is very confident in what it has built with Spectrum for OpenAI and others.

Final Words

The important part of MRC is that NVIDIA is trying to make Spectrum-X Ethernet feel less like a proprietary alternative to Ethernet and more like a production path for Ethernet-based AI fabrics. For customers running large-scale training workloads, hardware-accelerated load balancing, dynamic congestion avoidance, and microsecond failure recovery address real cluster problems. NVIDIA has deployments and hardware today, but the open-specification angle is what makes this more than just another Spectrum-X Ethernet feature announcement.