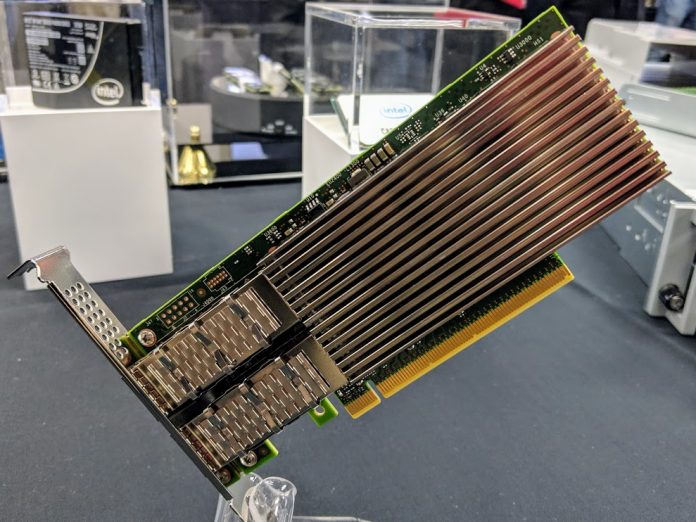

As part of the big Intel launch on April 2, 2019, Intel is getting back in the higher-end networking game with the launch of the Intel Ethernet 800 series 100GbE NIC family. The Intel Ethernet 800 series was formerly called Columbiaville and is Intel’s answer to the 100GbE segment that the company has been largely absent from. We noted in our A Product Perspective on an Intel Bid for Mellanox piece that Intel needed better 100GbE assets. Now that NVIDIA seems to have outbid Intel for Mellanox, the Intel Ethernet 800 series is what the company has for the market.

For our avid STH readers, this is the series we saw at OCP Summit 2019 in New Intel 100GbE Adapters Shown and Demonstrated. We had already been briefed, but could not share what we saw. Now we can.

Intel Ethernet 800 Series 100GbE NIC

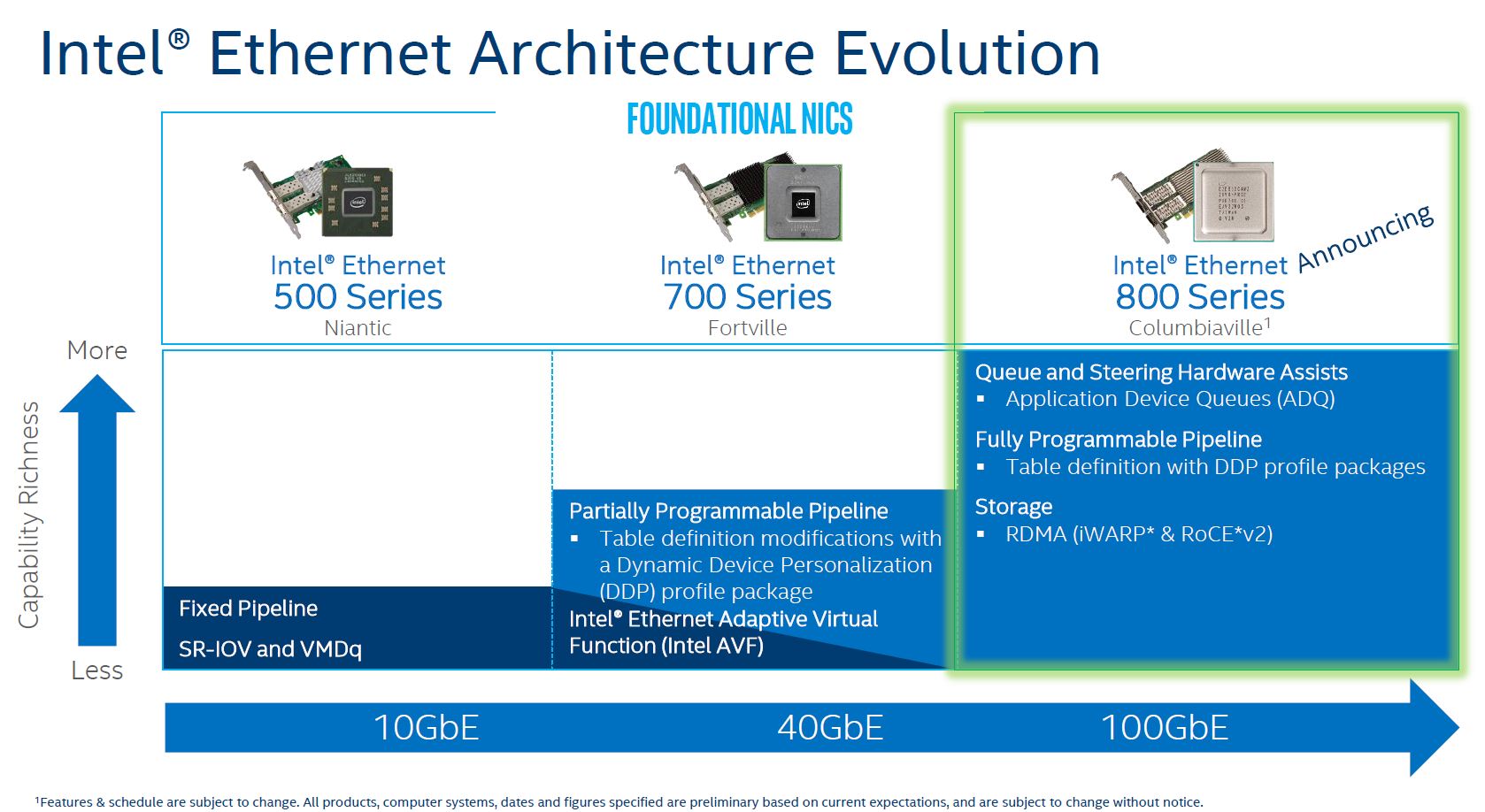

With the new Intel Ethernet 800 Series 100GbE NIC, codenamed “Columbiaville,” the company is adding several new features alongside the headline feature: faster Ethernet. You may recall that when the 40GbE “Fortville” generation came out, it sipped power, mostly because it was a simple NIC compared to what others were putting out in the 40GbE generation. Those lower offload capabilities are perhaps why Intel calls them “Foundational NICs” here.

The 700 and 800 series share a common Virtual Function Driver (Intel AVF) to help ease the transition. Other key features are Application Device Queues (ADQ) and the Dynamic Device Personalization (DDP) package, both of which we cover in more depth later in this piece.

One feature that Intel did not go into great depth on in its briefing was the storage line that says: RDMA iWARP and RoCE v2. Intel supported iWARP but not RoCE for a long time, even when most of the industry had RoCE support. That is changing with the Intel Ethernet 800 Series, as the company seems to be caving to the popularity of that protocol.

Intel Ethernet 800 Series Application Device Queues (ADQ)

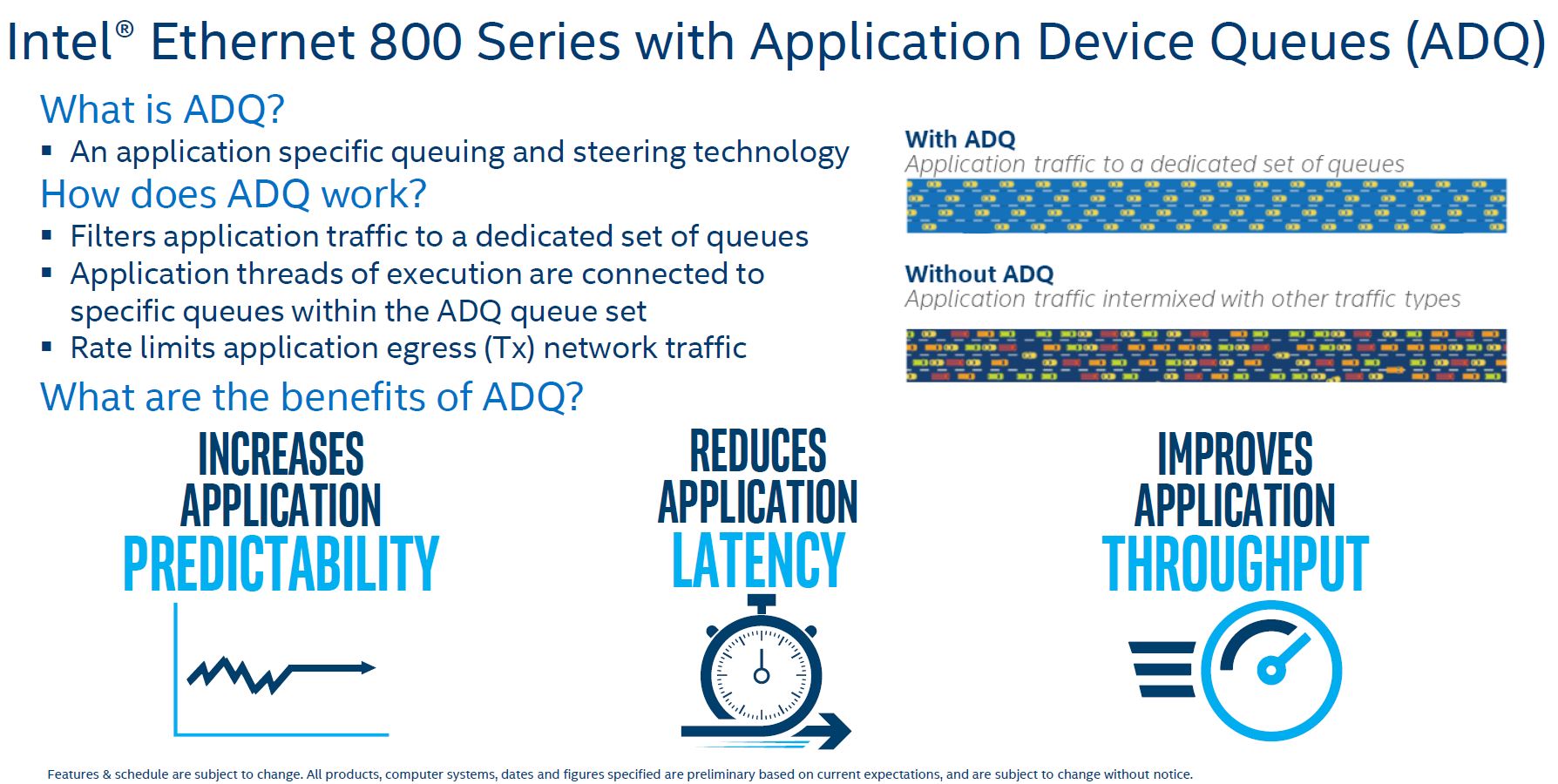

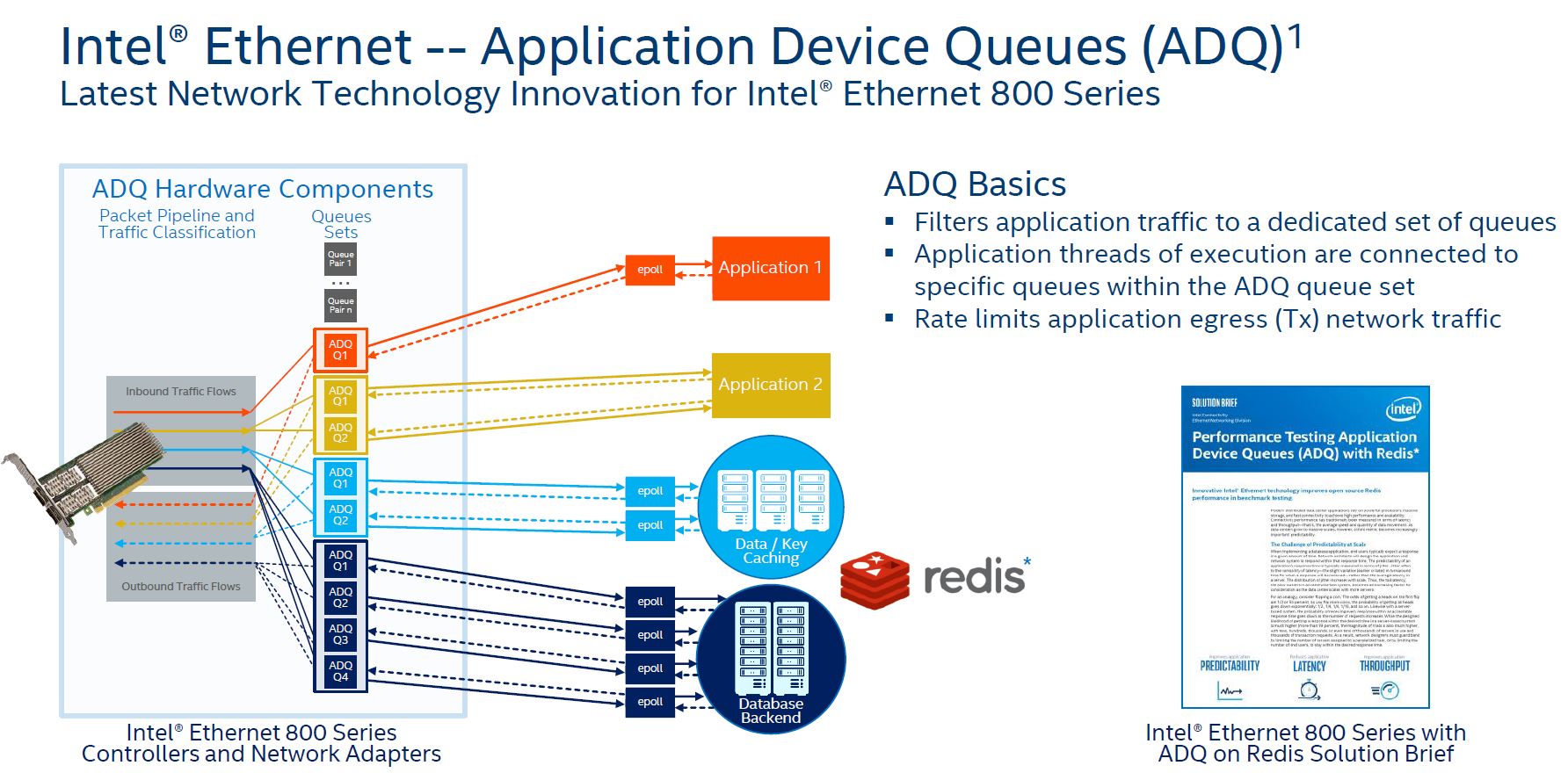

One of the headline features for the Intel Ethernet 800 Series is Application Device Queues, or ADQ. ADQ went upstream in Linux 4.19, so people can take advantage of it almost immediately unless they are on a distribution with an older kernel.

Essentially, the hardware ADQ allows the NIC to prioritize application traffic and ensure QoS.
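To make the idea of dedicated application queues concrete, here is a toy Python model. It is only a sketch of the concept and not Intel's driver or hardware logic: the queue layout, the `QueueSet` type, and the `select_queue` function are all hypothetical names invented for illustration. The only real-world detail is that 6379 is Redis' default TCP port.

```python
# Toy model of application-dedicated queues; NOT Intel's driver or hardware logic.
from dataclasses import dataclass
from zlib import crc32


@dataclass(frozen=True)
class QueueSet:
    name: str
    first_queue: int
    count: int


# Hypothetical layout: 4 shared queues, then 4 queues reserved for Redis.
DEFAULT_SET = QueueSet("default", first_queue=0, count=4)
APP_SETS = {6379: QueueSet("redis", first_queue=4, count=4)}  # 6379: Redis default port


def select_queue(src_ip: str, src_port: int, dst_port: int) -> int:
    """Pick a receive queue: a dedicated set if the port matches, shared otherwise."""
    queue_set = APP_SETS.get(dst_port, DEFAULT_SET)
    # Hash the flow so one connection always lands on the same queue in its set.
    flow_hash = crc32(f"{src_ip}:{src_port}:{dst_port}".encode())
    return queue_set.first_queue + flow_hash % queue_set.count


if __name__ == "__main__":
    print("redis flow ->", select_queue("10.0.0.1", 40000, 6379))
    print("other flow ->", select_queue("10.0.0.1", 40000, 80))
```

In this model, Redis traffic never shares a queue with other flows, which is the intuition behind the more predictable latency Intel is claiming.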

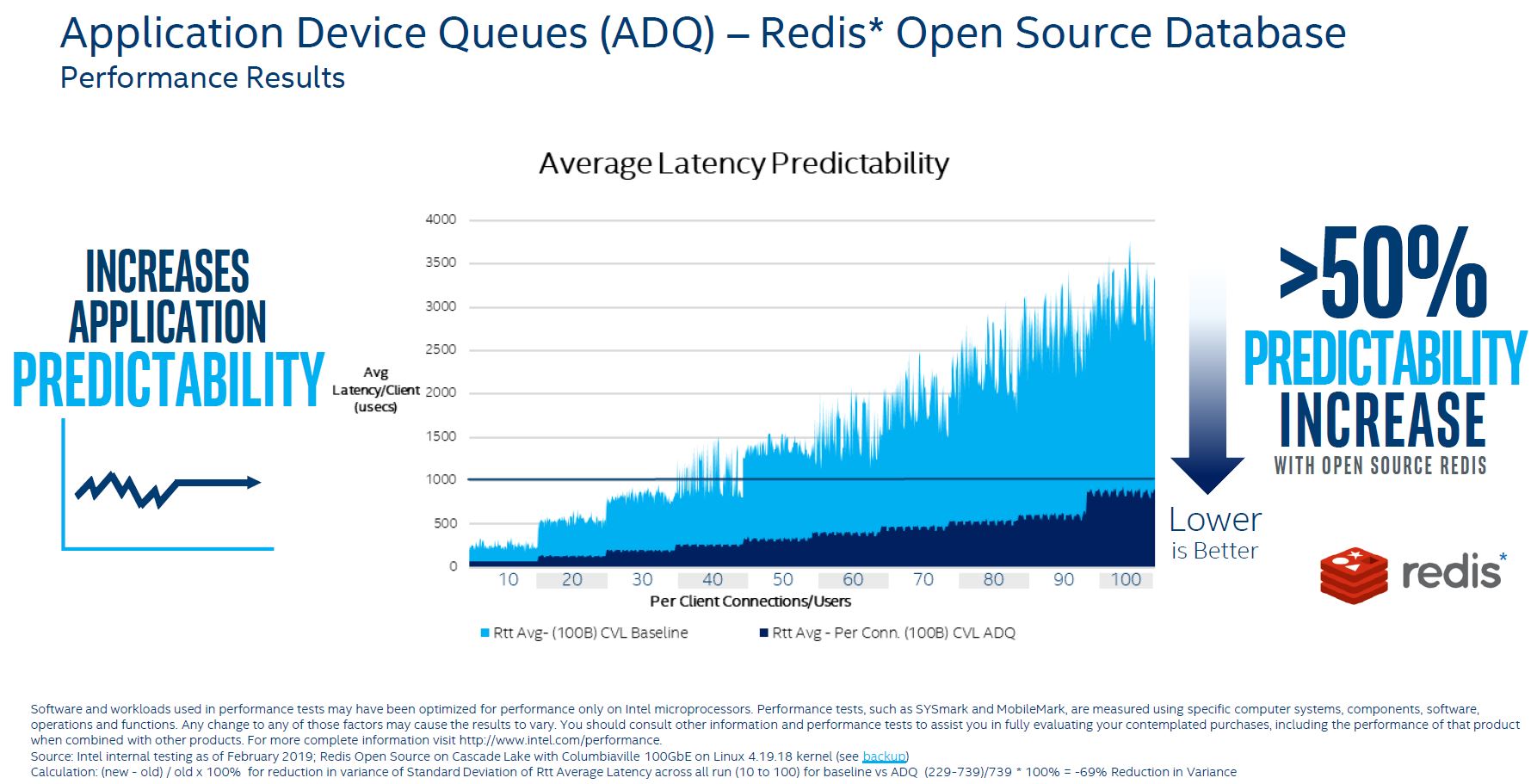

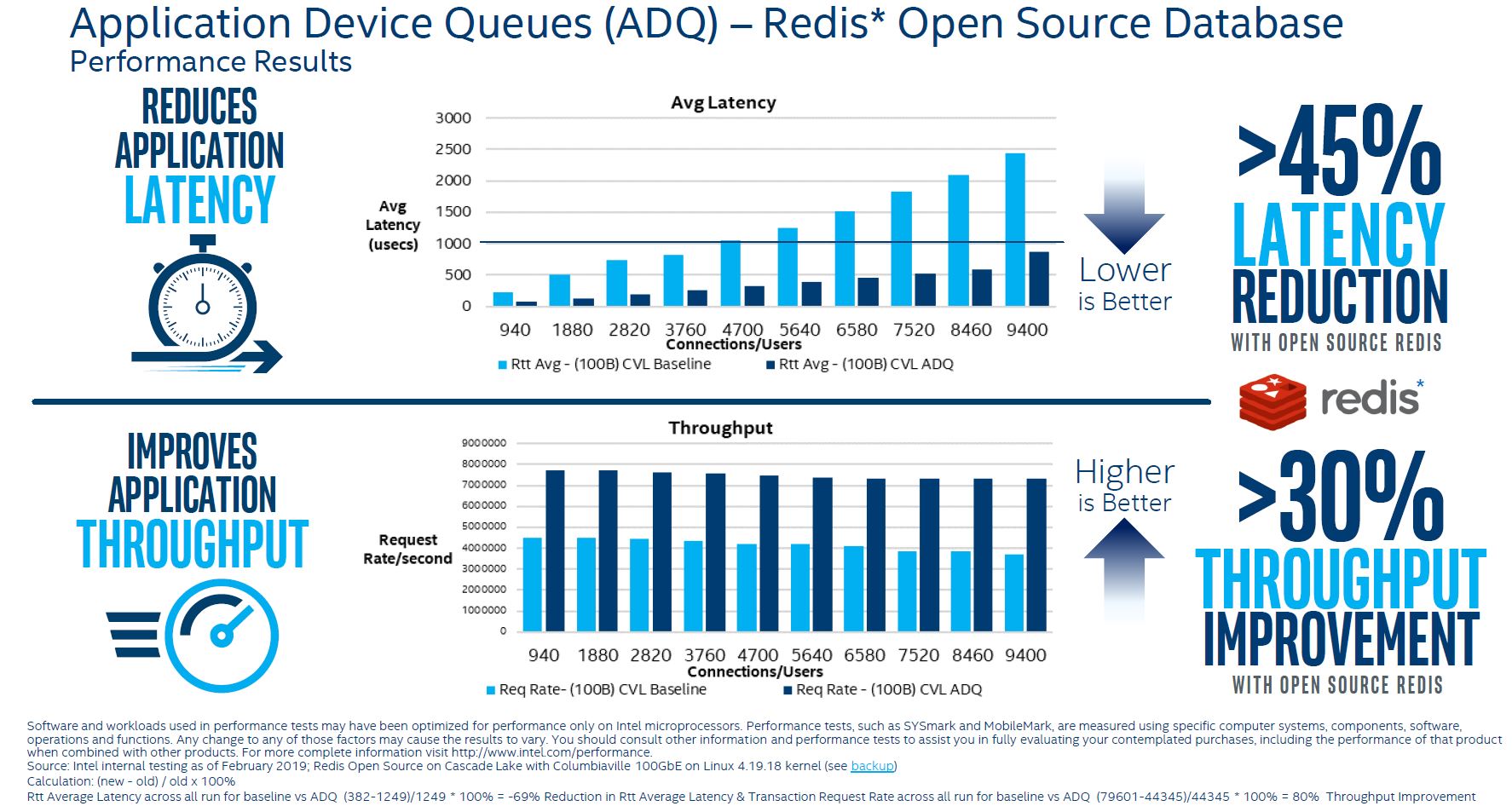

Redis is a popular application and one that Intel is pushing heavily in its April 2019 launch. Here, Intel is showing lower average latency for Redis using ADQ.

Again, Intel shows lower latency and higher throughput with ADQ.

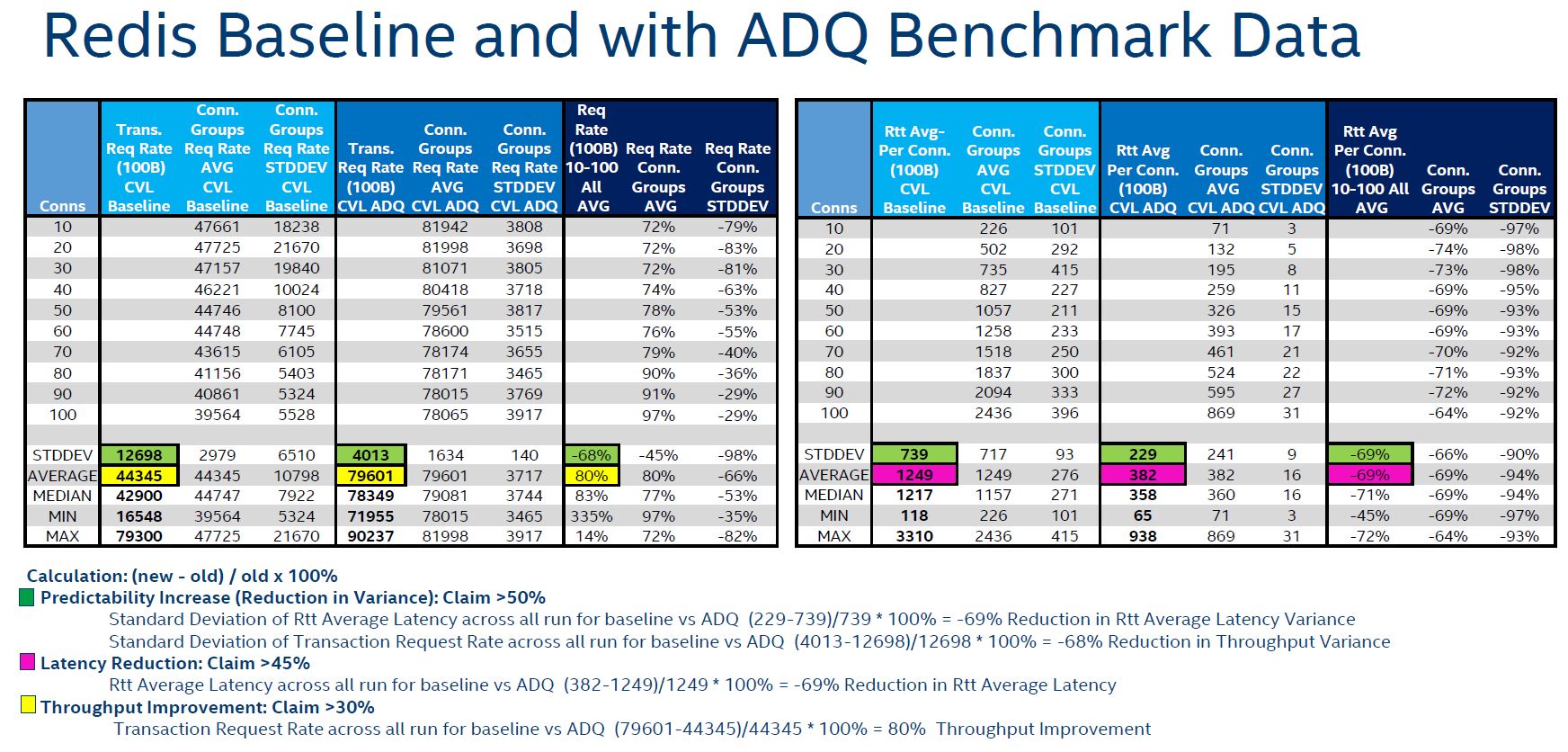

Here is the raw data the company is showing.

This is a good step for Intel, which needs these features.

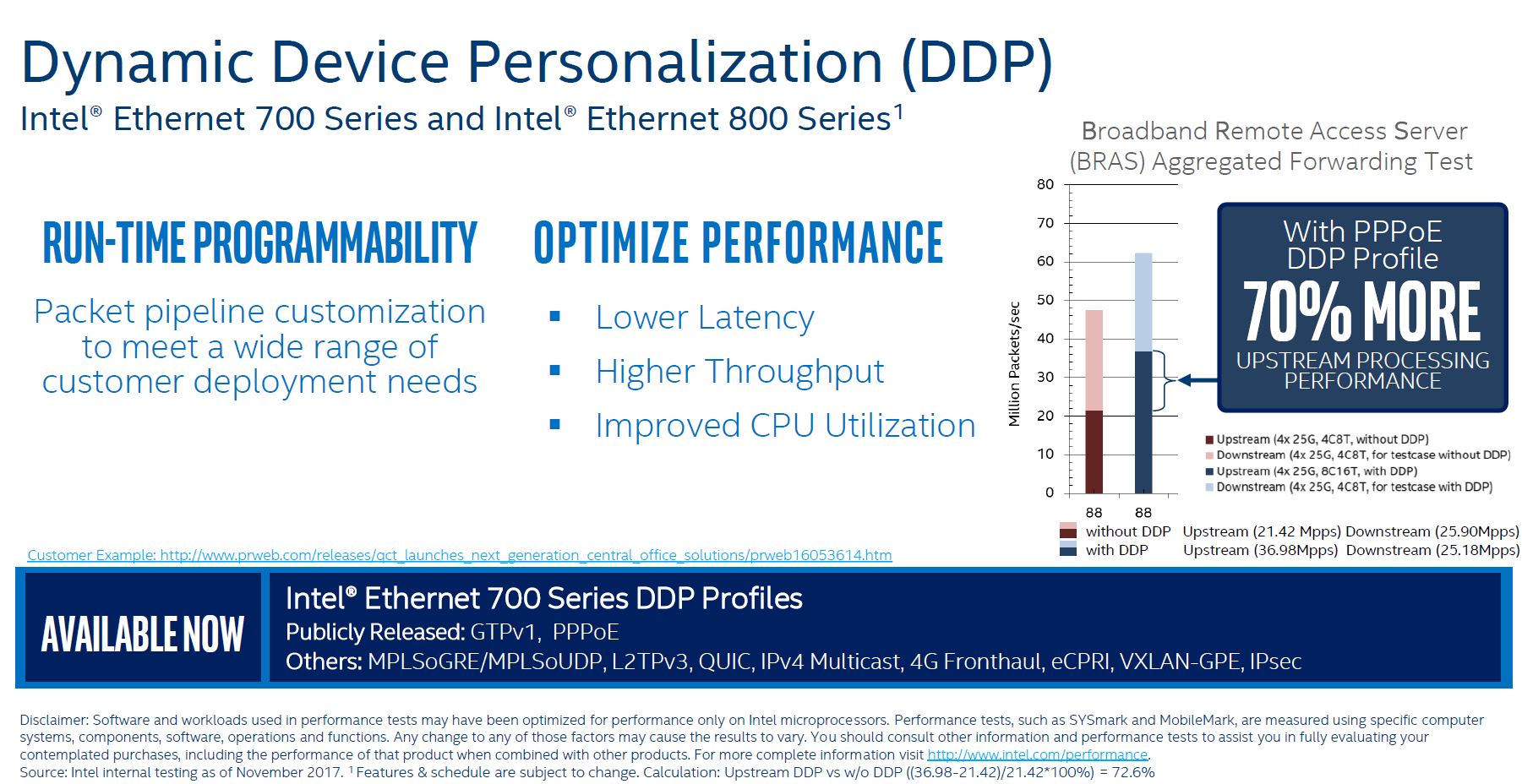

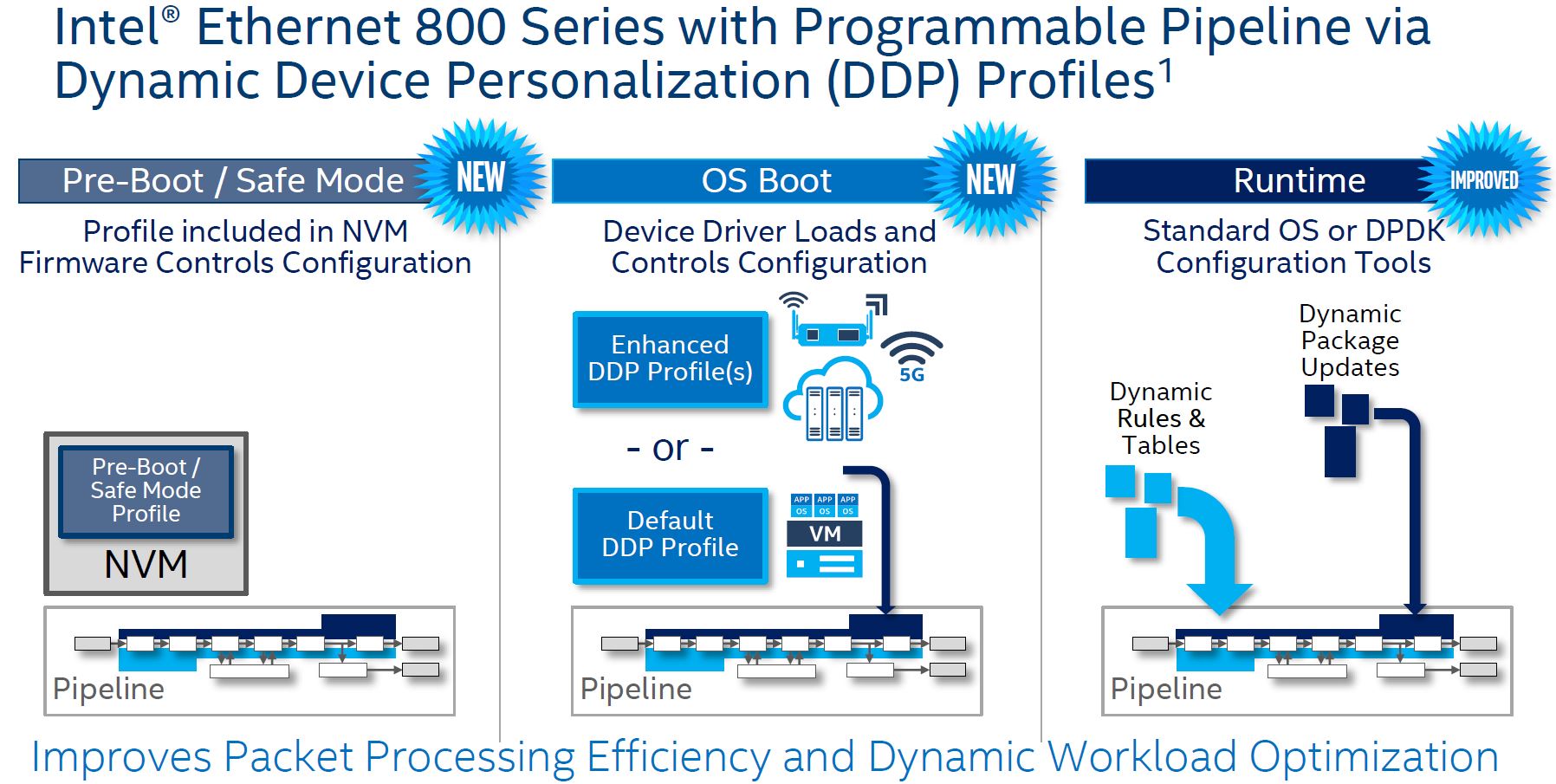

Intel Ethernet 800 Series 100GbE DDP

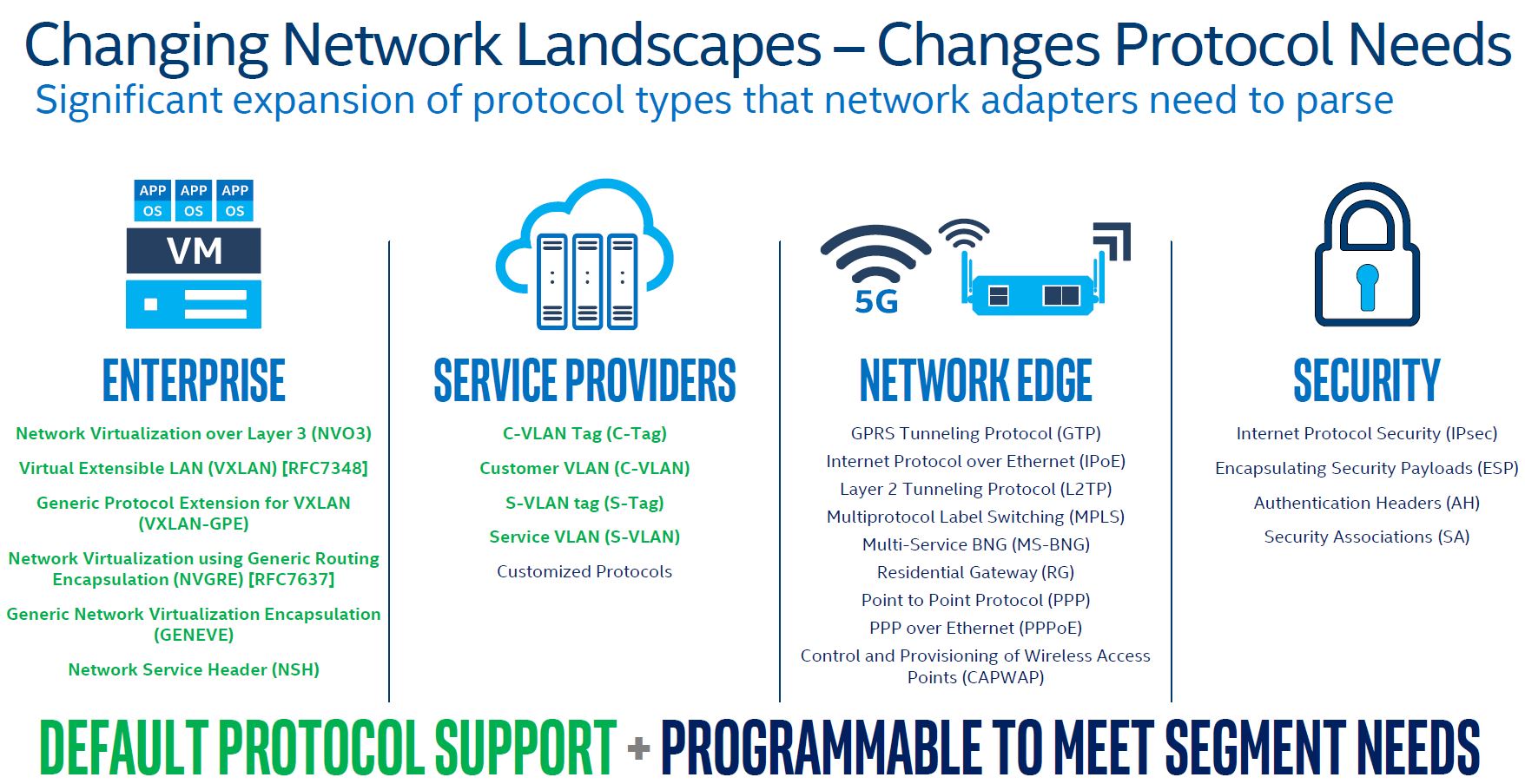

Intel also wanted to show off Dynamic Device Personalization, or DDP, as a key feature of the Ethernet 800 Series. As part of the setup, Intel showed how there are base sets of protocols, plus newer protocols that organizations may need to support.

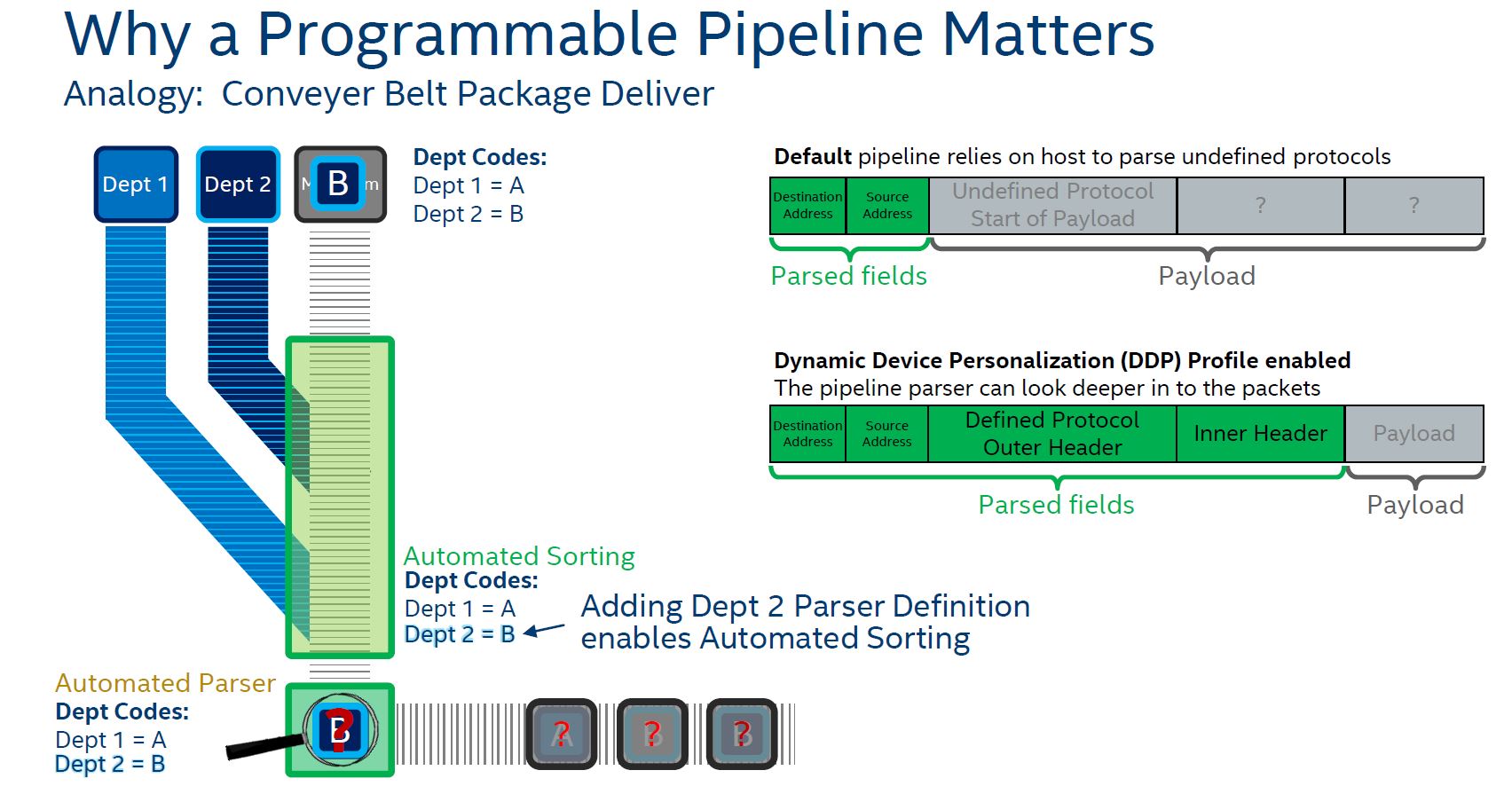

Essentially, Intel is using a programmable pipeline to do some customization and offload parsing to the NIC, instead of the host CPU.

Offloading packet parsing functions to the NIC means lower latency and higher throughput. Intel needed a feature like this for a 100GbE NIC. Without it, packets arrive too quickly at 100GbE speeds for the NIC to efficiently hand parsing back to the host CPUs every time.
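A quick back-of-the-envelope calculation shows why. The sketch below uses standard Ethernet framing numbers (a 64-byte minimum frame plus 20 bytes of preamble, start-of-frame delimiter, and inter-frame gap on the wire). The function names are ours, and the result is the theoretical worst case at line rate, not a figure from Intel.

```python
# Worst-case packet rate on a 100GbE link, using standard Ethernet framing.
LINE_RATE_BPS = 100e9        # 100 Gbit/s
MIN_FRAME_BYTES = 64         # minimum Ethernet frame size
MAX_FRAME_BYTES = 1518       # maximum standard (non-jumbo) frame size
WIRE_OVERHEAD_BYTES = 20     # 7B preamble + 1B start-of-frame delimiter + 12B inter-frame gap


def packets_per_second(frame_bytes: int, line_rate_bps: float = LINE_RATE_BPS) -> float:
    """Frames per second at line rate for a given frame size."""
    bits_on_wire = (frame_bytes + WIRE_OVERHEAD_BYTES) * 8
    return line_rate_bps / bits_on_wire


def ns_per_packet(frame_bytes: int, line_rate_bps: float = LINE_RATE_BPS) -> float:
    """Time budget per frame, in nanoseconds, at line rate."""
    return 1e9 / packets_per_second(frame_bytes, line_rate_bps)


if __name__ == "__main__":
    for size in (MIN_FRAME_BYTES, MAX_FRAME_BYTES):
        print(f"{size:>5} B frames: {packets_per_second(size) / 1e6:8.2f} Mpps, "
              f"{ns_per_packet(size):7.2f} ns per packet")
```

With minimum-size frames, that works out to roughly 148.8 million packets per second, or about 6.72 ns per packet. That is only a handful of clock cycles on a modern core, so any parsing that can stay on the NIC is a win.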

Part of the magic is that Intel has the ability to dynamically configure DDP profiles at boot, thus changing the NIC’s personality.
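Conceptually, a profile extends the set of protocols the NIC's parser can recognize. The toy Python model below illustrates that idea only; it is not Intel's package format or API. The `ToyParser` class, the tables, and the choice of which protocol sits in the "base" set versus the add-on profile are all assumptions made for illustration. The port numbers themselves are the standard ones: UDP 4789 for VXLAN and UDP 2152 for GTP-U.

```python
# Toy model of a parser whose protocol table is extended by loading a profile.
# This is an illustration of the concept, NOT Intel's DDP package format or API.

BASE_UDP_PROTOCOLS = {4789: "VXLAN"}  # hypothetical base set
GTP_PROFILE = {2152: "GTP-U"}         # hypothetical add-on profile


class ToyParser:
    def __init__(self, base: dict[int, str]) -> None:
        # Copy so that loading a profile never mutates the base table.
        self.udp_table = dict(base)

    def load_profile(self, profile: dict[int, str]) -> None:
        """Teach the parser additional protocols."""
        self.udp_table.update(profile)

    def classify(self, udp_dst_port: int) -> str:
        """Return the recognized protocol, or a fallback for unknown payloads."""
        return self.udp_table.get(udp_dst_port, "unparsed UDP payload")


if __name__ == "__main__":
    parser = ToyParser(BASE_UDP_PROTOCOLS)
    print("before profile:", parser.classify(2152))
    parser.load_profile(GTP_PROFILE)
    print("after profile: ", parser.classify(2152))
```

Before the profile is loaded, the GTP-U packet is just an opaque UDP payload. Afterward, the parser can identify it, which is what would allow the NIC to steer or filter on fields inside the tunnel.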

Overall, these are excellent new features.

Final Words

With RoCE v2, ADQ, and DDP, the Intel Ethernet 800 Series of 100GbE NICs has features that the company needed to add. Modern NICs have an enormous amount of offload built in. Mellanox, for example, added additional offload capabilities in its second-generation 100GbE NIC, the Mellanox ConnectX-5, several years ago.

This is an area where Intel still needs to improve. Cloud providers like Amazon AWS and Microsoft Azure want heavy overhead offload on the NIC. Indeed, that is part of the reason Microsoft utilizes FPGAs in its infrastructure as we saw in Microsoft Debuts Project Brainwave Access to Intel FPGAs. Cloud providers need to sell every CPU cycle. If they required four cores in a 48-core system just to do overlay network processing, those would be four cores they cannot lease via a service such as EC2.
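To put the example above in numbers, here is a small Python sketch. The 4-of-48 figure is the hypothetical from the paragraph above, not measured data, and the function name is ours.

```python
def overhead_share(total_cores: int, overhead_cores: int) -> float:
    """Fraction of a server's cores consumed by non-sellable overhead."""
    if not 0 <= overhead_cores <= total_cores:
        raise ValueError("overhead_cores must be between 0 and total_cores")
    return overhead_cores / total_cores


if __name__ == "__main__":
    share = overhead_share(total_cores=48, overhead_cores=4)
    print(f"Cores lost to overlay networking: {share:.1%}")
    print(f"Cores left to sell: {48 - 4} of 48")
```

In that scenario, about 8.3% of each server's cores would generate no revenue, which adds up quickly across a large fleet.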

Intel, in the future, will need something better if it wants to compete at the high end. Mellanox was in the 100GbE market in 2014 and is now in the 200GbE market, making 100GbE last generation. Part of the beauty of the Intel Fortville 40GbE generation was the ability to inexpensively get devices on 40GbE networks. Perhaps the Intel Ethernet 800 Series will do the same, but the need for heavy offloads increases at 100GbE speeds. Intel is moving in the right direction, especially with RoCE v2 support. When Patrick told me about that, I was excited. Still, when you look at modern NIC offload spec sheets, they are lengthy compared to what Intel is showing.

We will have a review of the NICs if we add them to the lab any time soon.
