Today we have a look at the Inspur i24M6. This is the company’s 2U 4-node (2U4N) server based on the 3rd Gen Intel Xeon Scalable “Ice Lake” series of processors. While Intel has set a date for its Sapphire Rapids launch, we expect that Ice Lake servers will still sell well into the next year for a variety of reasons we will discuss at that launch. In the meantime, let us take a look at Inspur’s high-density Ice Lake server solution.

Inspur i24M6 2U4N Node Overview

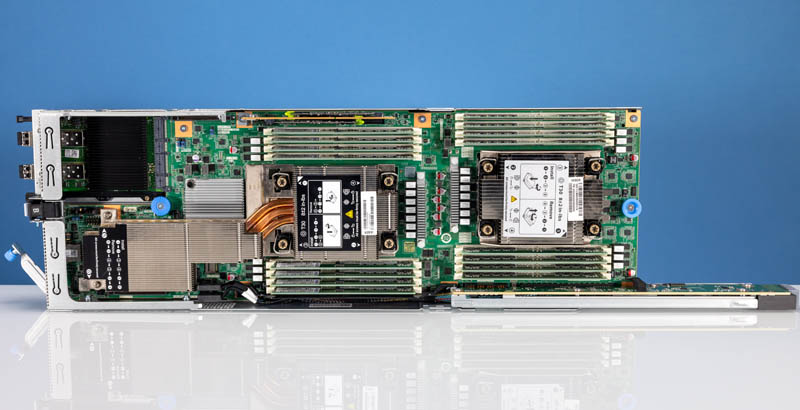

Normally we start with the chassis in these 2U4N reviews, but since the chassis is fairly similar to the Inspur i24 we reviewed previously, we wanted to instead start with the node overview. As one can see, each node in this 2U4N platform has dual Intel Xeon processors and room for I/O.

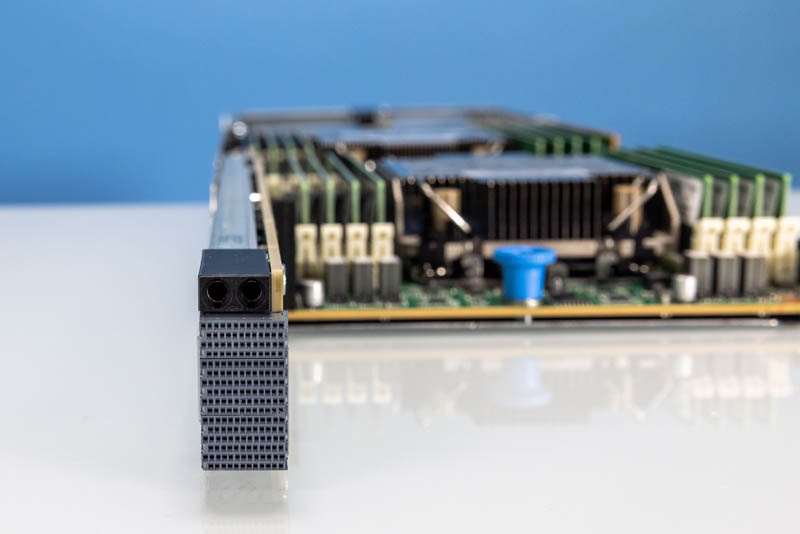

Unlike some competing solutions, Inspur uses high-density connectors for data and power. This frees up airflow in the chassis and is a more elegant solution.

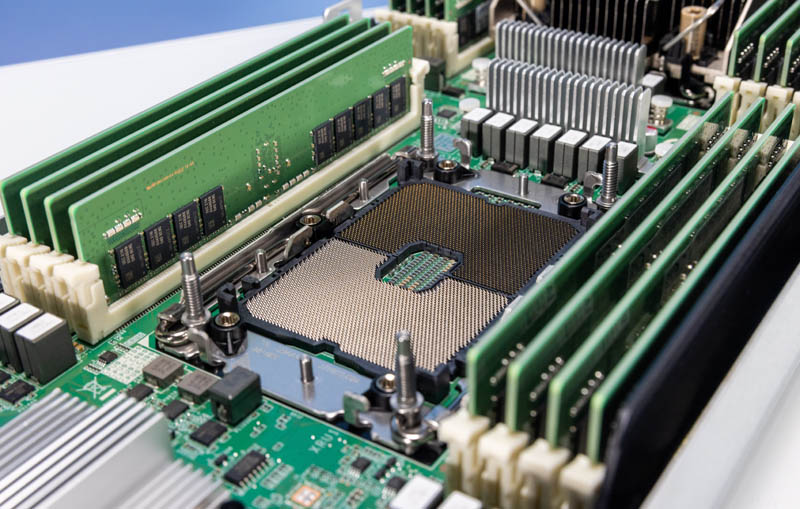

Inside the system, we have two 3rd Generation Intel Xeon Scalable processors, codenamed “Ice Lake”. Each socket is flanked by eight DIMM slots for DDR4-3200 memory in a 1 DIMM per channel configuration. While we gain node density in the 2U4N form factor, we lose half of the DIMM slots compared with a 2 DIMM per channel design because each node is so narrow.
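To put numbers on that trade-off, here is a quick back-of-the-envelope sketch. The slot counts and DDR4-3200 speed come from the configuration described above; the 64-bit channel width is standard DDR4, and the 64GB DIMM size is purely an assumption for illustration, not something we tested. The bandwidth figure is a theoretical peak, not a measured result.

```python
# Back-of-the-envelope memory figures for one node and the full 2U4N chassis.
CHANNELS_PER_SOCKET = 8    # eight DDR4 channels per Ice Lake Xeon socket
SOCKETS_PER_NODE = 2
NODES_PER_CHASSIS = 4
TRANSFER_RATE_MT_S = 3200  # DDR4-3200
BYTES_PER_TRANSFER = 8     # 64-bit data bus per DDR4 channel


def dimm_slots_per_node(dimms_per_channel: int) -> int:
    """DIMM slots in one dual-socket node at a given DIMMs-per-channel count."""
    return CHANNELS_PER_SOCKET * dimms_per_channel * SOCKETS_PER_NODE


def peak_bandwidth_gb_s_per_socket() -> float:
    """Theoretical peak memory bandwidth per socket in GB/s (decimal)."""
    return CHANNELS_PER_SOCKET * TRANSFER_RATE_MT_S * BYTES_PER_TRANSFER / 1000


if __name__ == "__main__":
    slots_1dpc = dimm_slots_per_node(1)  # this 2U4N node
    slots_2dpc = dimm_slots_per_node(2)  # a 2 DIMM per channel design
    assumed_dimm_gb = 64                 # ASSUMPTION: illustrative DIMM size only

    print(f"DIMM slots per node (1DPC): {slots_1dpc}")
    print(f"DIMM slots per node (2DPC): {slots_2dpc}")
    print(f"DIMM slots per chassis:     {slots_1dpc * NODES_PER_CHASSIS}")
    print(f"Capacity per node at {assumed_dimm_gb}GB DIMMs: "
          f"{slots_1dpc * assumed_dimm_gb} GB")
    print(f"Peak bandwidth per socket:  {peak_bandwidth_gb_s_per_socket():.1f} GB/s")
```

Note that 1 DIMM per channel halves the slot count but not the channel count, so the theoretical bandwidth per socket is unaffected. What we give up is maximum capacity.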

Inspur offers a number of processor options in this system, including the Intel Xeon Platinum 8352Y. These 205W TDP CPUs are really interesting. At their base configuration, they offer 32 cores at a 2.2GHz base and a 3.4GHz turbo. One can instead select a 24-core configuration with a 2.3GHz base and a 185W TDP, or a 16-core configuration with a 2.6GHz base clock at the same 185W TDP. Intel has been working to simplify its supply chain by offering options like this, where a single SKU can be ordered and installed, then the processor customized at the last moment.
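For a rough feel of what each of those three configurations trades away, the sketch below tabulates them. The core counts, base clocks, and TDPs are the figures quoted above. The "aggregate base GHz" column (cores multiplied by base clock) is our own crude proxy, not an Intel metric or a benchmark result, and the profile labels are made up for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    name: str        # hypothetical label used only in this sketch
    cores: int
    base_ghz: float
    tdp_w: int

    @property
    def aggregate_base_ghz(self) -> float:
        """Cores x base clock: a crude throughput proxy, not a benchmark."""
        return round(self.cores * self.base_ghz, 1)

    @property
    def watts_per_core(self) -> float:
        """TDP budget available per active core."""
        return round(self.tdp_w / self.cores, 2)


# Figures as quoted for the Xeon Platinum 8352Y in the text above.
PROFILES = [
    Profile("32-core", cores=32, base_ghz=2.2, tdp_w=205),
    Profile("24-core", cores=24, base_ghz=2.3, tdp_w=185),
    Profile("16-core", cores=16, base_ghz=2.6, tdp_w=185),
]

if __name__ == "__main__":
    print(f"{'Profile':<10}{'Cores':>6}{'Base GHz':>10}{'TDP W':>7}"
          f"{'Agg GHz':>9}{'W/core':>8}")
    for p in PROFILES:
        print(f"{p.name:<10}{p.cores:>6}{p.base_ghz:>10.1f}{p.tdp_w:>7}"
              f"{p.aggregate_base_ghz:>9.1f}{p.watts_per_core:>8.2f}")
```

The lower core count profiles give each active core a larger share of the power budget, which is what allows the higher base clocks. That can matter for workloads that are licensed per core or that scale poorly across many threads.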

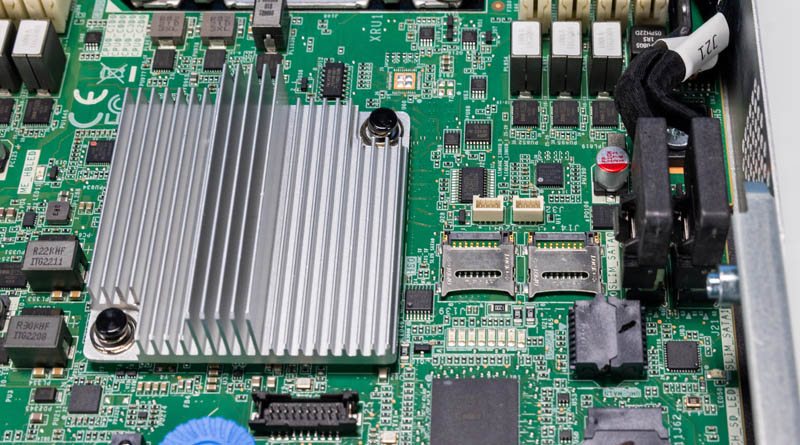

The ASPEED AST2500 BMC is the management processor on the motherboard.

One smaller feature is that next to the PCH, we get dual microSD card slots. We have started to see the popularity of using microSD cards wane, but many still use them.

For networking, we have an OCP NIC 3.0 slot. In this system, it is populated with a dual-port 25GbE Intel E810 NIC.

Our system did not have a riser above the OCP NIC 3.0 slot, but we can see the slot and mounting points for the riser. The missing riser is partly due to the thermal constraints of the system.

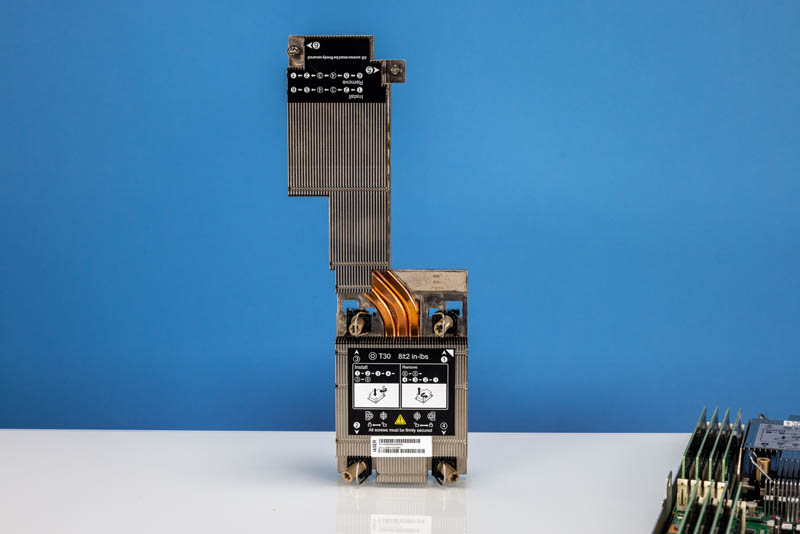

On the subject of thermal constraints, this is the heatsink on the second CPU. We are no longer in the era where two heatsinks placed in series over the CPUs are sufficient. Instead, the second CPU needs a heatsink with an extension section that draws cool air from near the DIMM slots (the other side is left for expansion card cooling). This design also blocks using a second expansion slot in the chassis.

For a better view of this amazing heatsink, here it is by itself. While the front heatsink looks fairly mundane, this rear heatsink is clearly a response to the challenges of air cooling as TDPs rise.

The front SSDs on systems like this are generally used for storage. As a result, we see a bigger push towards internal M.2 SSDs for boot. That is what we have here on the side opposite the large heatsink.

Inspur has an M.2 boot card that holds two M.2 SSDs in either M.2 2280 or M.2 22110 sizes. This adds the opportunity for mirrored, redundant boot devices internally, leaving the front bays for high-performance storage.

The rear of the unit has a management port as standard but uses a high-density breakout connection for USB and video connectivity.

Next, let us take a look at the chassis.