Power Consumption

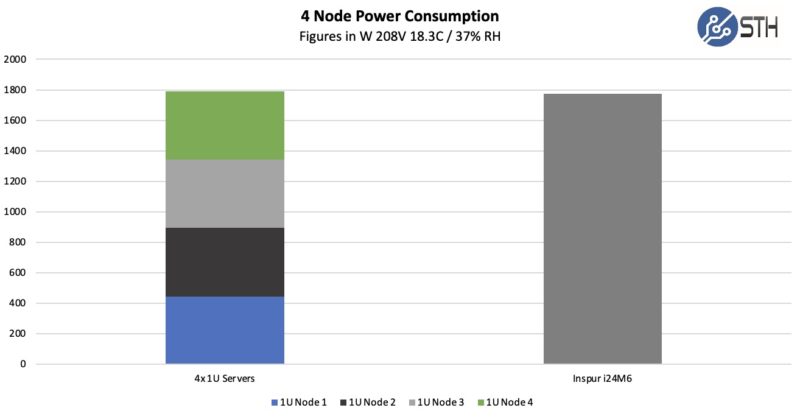

Power consumption is interesting in systems like these, and there are two parts to the equation. The first is how much power the entire system uses. The second is how much power this type of solution uses compared to separate 1U platforms. With a pair of redundant Great Wall 2kW PSUs, we get a maximum power limit of around 2kW for all four nodes.

In practice, we were able to get this system into the 1.9kW range, just at the edge of redundancy in this system.
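To put that figure in context, here is a minimal sketch of the headroom arithmetic. It assumes a 1+1 redundant configuration, where only one 2kW PSU counts toward the usable budget, and treats our roughly 1.9kW observation as a round 1900W. The function name `psu_headroom` and its parameters are ours for illustration and are not part of any Inspur tooling.

```python
def psu_headroom(load_w: float, psu_capacity_w: float,
                 psu_count: int = 2, redundant_psus: int = 1) -> dict:
    """Estimate how much power budget is left while keeping PSU redundancy.

    Assumption: the usable budget is the combined capacity of the PSUs
    that are not reserved for redundancy (1+1 by default).
    """
    usable_w = psu_capacity_w * (psu_count - redundant_psus)
    headroom_w = usable_w - load_w
    return {
        "usable_w": usable_w,
        "headroom_w": headroom_w,
        "headroom_pct": headroom_w / usable_w * 100.0,
        "within_redundancy": load_w <= usable_w,
    }


# Approximate figures from this review: ~1.9kW load on a pair of 2kW PSUs.
result = psu_headroom(load_w=1900, psu_capacity_w=2000)
print(f"Usable budget: {result['usable_w']:.0f}W, "
      f"headroom: {result['headroom_w']:.0f}W "
      f"({result['headroom_pct']:.1f}%), "
      f"redundant: {result['within_redundancy']}")
```

Under those assumptions, a load in the 1.9kW range leaves very little margin before a single PSU could no longer carry all four nodes on its own.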

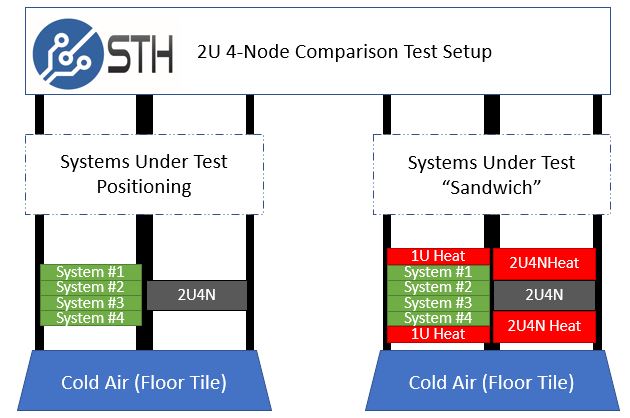

One of the sometimes overlooked benefits of the 2U4N form factor is power consumption savings. We ran our standard STH 80% CPU utilization workload, which is a common figure for a well-utilized virtualization server and now tends to consume closer to 85-90% of the power of a system. We ran that in the sandwich between the 1U servers and the Inspur i24. With dense servers, heat is a concern, so we replicate what one would likely see in the field. This is the only way to get useful comparison information for 2U4N servers.

Here is what we saw in terms of power consumption compared to our baseline nodes.

This is only about a 0.9% improvement. On the i24, which is a lower-power platform, we saw about a 1.8% improvement. It appears as though the 2U4N platform’s efficiency gain from shared power and cooling is increasingly offset by the higher component TDPs in this generation. The i24M6 still has a lead in efficiency, but it is not as large as we have seen in previous generations.
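For readers who want to run the same comparison on their own hardware, the percentage is simply the relative difference between the combined draw of the baseline 1U nodes and the draw of the 2U4N chassis under the same workload. The sketch below uses placeholder wattages chosen only so the arithmetic lands on the 0.9% figure. They are hypothetical values, not our measured numbers, and `power_savings_pct` is a name we made up for this example.

```python
def power_savings_pct(baseline_w: float, dense_w: float) -> float:
    """Percent power saved by the dense chassis relative to the baseline.

    baseline_w: combined draw of the separate 1U nodes under load
    dense_w:    draw of the 2U4N chassis running the same workload
    """
    if baseline_w <= 0:
        raise ValueError("baseline_w must be positive")
    return (baseline_w - dense_w) / baseline_w * 100.0


# Hypothetical placeholder wattages, NOT measurements from this review.
baseline_nodes_w = 1000.0   # four 1U baseline nodes combined (assumed)
chassis_2u4n_w = 991.0      # 2U4N chassis under the same load (assumed)

savings = power_savings_pct(baseline_nodes_w, chassis_2u4n_w)
print(f"2U4N savings vs. baseline: {savings:.1f}%")
```

The same function works for any pair of readings, so long as both are taken under the same workload and thermal conditions.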

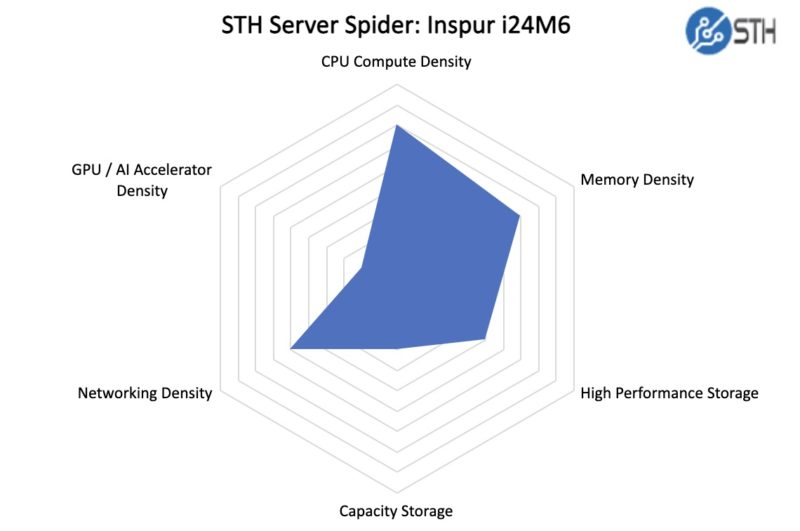

STH Server Spider: Inspur i24M6

In the second half of 2018, we introduced the STH Server Spider as a quick reference to where a server system’s aptitude lies. Our goal is to start giving a quick visual depiction of the types of parameters that a server is targeted at.

This server is focused on compute density. As we can see, this is far from being the densest storage, networking, or GPU platform on the market. Instead, the goal of this system is to pack eight CPUs into 2U of rack space. Our system makes several trade-offs in other areas to focus on air cooling >200W TDP CPUs.

Final Words

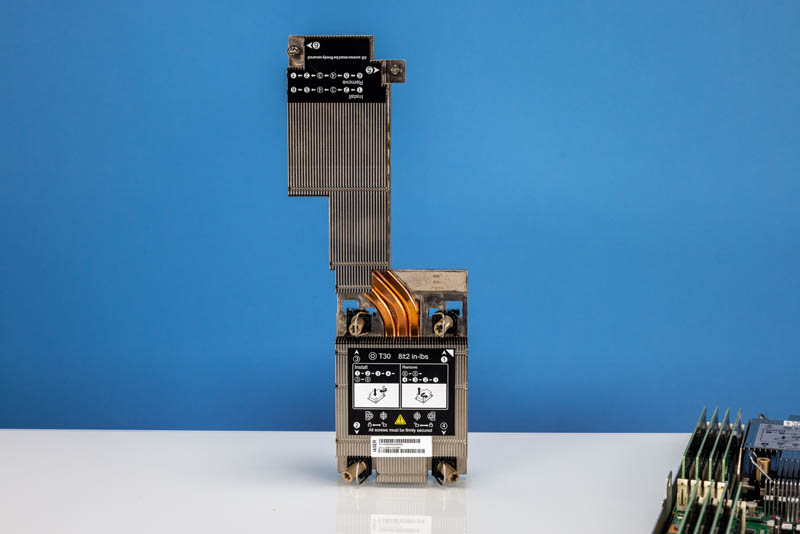

Perhaps the most striking feature of this system is the cooling solution for the 3rd Gen Intel Xeon Scalable processors. Inspur is going to great lengths to cool the second processor in these nodes.

As TDPs rise in the next generation, this really shows why this class of systems is focused more on liquid cooling for higher-TDP solutions. The larger heatsink limits the ability to add additional expansion devices. On the other hand, this larger heatsink also allows using higher-TDP CPUs, which is why it exists.

Inspur has a solid design in this generation. Features like the chassis management controller and little touches like the labels for each node are nice when using systems like this in dense racks.

We expect the “Ice Lake” generation of servers to continue shipping in the near future given the extra cost of utilizing DDR5, PCIe Gen5, and more in newer systems. For many, these PCIe Gen4 platforms are going to be attractive long after the next wave of servers is out. That is one of the big reasons that we like the Inspur i24M6, and a topic we will get into early next year on STH.