Today we have a review of an exciting system. The ASUS RS720A-E11-RS24U combines top-end NVIDIA A100 PCIe GPUs with two top-end AMD EPYC 7763 CPUs to provide a platform that highlights some of the best hardware on the market today. The 2U server also adopts a number of server design principles that showcase changes we are seeing in the market. In our review, we are going to look at this high-end hardware and show some of the server design trends that the platform adopts.

ASUS RS720A-E11-RS24U Hardware Overview

Something that we have been doing lately is splitting our hardware overview section into two distinct parts. We are first going to cover the external portion of the chassis. We are then going to cover the internal portion.

As with many of the new reviews we are doing, this review has an accompanying video. We always suggest opening the video in a YouTube tab for a better experience. We know some prefer to listen to content, so this should help.

ASUS RS720A-E11-RS24U External Overview

The system is a 2U server that measures only 840mm, or just over 33″, deep. This matters because the server will fit in a number of existing racks, assuming power budgets can keep pace.
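For readers who want to sanity-check the depth figure against their own racks, here is a minimal sketch of the conversion and a simple fit check. The 840mm chassis depth is from our measurements above. The rack depths and rear clearance used in the example are hypothetical placeholders, not figures from ASUS or any rack vendor.

```python
MM_PER_INCH = 25.4  # exact by definition


def mm_to_inches(mm: float) -> float:
    """Convert millimeters to inches."""
    return mm / MM_PER_INCH


def fits_in_rack(chassis_depth_mm: float,
                 usable_rack_depth_mm: float,
                 rear_clearance_mm: float = 0.0) -> bool:
    """Return True if the chassis plus rear clearance fits the usable depth.

    rear_clearance_mm is a hypothetical allowance for cabling and PDUs.
    """
    return chassis_depth_mm + rear_clearance_mm <= usable_rack_depth_mm


if __name__ == "__main__":
    chassis_mm = 840  # from the review
    print(f"{chassis_mm}mm = {mm_to_inches(chassis_mm):.2f} inches")
    # Hypothetical rack depths, for illustration only.
    for rack_mm in (860, 900, 1000):
        print(rack_mm, fits_in_rack(chassis_mm, rack_mm, rear_clearance_mm=50))
```

Swap in the usable depth and cabling clearance for your own racks. Depth is only half of the question, since the rack also needs the power budget for a system like this.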

On the front of the system, we have a customizable 2.5″ drive bay solution. Sixteen of the drive bays can be used for NVMe, and eight can also be used for SAS/SATA. ASUS uses a flexible backplane and offers its PIKE II SAS3 controllers, so there are customization options here as well. The bays use tool-less drive trays, which we demonstrate with a Kioxia CD6 NVMe SSD in the accompanying video.

The front USB 3 ports, power buttons, and status LEDs are features we see on many servers. A less common feature is the handles. ASUS includes small handles that can be used to pull or push the unit during removal or installation. These handles can then be turned so that the rack doors can be closed without a large forward protrusion in the way.

The rear of the system has three basic functions. There are power supplies, I/O expansion slots, and then the standard I/O block for the server.

On the power supplies, we get redundant 2.4kW 80Plus Titanium units. This is a high-end system, so high-power, high-efficiency power supplies are required.
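To give a rough sense of why 2.4kW units make sense here, below is a back-of-the-envelope sketch of the major component power. The 2.4kW rating and the four GPU plus two CPU configuration come from this review. The per-device wattages are our assumptions based on commonly published TDP figures (250W for an A100 PCIe 40GB, 280W for an EPYC 7763), and the sketch ignores drives, memory, fans, NICs, and PSU conversion losses, so treat the result as a floor and not a measurement.

```python
# Assumed nameplate figures, NOT measured in this review.
GPU_TDP_W = 250      # assumption: NVIDIA A100 PCIe 40GB board power
CPU_TDP_W = 280      # assumption: AMD EPYC 7763 default TDP
PSU_RATED_W = 2400   # from the review: 2.4kW per power supply


def component_power_w(num_gpus: int, num_cpus: int, other_w: float = 0.0) -> float:
    """Sum the GPU and CPU TDPs plus an optional allowance for everything else."""
    return num_gpus * GPU_TDP_W + num_cpus * CPU_TDP_W + other_w


def headroom_w(load_w: float, psu_w: float = PSU_RATED_W) -> float:
    """Watts left on a single PSU, which is what matters for 1+1 redundancy."""
    return psu_w - load_w


if __name__ == "__main__":
    load = component_power_w(num_gpus=4, num_cpus=2)
    print(f"GPU + CPU load: {load:.0f}W")
    print(f"Headroom on one PSU: {headroom_w(load):.0f}W")
```

With redundant supplies, a single unit has to be able to carry the whole system if the other fails, which is why the comparison is against one PSU and not the pair.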

We are going to discuss the PCIe I/O subsystem in greater detail later. Still, the rear I/O panel is worth some discussion at this juncture. We have a fairly standard I/O panel with two USB ports and a VGA port.

ASUS also has its Q-Code feature, which shows the BIOS POST code on the rear of the chassis. This is handy for quickly identifying a system that is having an issue when you are in the data center racks. This system worked for us without issue, but we have used Q-Code on some Xeon E5 V4 servers that we had in the lab, and it was more useful than we would have expected before we needed it.

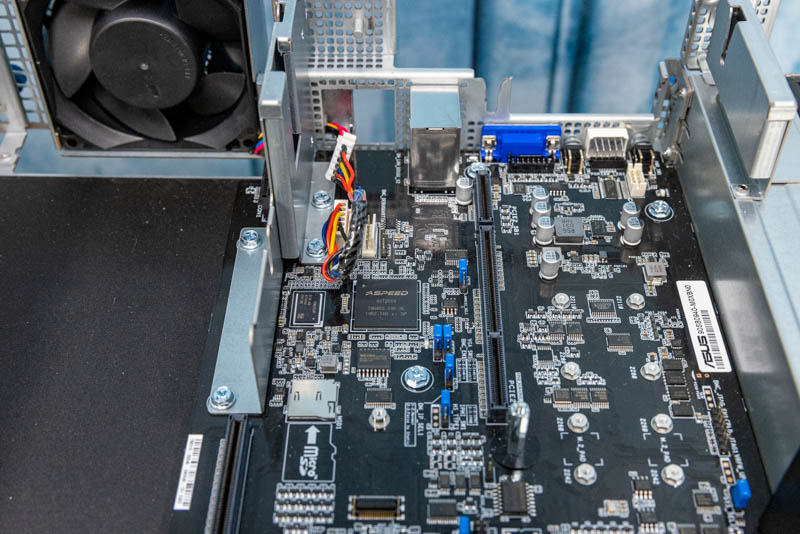

Above the USB ports, we have an out-of-band IPMI management port. This is interesting since, as we will see when we flip to the internal view, the BMC powering this is an Aspeed AST2600. This is a newer, faster BMC that we are just starting to see replace the very popular AST2500.

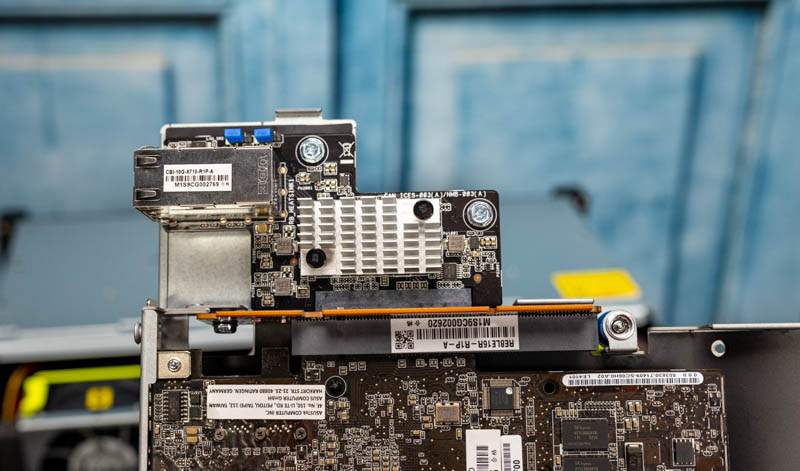

The onboard networking is powered via a proprietary module. This server has dual 10GBase-T via an Intel X710 NIC, but there are quad 1GbE (Intel i350) options as well.

One other feature that we wanted to point out is the fan unit. This fan cools a section of the chassis that holds two NVIDIA A100 GPUs, which we will see in our internal overview.

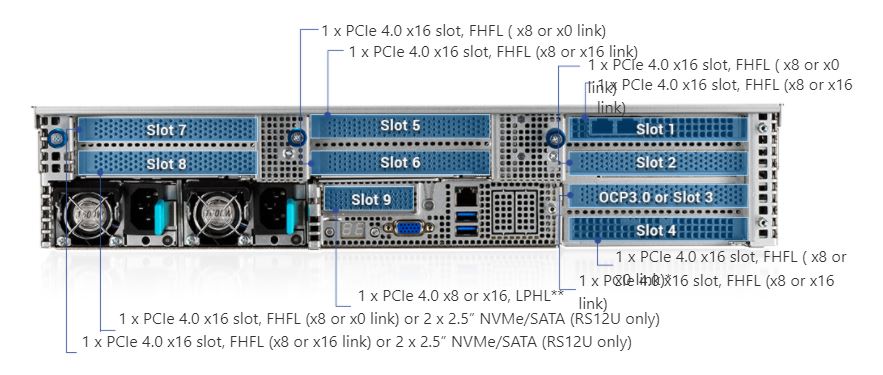

We are going to quickly note that ASUS has a number of configurable options for its risers. Here is what the full configuration looks like with nine slots when it is not in the configuration we are showing with four double-width GPUs:

Speaking of those four double-width GPUs, let us get inside the system and see how ASUS designed the server to cool these units.

You guys are really killing it with these reviews. I used to visit the site only a few times a month, but over the past year it's been a daily visit. So much interesting content is being posted, and very frequently. Keep up the great work 🙂

But can it run Crysis?

@Sam – it can simulate running Crysis.

The power supplies interrupt the layout. Is there any indication of a 19″ power shelf/busbar power standard like OCP? Servers would no longer be standalone, but would have more usable volume and improved airflow. There would be overall cost savings as well, especially for redundant A/B datacenter power.

Was this a demo unit straight from ASUS? Are there any system integrators out there who will configure this and sell me a finished system?

There’s one in Australia who does this system:

https://digicor.com.au/systems/Asus-RS720A-E11-RS24U