ASUS RS700-E10-RS12U Internal Overview

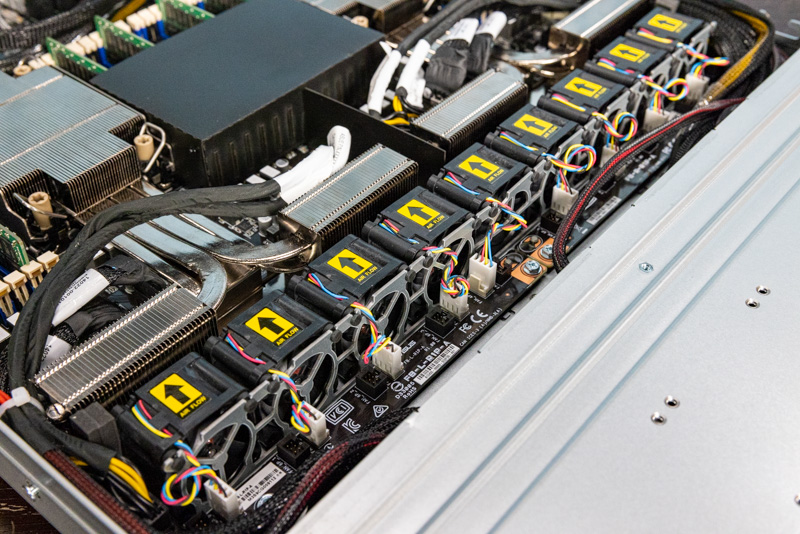

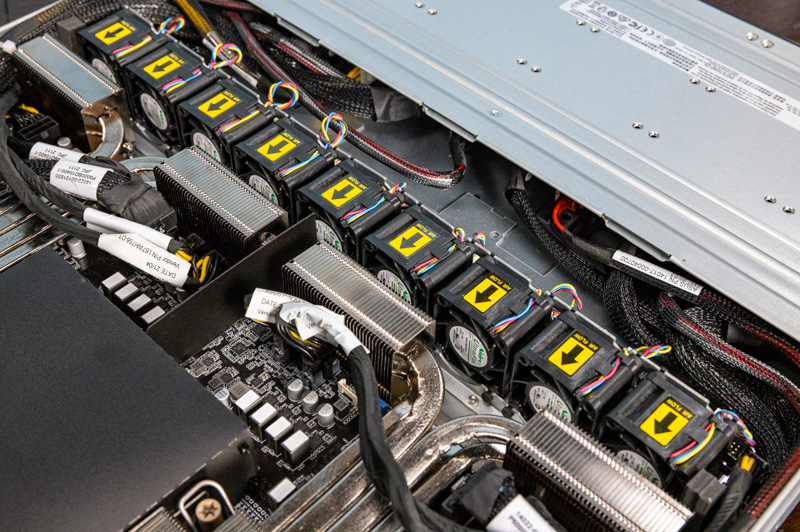

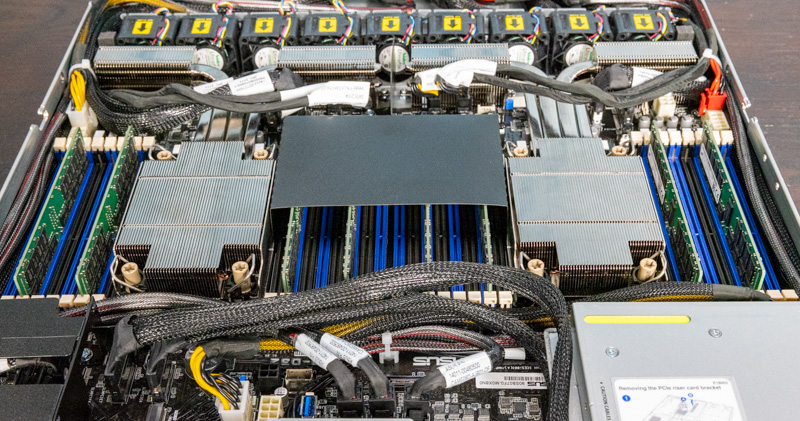

Inside the system, we are going to work from the front to the rear again. Before we get to the fans, there is a small feature that we wanted to point out. There are two fan boards in the system. One can see that the fans are connected via 4-pin PWM fan cables. These cables are very short, so servicing is easy. Perhaps more notable is that the boards also have 6-pin fan headers designed for hot-swap fan modules. We typically do not see 1U servers with hot-swap fans, simply due to component dimensions. It appears as though these fan boards could also be used in 2U chassis with hot-swap fans.

There are a total of nine fans in the system. We should point out that these are single fan units, not counter-rotating fan modules or anything like that.

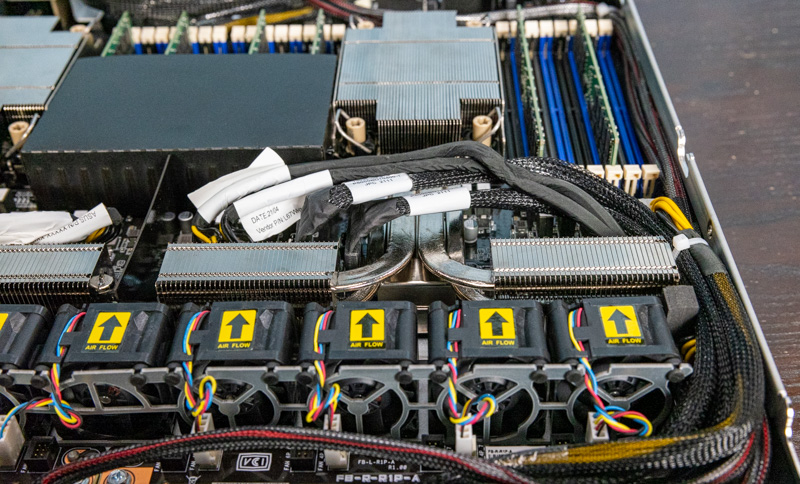

Perhaps the next interesting feature is the heatsink design. To support up to 270W TDP CPUs, the heatsinks have large heat pipes from the main heatsink to auxiliary heatsink sections. These sections are placed in front of the motherboard next to the fans. In many systems, we see these auxiliary heatsink sections placed after the memory.

This placement means that these extra sections can be deeper in the chassis since they extend beyond the motherboard. It also means we get relatively longer heat pipes.

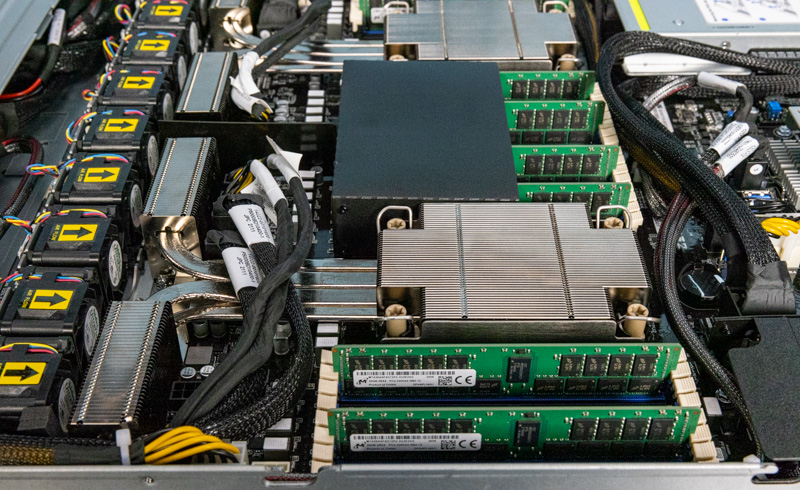

The system is powered by two Intel Xeon Ice Lake generation processors. This system can support the full stack, up to Platinum 8380 processors. For those who have not migrated to EPYC, this is a big jump in core counts while also bringing features such as PCIe Gen4 and more PCIe lanes.

Since we get many questions around the SKU stack, we have a video explaining the basics of the various Platinum, Gold, Silver, letter options, and the less obvious distinctions.

You can also see our Installing a 3rd Generation Intel Xeon Scalable LGA4189 CPU and Cooler guide for more on the socket, as well as our main Ice Lake launch coverage.

Around each CPU socket, we get a total of sixteen DIMM slots. These can accept up to DDR4-3200 so long as the CPU supports it (see our 3rd Gen Intel Xeon Scalable Ice Lake SKU List and Value Analysis piece for more on that). Since ASUS is using a proprietary form factor motherboard (common in servers these days), it is able to fit the full set of 16 DIMMs per CPU and 32 total. We only had 8 DIMMs installed for the photography session because DDR4-3200 DIMMs are in high demand in the lab.

One can also add up to eight DDR4 DIMMs alongside eight Optane PMem 200 modules per CPU. We also have a separate piece on how Optane modules work, since much of the documentation is not clear on that.
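The DIMM counts above can be checked with some quick arithmetic. This is a minimal sketch, not a vendor specification: it assumes eight DDR4 channels per socket, two DIMMs per channel, and a 64-bit data path per channel, none of which are spelled out in this review. The function names are hypothetical. The bandwidth figure is a theoretical peak at DDR4-3200, not a measured result from this system.

```python
# Assumptions (not stated in the review): 8 DDR4 channels per socket,
# 2 DIMMs per channel, and an 8-byte (64-bit) data path per channel.
SOCKETS = 2
CHANNELS_PER_SOCKET = 8
DIMMS_PER_CHANNEL = 2
TRANSFERS_PER_SEC = 3200e6   # DDR4-3200 means 3200 mega-transfers per second
BYTES_PER_TRANSFER = 8


def dimm_slots(sockets=SOCKETS):
    """Number of DIMM slots for the given socket count."""
    return sockets * CHANNELS_PER_SOCKET * DIMMS_PER_CHANNEL


def peak_bandwidth_gbs(sockets=SOCKETS):
    """Theoretical peak memory bandwidth in GB/s with every channel populated."""
    total_channels = sockets * CHANNELS_PER_SOCKET
    return total_channels * TRANSFERS_PER_SEC * BYTES_PER_TRANSFER / 1e9


if __name__ == "__main__":
    print("DIMM slots per CPU:", dimm_slots(1))
    print("DIMM slots total:", dimm_slots())
    print("Peak GB/s per CPU:", round(peak_bandwidth_gbs(1), 1))
    print("Peak GB/s total:", round(peak_bandwidth_gbs(), 1))
```

Under these assumptions, populating only some of the channels lowers the theoretical peak proportionally, which is why filling every channel generally matters more than filling every slot.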

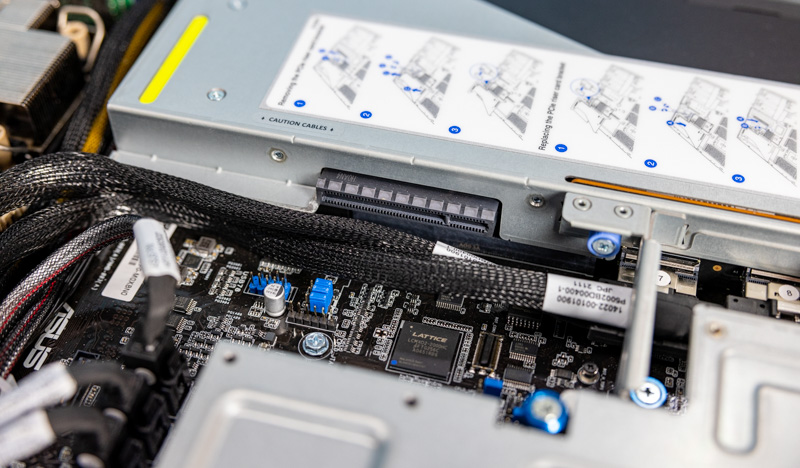

It is worth taking a second to note that we are seeing a trend towards more cabled PCIe connectivity in servers. With the NVIDIA A100 riser removed, we can see more of these cabled connections.

We previously covered the rear I/O risers, but there is one more in this system. We have an internal PCIe Gen4 slot here for applications such as installing an ASUS PIKE SAS/SATA HBA or RAID controller. Since a RAID controller will typically service the front bays, it can be housed internally in the system without taking up rear I/O space.

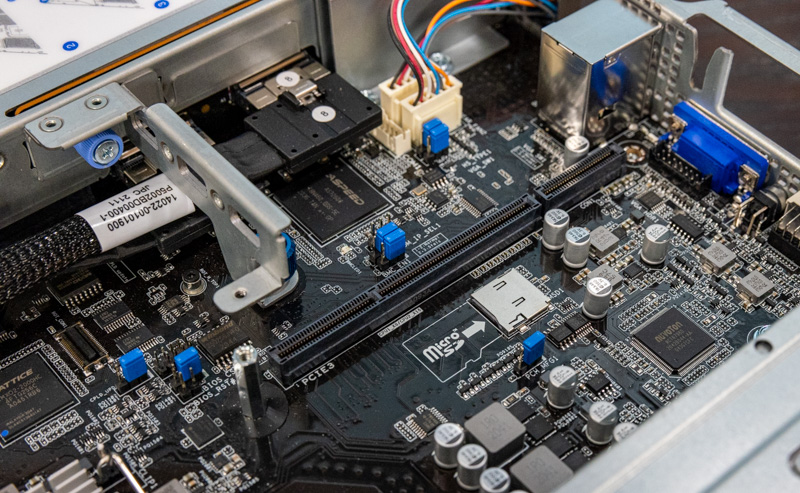

With the middle riser removed, we can see the BMC and the MicroSD card slot in the system.

One other small feature that would be easy to miss just looking at photos is that there are two M.2 slots that sit just in front of the power supplies. These M.2 slots get a bit of airflow from the power supply fans as well as the chassis fans.

Overall, this is a fun ASUS spin on a mainstream 1U server. Its POST code LED, cooling design, and PCIe riser configuration are certainly a bit different from how others are designing servers. It is always fun to see how innovation happens even on relatively mainstream platforms.

Next, we are going to check out the block diagram as well as the management before moving on with our review.

haha, the second RS is for Redundant PSU, and first RS stands for Rackmount Server

If that’s true, Juno Shi, it should be RP (redundant power), not RS.

The name basically tells you:

RS – Rack Server

7 – DP Mainstream

0 – 1U

0 – W/O IB

–

E10 – Intel Mehlow / Whitley

R – Redundant PSU

S – Hot-Swap HDD Bay

12 – 12 HDD Bays

U – All Bays support NVMe

Hope that clarifies it.
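The breakdown above can be sketched as a small decoder. This is only an illustration of the commenter's reading of the name, not an official ASUS naming specification. The function `decode_model`, the regular expression, and the lookup tables are hypothetical and were fitted to this one model name.

```python
import re

# Field meanings are copied from the comment above. Values that the
# comment does not cover are passed through unchanged.
SEGMENT = {"7": "DP Mainstream"}
FORM_FACTOR = {"0": "1U"}
INFINIBAND = {"0": "W/O IB"}

# Hypothetical pattern: RS + three digits, platform code, then bay options.
PATTERN = re.compile(r"RS(\d)(\d)(\d)-(E\d+)-(R?)(S?)(\d+)(U?)")


def decode_model(name):
    """Split a model name such as 'RS700-E10-RS12U' into labeled fields."""
    match = PATTERN.fullmatch(name)
    if match is None:
        raise ValueError(f"unrecognized model name: {name!r}")
    seg, height, ib, platform, psu, swap, bays, nvme = match.groups()
    return {
        "series": "Rack Server",
        "segment": SEGMENT.get(seg, seg),
        "form_factor": FORM_FACTOR.get(height, height),
        "infiniband": INFINIBAND.get(ib, ib),
        "platform": platform,
        "redundant_psu": psu == "R",
        "hot_swap_bays": swap == "S",
        "bay_count": int(bays),
        "all_bays_nvme": nvme == "U",
    }


if __name__ == "__main__":
    for field, value in decode_model("RS700-E10-RS12U").items():
        print(f"{field}: {value}")
```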

This looks like a great server appliance. However (and I know this is difficult), how does it compare on cost to a similarly configured Dell or HPE?

Our in-house IT dept only buys HPE for everything. Sure, it’s good hardware, but you can’t help but think that we’re paying a premium for the name (and highly likely someone’s brother-in-law works at an HPE channel supplier).

Does this support 6 or 12 NVMe with 1 CPU installed?