The ASUS RS700-E10-RS12U is a 1U server that fits quite a bit into a thin form factor. Powered by dual Intel Xeon Scalable (Ice Lake) processors, the server takes advantage of some of the trends we have seen in the market, including higher-density NVMe 1U configurations and support for GPUs. Let us get into the review.

ASUS RS700-E10-RS12U Hardware Overview

As we have been doing recently, we are going to split our review into an external overview followed by an internal overview section to aid in navigation around the platform. As with a number of our recent reviews, there is a video version of this review as well.

As always, we suggest watching the video in its own YouTube tab, window, or app for a better viewing experience. With that, let us get to the external hardware overview.

ASUS RS700-E10-RS12U External Hardware Overview

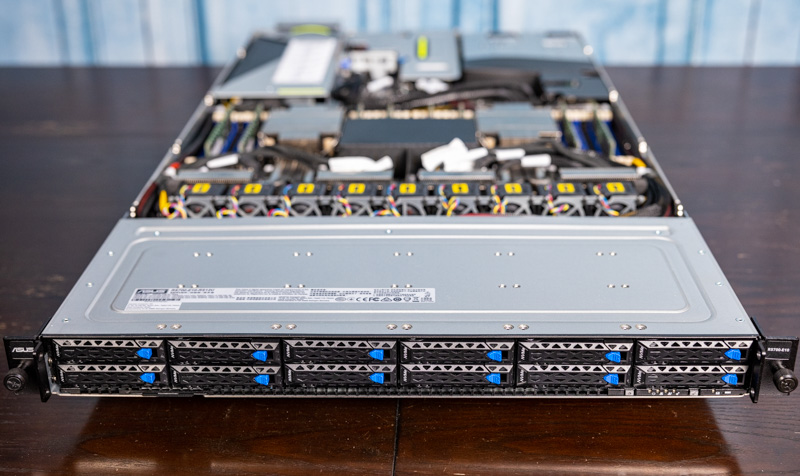

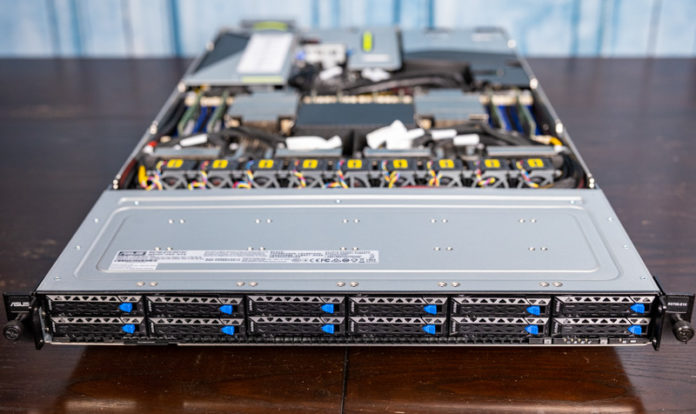

The system itself is a 1U server that is 33.17″ or 842.5mm deep. Practically, that makes the server short enough that it will fit into most racks.

Starting with the front of the system, we get twelve 2.5″ bays. With this generation of Ice Lake Xeons, these can all be connected to PCIe, so we get a PCIe Gen4 x4 connection to each drive. One of the trade-offs is that the trays are only on the bottom of the drives, not the sides, which means the trays are not toolless. Of course, the benefit is that one gets a 20% increase in storage density for the cost of screwing drives into trays.
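To put rough numbers on the front bays, here is a minimal back-of-the-envelope sketch. It uses the twelve bays and the PCIe Gen4 x4 link per drive described above, plus the standard Gen4 signaling rate of 16 GT/s per lane with 128b/130b encoding. The function names are hypothetical, the bandwidth figures are theoretical link maximums per direction rather than measured drive performance, and the 10-bay baseline is only our inference from the 20% density figure.

```python
# Back-of-the-envelope figures for the front NVMe bays.
# These are theoretical link limits, not measured results.

PCIE_GEN4_GT_PER_S = 16.0        # PCIe Gen4 raw signaling rate per lane (GT/s)
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def lane_bandwidth_gb_s() -> float:
    """Theoretical one-direction bandwidth of a single Gen4 lane in GB/s."""
    return PCIE_GEN4_GT_PER_S * ENCODING_EFFICIENCY / 8  # 8 bits per byte


def front_bay_budget(bays: int = 12, lanes_per_bay: int = 4) -> dict:
    """Lane count and theoretical bandwidth for a set of NVMe bays."""
    per_drive = lanes_per_bay * lane_bandwidth_gb_s()
    return {
        "lanes": bays * lanes_per_bay,
        "per_drive_gb_s": per_drive,
        "total_gb_s": bays * per_drive,
    }


def density_gain_percent(bays: int, baseline_bays: int) -> float:
    """Percentage increase in bay count over a baseline layout."""
    return 100 * (bays - baseline_bays) / baseline_bays


if __name__ == "__main__":
    budget = front_bay_budget()
    print(f"PCIe lanes used by front bays: {budget['lanes']}")
    print(f"Theoretical per-drive bandwidth: {budget['per_drive_gb_s']:.2f} GB/s")
    print(f"Theoretical aggregate bandwidth: {budget['total_gb_s']:.1f} GB/s")
    # Assumption: a 10-bay layout as the baseline, inferred from the 20% figure.
    print(f"Density gain over 10 bays: {density_gain_percent(12, 10):.0f}%")
```

The lane count is the practical takeaway: the front bays alone use 48 PCIe lanes before any risers are populated, which helps explain why cabled PCIe connections show up elsewhere in this chassis.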

On the rear of the system, we have a number of features. One can see the large fan cage to the right of this system, and we are going to discuss that in a bit. Instead, we are going to start with the left of this photo, the redundant power supplies, and move right.

In our system, we have two 1.6kW PSUs. There are options for 80Plus Platinum and Titanium PSUs at the 1.6kW level, as well as an 80Plus Platinum 1.2kW option. Since we have the GPU installed, we have the larger power supplies.

The rear I/O consists of a VGA port, a dedicated IPMI port, and two USB 3.2 Gen1 ports. There is also a power button, which is nice if you need to power cycle a machine during hot aisle service. Perhaps the most distinctive feature is the POST code screen. This is a feature that ASUS has used for years on servers and even consumer motherboards. Often, it is something that goes unused. Where it is useful is in quickly locating a problem server in a rack. When these are stacked in a rack, it can be hard to see which system has an issue, and this helps both during initial burn-in testing and once the server is deployed.

A feature that is notably absent from our system is onboard networking. This system has three options: 4x 1GbE, 2x 10GbE, or no onboard networking. Our system has the fan module blocking part of the network port area, so it is using the 25GbE adapter in a riser slot for networking. I probably would have preferred to have at least a standard 1GbE networking port onboard.

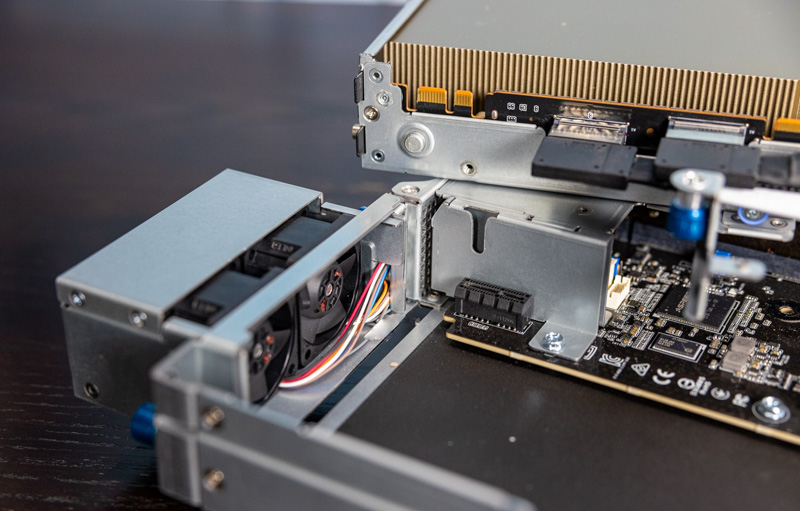

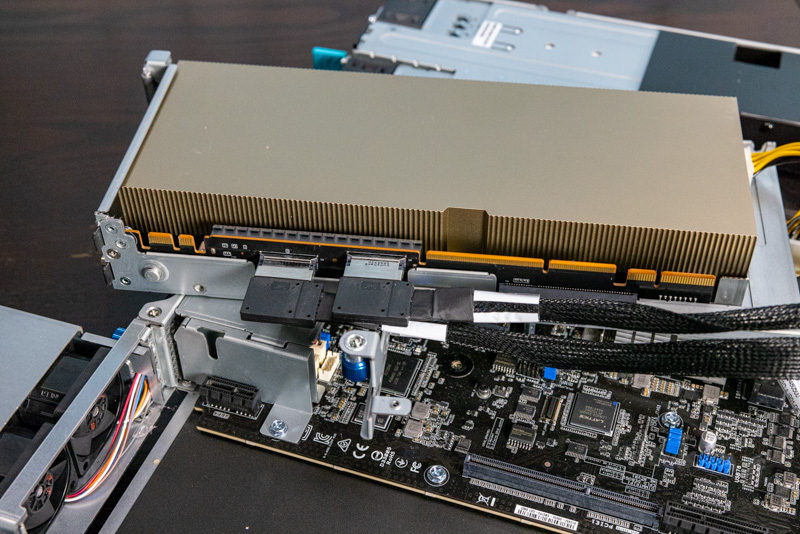

The dual-fan cooling module is optional, but it is required if one uses a high-end GPU in the primary expansion area. Since we have an NVIDIA A100 here, we need the extra cooling.

Something we will quickly note is that the rear expansion slots are fairly flexible. We only have a single configuration to show, but this is a great example of where the system can be configured in different ways. One can have two full-height, full-length slots in the primary expansion area, but instead, we have a single PCIe Gen4 x16 slot on a riser that houses the NVIDIA A100. Cooling the NVIDIA A100 in a 1U server can be a challenge, especially because in the 1U form factor the air is heated by the CPUs before it reaches the expansion slots.

As part of a trend we are seeing, there are cabled PCIe lanes to this riser as well.

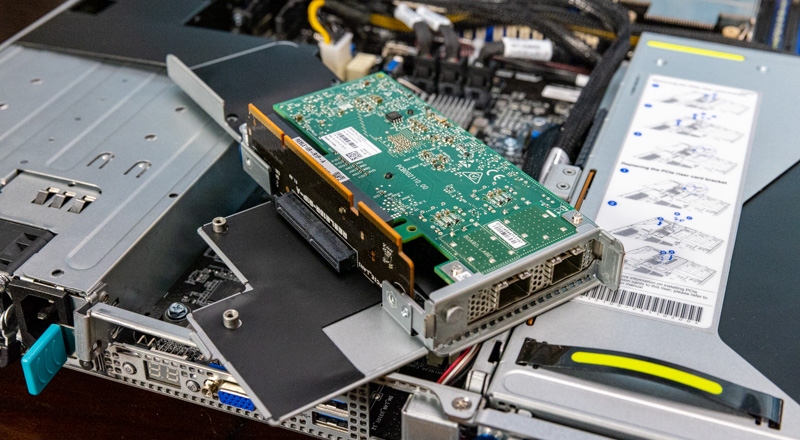

The other rear I/O riser is the one that has the Mellanox ConnectX-4 25GbE adapter. One can see the additional slot here, which can be used for adding more networking to the system.

There is a bit more internal expansion, but to even out the external/internal discussion, we showed the rear I/O risers a bit earlier.

Next, we are going to get to the internal overview where we will see what is inside and even how ASUS is able to fit all of those expansion slots.

haha, the second RS is for Redundant PSU, and first RS stands for Rackmount Server

If that’s true, Juno Shi, it should be RP (redundant power), not RS

The name basically tells you:

RS – Rack Server

7 – DP Mainstream

0 – 1U

0 – W/O IB

–

E10 – Intel Mehlow / Whitley

R – Redundant PSU

S – Hot-Swap HDD Bay

12 – 12 HDD Bays

U – All Bays support NVMe

Hope that clarifies it.

This looks like a great server appliance, however (and I know this is difficult) how does its cost compare to a comparable Dell or HPE?

Our in-house IT dept only buys HPE – everything. Sure, it’s good hardware, but you can’t help but think that we’re paying a premium for the name (and highly likely someone’s brother-in-law works at an HPE channel supplier).

Does this support 6 or 12 NVMe drives with 1 CPU installed?