ASUS ESC8000A-E11 Power Consumption

Here, ASUS uses 3kW power supplies that are 80Plus Titanium rated. We should quickly note that our lab racks are on pairs of 208V 30A circuits. That puts these PSUs into “only” the 2.4kW each range. 208V power is a staple in North American racks, but higher voltages are more common for GPU servers. We just use 208V for everything, but that may change in the future.

In terms of power consumption, with dual AMD EPYC 7763 CPUs at 280W TDP each and 8x NVIDIA A40 GPUs at 300W each, we saw a maximum power consumption of around 3.6kW. There is still quite a bit of room to go higher, for example by adding a DPU in the x16 full-height slot, additional drives, and higher-power devices in the PIKE and low-profile NIC slots. Our sense is that hitting 4kW in this system is entirely possible. Of course, using lower-power GPUs and CPUs can have a dramatic effect, so we would also not be surprised to see lower-spec’d solutions in the 2.4kW range. We were simply limited by the GPUs we had on hand for this review.
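To put those numbers side by side, here is a rough back-of-the-envelope sketch in Python. The TDP, measured-peak, and per-PSU figures come from the text above. The 80% continuous-load factor on the circuit is our assumption (a common North American derating practice), not something measured here, and the function names are just illustrative. TDP is also not the same as measured draw, so treat the "everything else" number as a loose estimate.

```python
# Rough power-budget arithmetic for the configuration described above.
# Figures quoted in the review:
CPU_COUNT, CPU_TDP_W = 2, 280        # dual AMD EPYC 7763
GPU_COUNT, GPU_TDP_W = 8, 300        # 8x NVIDIA A40
MEASURED_PEAK_W = 3600               # "around 3.6kW"
ESTIMATED_MAX_W = 4000               # "hitting 4kW ... is entirely possible"
PSU_AT_208V_W = 2400                 # per-PSU range on our 208V racks

# Assumptions (not from the review):
CIRCUIT_VOLTS, CIRCUIT_AMPS = 208, 30
CONTINUOUS_LOAD_PERCENT = 80         # assumed continuous-load derating


def component_tdp_w() -> int:
    """Sum of the rated CPU and GPU TDPs."""
    return CPU_COUNT * CPU_TDP_W + GPU_COUNT * GPU_TDP_W


def everything_else_w() -> int:
    """Measured peak minus CPU/GPU TDP: fans, memory, drives, losses."""
    return MEASURED_PEAK_W - component_tdp_w()


def circuit_budget_w() -> int:
    """Usable watts on one circuit under the assumed derating."""
    return CIRCUIT_VOLTS * CIRCUIT_AMPS * CONTINUOUS_LOAD_PERCENT // 100


def psus_needed(load_w: int) -> int:
    """Minimum PSUs to carry load_w, ignoring redundancy (ceiling division)."""
    return -(-load_w // PSU_AT_208V_W)


if __name__ == "__main__":
    print("CPU + GPU TDP (W):", component_tdp_w())
    print("Everything else at peak (W):", everything_else_w())
    print("Usable per 208V 30A circuit (W):", circuit_budget_w())
    print("PSUs needed at measured peak:", psus_needed(MEASURED_PEAK_W))
    print("PSUs needed at estimated max:", psus_needed(ESTIMATED_MAX_W))
```

Under these assumptions, a single system at peak fits on one derated 208V 30A circuit, but a second fully loaded system on the same circuit would not.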

Again, as a plea to our STH readers, if you are involved in designing next-generation GPU systems, especially beyond this generation, please do look to liquid cooling.

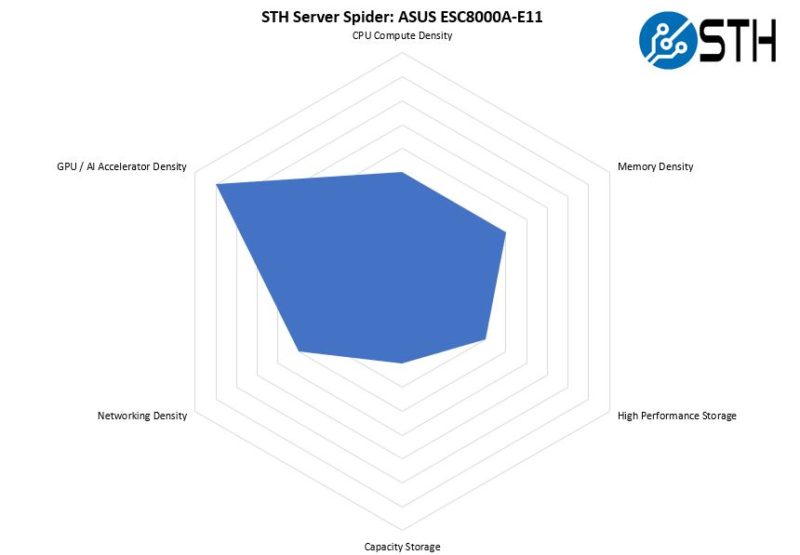

STH Server Spider: ASUS ESC8000A-E11

In the second half of 2018, we introduced the STH Server Spider as a quick reference to where a server system’s aptitude lies. Our goal is to start giving a quick visual depiction of the types of parameters that a server is targeted at.

Overall, this is a GPU compute server. That practically means that the server is not going to have the most storage, the highest-density compute, or the most networking. Indeed, it does not have base networking; it relies on add-in cards (but has slots for these). That is precisely the design of this server. It is also much denser in terms of GPU compute than some options from other vendors, such as the Dell EMC PowerEdge XE8545, which only has four GPUs in 4U of space and has less flexible GPU options. This is a fairly clear case where ASUS has a market, and we can see the specialization of the system for that market.

Final Words

When reflecting on the ASUS ESC8000A-E11, there are certainly some standout features. ASUS has been making servers in the 8-GPU class for some time, and it shows. A great example is the front of the system, where there is cold aisle access for USB and VGA (and the Q Code feature) designed for in-data center service. Some companies would put the management port next to these, but ASUS does a great job keeping the management port on the rear with the other networking and cabling. It is a small feature, but one that has clearly been designed through years of experience.

Another aspect is simply the cooling. The dual-fan ducted airflow to the AMD EPYC CPUs, along with the 3U/1U split between the GPUs and the CPUs/NICs, is surprisingly simple yet effective. One item we would note is that while the CPU fans are redundant, there is not really that same level of redundancy on the GPU side. While that is actually fairly common in these 8x GPU servers, it is just something to keep in mind.

What was very impressive was cooling 8x 300W GPUs. Many companies buying these systems will look at the NVIDIA A30, A100, or other accelerators such as AMD GPUs or Xilinx/Intel FPGAs. Some of those solutions have lower TDP requirements, but being able to cool 300W is no small feat. When cards like the NVIDIA GTX 1080 Ti were common in this class of system years ago, cooling cards above 225-240W was a challenge. Now, this is done out of the box with ease.

Overall, the ASUS ESC8000A-E11 performed well for us. In this review, we tried to show some of the differentiators of the ASUS design since every vendor that has an 8x or 10x GPU system these days has evolved offerings based on their customer base and feedback. Having reviewed these systems for years, it was fun to see the pain points we saw on previous generations directly addressed by ASUS. Still, just having a 4U machine able to cool 300W GPUs and 280W CPUs was very impressive.

Wow. What a system!!!

eth hashrate?

Very interesting system Patrick. Thanks for making this article about it. It is rather impressive to see this amount of GPU and CPU power, along with full DIMM capacity. It is not clear from the ASUS website or the article, but it appears that this system also repurposes one xGMI link, like the R7525, to reach 160 PCIe lanes.

The screenshots from the ASPEED AST2600 iKVM look exactly like the Lenovo SR655.

What is the purpose of the SD card slot? Would you use it as an OS drive?