ASUS ESC8000A-E11 Management

The ASUS management solution is built upon the ASPEED AST2600 BMC running MegaRAC SP-X. ASUS claims it boots faster, but we did not time it. The AST2600 is a substantial jump in Arm compute resources over the AST2500, so that claim would make sense.

ASUS calls this solution the ASMB10-iKVM, which provides IPMI, web GUI, and Redfish management for the platform. The last EPYC GPU server we looked at from ASUS, the ESC4000A-E10, was still using the ASMB9 and the AST2500, so this is an upgrade to a newer, faster BMC.

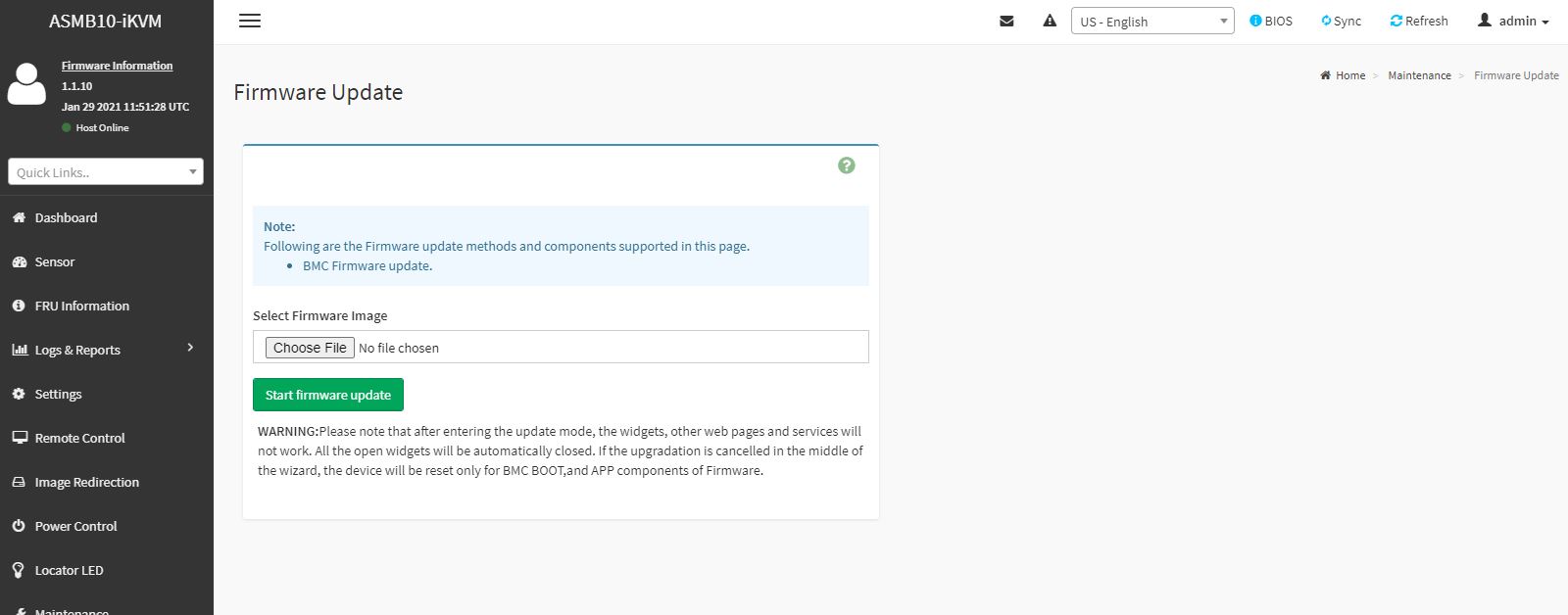

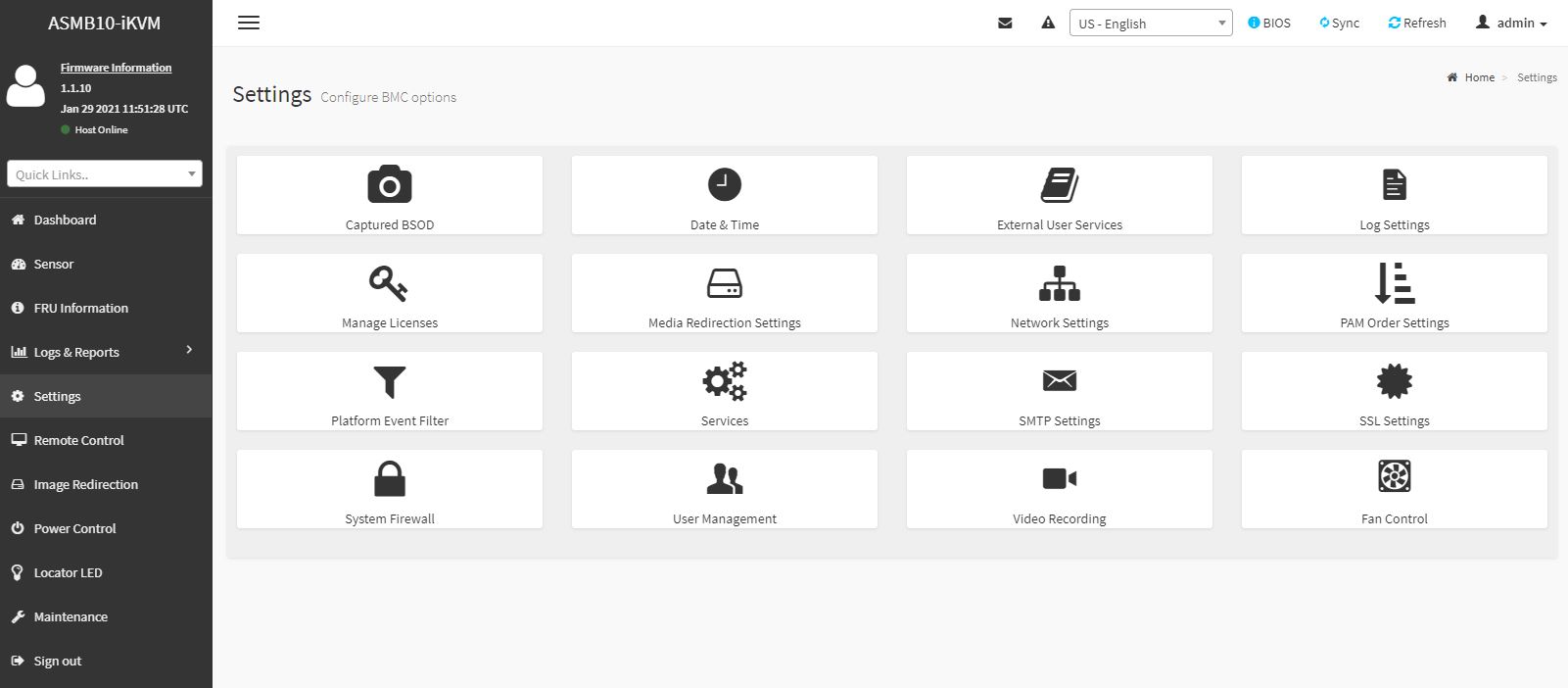

Some of the unique features come down to what is included. For example, ASUS allows remote BIOS and firmware updates via this web GUI as standard. Supermicro charges extra for the BIOS update feature.

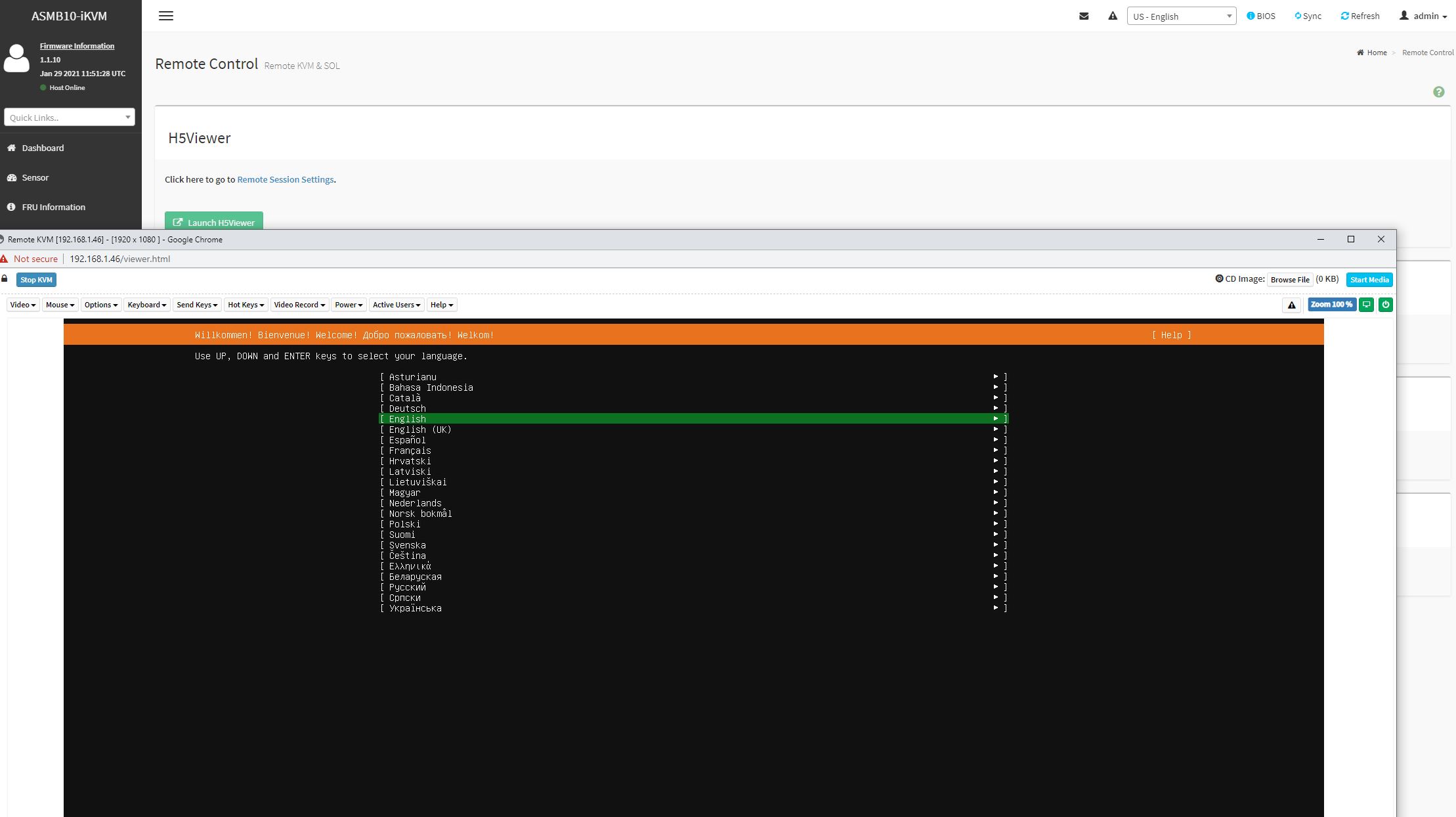

Another nice example is that we get the HTML5 iKVM functionality included with the solution. There are serial-over-LAN and Java iKVM options too. Companies like Dell EMC, HPE, and Lenovo charge extra for this functionality, and sometimes quite a bit extra.

ASUS supports Redfish APIs for management as well. What we do not get is full BIOS control in the web GUI, as we do with solutions such as iDRAC, iLO, and XClarity. For GPU servers, where certain configurations may require changing settings such as Above 4G Decoding, this would be a handy feature to have.
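For those who want to script against the BMC, here is a minimal Python sketch that reads the standard Redfish Systems collection using only the standard library. The BMC address and credentials are placeholders, and we have not verified which Redfish resources the ASMB10-iKVM firmware implements (for example, whether writable BIOS attributes are exposed under a system's `Bios` link), so treat this as a starting point rather than ASUS's documented API.

```python
import base64
import json
import ssl
import urllib.request

# Hypothetical BMC address; substitute your own management IP.
BMC_HOST = "https://192.0.2.10"


def redfish_get(path, user, password, verify_tls=False):
    """GET a Redfish resource from the BMC and return the decoded JSON body."""
    req = urllib.request.Request(BMC_HOST + path)
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    req.add_header("Authorization", f"Basic {token}")  # HTTP Basic auth
    req.add_header("Accept", "application/json")
    ctx = ssl.create_default_context()
    if not verify_tls:
        # BMCs frequently ship with self-signed certificates.
        ctx.check_hostname = False
        ctx.verify_mode = ssl.CERT_NONE
    with urllib.request.urlopen(req, context=ctx, timeout=10) as resp:
        return json.load(resp)


def member_paths(collection):
    """Return the @odata.id of each member of a Redfish collection."""
    return [m["@odata.id"] for m in collection.get("Members", [])]
```

In use, `member_paths(redfish_get("/redfish/v1/Systems", user, password))` returns the path of each managed system; the exact member IDs depend on the firmware, and each system resource can then be fetched the same way to read properties such as `PowerState`.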

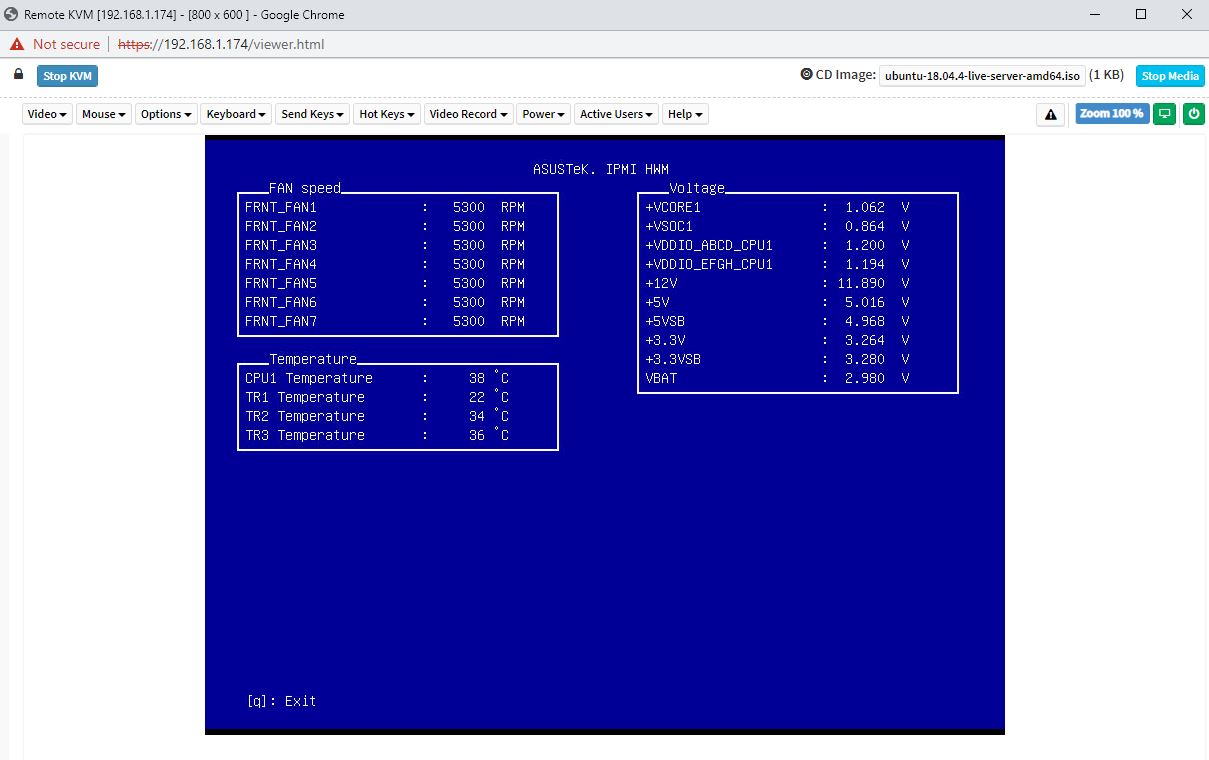

Another small feature that sets ASUS apart is the IPMI Hardware Monitor, or IPMI HWM. While one can pull hardware monitoring data from the IPMI interface, and some of it from the BIOS, ASUS provides a small app to view this data from within the BIOS.
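If you would rather pull sensor data from the OS, the generic IPMI route is `ipmitool sdr`, which prints one sensor per line in the form `name | reading | status`. The sketch below parses that output. It is our own illustration, not how ASUS's IPMI HWM app works, and sensor names in real output depend on the platform.

```python
import re
import subprocess

# A reading is a number followed by an optional unit, e.g. "45 degrees C".
# Hex status values such as "0x01" deliberately do not match.
_READING = re.compile(r"^(-?\d+(?:\.\d+)?)(?:\s+(.*))?$")


def parse_sdr(text):
    """Parse `ipmitool sdr` output into {sensor: (value, unit, status)}."""
    readings = {}
    for line in text.splitlines():
        parts = [p.strip() for p in line.split("|")]
        if len(parts) != 3:
            continue  # skip anything that is not a sensor row
        name, raw, status = parts
        match = _READING.match(raw)
        if match:
            readings[name] = (float(match.group(1)), match.group(2) or "", status)
        else:
            # Non-numeric readings ("no reading", "0x01", ...) are kept verbatim.
            readings[name] = (None, raw, status)
    return readings


def read_local_sdr():
    """Run ipmitool against the local BMC (needs ipmitool and IPMI access)."""
    result = subprocess.run(["ipmitool", "sdr"], capture_output=True, text=True, check=True)
    return parse_sdr(result.stdout)
```

For a remote BMC, ipmitool's `-I lanplus -H <host> -U <user> -P <password>` options can be added to the command list instead of talking to the local interface.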

We like ASUS’s approach overall to management. It aligns with many industry standards and also has some nice customizations.

Some organizations prefer more vendor-centric solutions, but ASUS is designing its management platform to be more of what we would consider an industry-standard solution that does not push vendor lock-in.

Next, let us discuss performance.

ASUS ESC8000A-E11 Performance

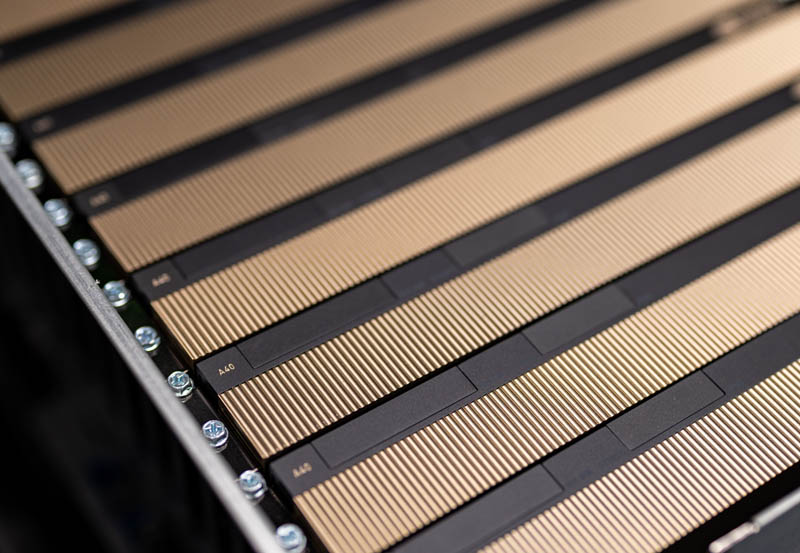

We are actually reviewing a few GPU servers at the moment, but the key to this class of systems is cooling. GPUs and CPUs tend to perform similarly across systems when they are properly cooled. In this server, we were able to use both 280W TDP AMD EPYC 7763 parts, the top end of the CPU specs, and 300W NVIDIA A40 GPUs, the top-end PCIe cards in terms of TDP.

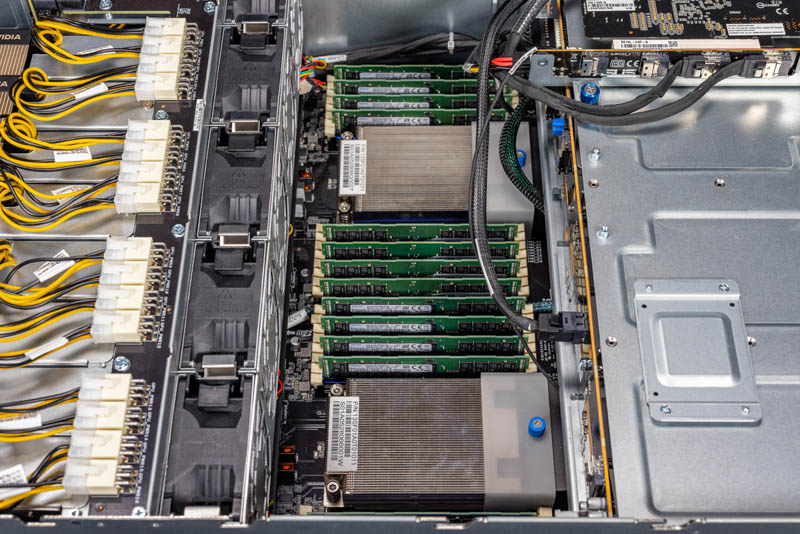

First, we wanted to validate that the CPU cooling was sufficient, so we used two 64-core parts at different TDP levels.

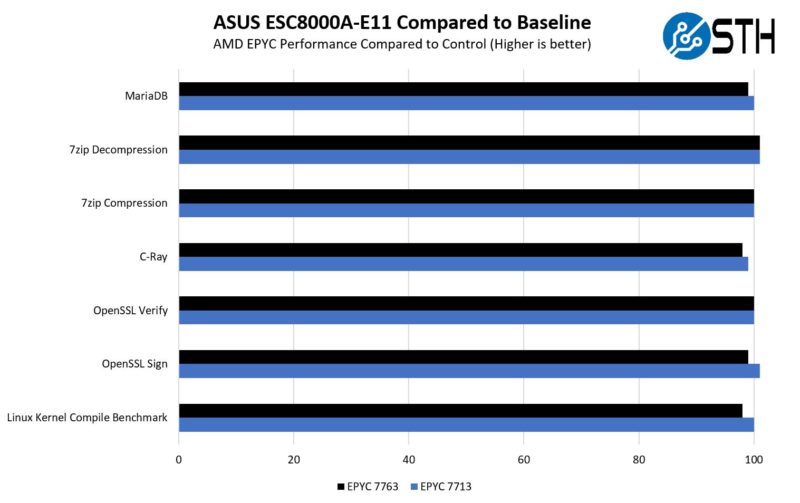

Here is what we saw with the AMD EPYC 7713 and AMD EPYC 7763 64-core CPUs compared to our non-GPU server data:

Overall, this was very close. We will note that the AMD EPYC 7763 was around 1% slower than our baseline 2U non-GPU server figures. Since that is still within the test variation, it seems reasonable, but it does indicate that this is closer to the limit of the solution’s cooling capability.
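To make the "within the test variation" reasoning concrete, here is a small sketch of the comparison. It assumes a higher-is-better benchmark score, and the tolerance is a parameter you supply; we are not stating a specific run-to-run variation figure here.

```python
def relative_delta_pct(score, baseline):
    """Percent difference of a higher-is-better score vs. a baseline.

    Negative values mean the tested system was slower than the baseline.
    """
    return (score - baseline) / baseline * 100.0


def within_variation(score, baseline, tolerance_pct):
    """True if the score falls within +/- tolerance_pct of the baseline."""
    return abs(relative_delta_pct(score, baseline)) <= tolerance_pct
```

For example, a result 1% below baseline passes a hypothetical 2% band: `within_variation(99.0, 100.0, 2.0)` returns `True`.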

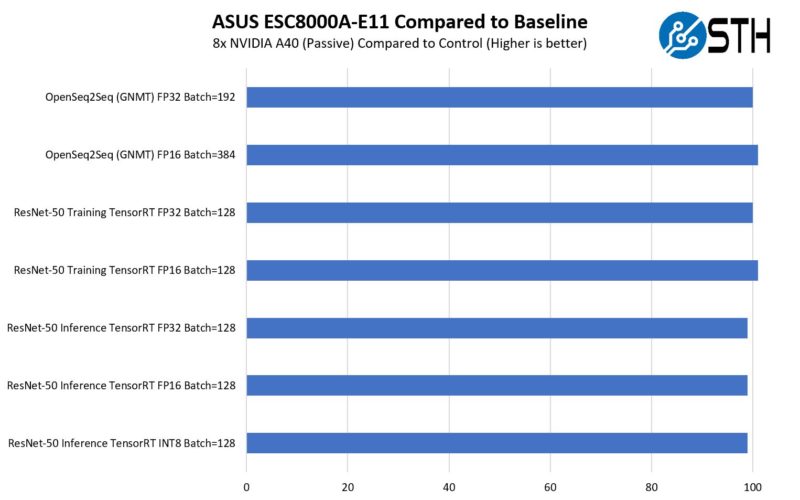

Next, we put the NVIDIA A40s through our AI/deep learning benchmarks to see how they would perform.

You are going to see the baseline system very soon on STH, but overall, the cooling seems to have held up well in this configuration.

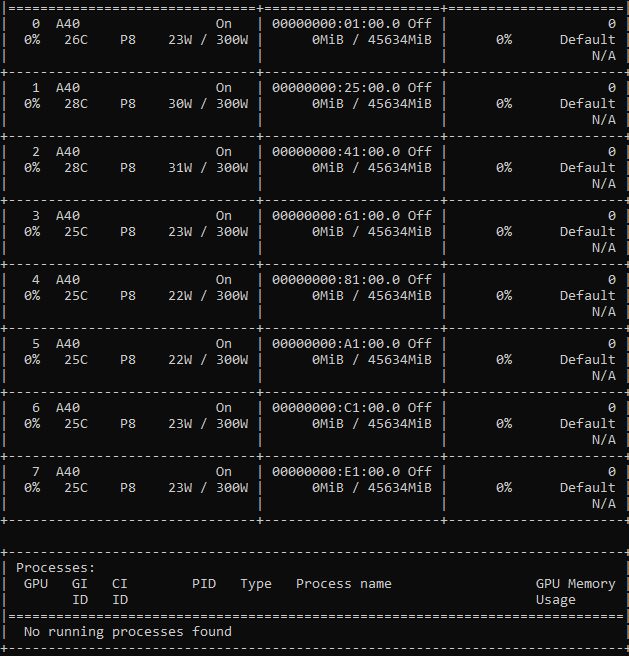

Since we know people love these screenshots, here they are. While the maximum power consumption we saw was in the 296-300W range, each GPU idled at only 22-31W, and idle temperatures were very cool.
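For readers who want to collect similar idle and load figures themselves, `nvidia-smi` can emit per-GPU readings as CSV via `--query-gpu` and `--format=csv,noheader,nounits`. This sketch parses that output. The field selection is our own choice, and because some GPUs report `[N/A]` for power, the parser maps non-numeric values to `None`.

```python
import csv
import io
import subprocess

FIELDS = "index,power.draw,temperature.gpu"


def _num(value):
    """Convert a CSV field to float, mapping values like '[N/A]' to None."""
    try:
        return float(value)
    except ValueError:
        return None


def parse_gpu_csv(text):
    """Parse nvidia-smi CSV output (noheader, nounits) for FIELDS."""
    rows = []
    for record in csv.reader(io.StringIO(text)):
        if not record:
            continue
        index, power, temp = (field.strip() for field in record)
        rows.append({"index": int(index), "power_w": _num(power), "temp_c": _num(temp)})
    return rows


def query_gpus():
    """Run nvidia-smi once and return one dict per GPU."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={FIELDS}", "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    )
    return parse_gpu_csv(result.stdout)


def power_range(rows):
    """(min, max) power draw in watts across GPUs that report it."""
    powers = [r["power_w"] for r in rows if r["power_w"] is not None]
    return min(powers), max(powers)
```

Calling `query_gpus()` once at idle and again under load, then passing each result to `power_range()`, gives the spread of per-GPU power draw for each state.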

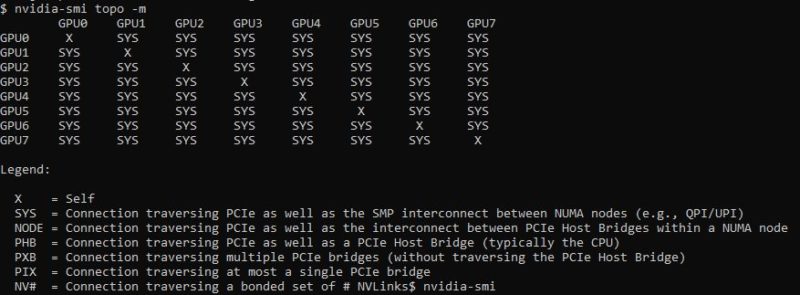

Here is the topology map. We can see the power of AMD EPYC PCIe Gen4 connectivity here.
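Topology maps like this one are typically generated with `nvidia-smi topo -m`. Below is a rough sketch that parses the GPU-to-GPU portion of that matrix and translates the connection codes, paraphrasing the legend nvidia-smi prints. It assumes the GPU columns come first in the header and that the output may contain terminal escape codes; NIC rows and affinity columns are ignored.

```python
import re

ANSI_ESCAPE = re.compile(r"\x1b\[[0-9;]*m")  # terminal formatting codes
GPU_LABEL = re.compile(r"^GPU\d+$")

# Paraphrase of the legend printed by `nvidia-smi topo -m`.
LEGEND = {
    "X": "self",
    "SYS": "PCIe plus the SMP interconnect between NUMA nodes",
    "NODE": "PCIe plus the interconnect between host bridges in one NUMA node",
    "PHB": "PCIe plus a PCIe host bridge (typically the CPU)",
    "PXB": "multiple PCIe bridges, without the host bridge",
    "PIX": "at most a single PCIe bridge",
}


def parse_topo(text):
    """Return {gpu: {peer_gpu: link_code}} from `nvidia-smi topo -m` output."""
    cleaned = (ANSI_ESCAPE.sub("", raw) for raw in text.splitlines())
    lines = [line for line in cleaned if line.strip()]
    gpus = []
    for token in lines[0].split():
        if not GPU_LABEL.match(token):
            break  # NIC and affinity columns follow the GPU columns
        gpus.append(token)
    matrix = {}
    for line in lines[1:]:
        tokens = line.split()
        if tokens and GPU_LABEL.match(tokens[0]):
            matrix[tokens[0]] = dict(zip(gpus, tokens[1:1 + len(gpus)]))
    return matrix


def describe(code):
    """Human-readable meaning of one link code such as 'SYS' or 'NV4'."""
    if code.startswith("NV"):
        return f"bonded set of {code[2:]} NVLinks"
    return LEGEND.get(code, "unknown")
```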

Overall, performance was solid for a system in this class.

Next, let us get to power consumption and then to the STH Server Spider and our final words.

Wow. What a system!!!

eth hashrate?

Very interesting system Patrick. Thanks for making this article about it. It is rather impressive to see this amount of GPU and CPU power, along with full DIMM capacity. It is not clear from the ASUS website or the article, but it appears that this system also repurposes one xGMI link, like the R7525, to reach 160 PCIe lanes.
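The 160-lane figure in the comment above follows from simple arithmetic. This assumes the usual dual-socket EPYC 7003 design, where each socket has 128 PCIe Gen4-capable lanes and each xGMI socket-to-socket link consumes x16 lanes on each socket. Whether the ESC8000A-E11 actually runs three xGMI links is not confirmed here.

$$
\begin{aligned}
\text{4 xGMI links: } & 2 \times (128 - 4 \times 16) = 2 \times 64 = 128 \text{ lanes} \\
\text{3 xGMI links: } & 2 \times (128 - 3 \times 16) = 2 \times 80 = 160 \text{ lanes}
\end{aligned}
$$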

The screenshots from the ASPEED AST2600 iKVM look exactly like the ones from the Lenovo SR655.

What is the purpose of the SD card slot? Would you use it as an OS drive?