This is going to be a fun one for many STH readers. We have reviewed 8x and 10x GPU servers (PCIe) for many years on STH. The ASUS ESC8000A-E11 is built a bit differently than other options, which makes it a fascinating design to look at. This server has space for eight double-width PCIe Gen4 accelerators, two AMD EPYC CPUs, and additional expansion. This server was also just announced this week at NVIDIA GTC 2021, so this is absolutely a brand new server on the market (we did not cover that announcement since this review was forthcoming). Let us get to our review so we can start taking a look at how these systems are made.

ASUS ESC8000A-E11 Hardware Overview

For this review, we are going to take a look at the exterior of the server, then the interior. As a fun note here, I got a new lens the day before this review was published so all of these photos were re-shot on the Canon 50mm f/1.2L. Years ago STH photos were all done on a 50mm lens, and I wanted to try a review using the same focal length.

ASUS ESC8000A-E11 External Hardware Overview

The front of the server is nothing short of fascinating. This is a 4U system, and there are some distinctive ASUS features here. The top is an area designed for expansion and customization. The bottom has I/O and is designed for airflow. The middle incorporates 8x 3.5″ drive bays.

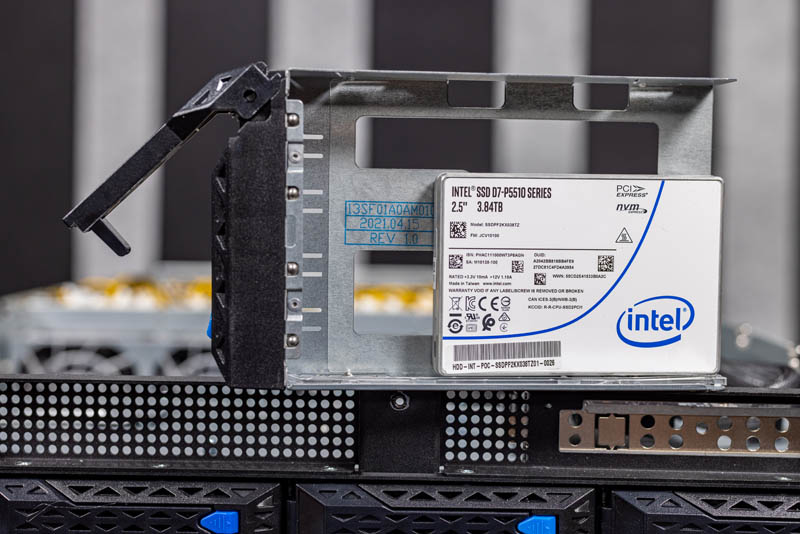

The 3.5″ drive bays can be SAS, SATA, or NVMe depending on how the backplane is configured. One can also use an adapter to fit 2.5″ drives into this 3.5″ drive tray.

Another feature that is really interesting is that we have normal power and reset buttons on the front. What is a bit more unique is that we have the Q Code LCD that shows the POST status codes. There is also a USB and a VGA port. This system is designed to be serviced by a technician through a cold aisle. In racks where there are 10 of these systems each using 3kW+ it is usually much more pleasant to service from the cold aisle versus the hot aisle.

Another feature you may have spotted is that there are two sets of two fans. These fans are specifically there to cool the AMD EPYC CPUs that we will discuss more during our internal overview.

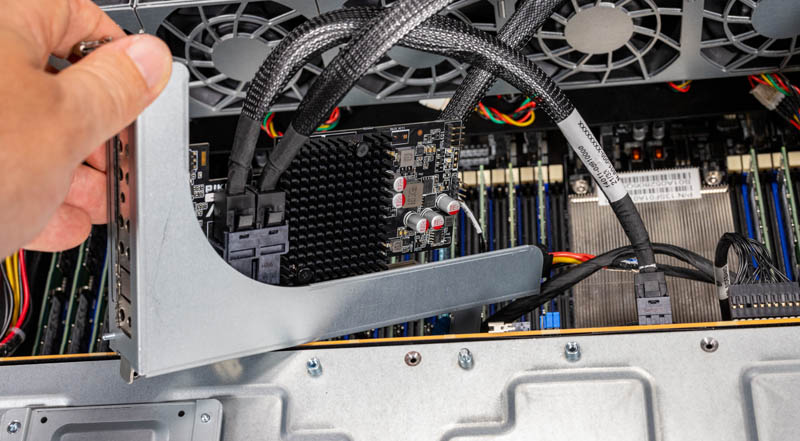

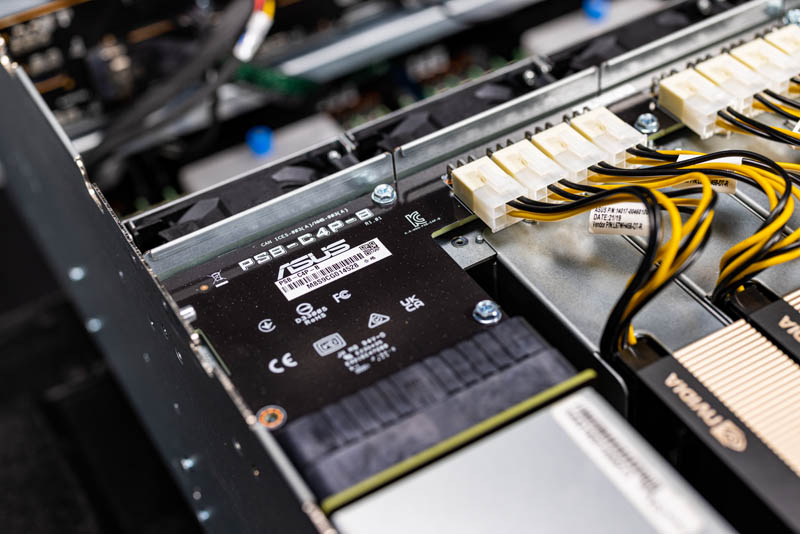

The top section in our test system has a PIKE II card providing SAS connectivity. One can see that the system has additional mounting points along the front for different functions that we do not have, but we will show an example of an M.2 carrier in our internal overview.

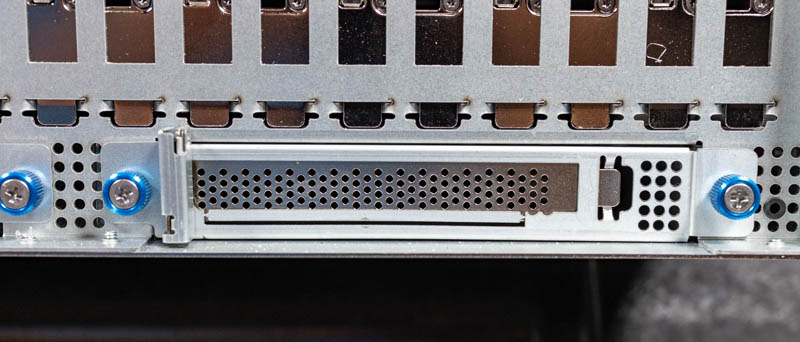

The expansion slot on the front is a PCIe Gen4 x16 slot with an x8 link. This matches well with an HBA or RAID controller like the PIKE SAS 3008-8i solution.
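For a rough sense of what an x8 link offers compared with a full x16 link, here is a minimal Python sketch. It assumes the standard PCIe Gen4 signaling rate of 16 GT/s per lane with 128b/130b encoding and ignores protocol overhead, so real-world throughput will be lower. The function name is our own for illustration, and the installed card may negotiate a lower PCIe generation than the slot supports.

```python
# Theoretical one-direction PCIe Gen4 bandwidth, before protocol overhead.
GEN4_GT_PER_S = 16.0          # giga-transfers per second, per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def pcie_gen4_gb_per_s(lanes: int) -> float:
    """Return the theoretical GB/s for a Gen4 link with the given lane count."""
    if lanes <= 0:
        raise ValueError("lanes must be positive")
    # Each transfer carries one bit per lane; divide by 8 to get bytes.
    return lanes * GEN4_GT_PER_S * ENCODING_EFFICIENCY / 8


for lanes in (8, 16):
    print(f"x{lanes}: {pcie_gen4_gb_per_s(lanes):.2f} GB/s per direction")
```

Even at x8, the link has plenty of headroom for an eight-port SAS controller feeding the front drive bays.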

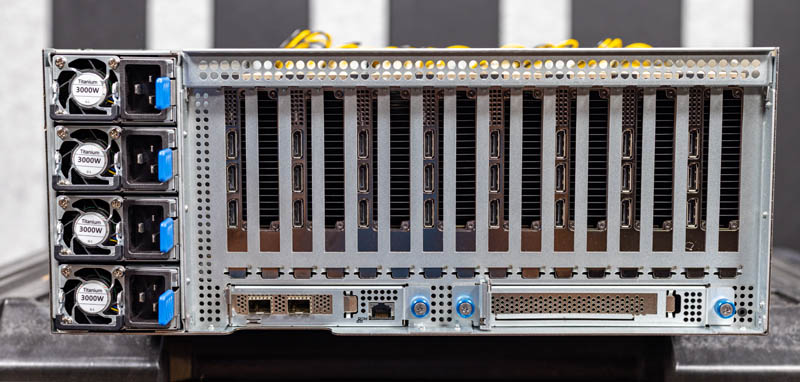

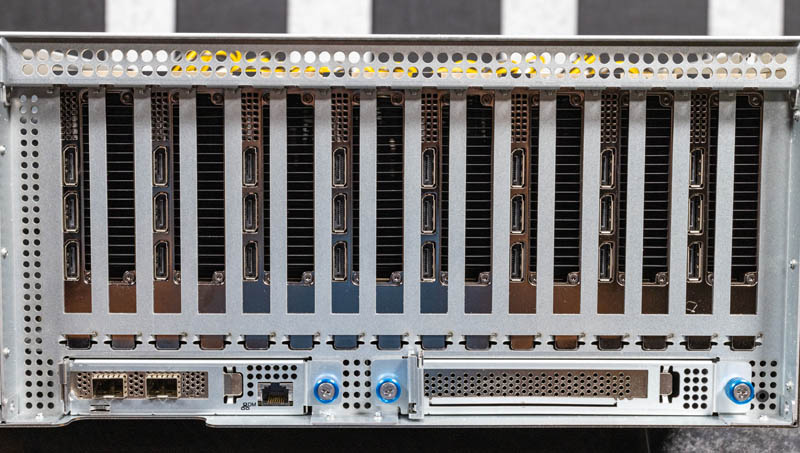

This system is very rear-heavy when configured, and this shot shows why. The rear of this system is dedicated to expansion slots and power supplies.

There are four power supplies. Each is an 80Plus Titanium unit that can put out up to 3kW in the 220V-240V range. This design gives a great level of power redundancy to the system.
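To make the redundancy point concrete, here is a small Python sketch of how much PSU capacity remains as supplies drop offline. It uses the four 3kW units described above. The redundancy mode is not something we state here, so the loop simply shows every case, and the function name is hypothetical. Actual usable power also depends on input voltage and how the system is configured.

```python
PSU_COUNT = 4   # four power supplies in the rear of the chassis
PSU_KW = 3.0    # up to 3kW each in the 220V-240V range


def remaining_capacity_kw(psu_count: int, psu_kw: float, failed: int) -> float:
    """Return total PSU output capacity (kW) with `failed` supplies offline."""
    if not 0 <= failed <= psu_count:
        raise ValueError("failed must be between 0 and psu_count")
    return (psu_count - failed) * psu_kw


for failed in range(PSU_COUNT + 1):
    capacity = remaining_capacity_kw(PSU_COUNT, PSU_KW, failed)
    print(f"{failed} PSU(s) offline: {capacity:.1f} kW available")
```

If a configured system draws a bit over 3kW, as in the rack example earlier, two working supplies would still cover it under these assumptions.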

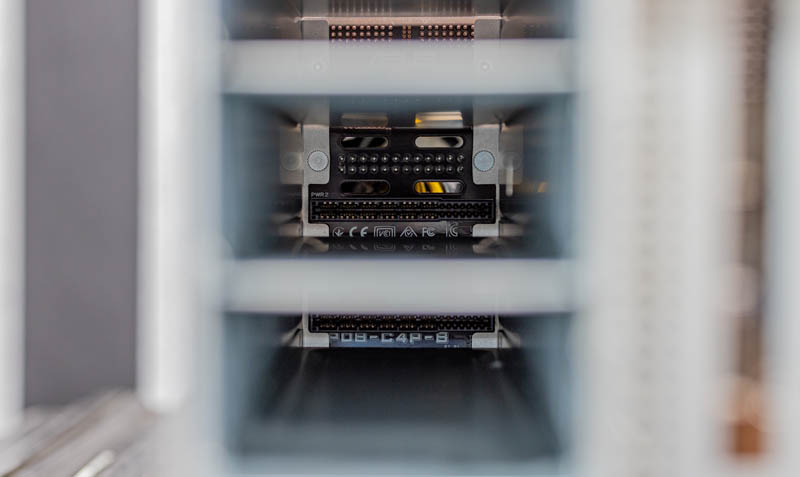

We have recently gotten some requests to show how these PSUs are connected. Here is a look into an empty PSU slot so you can see the connector as well as the perforated backplane, designed to allow air to pass through the PCB and through the PSU.

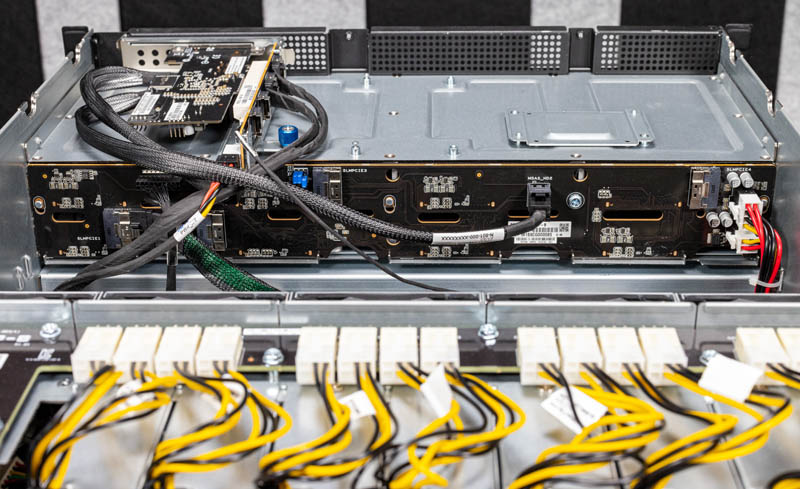

Here is a quick shot of the power distribution board.

Aside from power, there are 10x PCIe expansion slots in the rear of the system to go along with the PIKE slot on the front. Eight of these PCIe Gen4 x16 slots are double-width for GPUs.

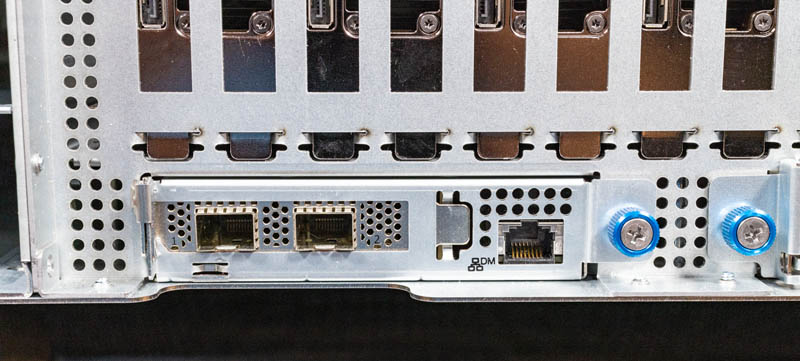

On the bottom left of this area we have a NIC in a low-profile slot along with a management port.

One can see this assembly is relatively easy to service by unscrewing the thumb screw and pulling the assembly out from the chassis. The management NIC is on another PCB on this tray as well.

The other slot is a full-height expansion slot.

This extends for another PCIe Gen4 x16 full-height slot.

Of course, the real magic happens inside, and so let us get to the internal hardware overview.

Wow. What a system!!!

eth hashrate?

Very interesting system Patrick. Thanks for making this article about it. It is rather impressive to see this amount of GPU and CPU power, along with full DIMM capacity. It is not clear from the ASUS website or the article, but it appears that this system also repurposes one xGMI link, like the R7525, to reach 160 PCIe lanes.

The screenshots from the ASPEED AST2600 iKVM look exactly like the Lenovo SR655.

What is the purpose of the SD card slot? Would you use it as an OS drive?