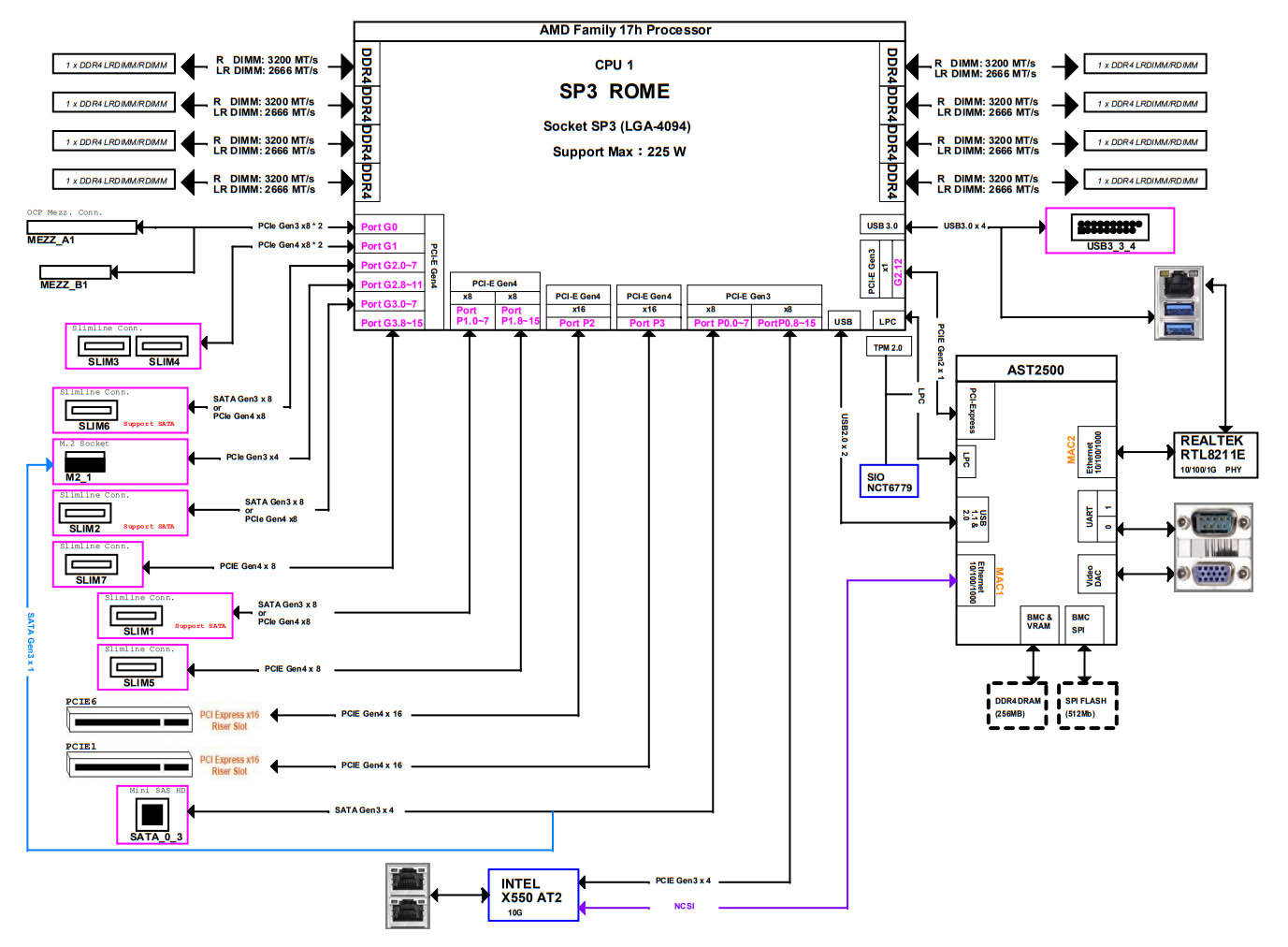

ASRock Rack 2U4G-ROME/2T Block Diagram

We did not have a block diagram for the server itself, but we were able to find the block diagram for the motherboard. This is a single-socket AMD EPYC 7002/7003 platform, so there is only one CPU and no PCH, which means the topology is relatively straightforward.

Overall, a lot of the PCIe I/O is being used here. It is actually quite impressive just how much functionality is being exposed using a standard ATX motherboard. At STH we have discussed how cabled PCIe connections will become more common, and this is a great example of why that is the case.

ASRock Rack 2U4G-ROME/2T Performance

We have already taken a look at a number of AMD EPYC CPUs as well as GPUs, so here we wanted to look at whether the cooling still allowed for maximum performance in the ASRock Rack platform.

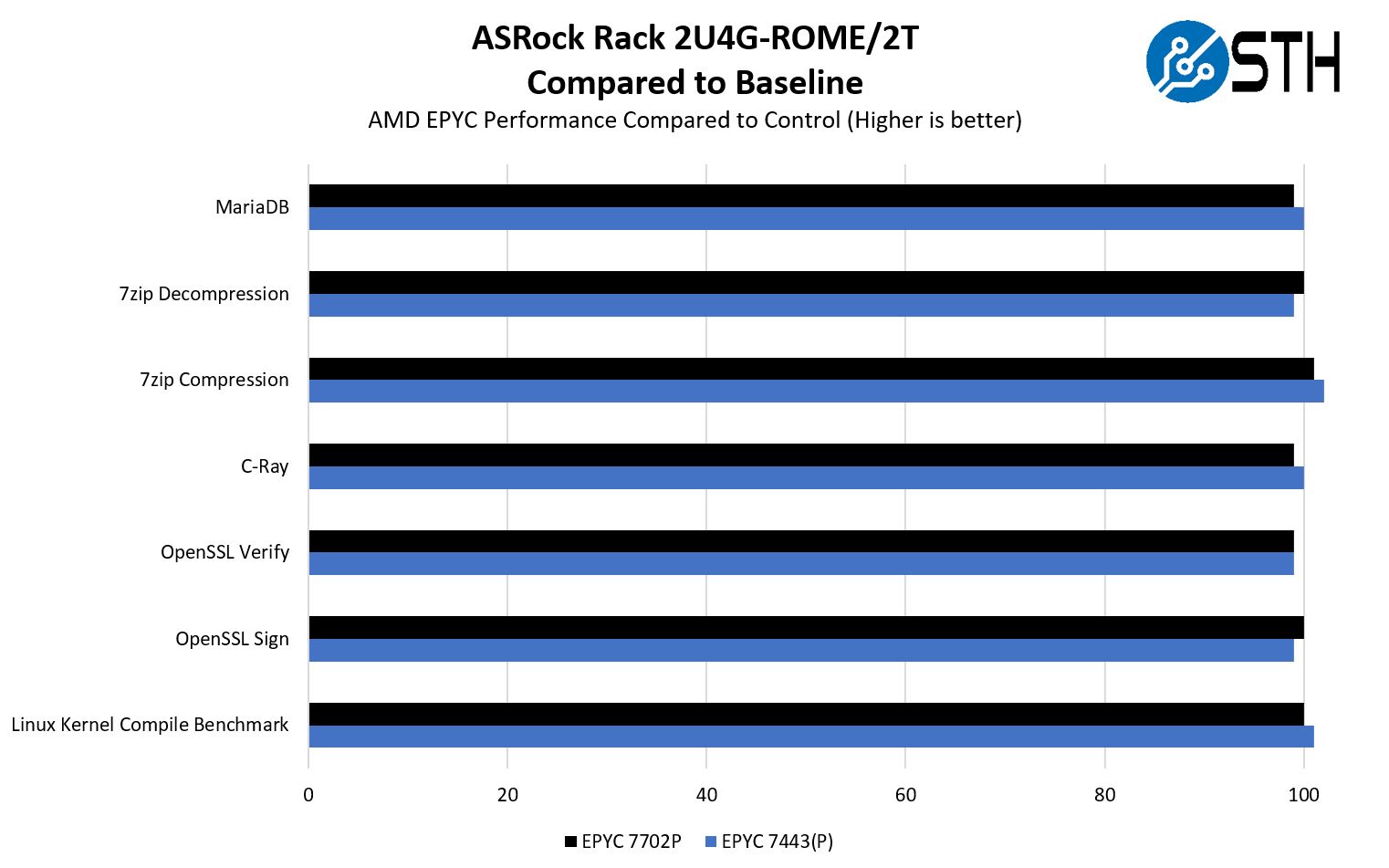

ASRock Rack 2U4G-ROME/2T CPU Performance to Baseline

For this exercise, we are using our legacy Linux-Bench scripts, which help us see the cross-platform “least common denominator” results we have been using for years, as well as several results from our updated Linux-Bench2 scripts.

We wanted to look at two single-socket-focused SKUs. In a system like this, AMD’s discounting for its “P” series parts means that one can benefit from further cost savings with a single AMD EPYC versus dual Intel Xeons driving so many PCIe Gen4 lanes. Since we do not yet have the EPYC 7443P, but we do have the EPYC 7443, and have previously found P series parts to perform nearly identically to the standard parts, we are going to say that we expect an EPYC 7443P would behave similarly to what we saw here.

Overall, from a CPU perspective, the ASRock Rack system is performing in line with our control CPU test platform. That means that, despite having four GPUs onboard, the system is able to cool the CPU relatively well. When we looked at the hardware design, this was a major question we had. We could zoom in on the chart above, but to save bandwidth we will simply note that these results were within a +/- 2% margin, which we consider an allowance for test variation.
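As a rough illustration of that allowance, the comparison can be sketched in Python. This is a minimal sketch and not the Linux-Bench tooling: the function name `within_allowance` and the sample scores are hypothetical, and only the 2% tolerance comes from the text.

```python
def within_allowance(measured: float, baseline: float, tolerance: float = 0.02) -> bool:
    """Return True if `measured` is within +/- `tolerance` (relative) of `baseline`."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    # Relative difference between the system under test and the control platform.
    relative_delta = (measured - baseline) / baseline
    return abs(relative_delta) <= tolerance


# Hypothetical scores, for illustration only (not results from this review).
print(within_allowance(99.0, 100.0))  # 1% below baseline: inside the allowance
print(within_allowance(97.0, 100.0))  # 3% below baseline: outside the allowance
```

A relative tolerance is used rather than an absolute one so the same check applies to benchmarks with very different score scales.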

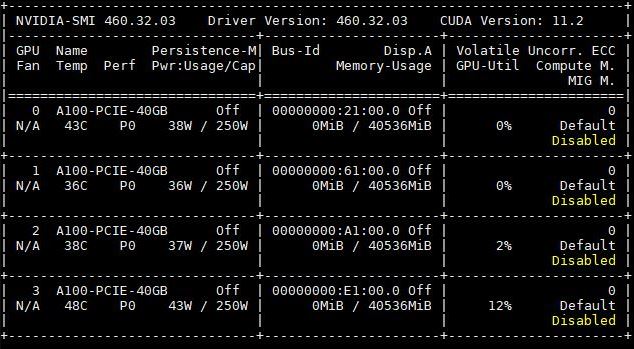

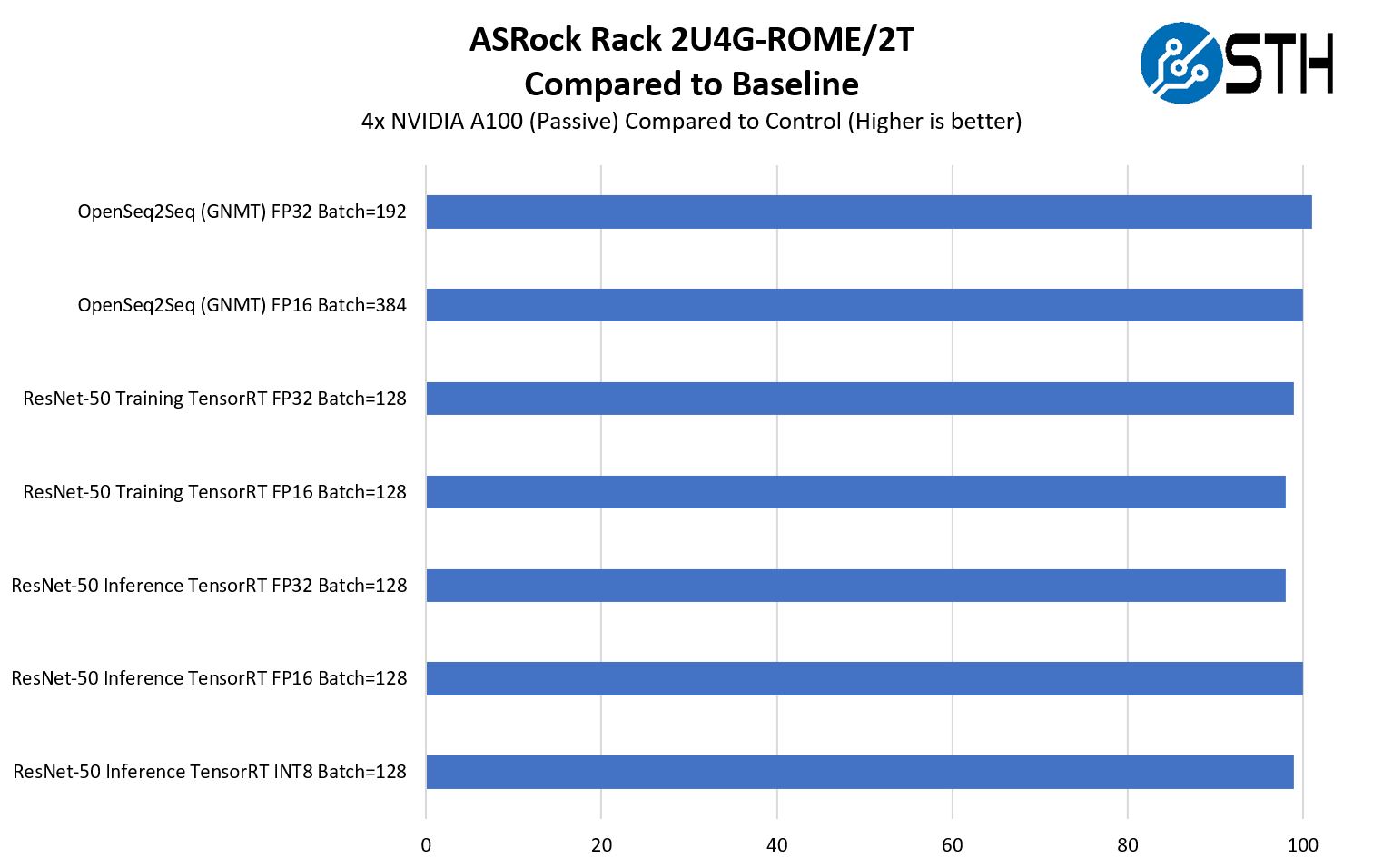

ASRock Rack 2U4G-ROME/2T GPU Performance to Baseline

We wanted to validate that the system was also able to cool all of the accelerators, since that is a major feature of the server. Here we are using four passively cooled NVIDIA A100 40GB PCIe cards that we put into one of our 8x GPU boxes. We also reviewed the ASUS RS720A-E11-RS24U dual-socket system and have a Dell EMC PowerEdge R750xa review coming.

In terms of cooling, we saw that this system was able to keep the GPUs cool in our testing. Although the figures were within our test allowance, performance was on average about 1% lower than baseline with the four A100s. That is acceptable, but we wanted to point it out since it looks more pronounced on the chart.

Something that we did not do was manually fail the single fan cooling one of the front GPUs that we showed in our hardware overview. We have a lot of testing to do with the A100s and unfortunately cannot risk one by testing that type of failure event.

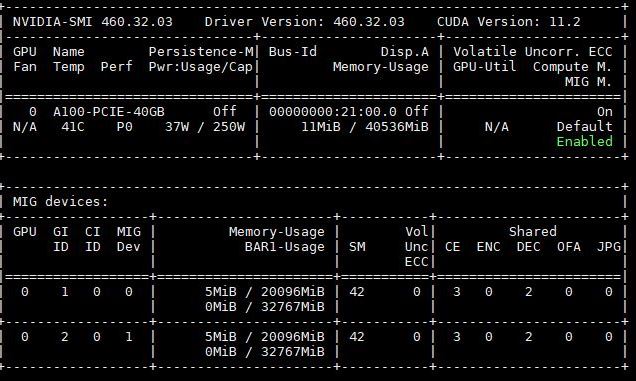

One other feature we wanted to mention is that the NVIDIA A100s we have here support MIG, or Multi-Instance GPU. This allows one to partition a GPU into multiple smaller slices. Here is an example of partitioning a single 40GB A100 into two 20GB slices.

This is important since it practically means that one can get up to seven GPU instances per card. With four A100s, that means this server could have up to 28 A100 slices. For inferencing workloads, a single A100 is often too much. Using MIG functionality means one can often get the benefit of having many smaller GPUs, like NVIDIA T4s, without having to install so many physical cards.
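The slice arithmetic can be sketched in Python as follows. This is a hedged illustration only: the profile names and per-card counts reflect NVIDIA's published MIG profiles for the A100 40GB and should be treated as assumptions rather than anything measured in this review, and `max_slices` is a hypothetical helper name.

```python
# Assumed homogeneous MIG layouts for one A100 40GB: profile -> instances per card.
# Based on NVIDIA's published MIG profiles; not taken from this review's testing.
A100_40GB_PROFILES = {
    "1g.5gb": 7,   # smallest slice, up to seven per card
    "2g.10gb": 3,
    "3g.20gb": 2,  # the "two 20GB slices" layout mentioned in the text
    "4g.20gb": 1,
    "7g.40gb": 1,  # the whole GPU as a single instance
}


def max_slices(num_gpus: int, profile: str = "1g.5gb") -> int:
    """Total MIG instances when every card uses the same profile."""
    if num_gpus < 0:
        raise ValueError("num_gpus must be non-negative")
    return num_gpus * A100_40GB_PROFILES[profile]


print(max_slices(4))             # four cards at seven slices each
print(max_slices(4, "3g.20gb"))  # four cards split into two 20GB slices each
```

With four cards, the smallest profile gives the 28 slices mentioned above, while the two-way 20GB split gives eight larger instances.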

One item we could not test was NVLink in this system. The four separate PCIe GPU risers meant that we could not utilize NVLink bridges on the A100s, as we did in this photo, to get NVLink support in this system. NVLink is not relevant for all GPU configurations, and perhaps not even for the most common ones in the 2U4G-ROME/2T. At the same time, there are servers out there, like the PowerEdge R750xa that we are reviewing soon, that will support this. We just have to point that out since it is a design implication of the server.

Overall though, we saw good CPU and GPU performance in this platform.

Next, we are going to move to our power consumption, server spider, and final words.

We plan to use this chassis for our new AI stations.

Would the GPU front risers provide enough space to also fit a 2.5-slot-wide GPU card with the fans on top, like an RTX 3090?

I just like the fact STH has honest feedback in its reviews.

Thanks, Patrick!

Markus,

I was wondering the same thing as you!

The HH riser rear cutout also looks like it could take two single-width cards.

In fact, I will be surprised if this chassis doesn’t attract a lot of attention from intrepid modders changing the riser slots for interesting configurations.

jetlagged,

I think I have to order one and test it myself.

At the moment we are designing the servers for our new AI GPU cluster system. Since it looks like NVIDIA has successfully removed all the 2-slot-wide turbo versions of the RTX 3090 with the radial blower design from the supply chain, it has become very difficult to build affordable, space-saving servers with lots of “inexpensive” consumer GPU cards.

Neither the ASRock website nor this review specifies the type of NVMe front drive bays supplied or the PCIe generation supported. This is important information to know in order to make a sourcing decision. AFAIK, there are only M.2, M.3, U.2, and U.3 (backwards compatible with U.2). Most other manufacturers colour code their NVMe bays so that you can quickly identify what generation and interface are supported. What does ASRock support with its green NVMe bays?