Last week, Apple announced a number of new products. Of those, I wanted to focus on the Apple Mac Studio and the Apple M1 Ultra, and specifically on why these new parts are going to be more important in the years to come.

The Apple M1 Ultra Shows the Future of Chip Design

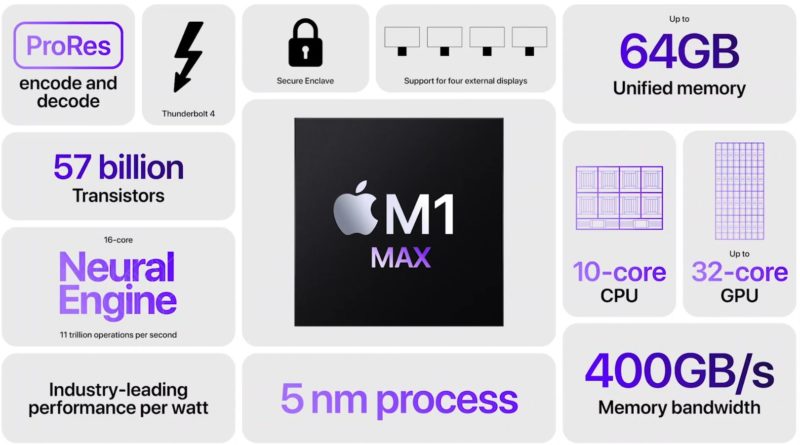

A few days ago, we covered Universal Chiplet Interconnect Express (UCIe) 1.0, which aims to create a modular approach to building chip packages. We know that different processes are better for things like analog, SRAM, and logic, so it is starting to make sense to separate the different pieces into different tiles or chiplets and then integrate them. The Apple M1 started a trend at Apple of integrating LPDDR with a SoC containing Arm CPU cores, GPU cores, accelerators for video and AI, and the rest of the I/O. With the M1 Pro and M1 Max, we got more. I am typing this from Alberta, Canada on an Apple MacBook Pro 14 with a 64GB M1 Max SoC onboard.

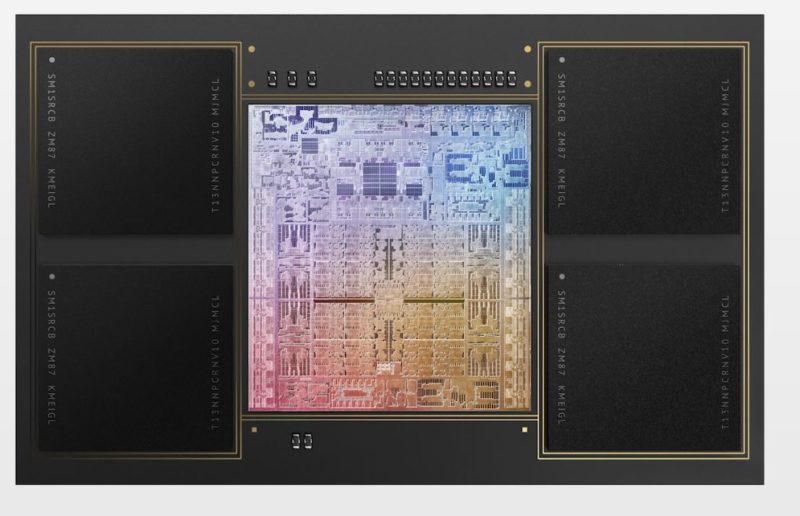

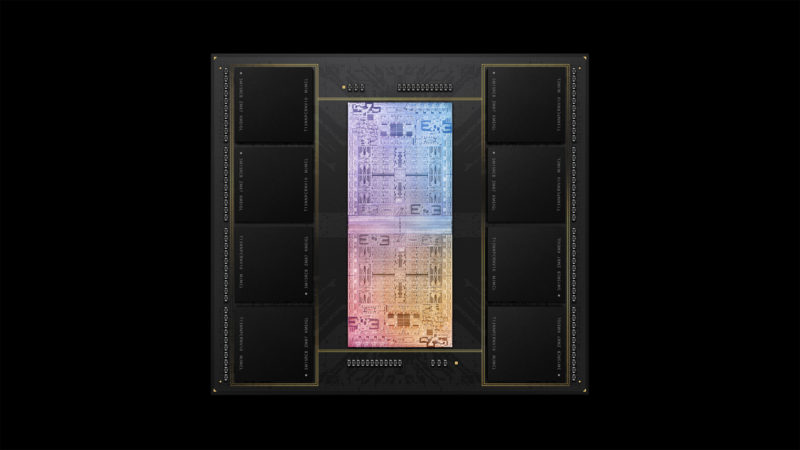

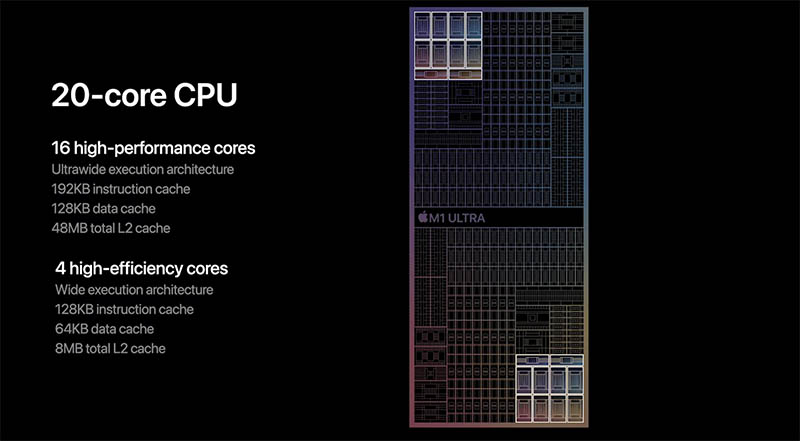

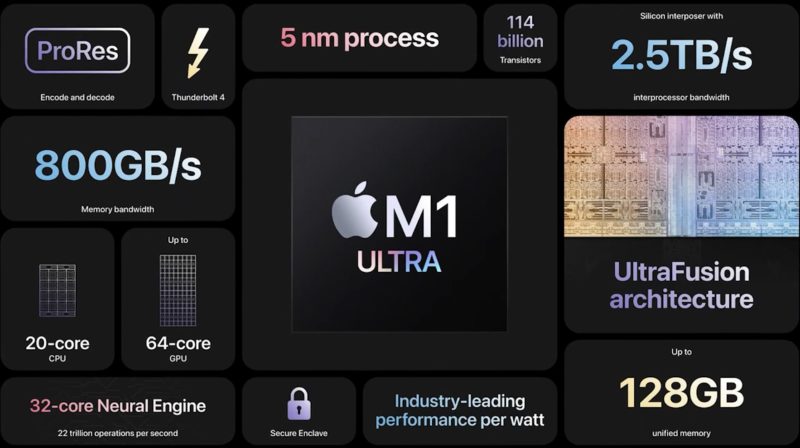

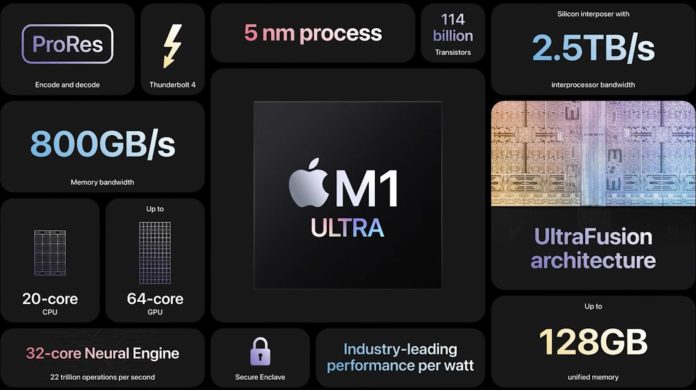

The M1 Ultra adds another layer of complexity. Namely, it doubles the compute and memory of the M1 Max.

There are several points that are worth noting for the future of chip design. First, let us get to the co-packaging of memory and the compute resources. We had a piece some time ago about Server CPUs Transitioning to the GB Onboard Era, and Apple is already there. M1 Ultra systems cost about as much as single-socket servers based on higher-end sockets, so this is very applicable here.

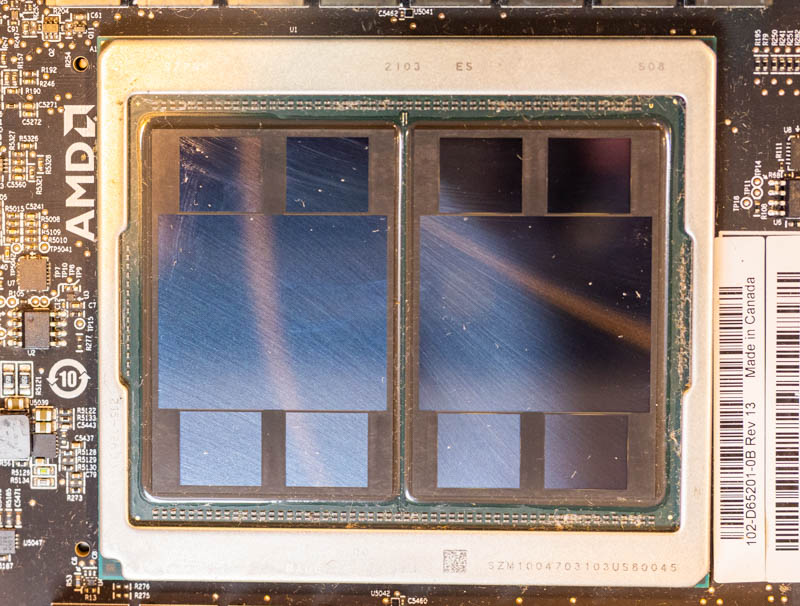

There is also a lot that looks somewhat familiar. AMD has the MI250X GPU, which has two GPU dies, each with four memory packages connected. What Apple is doing is in many ways extremely different in terms of interconnect, memory, and the fact that each die is a CPU with accelerators, not just a GPU. Still, one can see a similar design philosophy.

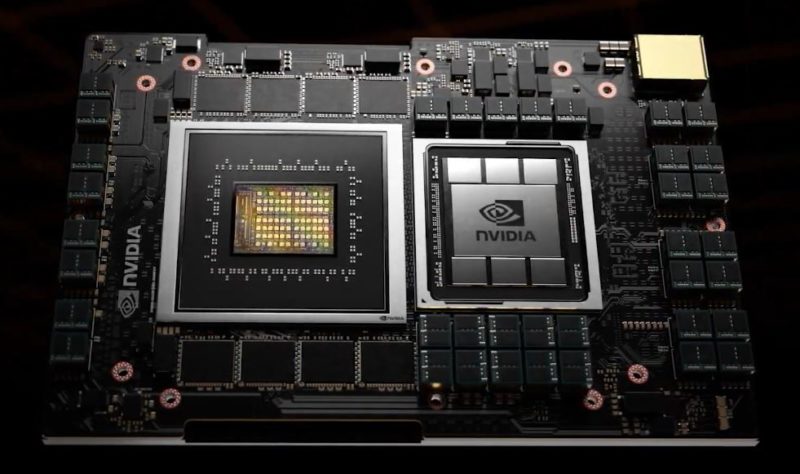

Last year, NVIDIA also announced a future product, Grace, where we can see LPDDR along the sides of the Arm CPU.

The bottom line is that we are moving into more complex packages, and Apple is moving along this same trendline.

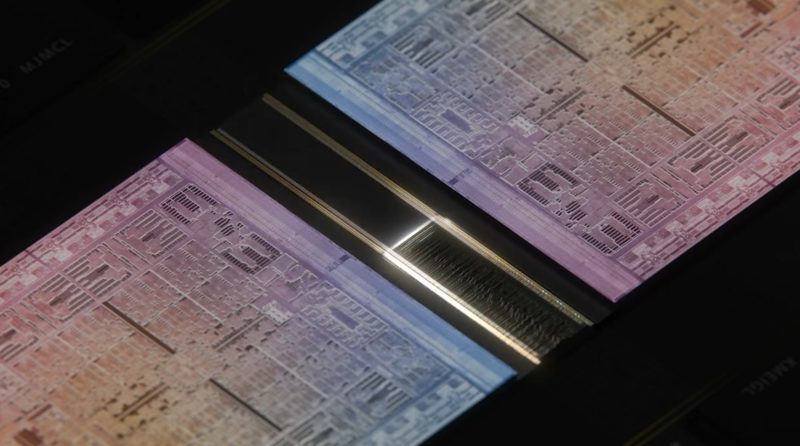

Second, there is a high-speed interconnect between the two M1 Max halves that make up the M1 Ultra. Interconnect is a huge point for future generation chips. We see companies talking about bump density and bits per Joule of efficiency. Apple claims massive bandwidth between these two halves. The important part, though, is that the interconnect is fast enough that the system logically sees the two GPU parts and the two CPU parts as single pieces of silicon. This is a big deal for the industry. It means Apple is moving along the high-speed packaging and technology trendline for its products.

Third, we have heavy use of accelerators. Benchmarks are one thing, but I also wanted to give some anecdotes of performance here. I have the M1 Mac Mini and had the 13″ MacBook Pro with the M1. Both have OK performance, but in general, under many tasks it felt like working on a lower-end Core i3 Project TinyMiniMicro node, or worse. There were some things, like video editing, that are clearly better on the M1 than on a Core i3. Under heavy multitasking, the challenge seemed to be macOS managing memory. Even on the 16GB model, it was very noticeable on both the Mac Mini and the MacBook Pro M1 when switching to a browser tab that was not fresh. In contrast to the Mac Mini, for example, one can load most modern 1L PCs with two 32GB SODIMMs and rarely feel that issue. The key here is that Apple understands that a modern PC needs more than just CPU cores, and so relatively little die space is devoted to the Arm CPU cores.

A pretty typical day for me has an e-mail client open, usually a few PDFs, Adobe Photoshop, Lightroom, and Premiere Pro, along with many browser tabs. While Apple made it sound like the M1 would be perfect, it was clear that this was not the case. Memory bandwidth is great, but memory capacity is still important. With the completely co-packaged memory, there was a 16GB limit, and that 16GB was both CPU and GPU memory.

Fast forward to the M1 Max in the 14″ MacBook Pro, and I love it. With the big Max chip and 64GB, I no longer run into the long task switching times. This does not seem to have been a single-threaded performance limitation, but rather a memory capacity one, so the Max clearly fixes that. The M1 Max’s video encoding speed is awesome due to onboard accelerators. While the M1 Max’s performance gains are not noticeable in a lot of what I do, when it comes to rendering video, this is a superstar chip.

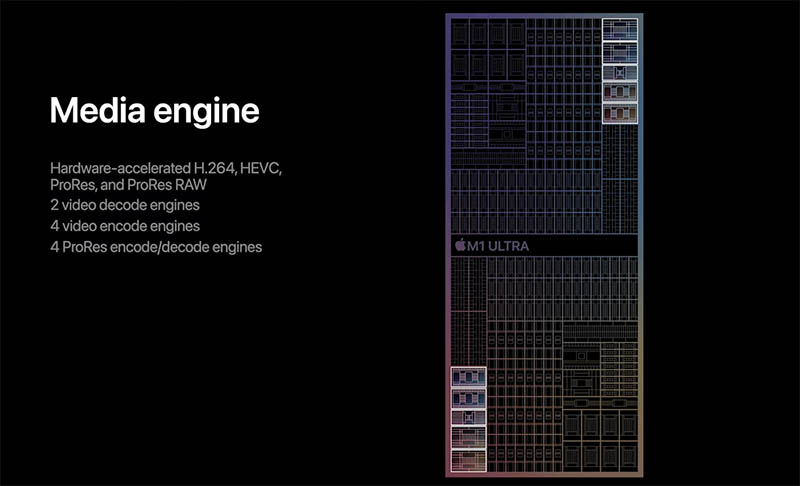

If you look closely at why, it somewhat makes sense. Apple leans heavily on accelerators for video work, so tasks like playing back and encoding video run on specialized hardware. With the M1 Ultra, we effectively have a double M1 Max, which scales the AI engines and media accelerators as well. That means more display outputs. Although that is still fewer raw outputs than modern Alder Lake 1L PCs, it is a lot better than the original M1 Macs. For reference, the HP Elite Mini 800 G9 with dGPU can power seven displays from a 1L desktop now.

At STH, we covered the importance of AI engines in AWS EC2 m6 Instances Why Acceleration Matters. The Apple M1 series chips are OK CPUs and GPUs. They have absolutely awesome media capabilities because of the dedicated accelerators. Apple’s vision with the M1 Ultra is not to go after expandable workstations like the AMD Ryzen Threadripper Pro 5000 Series. Instead, it is to provide a platform with heavy acceleration for content creators.

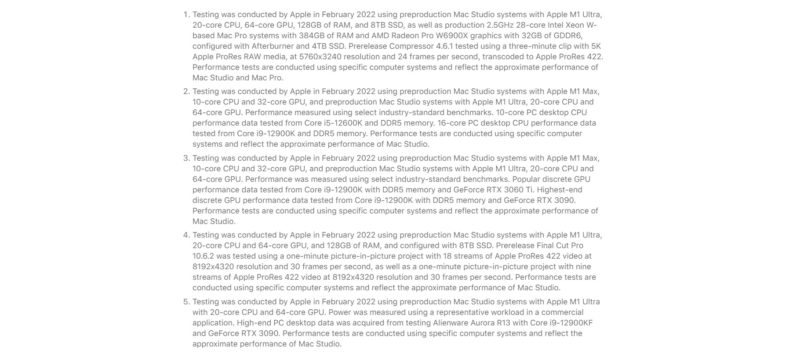

If you look at the footnotes to many of the benchmarks, Apple is often utilizing ProRes acceleration for its performance claims. It has a target market, and it is not gamers nor those that need high-end storage, networking, and heavy multi-GPU compute.

We have a quick video on some thoughts with the Apple M1 Ultra, AMD Threadripper 5000 Series, and the future of chip design here:

As always, we suggest opening this in its own YouTube window, tab, or app for the best viewing experience.

Final Words

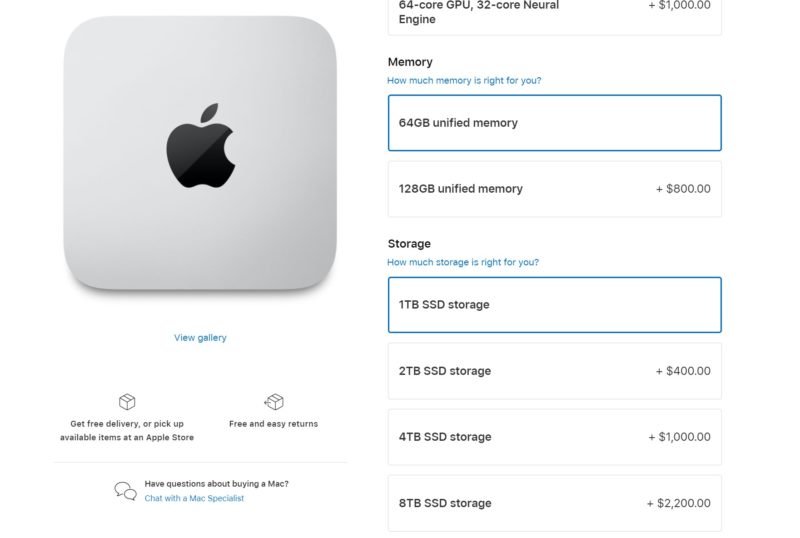

With all of this said, the M1 Ultra Mac Studio with 128GB still seems to be a product of the future, and an expensive one at that. While one can get a 64GB DDR4-3200 RDIMM for $330 to add capacity to a modern workstation, Apple charges $800 and limits one to 128GB of shared GPU and CPU memory. In some cases sharing is good, but if you need memory for your CPU, that cap is clearly a limiting factor. Also, the SSDs are extremely expensive. Apple is affixing the NAND and LPDDR to the motherboard and package, and that forces folks to buy the upgrades up front. Despite Apple’s claims of being green, this means that if anything fails, everything is replaced, leading to more e-waste.
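To put the memory pricing above on a per-GB basis, here is a minimal Python sketch. It uses only the two prices quoted in this article. One assumption is not stated above: that Apple's $800 buys an additional 64GB (the step from 64GB to 128GB). The function and variable names are purely illustrative.

```python
def cost_per_gb(price_usd: float, capacity_gb: int) -> float:
    """Return the price in USD per GB of memory."""
    return price_usd / capacity_gb


# Prices quoted in the article.
rdimm_per_gb = cost_per_gb(330, 64)   # 64GB DDR4-3200 RDIMM at $330

# ASSUMPTION: the $800 Apple upcharge adds 64GB (64GB -> 128GB).
apple_per_gb = cost_per_gb(800, 64)

premium = apple_per_gb / rdimm_per_gb

print(f"RDIMM: ${rdimm_per_gb:.2f}/GB")
print(f"Apple: ${apple_per_gb:.2f}/GB")
print(f"Apple premium: {premium:.2f}x")
```

Under that assumption, the Apple memory works out to roughly 2.4 times the per-GB price of the RDIMM. This is not a like-for-like comparison, since co-packaged LPDDR and socketed DDR4 RDIMMs differ in bandwidth and packaging.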

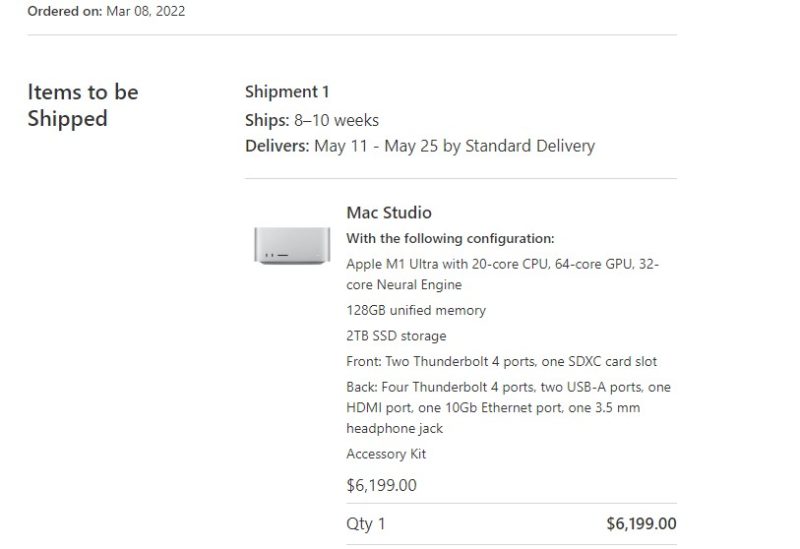

For now, we are going to wait to get ours. Even though we ordered just as they became available last week, the 128GB, 20-core CPU, and 64-core GPU models were realistically two months away. The same thing happened with the M1 Max, and that is another downside to tight integration.

Still, we hope to bring you more once ours arrives. There are certainly a lot of great things that Apple is doing, and many very forward-looking things, but there are trade-offs being made.

“I can feel a shrink-ray hitting my bank account…” My wife teaches remotely, has 30K YouTube followers and has 3 Mac laptops collected over 10 years (all of which still work)…I can see a medium-end M1 Ultra (64-GB RAM, 48 GPU cores, 4-TB SSD) in her future.

I always buy her Macs knowing that A) I can’t upgrade them, B) they will last 5+ years, so I tend to go big on the configs…Mentally I think I need to set my NIC’s MTU to 9000 when ordering something off of Apple’s site (just to carry all those digits at checkout)…Ah, tending to 1000’s of Xeon/EPYC-based servers with DIMM and PCI slots and hot-swap drives felt so much less boxed in from Day One.

“I can feel a shrink-ray hitting my bank account…”

@Carl… MTU… have an upvote.

The 16GB shared RAM vs 32GB shared RAM is a good point. I’ve been getting by with 16GB RAM with the Intel iGPU, but it sounds like the Apple iGPU could be greedier.

Are there ways to see the RAM allocation break down?

It’s too bad Apple didn’t revisit the trashcan design :), and disappointing there aren’t a couple NVMe slots for extra storage. The Mac Mini Studio looks like a good upgrade to the Mac Mini, and I’ll probably pickup a refurbished model later simply because the Mac Mini only supports 2x displays.

I don’t particularly like the trend towards systems where everything is soldered together, that seems like a step backwards to me. It may be necessary for the CPU and at least partly the accelerators but I’m worried this is more about the bottom line of the seller instead of the technology itself. If the RAM or SSD in my Dell Precision fails I can replace it myself, which is a lot less of a loss in productivity as opposed to having to take it somewhere to be repaired or worse replaced entirely.