Today we are looking at the Supermicro SYS-220BT-HNTR, one of the company’s “BigTwin” models, which Patrick recently got some hands-on time with at Supermicro headquarters. This 2U server contains 4 individual server nodes, each of which boasts dual Intel Ice Lake CPUs. The industry shorthand for that layout is a 2U4N server. Not all 2U4N servers are the same though, and the BigTwin is Supermicro’s higher-end line. If Supermicro’s SYS-220GQ-TNAR+ was all about GPU density, then the BigTwin is its CPU-dense cousin. STH has looked at BigTwin configurations in the past, but today’s system is the first time we have had time with the updated 3rd generation Intel Xeon Scalable “Ice Lake” version.

Video Version

This is part of our visit to the Supermicro HQ series, similar to our recent coverage of the Supermicro Hyper-E 2U and SYS-220GQ-TNAR+.

We are going to have more detail in this article, but want to provide the option to listen. As a quick note, Supermicro allowed us to film the video at HQ, provided the systems in their demo room, and helped with travel costs to go do this series. We did a whole series while there and are tagging this as sponsored. Patrick was able to pick the products we would look at and had editorial control of the pieces (nobody outside of STH is reviewing these pieces before they go live either). In full transparency, this was the only way to get something like this done, including looking at a number of products in one shot, without going to a trade show. Look for the last piece in this series coming to STH over the coming weeks.

Supermicro SYS-220BT-HNTR Overview

This is probably the last time I will refer to this by its model number; BigTwin is simply easier to say. The front of the BigTwin is all storage:

A total of 24x 2.5″ U.2 locking drive bays are present, with each of the individual nodes allocated 6 bays. These are hybrid 2.5″ bays, and can accept either PCIe 4.0 NVMe or SATA drives in them. Additionally, you will notice that there are several vertical portions on the front of the chassis reserved for ventilation holes. Since the BigTwin is Supermicro’s premium 2U4N solution, it is designed to cool the higher-end CPUs and expansion components. Eight Xeon Ice Lake CPUs require lots of direct fresh airflow to keep cool, and this front ventilation provides the avenue for that fresh air to arrive.
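As a rough sketch of what that drive allocation means per node, the arithmetic can be written out as below. The bay and node counts come from the text above. The x4 link per NVMe bay is an assumption (it is the usual U.2 width, but the article does not state how this chassis is wired), and the bandwidth figure is a theoretical PCIe 4.0 link rate, not a measured result.

```python
# Values stated in the article.
TOTAL_BAYS = 24
NODES = 4

# Assumption, not from the article: every NVMe bay is wired as a x4 link.
LANES_PER_NVME_BAY = 4

# PCIe 4.0 signals at 16 GT/s per lane with 128b/130b encoding.
PCIE4_GT_PER_S = 16.0
ENCODING_EFFICIENCY = 128 / 130


def bays_per_node(total_bays: int = TOTAL_BAYS, nodes: int = NODES) -> int:
    """Front drive bays allocated to each node."""
    if total_bays % nodes != 0:
        raise ValueError("bays do not divide evenly across nodes")
    return total_bays // nodes


def nvme_lanes_per_node(bays: int, lanes_per_bay: int = LANES_PER_NVME_BAY) -> int:
    """PCIe lanes a node would need if every bay held an NVMe drive."""
    return bays * lanes_per_bay


def raw_link_gb_per_s(lanes: int) -> float:
    """Theoretical one-direction link bandwidth in GB/s, before protocol overhead."""
    return lanes * PCIE4_GT_PER_S * ENCODING_EFFICIENCY / 8


bays = bays_per_node()
lanes = nvme_lanes_per_node(bays)
print(f"{bays} bays per node, {lanes} PCIe 4.0 lanes if fully populated with NVMe")
print(f"Theoretical ceiling per node: {raw_link_gb_per_s(lanes):.1f} GB/s")
```

Real drives will land well below the link ceiling, so treat the second number as an upper bound on what the front bays could ever deliver to a node.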

One of the fun features, if you have never seen these, is that the rack ears have the power and status LEDs for the nodes, two on each rack ear.

Taking a look around the back of the server, we get to the magic of this configuration. There are four complete server systems housed in this 2U chassis, grouped around a pair of proprietary 2.6kW 80+ Titanium power supplies.

These 2.6kW 80+ Titanium power supplies are actually a big deal. Not only are they high-efficiency, a great attribute in a dense system like this, but they are a custom design. These PSUs are longer than the chassis is wide, and that length is what lets them be thin. As a result, more space on either side can be used by the nodes, allowing for more expandability on each node. This may seem like a small detail, but it is nuances like this that lead to differentiation in the 2U4N space.

Other than the physical metal and these shared power supplies, each of the 4 nodes you can see from the rear functions as a completely independent server.

Each node sports two LGA4189 sockets supporting Intel 3rd Gen Xeon Scalable Ice Lake CPUs. Thermal constraints limit this system to CPUs with up to 36 cores and a 205W TDP. Even with that, there is still enough power and cooling to house 288 Ice Lake cores across the 4 nodes. That 205W limit has a caveat though. In a data center with a cooler ambient temperature, for example, one can potentially go up the stack even further. Above 205W TDP, you will need to get the system/deployment validated.
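The 288-core figure follows directly from the node, socket, and core counts above, and the same multiplication gives the aggregate CPU TDP. A minimal sketch of that arithmetic is below. Note that the wattage total is only the sum of CPU TDP ratings, not a measurement of system power draw, since memory, drives, NICs, and fans are not included.

```python
# Values stated in the article.
NODES_PER_CHASSIS = 4
SOCKETS_PER_NODE = 2
MAX_CORES_PER_CPU = 36
MAX_TDP_WATTS = 205


def chassis_totals(
    nodes: int = NODES_PER_CHASSIS,
    sockets_per_node: int = SOCKETS_PER_NODE,
    cores_per_cpu: int = MAX_CORES_PER_CPU,
    tdp_watts: int = MAX_TDP_WATTS,
) -> dict:
    """Aggregate socket count, core count, and summed CPU TDP for one chassis."""
    sockets = nodes * sockets_per_node
    return {
        "sockets": sockets,
        "cores": sockets * cores_per_cpu,
        # Sum of CPU TDP ratings only; not total system power.
        "cpu_tdp_watts": sockets * tdp_watts,
    }


totals = chassis_totals()
print(totals)
# {'sockets': 8, 'cores': 288, 'cpu_tdp_watts': 1640}
```

With the top-bin parts, the CPUs alone account for roughly 1.6kW of rated TDP in a chassis fed by 2.6kW power supplies.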

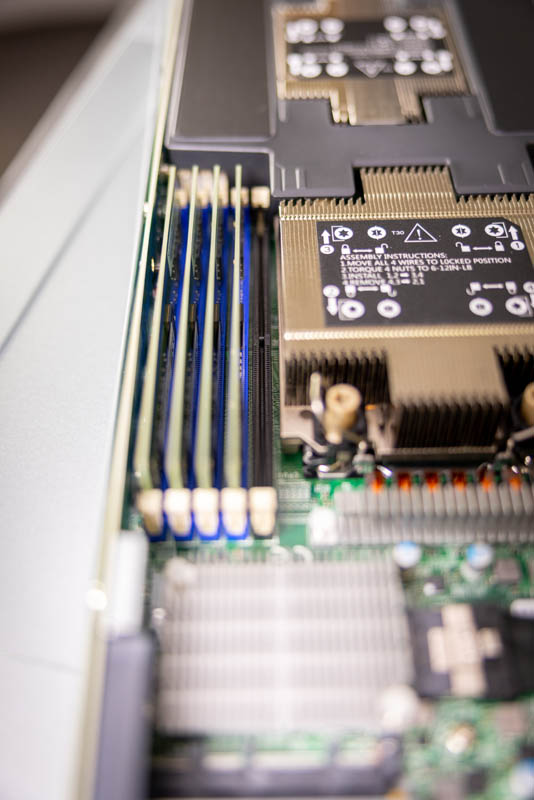

Each node also has a total of 16 DIMM slots, which is just enough to populate all 8 memory channels on each of the two Ice Lake CPUs with one DIMM apiece. Sharp-eyed viewers will also note the presence of 4x black DIMM slots, two for each CPU. These are dedicated slots for Intel Optane PMem 200 modules that pair with the 3rd Gen Xeon Scalable platform. That gives one the ability to add more than just DRAM to the system and get additional memory capacity as well.
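To make the slot math concrete, here is a small sketch that reads the paragraph above as 16 DRAM slots plus 4 separate PMem slots per node. The 64GB module size is purely a hypothetical value chosen for illustration; the article does not say which DIMM capacities were installed or are supported.

```python
# Values stated in the article (per node).
DRAM_SLOTS_PER_NODE = 16
PMEM_SLOTS_PER_NODE = 4
SOCKETS_PER_NODE = 2
CHANNELS_PER_CPU = 8

# Hypothetical module size for illustration only; not from the article.
HYPOTHETICAL_MODULE_GB = 64


def dimms_per_channel(
    dram_slots: int = DRAM_SLOTS_PER_NODE,
    sockets: int = SOCKETS_PER_NODE,
    channels_per_cpu: int = CHANNELS_PER_CPU,
) -> float:
    """DRAM slots available per memory channel."""
    return dram_slots / (sockets * channels_per_cpu)


def pmem_slots_per_cpu(
    pmem_slots: int = PMEM_SLOTS_PER_NODE, sockets: int = SOCKETS_PER_NODE
) -> int:
    """Dedicated PMem slots attached to each CPU."""
    return pmem_slots // sockets


def node_dram_capacity_gb(
    module_gb: int, populated_slots: int = DRAM_SLOTS_PER_NODE
) -> int:
    """DRAM capacity of one node for a given module size and slot population."""
    return module_gb * populated_slots


print(dimms_per_channel())   # 1.0, i.e. one DIMM per channel
print(pmem_slots_per_cpu())  # 2
print(node_dram_capacity_gb(HYPOTHETICAL_MODULE_GB))  # 1024
```

One DIMM per channel means a fully populated node uses every channel without doubling up on any of them.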

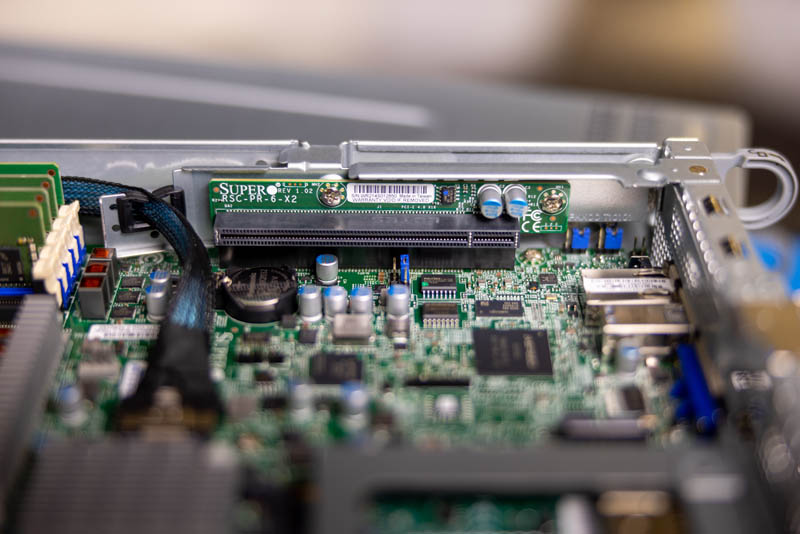

Each node features a pair of PCIe 4.0 x16 low-profile slots, which is a step up from previous generations.

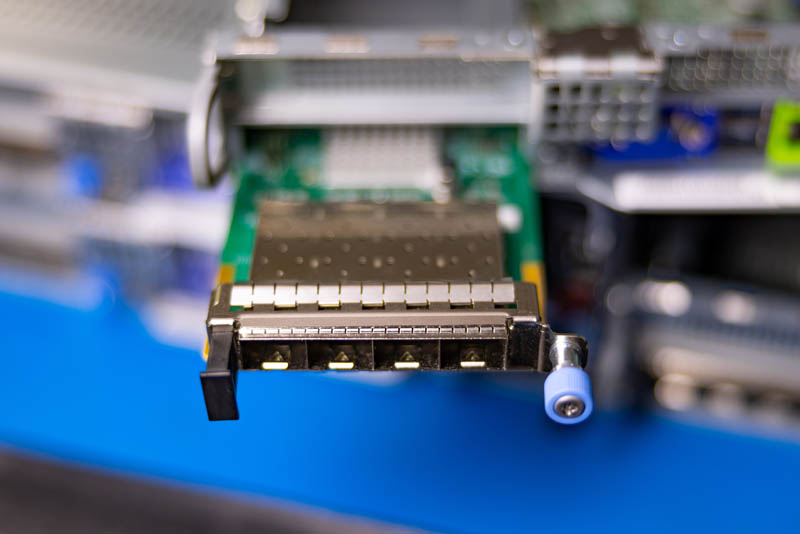

Located just below the left PCIe slot is the AIOM / OCP 3.0 slot, which is a dedicated slot for installing system networking. Other than the dedicated NIC for the BMC/management traffic, Supermicro has not equipped the BigTwin with any fixed onboard network ports; instead, buyers can customize their ‘onboard’ networking via the AIOM slot. If one does not want to use AIOM, one can also use the PCIe slots for networking.

A bit harder to see are the side-mounted M.2 slots, of which there are two on each node. These would mostly be used for boot media such as a hypervisor, leaving all of the front slots available for storage.

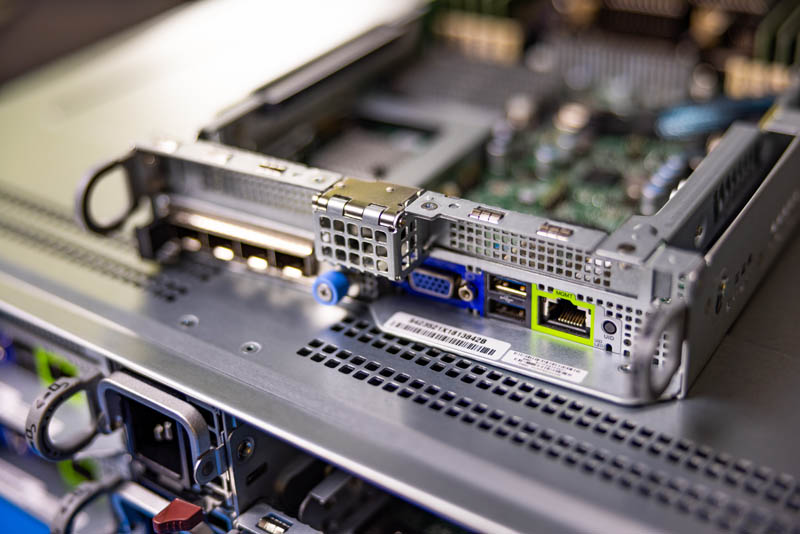

Rounding out the rear of the nodes are a VGA port, two USB ports, and that BMC management NIC previously mentioned. That BMC is an ASPEED AST2600 solution and is something we have seen on other Supermicro Ice Lake servers. Each node’s BMC functions independently, though they do communicate with each other enough to be aware of the overall status of the chassis. I recommend taking a look at our previous BigTwin review for a better explanation of the multi-node BMC support.

Each of the nodes is connected to the larger chassis via a single high-density connector on the leading edge of each sled. This connector is responsible for carrying all of the front drive bay connectivity, as well as power for the system. This is a much cleaner solution than some of the lower-end 2U4N systems on the market.

Beyond what one gets with the new Ice Lake Xeons and features like the built-in M.2 storage, there are a few other nice touches in this system. An example is that the BigTwin uses a hard plastic airflow guide. Some of the older 2U4N systems and even some of the lower-end systems use relatively flimsy airflow guides that can be troublesome. The lower-end guides sometimes do not seat properly and therefore interfere with sliding a node in and out of a chassis. This seems like a small feature until you experience the alternative first-hand.

Overall, there is a lot here. This is a segment that we have now seen on STH for three generations and there is certainly marked improvement with each generation. These 2U4N systems are popular since they make servicing much easier than individual nodes while also increasing density.

Final Words

Supermicro’s BigTwin server series has always been about cramming a ton of CPU compute into as small a package as possible, without delving into the complexity of a full ‘blade’ server design. The SYS-220BT-HNTR is an iterative update on that now-proven 2U 4-node design formula, bringing support for faster memory, higher core count CPUs, and a big expansion in connectivity with PCIe 4.0 support and more PCIe lanes. For such a dense computing solution, the value of upgrading to PCIe 4.0 and enabling much faster networking and storage is huge.

Once again, a big thank you to Supermicro for letting Patrick poke about their demo room. We have got at least one more of these little previews coming, so keep an eye out for that in the near future!

Looking forward to a comparison between this Ice Lake BigTwin and the Epyc 7003 compatible 2124BT-HTR because that’ll be an epic (heh) comparison which would mostly be apples to apples. Both have 16 DIMMs (8ch per CPU), both have ~200-205w maximum TDP and are otherwise extraordinarily similar in execution with some more modern features and exception for pmem and for some reason the AMD one does not have front panel PCIe 4.0 NVMe/SATA 2.5″ bays.

Will you be able to get both for an apples to apples?