Supermicro ARS-210ME-FNR Internal Hardware Overview

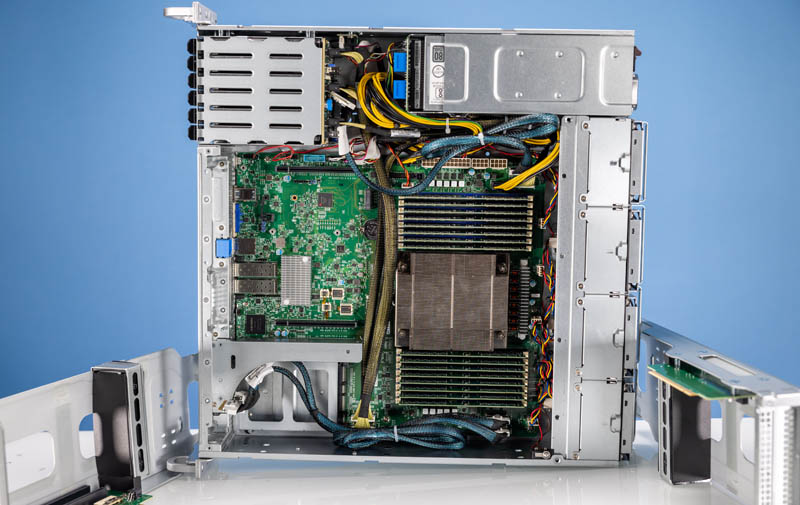

Here is a view inside the system to help our readers stay oriented. We removed the risers so you can see inside more easily.

The risers are designed for larger accelerators like GPUs or FPGAs. They mount in large cages that are easy to access once the lid is removed.

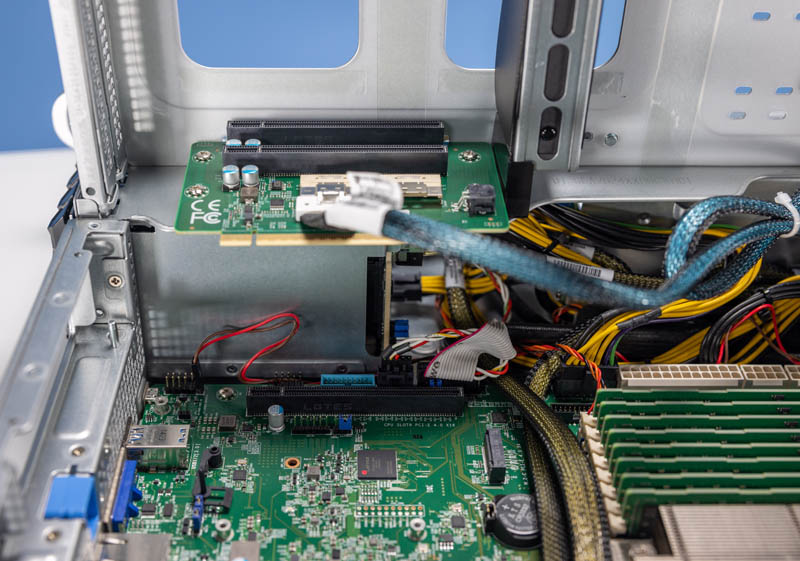

The risers in our test system each had two PCIe Gen4 x16 slots on them.

A trend we are seeing with servers is that risers are often either cabled or a combination of a slot plus cabling. These larger full-height risers are combination risers. Underneath, we have a low-profile slot and the OCP NIC 3.0 slot.

Here is the other riser. This one has an x16 connector but only an x8 cabled connector for the second slot.
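To put the x16 versus x8 difference in perspective, here is a rough per-direction bandwidth estimate for PCIe Gen4 links. It is a sketch that assumes only the PCIe 4.0 signaling rate (16 GT/s per lane) and 128b/130b line encoding; the function name `pcie_gen4_gbytes_per_s` is hypothetical, and protocol overhead is ignored, so real-world throughput will be somewhat lower.

```python
# Rough per-direction PCIe Gen4 bandwidth estimate.
# Assumptions: 16 GT/s per lane and 128b/130b line encoding (PCIe 4.0);
# TLP headers, flow control, and other protocol overhead are ignored.

GEN4_GT_PER_S = 16.0              # gigatransfers per second, per lane
ENCODING_EFFICIENCY = 128 / 130   # 128b/130b encoding efficiency


def pcie_gen4_gbytes_per_s(lanes: int) -> float:
    """Approximate usable GB/s in one direction for a PCIe Gen4 link (hypothetical helper)."""
    gbits = GEN4_GT_PER_S * ENCODING_EFFICIENCY * lanes
    return gbits / 8  # convert bits to bytes


if __name__ == "__main__":
    for label, lanes in [("x16 slot", 16), ("x8 cabled slot", 8)]:
        print(f"{label}: ~{pcie_gen4_gbytes_per_s(lanes):.1f} GB/s per direction")
```

Under these assumptions, the x16 slot works out to roughly 31.5 GB/s per direction and the x8 cabled slot to about half that, which matters mainly for bandwidth-hungry accelerators.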

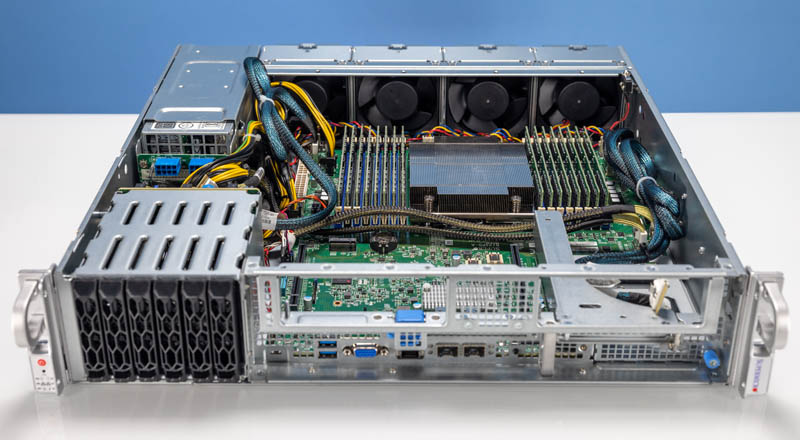

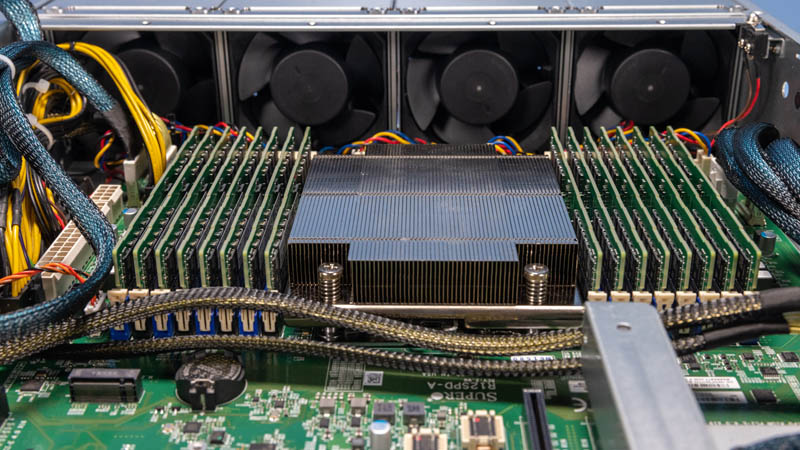

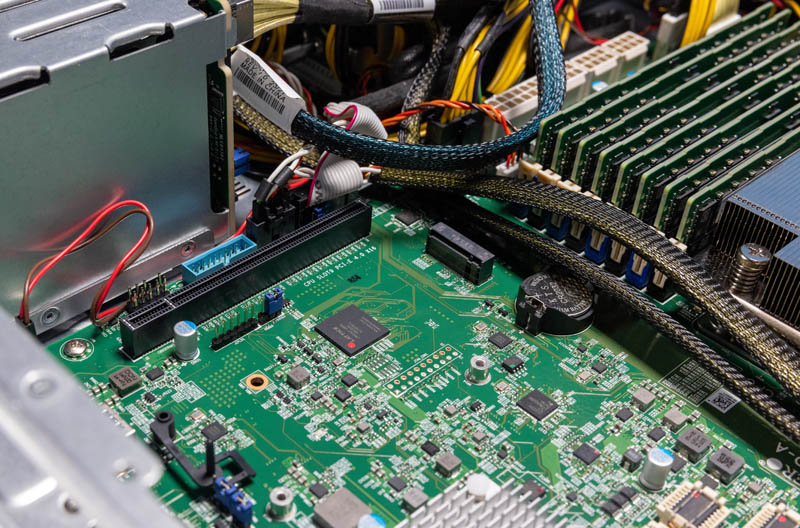

Without the risers in the way, we can see the main attraction here, the processor and memory.

This is a single-socket Ampere Altra Max system, so we get 128 Arm Neoverse N1 cores at 3.0GHz. The platform has eight memory channels and supports two DIMMs per channel (2DPC); our system had a total of 512GB of memory installed.
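The memory configuration lends itself to some quick arithmetic. The sketch below assumes, as an illustration only, that all 16 DIMM slots (8 channels × 2DPC) are populated with identical DIMMs; the text above gives only the 512GB total, so the per-DIMM size is an inference, not a confirmed configuration.

```python
# Back-of-the-envelope memory layout for a single-socket, 8-channel, 2DPC system.
# Assumption: every DIMM slot is populated with identical DIMMs; only the
# 512GB total is stated, not the actual DIMM count or size.

CHANNELS = 8
DIMMS_PER_CHANNEL = 2
TOTAL_GB = 512
CORES = 128

dimm_slots = CHANNELS * DIMMS_PER_CHANNEL   # total DIMM slots on the board
gb_per_dimm = TOTAL_GB / dimm_slots         # DIMM size if every slot is filled
gb_per_core = TOTAL_GB / CORES              # memory available per core
cores_per_channel = CORES / CHANNELS        # cores sharing each memory channel

print(f"{dimm_slots} DIMM slots, {gb_per_dimm:.0f}GB per DIMM")
print(f"{gb_per_core:.0f}GB per core, {cores_per_channel:.0f} cores per channel")
```

If the assumption holds, that is 16 × 32GB DIMMs, or 4GB of memory per core, with 16 cores sharing each memory channel.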

Behind the CPU, the motherboard extends all the way to the rear of the chassis. That is something we are seeing less of in standard-depth servers as they become more modular.

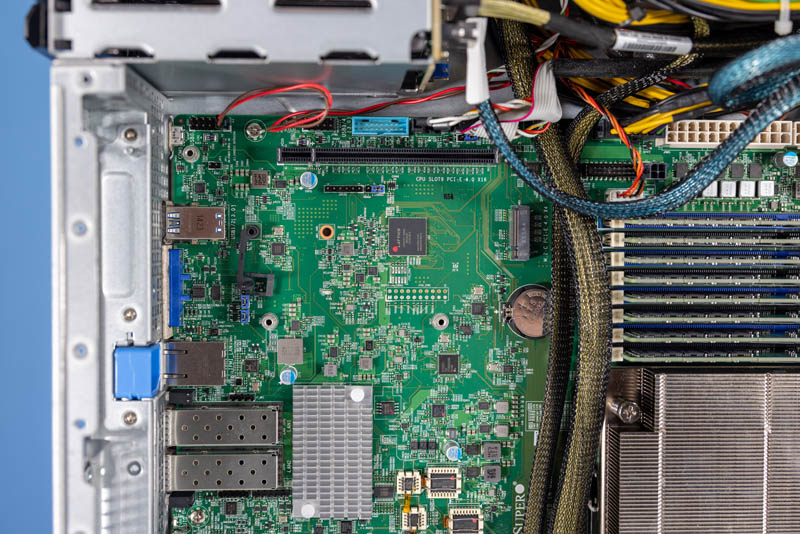

Next to the riser slot, there is an M.2 slot for a boot SSD.

On the other side, there is the ConnectX-4 Lx dual-port 25GbE NIC, as well as the ASPEED AST2600 BMC. More on the management later in this piece.

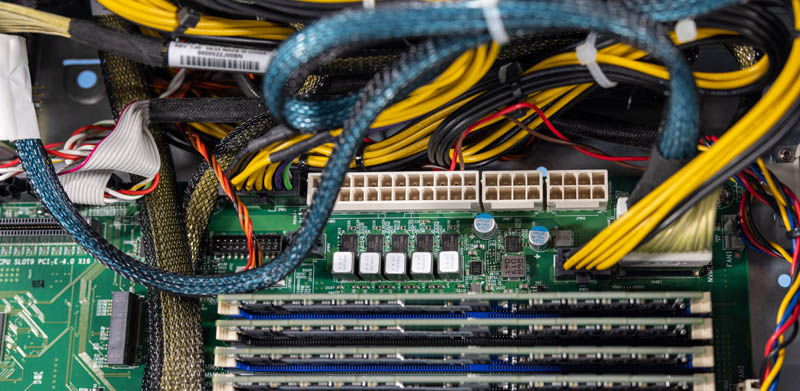

At the top of the motherboard, we see a web of cables. Ours was a pre-production unit, and we had the risers out for photos, but it still felt like there was a lot of cabling. Perhaps the most intriguing detail is that the redundant power supplies do not feed the motherboard through a standard ATX power connector.

Supermicro designed the Mt. Hamilton platform for a number of applications, so the board appears to have a flexible power input setup.

Next, let us get the system assembled and powered on.

Very cool server. It’s nice to see something smaller for a change. Only one question… How long until we see an Altra or Altra Max based high-end workstation? I think THAT would be really neat, what with all the ground Ampere has gained so far.

Hi Stephen

We would like to introduce you to the following 2U 4-GPU Arm server, another system in the Mt. Hamilton program. It supports four double-width GPUs with accessible I/O in a 2U form factor.

https://www.supermicro.com/en/products/system/megadc/2u/ars-210m-nr

Regards,

Roger

It’s great to see an Ampere server reviewed like an x86 server at STH. This makes it seem like they’re real, not the $hitb0xes of Cavium.

Stephen Beets, haven’t you seen Avantek’s ARM desktop offering yet? If not, then https://store.avantek.co.uk/arm-desktops.html

@KarelG

Thanks so much for your reply. I was not aware of either Avantek or their products until you posted (I’m sure Dr. Cutress, aka TechTechPotato, covered their products at some point, I just either missed that or I simply don’t remember it). So cool to see that Ampere’s Altra and Altra Max processors are actually usable for things other than cloud and edge servers. Also, I like the case design of the Avantek workstations. Very neat. Thanks for sharing, man. Hopefully one day some years from now, I’ll find an Altra or Altra Max on eBay to buy and add to my CPU collection. :-)

@Roger Chen

Thank you for your reply and link. I wasn’t necessarily thinking of buying one (I’m not a telco nor do I have anywhere near enough money to afford one), but I did visit your link just to see what was there. Very nice and I’m wishing you and the Supermicro team all the best, saleswise. :-)

That’s a clickbait image. The CPU doesn’t really run outside of the server, it is on the inside. Stop making things up.

Can’t say there’s any surprise there. ARM will never be suitable for heavier workloads without making itself identical to x86 in terms of complexity, power consumption, and price. It works when used for its intended purpose – low-power devices that don’t need heavy CPU resources.