With the move to the cloud, many people who use cloud services forget that the instances they use run on servers. Today, we put the bare metal back into cloud offerings as we take a look at the PhoenixNAP Bare Metal Cloud. Instead of just logging onto a bare metal instance, we went a step further and physically installed a 4th Gen Intel Xeon Scalable “Sapphire Rapids” server that we would then use in the cloud environment. We then left the data center and accessed it remotely to see how it would compare to a real bare metal server that we have in the STH lab.

As a quick note, PhoenixNAP is sponsoring this video, and I think Intel may be helping out too. We had to fly to Phoenix, and we have been racking up some cloud bills putting this together. Since we went out to the data center for this, we also have a video for this one:

As always, we suggest opening the video in a new tab, browser, or app for a better viewing experience.

Putting the Bare Metal Server in the Phoenix Bare Metal Cloud

As a bit of background, you may remember that about two years ago we took our readers on a Tour of the PhoenixNAP Data Center.

When we visited this year, we saw many of the same cages with financial, healthcare, and governmental clients. The second floor, which was largely empty in 2021, has been expanded and is now mostly full. A building adjacent to the current facility was being opened and is planned for zero water use, a key metric in the Arizona desert.

New for this trip was the activity. PhoenixNAP was a launch cloud provider with the new 4th Gen Intel Xeon Scalable CPUs, and so we wanted to show off the new servers. We went through the facility and had the chance to go into PhoenixNAP’s cages. Those cages included older infrastructure, as well as the new Bare Metal Cloud.

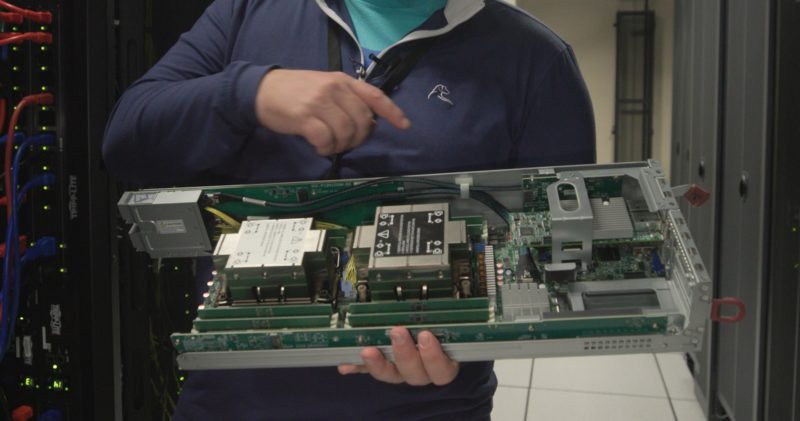

When we were inside, we had the chance to take the node that we would be using and put it into production.

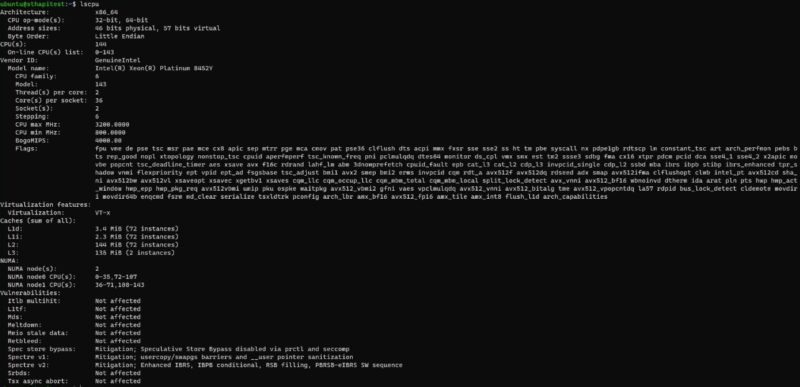

We put a Supermicro-based Intel Xeon Platinum 8452Y server into the infrastructure. The standard d3.m6.xlarge instance has two Xeon Platinum 8452Y processors (36 cores / 72 threads each), 512GB of memory, bonded dual 25GbE networking, and 2x 4TB NVMe SSDs.

Something I wanted to ensure was that the unit we put in was actually the unit we got to test. For that, we made a configuration change to make the exact server we were targeting a “unicorn” of sorts: we used a 256GB configuration instead of the full 512GB. That way, we knew that the server we installed was the server I would be using.

Here you can see our special node has only 256GB of memory.
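For readers who want to run a similar sanity check from inside an instance, here is a minimal Python sketch, assuming a Linux OS that exposes `/proc/meminfo`. The function names and the 10% tolerance are illustrative assumptions, not anything from PhoenixNAP's tooling. The OS normally reports a little less than the installed capacity because some memory is reserved by firmware and the kernel, so the check allows a margin below the nominal size.

```python
import re

KIB_PER_GIB = 1024 * 1024


def parse_mem_total_kib(meminfo_text: str) -> int:
    """Return the MemTotal value (in KiB) from /proc/meminfo-style text."""
    match = re.search(r"^MemTotal:\s+(\d+)\s+kB", meminfo_text, re.MULTILINE)
    if match is None:
        raise ValueError("MemTotal line not found")
    return int(match.group(1))


def matches_installed_gib(total_kib: int, installed_gib: int,
                          tolerance: float = 0.10) -> bool:
    """True if the reported memory is at most `tolerance` below the
    nominal installed size (and not above it)."""
    installed_kib = installed_gib * KIB_PER_GIB
    return installed_kib * (1 - tolerance) <= total_kib <= installed_kib


def local_mem_total_kib(path: str = "/proc/meminfo") -> int:
    """Read MemTotal from the running Linux system."""
    with open(path) as f:
        return parse_mem_total_kib(f.read())
```

On the instance, `matches_installed_gib(local_mem_total_kib(), 256)` should be true for a 256GB node like ours and false for a standard 512GB node.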

Now that we knew that our special node was installed, I wanted to share some of my experiences.

Thanks. WOW! Lots of complexity & multiple back-up infrastructure at the facility.

I’m sure that leads to Highest Reliability (Uptime Availability, Mean Time Between Failure, etc. etc.).

Could also have validated the node was in fact the one you installed by using dmidecode to check the chassis’ unique identifiers.
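A rough sketch of that suggestion in Python, assuming a Linux instance with `dmidecode` installed and root access. The helper names are hypothetical, and the expected values would be whatever serial numbers were noted from the physical chassis before racking it.

```python
import subprocess
from typing import Callable, Dict, Iterable, Optional, Tuple

# String keywords accepted by `dmidecode -s`.
DMI_KEYWORDS = ("system-serial-number", "chassis-serial-number", "system-uuid")


def run_dmidecode(keyword: str) -> str:
    """Run `dmidecode -s <keyword>` (normally needs root) and return its output."""
    result = subprocess.run(
        ["dmidecode", "-s", keyword],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()


def collect_identifiers(
    keywords: Iterable[str] = DMI_KEYWORDS,
    runner: Callable[[str], str] = run_dmidecode,
) -> Dict[str, str]:
    """Gather the DMI identifiers of the machine we are logged into."""
    return {keyword: runner(keyword) for keyword in keywords}


def mismatches(
    expected: Dict[str, str], actual: Dict[str, str]
) -> Dict[str, Tuple[str, Optional[str]]]:
    """Return {keyword: (expected, actual)} for every identifier that differs."""
    return {
        key: (value, actual.get(key))
        for key, value in expected.items()
        if actual.get(key) != value
    }
```

An empty result from `mismatches(noted_in_data_center, collect_identifiers())` would indicate the remote instance is the same physical box.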

This is an explosive idea; this is most probably the best and most successful thing about putting the bare metal server in the PhoenixNAP bare metal cloud. I love this blog and am really happy to come across this exceptionally well-written content. Thanks for sharing!!

I’ve had a great experience myself with allocation at PHX Nap as well. Definitely would recommend it if you have the knowhow to build and deploy.

This is a brilliant plan, and it’s likely the single most important benefit of hosting a bare-metal server in the Phoenix NAP bare-metal cloud. I’m really glad I found this site since the articles are so wonderfully written. Your contribution is much appreciated.

Kind of off-putting that you have to register and add a CC (with a $1 temp charge) to even see the Bare Metal Cloud interface / features. I did register, and at the end received a message saying I should receive login credentials within 5 minutes and, if not, to contact support. (To be clear, this is just to log into the interface; all of this is pre-purchase.)

10 minutes pass, no email creds (or activation of the account really, as you provide the creds), so I contact support, who says it may take up to 24 hours for your account to be created.

So the 5-minute message is false (misleading?). In chatting with sales, I asked whether RAID-1 was an option for the OS (in my case Ubuntu) when choosing a bare metal cloud server with 2x drives. They said *NO*, although it *might* be possible with their new “other OS” option.

I’m a bit surprised that users would run an OS on a single drive. I understand that for some hypervisors and specific OSes that may be fine, but a single point of failure for your OS?? So hopefully once I receive my login creds/activation (so that I can make a purchase), I will be able to provision Ubuntu in a RAID-1 configuration. If not, this is not a solution I’ll be able to make use of.

Great review though, Patrick (and video!). Your review and video contain MORE information and specifics on PN’s bare metal cloud offering than I was able to find anywhere else on the Internet, including on Phoenixnap.com’s own site.