Bare Metal Cloud Performance

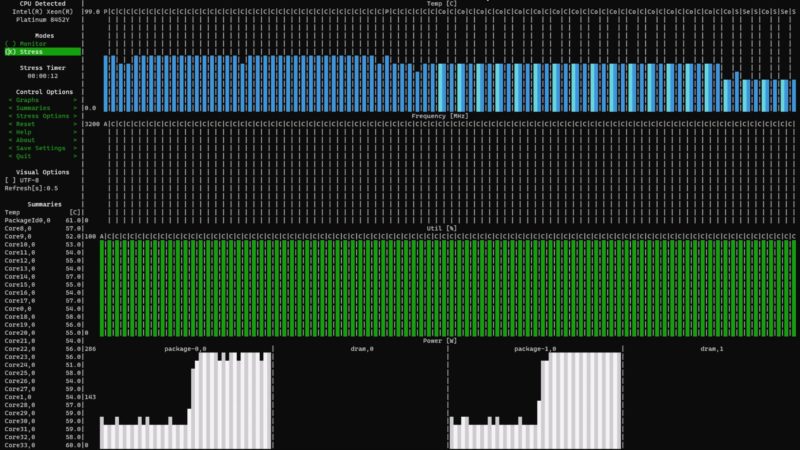

One of the big draws for the Bare Metal Cloud is performance. Here we can get 72 physical cores of the newest Sapphire Rapids generation Xeons.

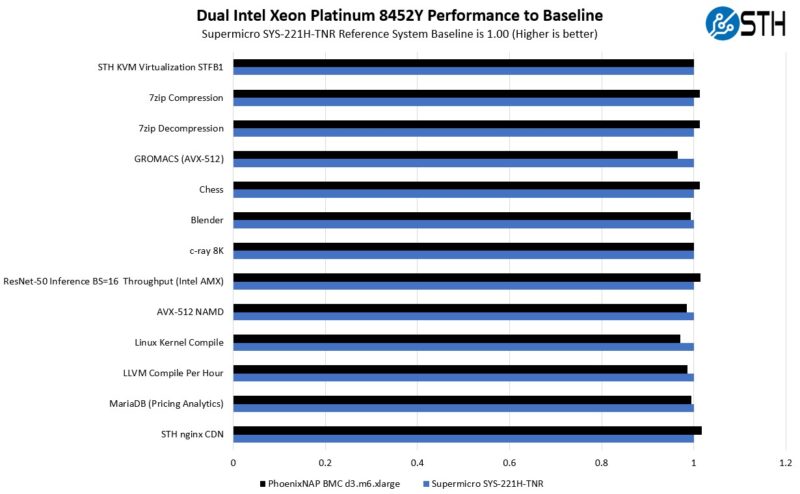

The big question we had, and many of our readers likely have, is how does it perform? Since we recently published our Supermicro SYS-221H-TNR 2U 4th Gen Intel Xeon Scalable Server Review, we had a good Supermicro 2U to compare against a PhoenixNAP Supermicro cloud server with the same processors. It took a little while to get the new CPUs, but this felt like a better comparison. Here are the results.

Generally, these results are very close to the standard 2U. On average, the cloud server is less than 1% off the standard 2U. That is a good result.
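For readers who want to run the same kind of comparison on their own hardware, here is a minimal sketch of how an average percent difference between two servers could be computed. The workload names and scores are placeholders, not our measured results, and the 1.5% default tolerance is simply the server-to-server variation figure mentioned later in this piece. The sketch also assumes higher scores are better.

```python
from statistics import mean


def percent_delta(baseline: float, candidate: float) -> float:
    """Signed percent difference of candidate relative to baseline."""
    return (candidate - baseline) / baseline * 100.0


def summarize(results: dict, tolerance_pct: float = 1.5):
    """Compare per-workload (baseline, candidate) score pairs.

    Returns the per-workload deltas, the mean absolute delta, and the
    names of workloads whose delta exceeds the tolerance.
    """
    deltas = {name: percent_delta(b, c) for name, (b, c) in results.items()}
    avg_abs = mean(abs(d) for d in deltas.values())
    outliers = [name for name, d in deltas.items() if abs(d) > tolerance_pct]
    return deltas, avg_abs, outliers


# Placeholder scores for illustration only: (on-prem 2U, cloud instance).
example_scores = {
    "workload_a": (100.0, 99.5),
    "workload_b": (200.0, 201.0),
    "workload_c": (50.0, 49.0),
}

if __name__ == "__main__":
    deltas, avg_abs, outliers = summarize(example_scores)
    for name, delta in deltas.items():
        print(f"{name}: {delta:+.2f}%")
    print(f"mean absolute delta: {avg_abs:.2f}%")
    print(f"outside tolerance: {outliers}")
```

Using the mean of absolute deltas keeps wins and losses from cancelling each other out, which matters when the claim is "close to" rather than "faster than."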

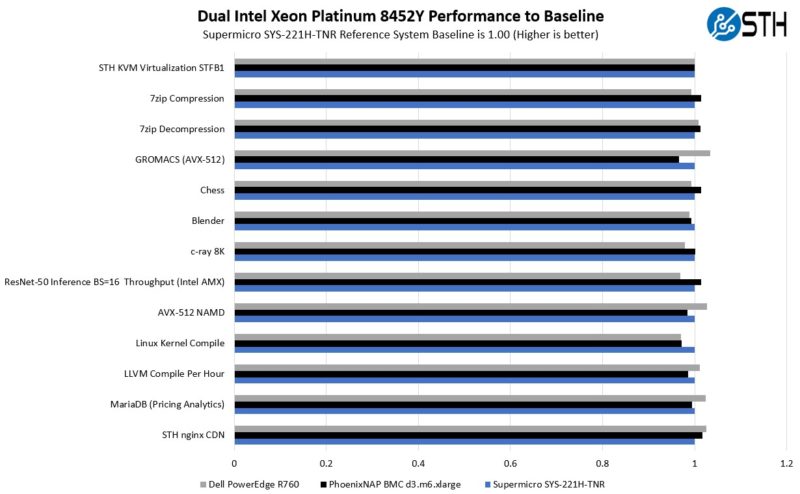

We also wanted to test the performance of servers outside of the Supermicro ecosystem. We used the same pair of Xeon Platinum 8452Y’s in a Dell PowerEdge R760:

These are three different servers, and normally we would expect about a 1.5% workload variation. We went beyond that a few times, but we are close enough to say this is essentially the same as bare metal server performance. Something we also tested was AVX-512 and the new Intel AMX for AI so we could see how some of the heavier acceleration workloads fared. They were generally good as well. This is a very solid result.
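Before running AVX-512 or AMX workloads on a rented instance, it can be worth confirming that the OS actually exposes those features. Below is a small sketch, assuming a Linux guest, that parses the `flags` line of `/proc/cpuinfo`. The flag names (`avx512f`, `amx_tile`, `amx_bf16`, `amx_int8`) are the ones the Linux kernel reports; the helper names are our own, and this is not the method used for the testing above.

```python
WANTED_FLAGS = ("avx512f", "amx_tile", "amx_bf16", "amx_int8")


def cpu_feature_flags(cpuinfo_text: str) -> set:
    """Return the CPU flags from the first 'flags' line of cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def feature_report(cpuinfo_text: str) -> dict:
    """Map each wanted flag name to True/False depending on presence."""
    flags = cpu_feature_flags(cpuinfo_text)
    return {name: name in flags for name in WANTED_FLAGS}


def read_local_cpuinfo(path: str = "/proc/cpuinfo") -> str:
    """Read cpuinfo from the local machine (Linux only)."""
    with open(path, "r", encoding="utf-8") as fh:
        return fh.read()
```

On a Linux host, `feature_report(read_local_cpuinfo())` returns a dictionary such as `{"avx512f": True, ...}`. Note that a missing AMX flag can also mean the kernel is too old to report it, not only that the CPU lacks it.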

PhoenixNAP Bare Metal Networking and Multi-Cloud

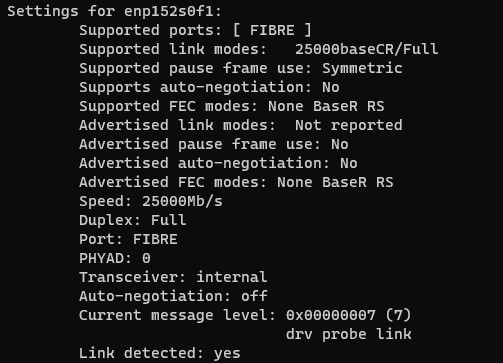

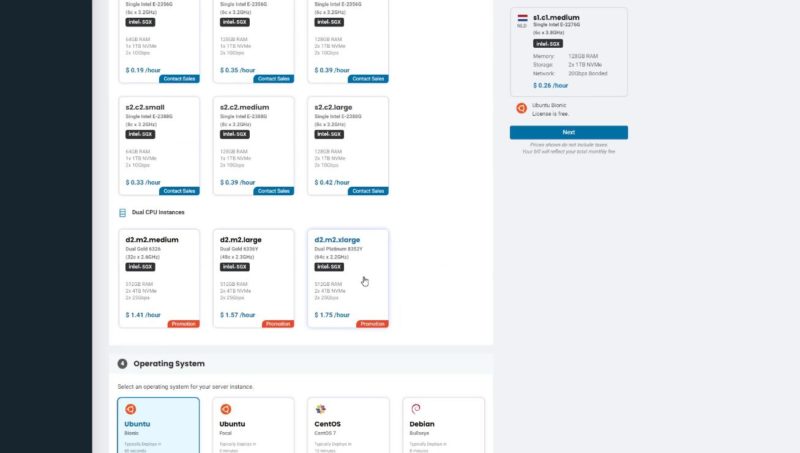

Talking networking for a few minutes, each high-end instance gets dual bonded 25GbE NICs. There is a lot of bandwidth available for these servers. There are lower-end 10GbE options for single-socket servers that we have seen previously, but the d3.m6.xlarge is dual 25GbE.

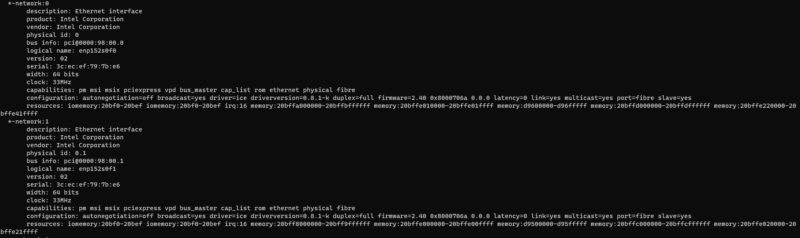

Networking was provided by Intel E810-XXV NICs.
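If you want to check the bond from inside an instance, the Linux bonding driver publishes its state under `/proc/net/bonding/`. Here is a minimal sketch that parses that text and reports the bonding mode plus each member link's speed and status. The bond name `bond0`, the interface names, and the sample text in the usage note are assumptions for illustration; the actual names and bonding mode on a given instance may differ.

```python
import re


def parse_bond_status(text: str) -> dict:
    """Parse the text of /proc/net/bonding/<bond> into a small summary."""
    mode = None
    members = []
    current = None  # the member interface section currently being read
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("Bonding Mode:"):
            mode = line.split(":", 1)[1].strip()
        elif line.startswith("Slave Interface:"):
            current = {
                "name": line.split(":", 1)[1].strip(),
                "speed_mbps": None,
                "up": None,
            }
            members.append(current)
        elif current is not None and line.startswith("Speed:"):
            match = re.match(r"Speed:\s*(\d+)\s*Mbps", line)
            current["speed_mbps"] = int(match.group(1)) if match else None
        elif current is not None and line.startswith("MII Status:"):
            current["up"] = line.split(":", 1)[1].strip() == "up"
    return {"mode": mode, "members": members}


def read_bond_status(bond: str = "bond0") -> dict:
    """Read and parse the local bond state (Linux, bonding driver loaded)."""
    with open(f"/proc/net/bonding/{bond}", "r", encoding="utf-8") as fh:
        return parse_bond_status(fh.read())
```

For a dual 25GbE bond, you would expect two members that are both up and both reporting 25000 Mbps. A member showing a lower speed usually points to a negotiation or cabling problem, not a bonding problem.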

That is just the instance networking. Since this is a cloud service, we wanted to see what switching PhoenixNAP was using. Here we have the Arista switches. We were asked to only take this shot at an angle.

Beyond that, PhoenixNAP has its own NOC and meet-me rooms. There are a ton of providers displayed that have POPs at PhoenixNAP.

Some of the larger providers are organizations like AWS, Google Cloud, and a third major cloud provider whose cages I saw, but that I am not sure we can talk about yet. We will just say it is another big one. These providers have racks at PhoenixNAP and have high-speed dedicated connections to their clouds. If, for example, you wanted to keep data in a lower-cost colocation or bare metal cloud, then utilize some resources from both AWS and Google Cloud, you would need a facility like PhoenixNAP to do so at high speeds.

If you have your own racks, or if you need to be in the same facility as some of the other customers, and then use Bare Metal Cloud for burst or specific workloads, there are also cross-connect rooms (CCRs). Here I am in one aisle of one of them; just this one CCR had several rows of racks.

To me, that is one of the more interesting value propositions: it is like a public-cloud-neutral bare metal cloud.

Final Words

This was a fun opportunity to go look at the PhoenixNAP Bare Metal Cloud and really experience how it is bare metal. I was able to install a Supermicro 4th Gen Intel Xeon Scalable server into the infrastructure, and then access it remotely via APIs, the web GUI, an HTML5 iKVM, and SSH. While many may see this as a simple cloud instance via a GUI, behind it are orchestration APIs for Kubernetes and more.
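One way to confirm over SSH that a provisioned node is a specific physical server is to compare its DMI identifiers against the serial number on the chassis. This is a sketch only, assuming a Linux install that exposes `/sys/class/dmi/id/` (the serial fields typically require root to read). The function names and the expected-serial workflow are illustrative and not something we ran for this piece.

```python
from pathlib import Path

# sysfs files that carry vendor, model, and serial identifiers on Linux.
DMI_FIELDS = (
    "sys_vendor",
    "product_name",
    "product_serial",
    "chassis_serial",
    "board_serial",
)


def read_dmi_ids(base: str = "/sys/class/dmi/id") -> dict:
    """Read DMI identifier files; unreadable or missing fields map to None."""
    ids = {}
    for field in DMI_FIELDS:
        try:
            ids[field] = (Path(base) / field).read_text(encoding="utf-8").strip()
        except OSError:
            ids[field] = None
    return ids


def matches_expected(ids: dict, expected_serial: str) -> bool:
    """True if the expected serial matches any of the serial fields."""
    serials = {
        ids.get("product_serial"),
        ids.get("chassis_serial"),
        ids.get("board_serial"),
    }
    return expected_serial in serials
```

Running `matches_expected(read_dmi_ids(), "<serial from the chassis label>")` as root on the node gives a quick yes or no. The `dmidecode` utility reports the same SMBIOS data in more detail.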

While many experience servers these days as API calls or instances, it was great to see the hardware hands-on, including what runs some of the lower-cost single-socket instance types.

One of the cool features is simply that if you are thinking about using 4th Gen Intel Xeon Scalable “Sapphire Rapids” servers and just want to try them, possibly for onboard features like AMX or SGX, you can spin one up, try it for an hour or a month, and make a decision. Last year, we also covered Test Driving the PhoenixNAP Bare Metal Cloud for Big Savings and even how to Build a 5-node Proxmox VE Cluster in 20 Minutes for a Dollar.

I just wanted to say thank you to Ian, Frank, and the PhoenixNAP team for showing us around again. It was great seeing just how much in the data center has changed. We are also looking forward to the new >500K sq ft facility being built next to the current data center.

Thanks. WOW! Lots of complexity & multiple back-up infrastructure at the facility.

I’m sure that leads to Highest Reliability (Uptime Availability, Mean Time Between Failure, etc. etc.).

Could also have validated the node was in fact the one you installed using dmidecode to check the chassis’ unique identifiers.

This is an explosive idea; this is most probably the best and most successful thing about putting the bare metal server in the Phoenix nap bare metal cloud. I love this blog and really happy to come across this exceptionally well-written content. Thanks for sharing!!

I’ve had a great experience myself with allocation at PHX Nap as well. Definitely would recommend it if you have the knowhow to build and deploy.

This is a brilliant plan, and it’s likely the single most important benefit of hosting a bare-metal server in the Phoenix NAP bare-metal cloud. I’m really glad I found this site since the articles are so wonderfully written. Your contribution is much appreciated.

Kind of off-putting that you have to register and add a CC (with a $1 temp charge) to even see the Bare Metal Cloud interface / features. I did register, and at the end received a message saying I should receive login credentials within 5 minutes, and if not, to contact support. (To be clear, this is just to log into the interface; all of this is pre-purchase.)

10 minutes pass with no email creds (or really, no activation of the account, as you provide the creds), so I contact support, who says it may take up to 24 hours for the account to be created.

So the 5-minute message is false (misleading?). In chatting with sales, I asked whether RAID 1 was an option for the OS (in my case Ubuntu) when choosing a bare metal cloud server with 2x drives. They said *NO*, although it *might* be possible with their new “other OS” option.

I’m a bit surprised that users would run an OS on a single drive. I understand that may be fine for some hypervisors and specific OSes, but a single point of failure for your OS?? Hopefully once I receive my login creds/activation (so that I can make a purchase), I will be able to provision Ubuntu in a RAID 1 configuration; if not, this is not a solution I’ll be able to make use of.

Great review though, Patrick (and video!). Your review and video contain MORE information and specifics on PN’s bare metal cloud offering than I was able to find anywhere else on the Internet, including at Phoenixnap.com’s own site.