The Supermicro SYS-221H-TNRR is the company’s 2U dual-socket server, updated for a new and very different generation of server processors. With features such as DDR5 and PCIe Gen5 support, the platform is a major generational update, which is why we are reviewing it today.

Many on STH have already seen the Supermicro SYS-221H-TNRR. This server made a cameo in our launch piece for the 4th Gen Intel Xeon Scalable processors, codenamed “Sapphire Rapids”. If you want to learn more about that launch, the accompanying video also shows the server.

With that platform background, let us get to the server.

Supermicro SYS-221H-TNRR External Hardware Overview

The Supermicro SYS-221H-TNRR is a 2U server that is part of Supermicro’s new “Hyper” line. This is one of Supermicro’s higher-end servers where we expect the greatest number of options.

Only the center eight front drive bays are populated in this system. There is room for 24.

Leaving the extra drive bays unpopulated is a change for Supermicro, which has traditionally populated the entire backplane even if the bays go unused. Still, the open mesh allows more airflow to the CPUs and the rest of the chassis, and one can see a relatively clear airflow path.

This system uses Supermicro’s toolless 2.5″ drive trays. These are much easier to install than the older screw models and save time servicing the system.


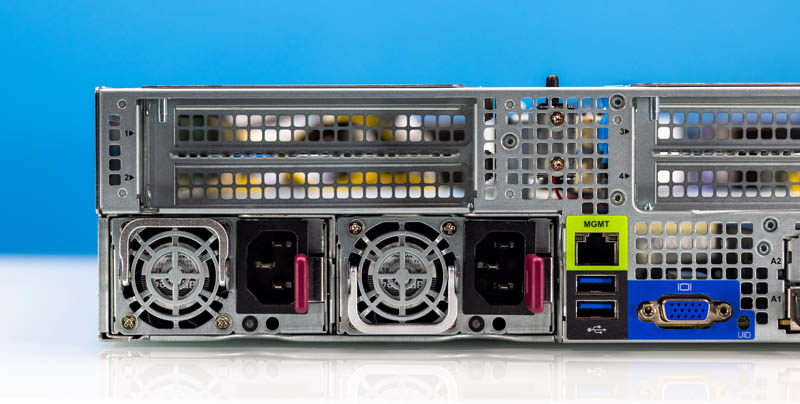

Moving to the rear of the system, we have a fairly standard layout for Supermicro that is similar to what we have seen for a few generations.

The left side has two power supplies. These are 80Plus Titanium units and our system has 1.2kW models installed. Above the power supplies is a riser.

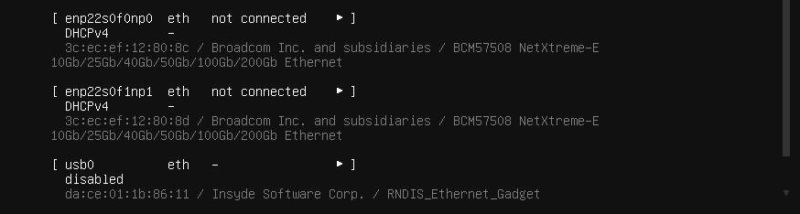

The center block is perhaps the most interesting. Here we have a riser that is identical to the left one. Below that we have a management NIC, two USB ports, and a VGA port for local access. This server does not have onboard networking. Instead, it has up to two AIOM/OCP NIC 3.0 slots available. Our unit had only one.

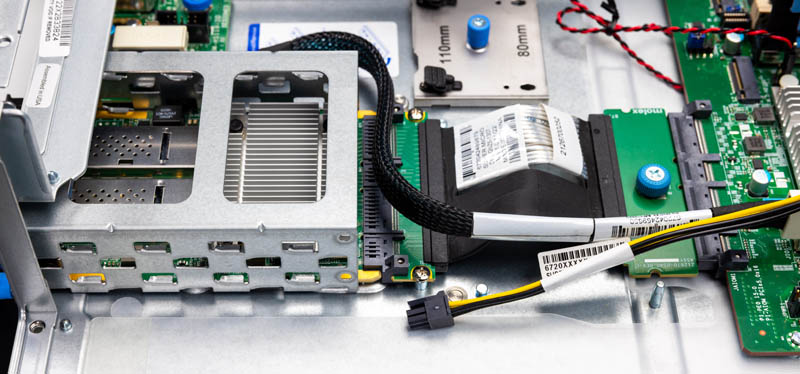

The way this works is fascinating. Supermicro has a mounting structure and then uses cabled connections to service the AIOM/OCP NIC 3.0 slots.

For those wondering why these cables are needed, with PCIe Gen5, signal integrity over distance is a challenge. Cabling certainly adds cost compared to older servers with direct PCB attachments, but it enables something more interesting. For example, the NIC installed here is not a normal 10/25GbE adapter. Instead, it is a dual-port 100GbE NIC with 200Gbps of combined bandwidth. That is still a PCIe Gen4 device, but over the next few quarters we expect to see single-port 400GbE or dual-port 200GbE NICs in these form factors.
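To put rough numbers on why faster NICs go hand in hand with PCIe Gen5, here is a minimal back-of-the-envelope sketch. It assumes an x16 link to the slot (the lane count is our assumption, not something stated above), uses the standard per-lane transfer rates and 128b/130b line encoding, and ignores packet and protocol overhead, so real-world throughput will be somewhat lower. The function names are purely illustrative.

```python
# Back-of-the-envelope PCIe vs. NIC bandwidth check.
# Assumptions (not from the review): x16 link width, no protocol overhead.

# Per-lane transfer rates in GT/s for each PCIe generation.
GT_PER_LANE = {"Gen3": 8.0, "Gen4": 16.0, "Gen5": 32.0}

# Gen3 and later use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def pcie_gbps(gen: str, lanes: int = 16) -> float:
    """Approximate one-direction link bandwidth in Gbps after line encoding."""
    return GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY


def nic_fits(ports: int, port_gbps: float, gen: str, lanes: int = 16) -> bool:
    """True if the NIC's combined port speed fits within the PCIe link."""
    return ports * port_gbps <= pcie_gbps(gen, lanes)


if __name__ == "__main__":
    nics = [
        ("dual-port 100GbE", 2, 100.0),
        ("single-port 400GbE", 1, 400.0),
        ("dual-port 200GbE", 2, 200.0),
    ]
    for gen in ("Gen4", "Gen5"):
        print(f"PCIe {gen} x16: about {pcie_gbps(gen):.1f} Gbps per direction")
        for name, ports, speed in nics:
            verdict = "fits" if nic_fits(ports, speed, gen) else "is link-limited"
            print(f"  {name} ({ports * speed:.0f} Gbps) {verdict}")
```

Under these assumptions, a dual-port 100GbE card sits comfortably within a Gen4 x16 link, while 400Gbps of combined port speed only fits once the link is Gen5 x16.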

Supermicro has many options; this is just the one our system was configured with.

The right side is dedicated to full-height PCIe expansion slots.

Next, let us get inside the system for our internal overview.

That looks beautiful. I’m just worried that Supermicro’s trying to be HPE instead of continuing to be the classic SMC

I’m curious if the chassis can fit consumer GPUs like the 4090 or 4080. RTX 6000 Ada/L40 pricing has forced a second look at consumer GPUs for ML workloads and figuring out which — if any — Supermicro systems can physically accommodate them has not been easy.

@ssnseawolf I’ve been building these up for Intel for the past year. They will accept the Arctic Sound/Flex GPU but that’s it.

STH level of detail: Let’s show everyone the new pop up pull tab

“DDR?-4800 memory modules” ??

I wonder if the SSDs get any cooling at all. With low-drag open grilles on both sides of the faceplate, there is no reason for the air to be sucked through the high-drag SSD cage.

Too bad they’re heading where HP already is: one-time-use, proprietary-everything servers that go to the trash after 5 years. Most SM fans loved them for being conservative and standardized. Those plastic pins in the toolless trays and tabs are weak and crack in no time. X10-X11 and maybe H11-H12 were probably the last customisable server systems.

Another great article. And just jealous of all the amazing toys you get to review/play with :) Small textual correction, page 2 : “Instead of slots for PCIe risers, instead the PCIe risers are cabled as are the front drive bays.” I might be tired but somehow my brain can’t read this correctly.