During Intel Architecture Day 2018, the company outlined a roadmap as well as its strategy for its CPU cores going forward. We wanted to take a few minutes to show what Intel is doing, and why. The Intel Core Strategy has broader implications for Intel, but it is also a good high-level framework for looking at microarchitectural changes in general.

Intel Core Roadmap

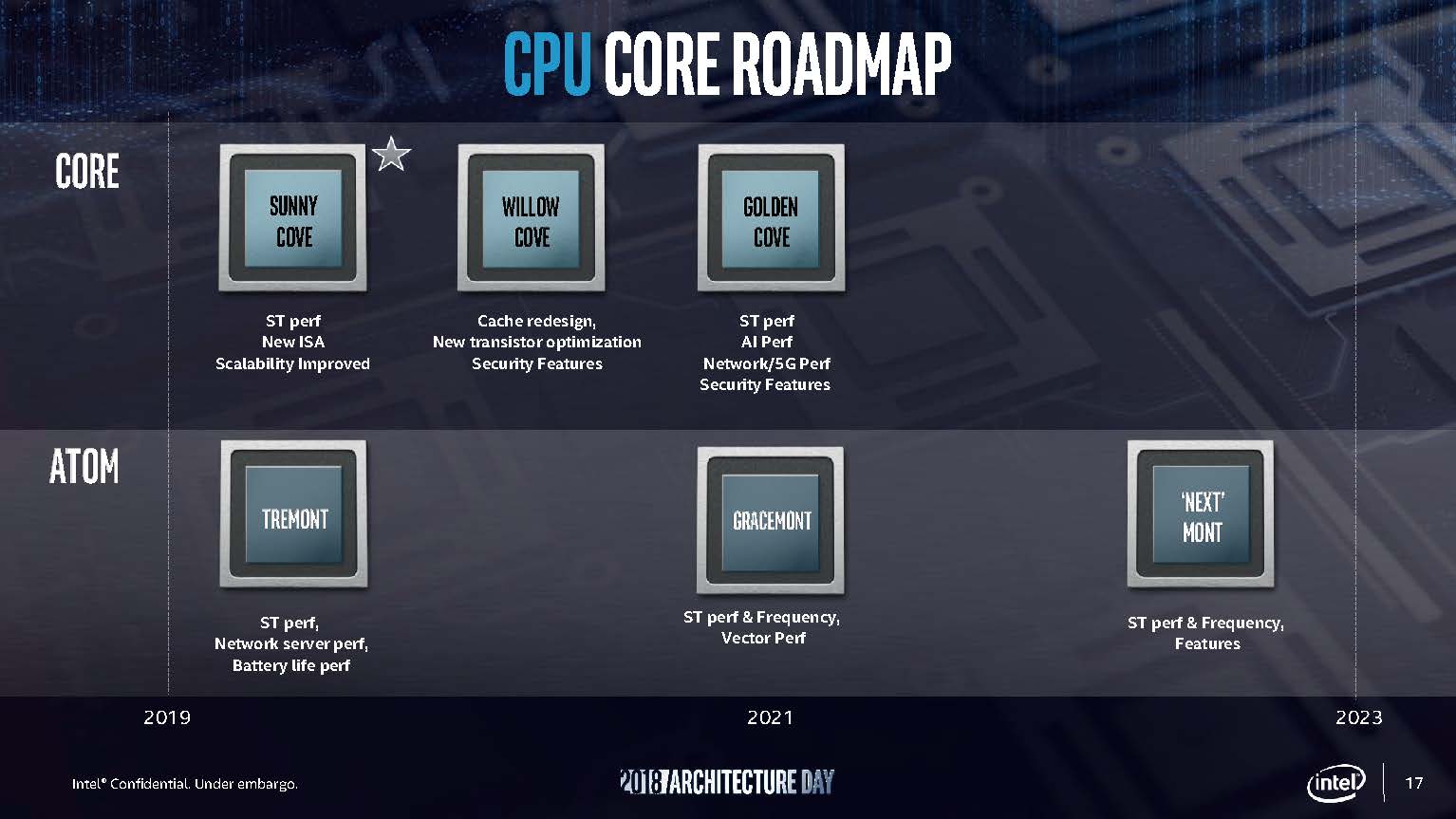

Here is the Intel CPU Core Roadmap that the company showed during Architecture Day 2018. You can see a "Core" swimlane as well as an "Atom" swimlane. The Core swimlane shows up in products like the Intel Xeon Scalable CPUs, the Intel Xeon D-2100 CPUs, and the Intel Xeon E-2100 series processors. The Atom line appears in products like the Intel Atom C3000 series.

The next architecture, and the one we will go into in more detail later today, is Intel Sunny Cove. It will likely land first in consumer parts and in a successor to the Xeon E-2100 series. Intel has a number of new technologies it will be bringing into the fold with this generation. Beyond Sunny Cove is Willow Cove, which will have a cache redesign, transistor optimizations, and new security features. Golden Cove will bring higher performance for today's workloads, along with targeted improvements for higher-speed networking and AI. Intel expects all of its future products to handle AI inferencing with a degree of excellence.

A common theme here is security. Intel has committed to delivering not just encryption feature updates but also mitigations for speculative execution vulnerabilities. Each successive generation, including the near-term Cascade Lake-SP generation as well as later generations such as Ice Lake built on Sunny Cove, will have successively stronger hardware mitigations for speculative execution attacks like Spectre and Meltdown.

On the Atom side, Tremont is next, followed by Gracemont in 2021 and, in 2022 or 2023, by a future "mont" whose name Intel declined to put on these slides.

Intel Core Strategy

This section was originally part of our Sunny Cove architecture piece, but we pulled it out since Intel's Core Strategy has broader applicability than the company's next generation. Other companies think about cores in a similar way, so it is an opportunity to talk about some of the levers chip designers have, and why they pull those levers.

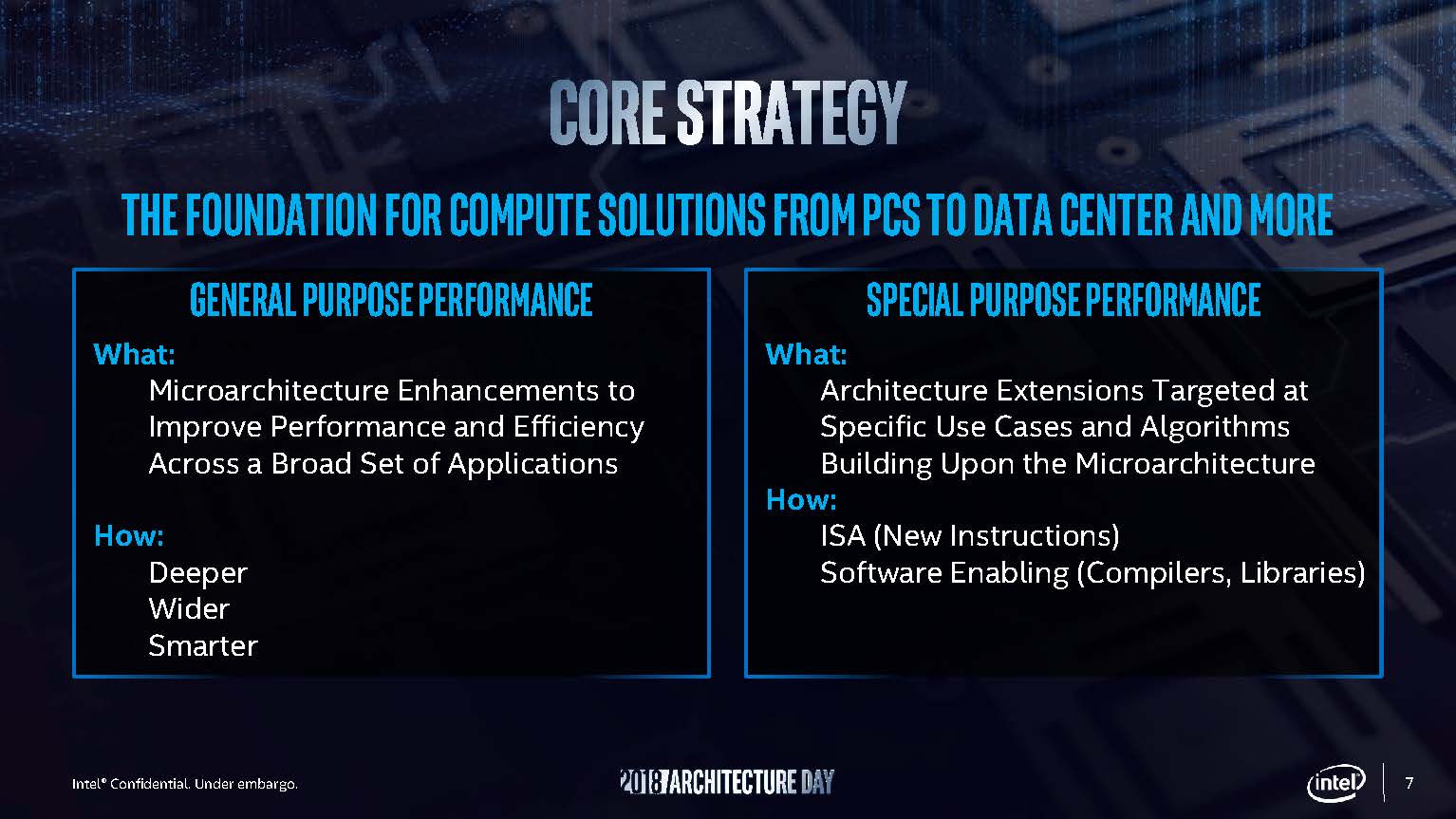

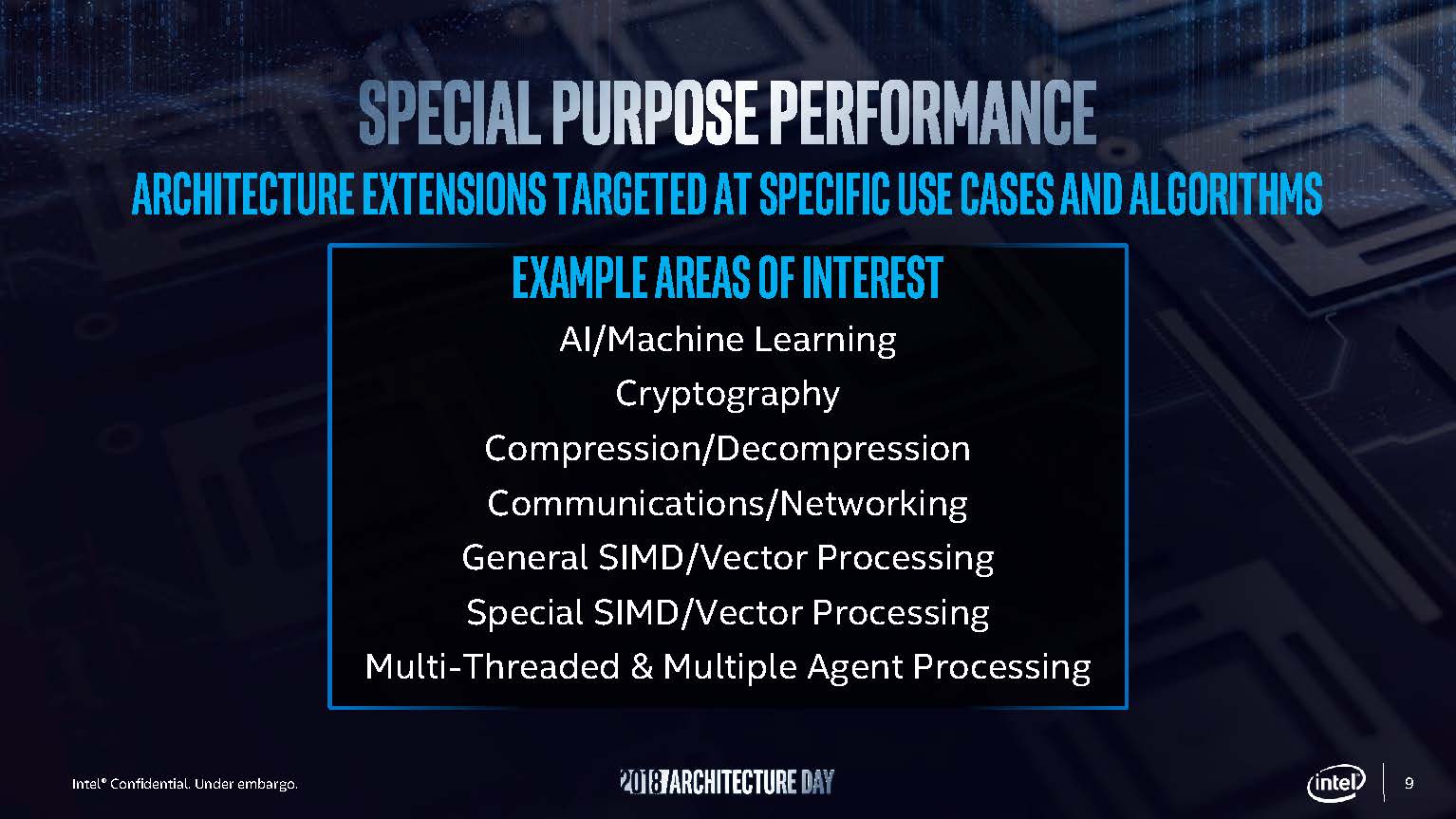

Intel frames its Core Strategy in two large buckets. The first is general purpose performance. The second is special purpose performance. At a high level, this is as simple as it sounds. General purpose performance impacts everything, even older unoptimized code. Special purpose performance targets use cases where a larger speedup is needed and can be achieved by adding things like new instructions. Taking advantage of these special purpose additions often requires recompiling application code.

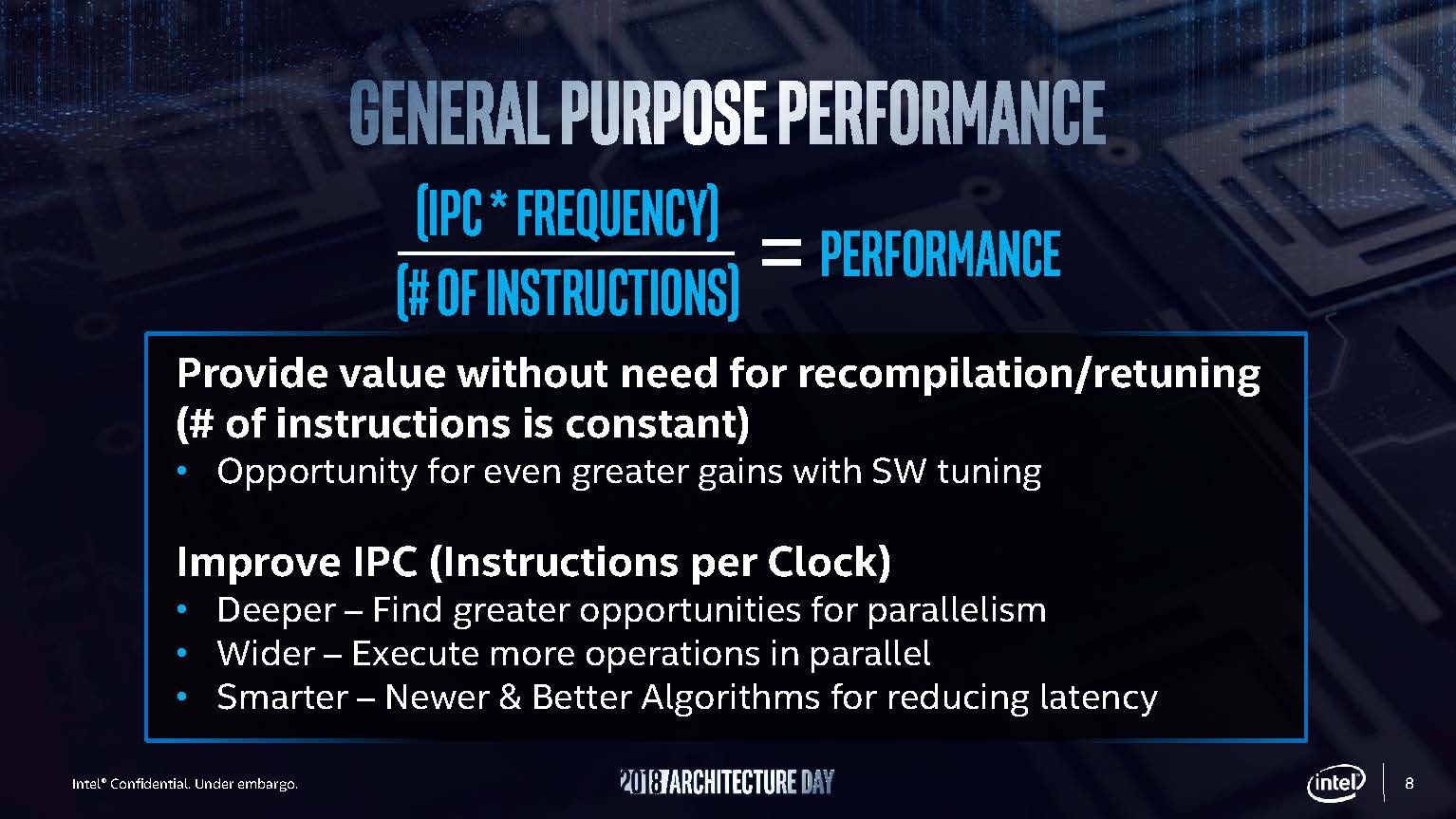

General purpose performance boils down to a relatively simple formula. You can increase instructions per clock (IPC), increase frequency, or advance the software side so that it uses fewer instructions to complete a task. That is about it. If you look back to our recent "AMD EPYC 7371 Review Now The Fastest 16 Core CPU" piece, this is exactly what AMD did: it increased this generation's CPU performance by 20-24% simply by dramatically increasing clock speeds on a known architecture.
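The three levers above map onto the classic processor performance equation: runtime = instructions / (IPC × frequency). The sketch below is a minimal illustration under that simplified model. The `Workload` class, the `speedup` helper, and all numbers are hypothetical and are not measurements of any Intel or AMD part. The model also ignores effects such as memory latency, which does not shrink as core clocks rise, so real-world frequency gains are usually somewhat smaller than this arithmetic suggests.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical task described by the three general purpose levers."""
    instructions: float  # dynamic instruction count needed to finish the task
    ipc: float           # average instructions retired per clock cycle
    freq_ghz: float      # sustained core clock in GHz

    def runtime_s(self) -> float:
        # Simplified model: time = instructions / (IPC * cycles per second)
        return self.instructions / (self.ipc * self.freq_ghz * 1e9)


def speedup(old: Workload, new: Workload) -> float:
    # A value above 1.0 means the new configuration finishes the task faster
    return old.runtime_s() / new.runtime_s()


# Illustrative baseline only, not a real CPU
base = Workload(instructions=1e9, ipc=2.0, freq_ghz=3.0)

# Lever 1: higher frequency on the same core running the same binary
print(round(speedup(base, Workload(1e9, 2.0, 3.6)), 3))    # 1.2
# Lever 2: higher IPC from a new microarchitecture, same binary
print(round(speedup(base, Workload(1e9, 2.2, 3.0)), 3))    # 1.1
# Lever 3: better software that needs fewer instructions for the same task
print(round(speedup(base, Workload(0.9e9, 2.0, 3.0)), 3))  # 1.111
```

Because the three terms multiply, the levers compound in this model: a 10% IPC gain on top of a 20% clock gain works out to roughly 1.1 × 1.2 = 1.32x, and none of the three requires changing the instructions an existing binary uses.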

General purpose speedups are extremely important, as there is an enormous amount of legacy code out there. AVX-512 can deliver large performance gains, but since it is a newer instruction set extension that falls under special purpose performance, it is still not in widespread use. General purpose performance focuses on delivering gains to existing applications without requiring a recompile step.

Special purpose performance comes into play in areas where Intel sees the need to improve performance faster than general purpose gains alone allow.

Special purpose performance is something that Intel needs to deliver to its ecosystem. At the same time, it requires an enormous investment. Intel is not only committing to adding new hardware instructions and accelerators; it is also committing its army of over 15,000 software developers to supporting new instructions through the software toolchain. For a sense of scale, 15,000 software developers is more than the total employee count (not just developers) of a large company like Red Hat, which had around 12,000 employees before the IBM acquisition announcement.

Final Words

During Intel Architecture Day 2018, Intel had an emerging message that should scare the rest of the industry. The company is getting more serious about using its developer resources to tie new features on its CPUs, future GPUs, FPGAs, and other accelerators more tightly to software toolchains. This is an important vision for the company to execute on, since every new instruction faces an adoption hurdle when it is introduced. Some, like AES-NI, are rapidly adopted by the industry. Others, like AVX, have seen a slower uptake. If Intel, with One API and its software developer teams, can solve the hurdle of getting software ecosystems to adopt new instructions and new hardware, then the company will be well positioned to leverage its varied compute assets to increase its total addressable market (TAM).