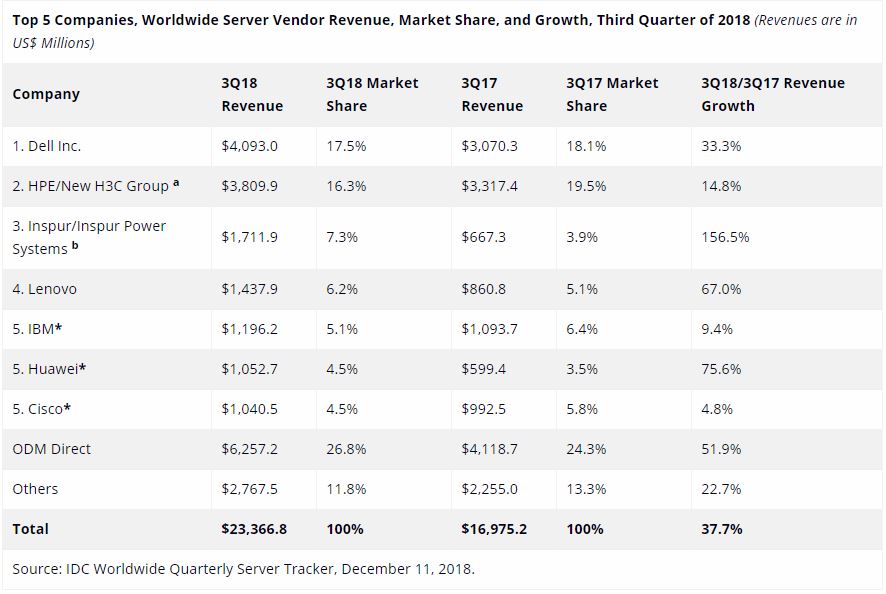

Inspur is huge. You may not have heard of the company, but it is now one of the largest AI server vendors in the world. According to IDC’s latest Quarterly Server Tracker, Inspur is now the third largest server vendor behind Dell EMC and HPE/H3C (HPE’s Chinese JV). The company is fueled by over 150% Y/Y revenue growth and over 90% unit shipment growth. For some context, here is what IDC’s latest picture looks like:

At STH, we started covering Inspur with more regularity in 2018, and you will continue to see more on STH in the coming months. You may have seen our piece around the Inspur AGX-5 and Our SC18 Discussion with the Company. The Inspur AGX-5 is one of the few truly top-end NVIDIA systems out there, the kind that only a handful of companies can even attempt to make. The fact is, Inspur, along with its IBM JV for producing POWER systems, is one of the largest and fastest-growing server vendors in the world. It has become the go-to company for deep learning and AI solutions in China, yet many buyers of smaller companies’ solutions, e.g. Lenovo, have never heard of Inspur or know of it only in passing.

One area where Inspur is an industry leader is in AI and deep learning solutions. Inspur has a stack that starts with the basic hardware and expands to the software and scaling solutions needed to train large models and then put them into production. I wanted to take the opportunity to interview Inspur about their take on the market since they have seen enormous success in AI and HPC.

Inspur arranged for me to interview Liu Jun, the company’s Assistant VP and GM of AI & HPC. If you look at Inspur’s revenue growth versus unit growth, it is clear that Inspur is gaining momentum by selling servers with higher average selling prices. A key driver for that is Inspur’s AI and HPC systems. Liu Jun and his team are driving a highly successful AI and HPC business, which makes his point of view on the industry very important.
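To make the revenue-versus-units point concrete, here is a minimal sketch in Python. It assumes revenue growth of exactly 150% and unit growth of exactly 90%, which are only the floor values quoted earlier ("over 150%" and "over 90%"), so the result is illustrative rather than an IDC figure. The function name `implied_asp_growth` is hypothetical.

```python
def implied_asp_growth(revenue_growth: float, unit_growth: float) -> float:
    """Return the year-over-year change in average selling price (ASP)
    implied by revenue and unit growth, all expressed as fractions.

    Revenue = units * ASP, so the ASP ratio is the revenue ratio
    divided by the unit ratio.
    """
    return (1 + revenue_growth) / (1 + unit_growth) - 1


# Assumed inputs: the floor values from the article, not exact IDC data.
asp_growth = implied_asp_growth(revenue_growth=1.50, unit_growth=0.90)
print(f"Implied ASP growth: {asp_growth:.1%}")
```

Under those assumed inputs, revenue is 2.5x and units are 1.9x the prior year, so the average selling price would be up by roughly 32%. That is consistent with a mix shift toward higher-priced systems such as AI and HPC servers.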

Here is STH’s interview with Liu Jun:

Interview with Liu Jun, AVP and GM of AI and HPC for Inspur

Patrick Kennedy: For our readers who may have seen Inspur in Top 5 server vendors lists, but may not know about Inspur, could you give us a brief overview of the company?

Liu Jun: Inspur is the leader among full-stack cloud data center product and solution providers in China, with its business spanning server, storage, and network design and manufacturing, plus complete AI & HPC and cloud IaaS solutions. Inspur’s data center infrastructure and server hardware portfolio is the most comprehensive and complex in the industry, offering x86, POWER, and OpenPOWER based systems, as well as GPU and FPGA compute acceleration technologies. Over the past three years, Inspur’s server business has maintained the highest growth rate, and it has been ranked No. 3 globally since Q3 2017. Inspur is also the leader in AI servers, with more than 50% market share in China. Inspur’s AI business makes available to our clients complete end-to-end solutions, encompassing computational hardware, management software middleware, and optimized deep learning frameworks.

PK: Who are Inspur’s largest customers?

LJ: Inspur’s largest customers are cloud service providers (CSPs), telecom carriers, and finance companies, as well as large manufacturing companies.

PK: Where are Inspur’s focus areas in the data center?

LJ: Inspur’s data center business is focused mainly on compute, storage, and IT infrastructure innovation for cloud data centers and AI solutions.

PK: From your position, where are we in the lifecycle of AI models? We hear a lot about training, but as models become more useful, inferencing becomes more important because it allows trained models to serve businesses and their customers. Are we at the tipping point where we will see more AI servers address inferencing, or will we continue to see a main focus on the training side?

LJ: AI development is in a transitional stage from lab experiments to commercial use. AI business implementation is accelerating exponentially. Previously, AI training was widely developed in the labs, but now, with more and more AI applications deployed for commercial use, more computing power is needed for inference. We believe that the server segment for AI inference will grow at a much faster rate.

PK: Inspur is the largest AI server vendor in China. What trends are you seeing in what your customers want in GPU servers?

LJ: In the AI R&D stage, customers focus more on high performance, achieved by scaling up on a single server and scaling out in clusters, which can improve efficiency and shorten the R&D lifecycle. Next, for commercial deployments online, customers focus more on low latency, cost efficiency, and energy savings, which can reduce the CAPEX of AI business implementation.

PK: What is the largest GPU installation that Inspur has done?

LJ: One of the top hyperscale companies in the world uses Inspur GPU servers for AI R&D innovation and has deployed Inspur GPU compute servers for its online cloud services.

PK: What kind of storage back-ends are you seeing as popular for your deep learning training customers? What are some of the lessons learned from your customers that STH readers can take advantage of?

LJ: Given the specific features of AI data training, we suggest that customers adopt multiple NVMe storage systems with large capacities to support the intense workload from deep learning model training and inference.

PK: Inspur makes over half of all of the AI compute servers in China and provides 80% of Alibaba, Baidu, and Tencent’s supercomputers. Many say that servers are not differentiated, so how did Inspur differentiate itself to capture that kind of market share?

LJ: Inspur’s differentiating strategy is the JDM [Joint Design Manufacturing] business model. JDM permits Inspur to customize compute and storage infrastructure to meet the specialized needs of its hyperscale clients, while their data centers and cloud infrastructure are becoming largely commoditized. JDM gives Inspur’s customers significant cost benefits, as well as high design flexibility and specialization. Inspur cooperates with partners and large customers to provide compute and infrastructure resources, and to build an entire ecosystem for multiple application scenarios. It integrates with the customers’ entire business lifecycle management, including marketing and procurement, R&D, manufacturing, and delivery, to meet customers’ huge demand.

PK: Are you seeing a lot of demand for new inferencing solutions based on chips like the NVIDIA Tesla T4?

LJ: In the process of AI business deployment, customers need very high computational power, while being cost-efficient and energy-saving. With the AI business implementation’s exponential acceleration, we believe that there will be more demand for AI inferencing solutions based on the new chips like the NVIDIA Tesla T4.

PK: Who are the AI hardware vendors that you are seeing as the next-generation players beyond NVIDIA and Intel?

LJ: Currently, some CSP giants are planning or have already launched their own AI chips, which we believe will exert a great impact on the AI ecosystem in the future.

PK: Are your customers demanding more FPGAs in their infrastructures?

LJ: The online business needs more flexibility and needs to be more cost-effective. FPGAs can meet the requirements of different online inference application scenarios. Highly flexible FPGAs can be optimized for AI inference applications. Their low energy consumption makes them more cost-effective for commercial AI use. So more FPGAs will be deployed for AI inference online.

PK: What storage and networking solutions are you seeing as the predominant trends for your AI customers? What will the next generation AI storage and networking infrastructure look like?

LJ: The CSPs will converge storage and networking into their overall data center infrastructure and adopt 25Gb Ethernet for their cloud file systems.

The on-prem customers will continue to have different storage and networking options, including InfiniBand and NVMe based parallel storage.

Regarding the next generation of storage and networking trends, customers will prefer NVMe storage solutions and persistent memory technologies that can deliver higher speed and larger capacities, and can drastically improve overall AI performance. Ethernet at 100Gb/s and higher speeds will enhance AI cluster scale-out capabilities and will become the mainstream deployment.

PK: Over the next 2-3 years, what are trends in power delivery and cooling that your customers demand?

LJ: As the power consumption of AI servers gets higher and higher, customers prefer to deploy more efficient in-rack power supply solutions and to take advantage of more efficient cooling technologies, such as liquid cooling or a combination of air and liquid cooling.

PK: What should STH readers keep in mind as they plan their 2019 AI clusters?

LJ: AutoML will be widely deployed in 2019, and its applications will have a great impact on the AI compute scale-up servers and scale-out clusters, enhanced with persistent memory technologies, and coupled with a high-speed / low-latency network and NVMe based parallel storage solutions.

Final Words

First off, I want to thank Liu Jun for taking the time for this interview, and the Inspur marketing team for arranging it. This is one of the first interviews, if not the first, that Inspur has agreed to do in the space.

For our readers, Inspur is a company you need to take note of in the industry. If you are in the US, EU, ANZ, or elsewhere where Inspur has a smaller footprint, know this: when I talk to Intel, AMD, NVIDIA, and other major component suppliers, they are all happy to talk about traditional vendors such as Dell EMC and HPE, but Inspur is the company that comes up more frequently as an example of how to grow the data center business. The company is investing heavily in building out US and EMEA operations to fuel this growth, so expect to see more from them in 2019 on STH and in the market.

Great interview. If it wasn’t for your AGX-5 article a few weeks ago I wouldn’t have ever heard of Inspur.

It’s funny, that they’re getting big and I never hear about them at work. If STH and IDC say they’re big because of AI, then they must be.

Hey, I know this is going to sound silly, but doesn’t Jun Liu’s picture look like he’s a model for a menswear catalog or posters advertising for a tailor? That photo is really well done.

Show us the hardware! That’s what STH is famous for.

Great interview. I want to know more about their NVMe storage for AI

I’d like to hear more about their hpc cluster storage with nvme as well.

I’d like to see more about how their systems compare.

Please STH review their stuff. You’re the only site out there doing real server reviews.

Feel free to contact me with any further inquiries: lizhq@inspur.com