In the industry, and we do this too, the Intel Xeon Gold 6258R is considered a lower-cost Xeon Platinum 8280. Both have 28 cores, 56 threads, and similar clock speeds. As part of the Big 2nd Gen Intel Xeon Scalable Refresh, Intel made a significant effort to bring the list price of its compute closer to AMD EPYC. Often this means taking SKUs with Xeon Platinum levels of performance and offering them at Xeon Gold pricing. That is effectively what is happening with this part. There is one difference that is important but also a bit harder to see with simple benchmarks, and we are going to discuss it in this article as well. Beyond comparing the two SKUs, the Gold 6258R had additional significance for Intel. By offering a greater than 60% discount over the Platinum 8280, it reset the competitive landscape by changing the price/performance level that competitors need to measure up to. We are going to explore that aspect in this review as well.

Intel Xeon Gold 6258R Overview

The Xeon Gold 6258R is a part that Intel needed. It is right to think of this part largely as a mainstream dual-socket offering. For the single-socket market, the Intel Xeon W-3275 is likely more attractive given its higher clocks. For the dual-socket market, as part of the refresh, Intel massively discounted the Gold 6258R in order to reset performance per dollar metrics in the industry. This is a 28-core, 205W TDP CPU, so it is basically the highest-end mainstream configuration that Intel is selling today (non-public SKUs excluded). The highest-end CPU configuration from the largest server CPU vendor is necessarily the industry’s yardstick.

Key stats for the Intel Xeon Gold 6258R: 28 cores / 56 threads with a 2.7GHz base clock and 4.0GHz turbo boost. There is 38.5MB of onboard L3 cache. The CPU features a 205W TDP. These are $3950 list price parts.

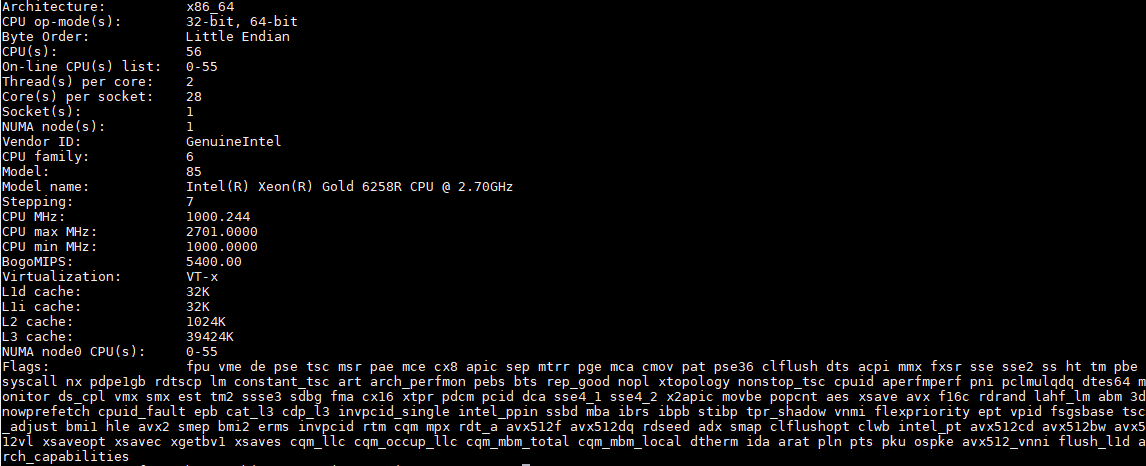

Here is the lscpu output for the Intel Xeon Gold 6258R:

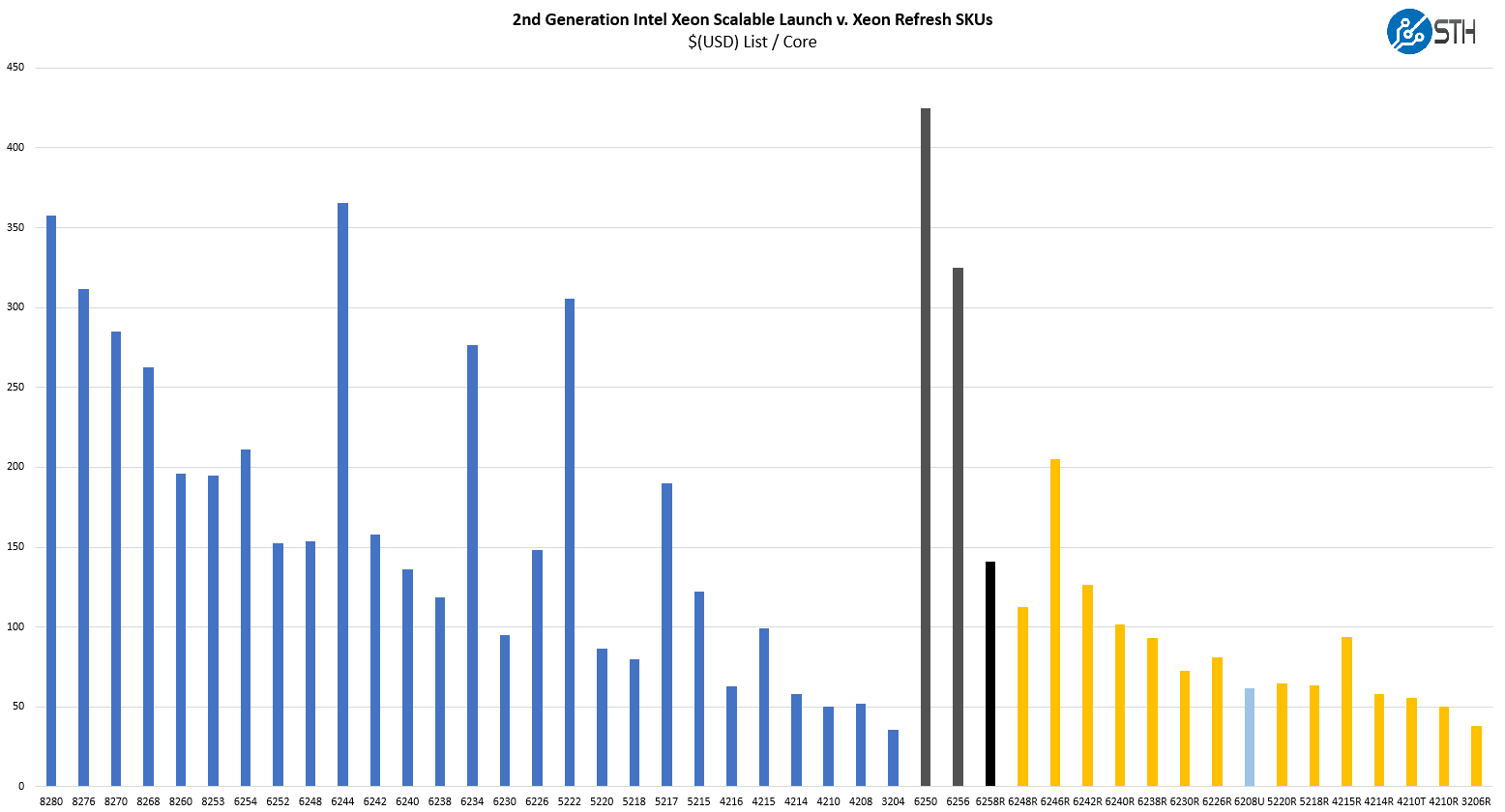

Perhaps the biggest change is in pricing. Here are the 2nd Gen Intel Xeon Scalable SKUs, both original and refresh, on a USD list price per core basis:

This may be hard to see given the sheer number of SKUs, but one can take the black bar on the middle right of this chart and effectively compare it to the bar that is on the left of this chart to get a relative sense of how much discount Intel is providing. Effectively, this is a greater than 60% discount on a list price per core basis over the Platinum 8280.
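For readers who want to check the per-core math, here is a minimal sketch of the calculation. The Gold 6258R list price comes from the key stats above. The Platinum 8280 list price of $10,009 is an assumption based on Intel's launch list pricing and is not stated in this article, so treat the result as illustrative.

```python
# List prices in USD. The 6258R figure is from the key stats in this review.
# The 8280 figure is an ASSUMPTION (launch list price), not stated in the article.
skus = {
    "Xeon Gold 6258R": {"cores": 28, "list_price_usd": 3950},
    "Xeon Platinum 8280": {"cores": 28, "list_price_usd": 10009},  # assumed
}


def price_per_core(sku):
    """Return the list price per core in USD."""
    return sku["list_price_usd"] / sku["cores"]


def discount_pct(new_sku, old_sku):
    """Return the percentage discount of new_sku vs old_sku on a per-core basis."""
    return (1 - price_per_core(new_sku) / price_per_core(old_sku)) * 100


for name, sku in skus.items():
    print(f"{name}: ${price_per_core(sku):.2f} per core")

discount = discount_pct(skus["Xeon Gold 6258R"], skus["Xeon Platinum 8280"])
print(f"Per-core discount vs. Platinum 8280: {discount:.1f}%")
```

Since both parts have 28 cores, the per-core discount is the same as the discount on the chip's list price. Under the assumed 8280 price it lands just above the 60% mark mentioned above.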

The one feature that is not present on the refresh part is the third UPI link. That has two major impacts.

First, one cannot use these chips in 4-socket and greater configurations. These days, the 3rd Generation Intel Xeon Scalable “Cooper Lake” chips are perhaps the go-to for 4-socket servers. The Platinum 8280 and Gold 6258R do have one feature that the Cooper Lake CPUs lack: they can run Intel Optane PMem 100 in Memory Mode, whereas the Cooper Lake Xeons can run PMem 200 but cannot access Memory Mode.

The second is more nuanced. Lacking the third UPI link means that in dual-socket configurations the Xeon Gold 6258R has a 2x UPI link connection for socket-to-socket communication. A Xeon Platinum 8280 can have up to 3x UPI links. Enabling those three UPI links uses more power and increases trace count and complexity in a motherboard. As a result, not all systems support this feature, and that is why we noted it in our Supermicro BigTwin SYS-2029BZ-HNR Review.

Not all systems are designed for 3x UPI links in a dual-socket configuration, but three links are very popular in markets such as hyper-converged servers because the third link adds ~50% more socket-to-socket capacity. That extra UPI link consumes more power, which leaves less power available for the rest of the chip, but that cost can be more than offset by the additional bandwidth it provides.
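The ~50% figure follows directly from going from two links to three. As a rough sketch, assuming each UPI link runs at 10.4 GT/s and carries 2 bytes per transfer in each direction (both values are assumptions for illustration and are not taken from this review), the aggregate socket-to-socket bandwidth looks like this:

```python
# ASSUMPTIONS for illustration only (not stated in this review):
UPI_GT_PER_S = 10.4          # transfers per second per link, in GT/s
BYTES_PER_TRANSFER = 2       # payload bytes per transfer, per direction


def upi_bandwidth_gb_s(links):
    """Aggregate per-direction socket-to-socket bandwidth in GB/s."""
    return links * UPI_GT_PER_S * BYTES_PER_TRANSFER


two_links = upi_bandwidth_gb_s(2)    # Gold 6258R in a dual-socket server
three_links = upi_bandwidth_gb_s(3)  # Platinum 8280 on a 3x UPI platform

uplift_pct = (three_links / two_links - 1) * 100

print(f"2x UPI: {two_links:.1f} GB/s per direction")
print(f"3x UPI: {three_links:.1f} GB/s per direction")
print(f"Uplift from the third link: {uplift_pct:.0f}%")
```

The per-link rate cancels out of the ratio, so the 50% uplift holds regardless of the exact link speed assumed. This is peak link capacity only. Whether a workload sees any of it depends on how much traffic actually crosses between sockets.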

In our benchmarks, we are going to investigate the performance impact. First, we are going to take a look at the test configurations then get into details.

Intel Xeon Gold 6258R Test Configurations

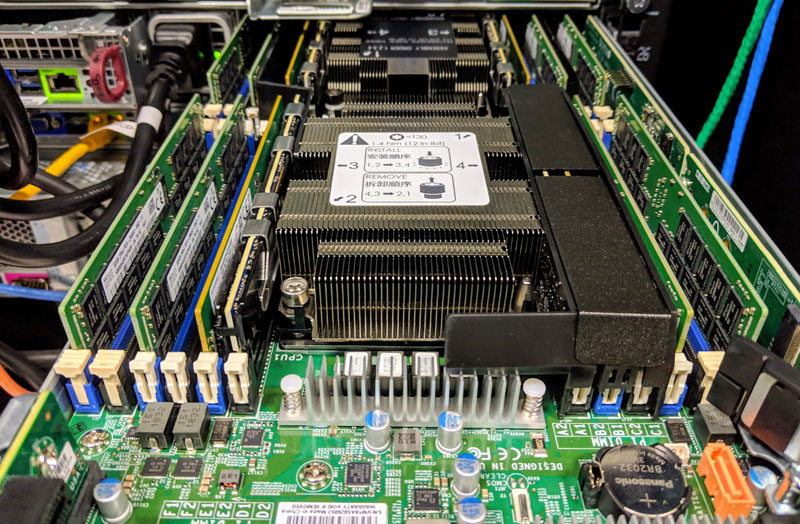

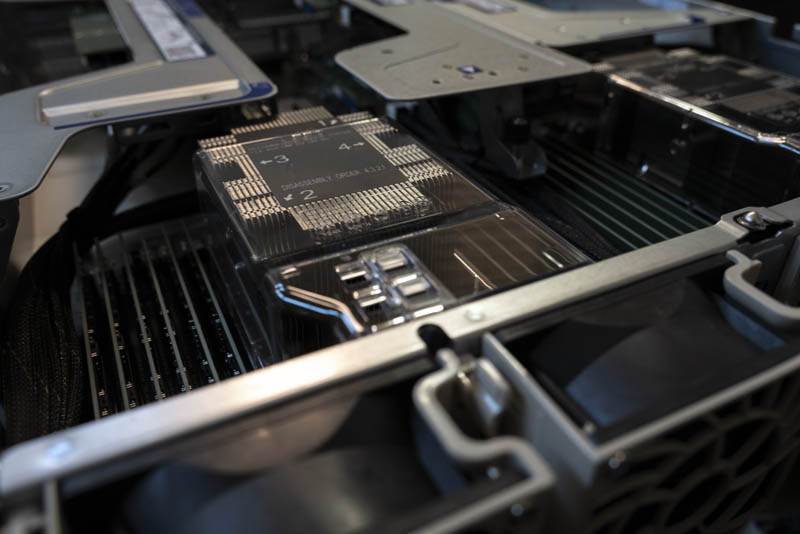

We are using a testbed that is designed for the higher 205W TDPs that some of the new refresh parts can hit, specifically the Supermicro SYS-2029UZ-TN20R25M or “2029UZ-TN20R25M” server. We published our Supermicro 2029UZ-TN20R25M Review recently if you want an in-depth look at the machine.

The Supermicro 2029UZ-TN20R25M is a 2U dual-socket server that is part of the company’s “Ultra” line meant to compete in the higher-end of the server market. We requested this server specifically because it has 20x NVMe SSD bays, it supports Intel Optane DCPMM, and it has built-in 25GbE. 25GbE is a major networking trend and we have already started doing overviews of 25GbE TOR switches such as the Ubiquiti UniFi USW-Leaf 48x 25GbE and 6x 100GbE switch overview and the Edgecore AS7712-32X Switch Overview. We have done adapter reviews such as the Supermicro AOC-S25G-i2S, Dell EMC 4GMN7 Broadcom 57404, and the Mellanox ConnectX-4 Lx. We also have more 100GbE switch reviews in the publishing queue so we wanted to start focusing on the new systems.

This Supermicro 2029UZ-TN20R25M platform is significant for another reason. It supports 205W TDP CPUs. That is a feature not every dual Xeon server has. For this reason, we wanted to use the Supermicro 2029UZ-TN20R25M which is a higher-end platform capable of handling this range of refresh CPUs.

- System: Supermicro 2029UZ-TN20R25M

- Memory: 12x 32GB DDR4-2933 DRAM

- OS SSD: 1x Intel DC S3710 400GB Boot

- NVMe SSDs: 4x Intel DC P4510 2TB
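To put the memory line in context, here is a small sketch of what 12x 32GB DDR4-2933 works out to in a dual-socket system. It assumes six DDR4 channels per socket and a 64-bit (8-byte) data path per channel. Those are assumptions about the platform rather than figures from this review, and the bandwidth number is a theoretical peak, not a measured result.

```python
# Values from the test configuration list above.
DIMMS = 12
DIMM_CAPACITY_GB = 32
TRANSFER_RATE_MT_S = 2933    # DDR4-2933
SOCKETS = 2

# ASSUMPTIONS about the platform (not stated in this review):
CHANNELS_PER_SOCKET = 6      # six DDR4 channels per CPU
BYTES_PER_TRANSFER = 8       # 64-bit data bus per channel

total_capacity_gb = DIMMS * DIMM_CAPACITY_GB
dimms_per_channel = DIMMS / (SOCKETS * CHANNELS_PER_SOCKET)

# Theoretical peak: channels x MT/s x bytes per transfer, converted MB/s -> GB/s.
peak_gb_s_per_socket = CHANNELS_PER_SOCKET * TRANSFER_RATE_MT_S * BYTES_PER_TRANSFER / 1000

print(f"Total memory: {total_capacity_gb} GB")
print(f"DIMMs per channel: {dimms_per_channel:.0f}")
print(f"Theoretical peak bandwidth per socket: {peak_gb_s_per_socket:.1f} GB/s")
```

Under these assumptions, twelve DIMMs put one module on every channel of both CPUs, which is the layout one would normally want for CPU benchmarking.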

Overall, this is a fairly simple configuration, but we are focused on CPU performance here. We took a further step and tested these CPUs in both single and dual-socket configurations, so we will have some results from both views. We recommend using this Supermicro Ultra server only in dual-socket configurations; however, we thought this would be a good way to spice the review up.

Next, let us get to performance before moving on to our market analysis section.
