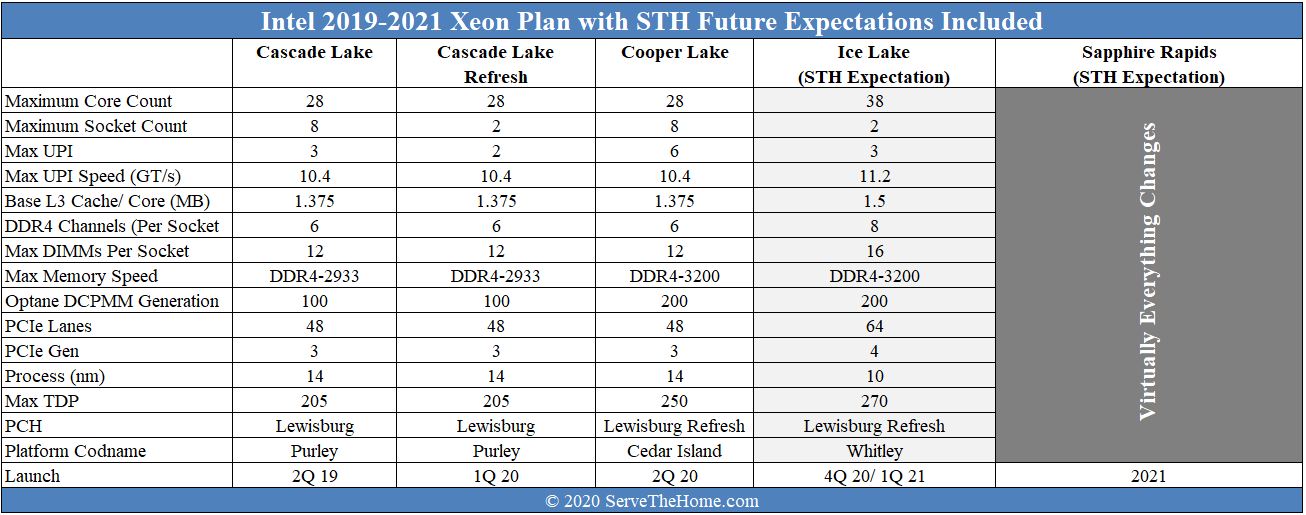

We now have the launch of the 3rd generation Intel Xeon Scalable processors codenamed “Cooper Lake.” The new chips use a new socket (called Socket P+) that supports up to 6x UPI links per CPU (up from 3), which means they offer around the same inter-socket bandwidth in 4-socket systems as the 2nd Gen Intel Xeon Scalable Refresh SKUs offer in 2-socket systems. Although core counts remain at 28 per socket, maximum TDP rises from 205W to 250W with the move to Socket P+. The new chips support the Intel Optane DC Persistent Memory Module 200 series, which is the company’s second generation of the product. A big catch is that this is only for App Direct mode in Cedar Island. Memory is still limited to 4.5TB per socket in 6-channel configurations, but speeds on top SKUs can reach DDR4-3200 in 1DPC modes. Perhaps the other big new feature is support for bfloat16 operations, which will bring enhanced AI training capabilities to Xeon CPUs.

That is a ton to go into. In this article, we are going to focus on the chips and the platform features they provide. We have breakout articles on the Optane DCPMM 200 series as well as the Lewisburg Refresh PCHs with a lot of juicy details, so check them out. We are also going to discuss what is next for Intel so you can plan your IT purchasing accordingly.

The Cooper Lake Xeon Saga

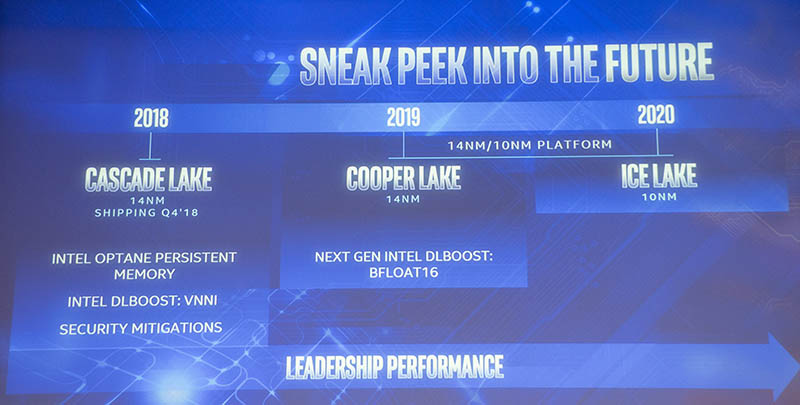

Before we get too far here, the Cooper Lake we are seeing today is not the mainstream Cooper Lake. That part would have used a multi-die approach to put more cores in a next-gen Whitley socket, which would be shared with Ice Lake Xeons arriving later in 2020 (XCC dies) and in 2021 (HCC dies), or so is the word on the street. Earlier in 2020, we broke the news that Intel Cooper Lake was rationalized, removing those parts. This has been a long journey, but with schedule slips, Cooper Lake did not make 2019 as Intel showed in Q3 2018, so the mainstream part was rationalized.

Effectively, the strategy was to have Cooper be a leading Xeon CPU for Whitley, offering higher core counts to combat AMD EPYC. Intel could then offer what is launching today as a 4-8P platform for scale-up and Facebook. On the topic of Facebook, while most of our readers will experience Cooper Lake as 4-socket and larger platforms, Facebook has the OCP Sonora Pass dual-socket system for mainstream compute nodes.

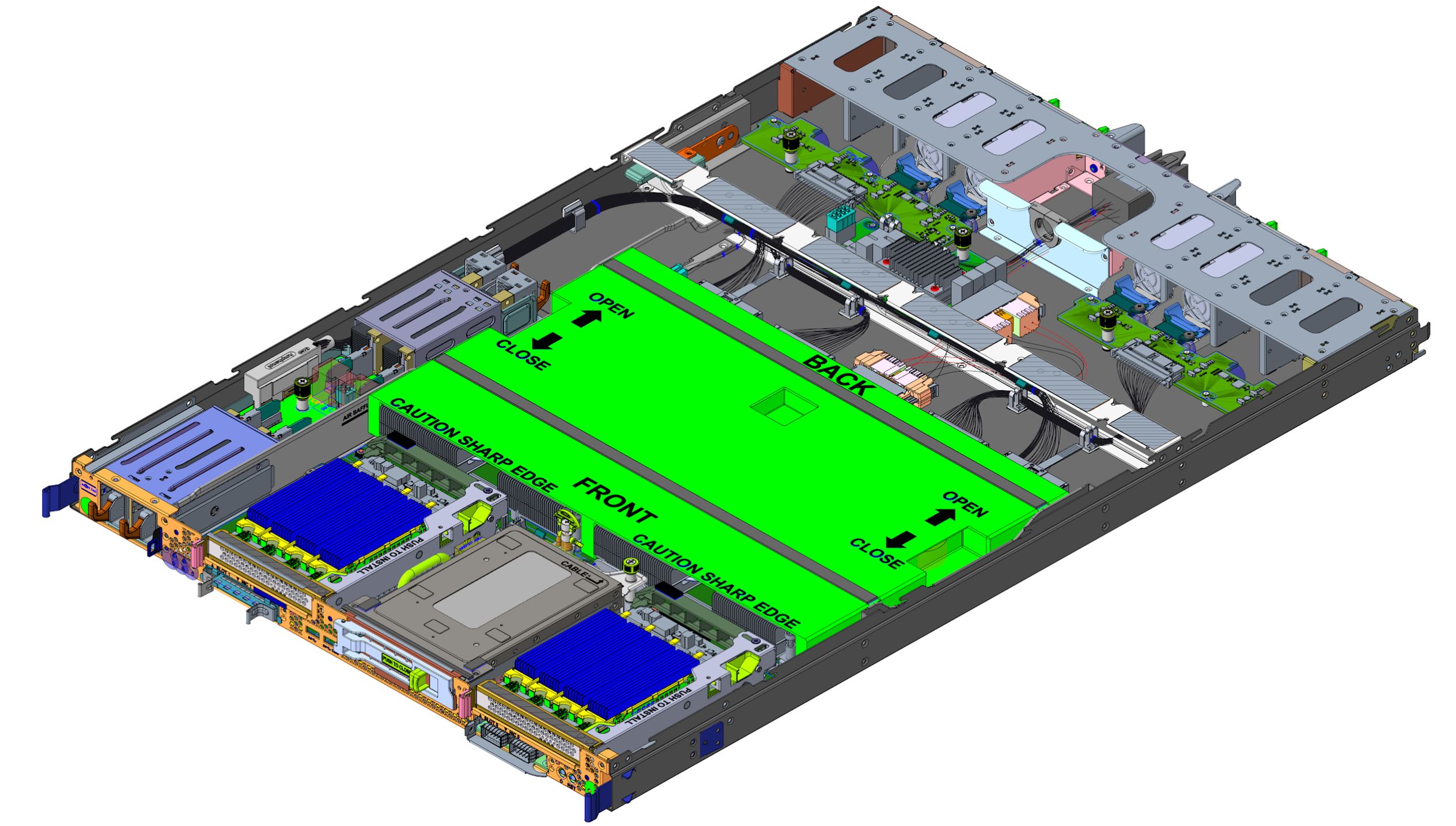

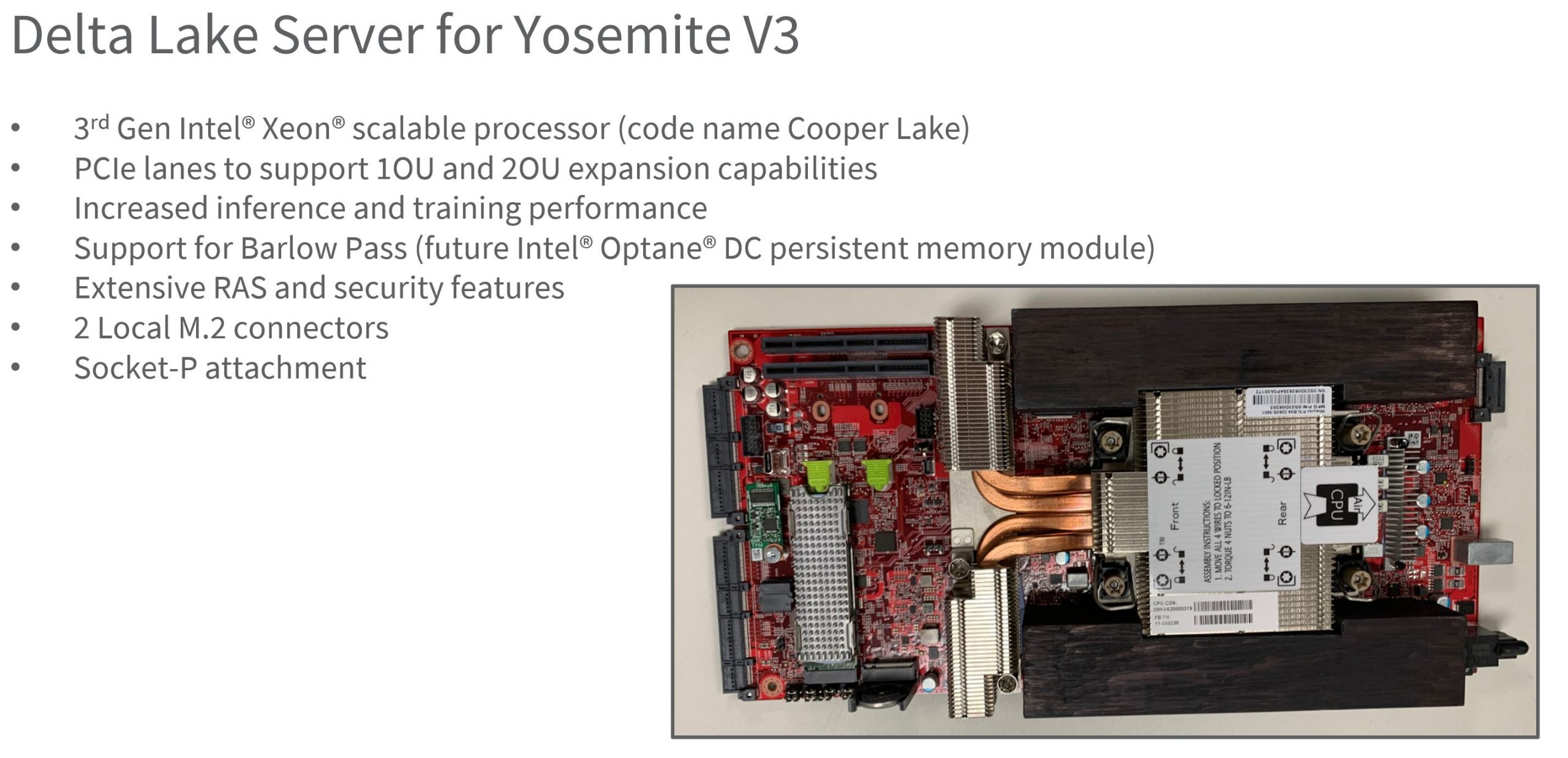

This is also being used in a single-socket configuration in Yosemite V3’s Delta Lake server platform.

Using Cooper Lake allows Facebook to introduce bfloat16 systems both in mainstream compute as well as for its single NUMA node front-end platforms. This is likely the reason we do not have a Cascade Lake-D. We go into details on these platforms and the implications in Facebook Introduces Next-Gen Cooper Lake Intel Xeon Platforms.
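For readers who have not worked with bfloat16 before, here is a minimal sketch of the number format itself. bfloat16 keeps the sign bit and all 8 exponent bits of an IEEE 754 float32 but only the top 7 mantissa bits, so converting amounts to keeping the upper 16 bits of the float32 pattern. The function names are our own for illustration, the rounding shown is round-to-nearest-even, and NaN inputs are deliberately not handled. This is not Intel's implementation, just a way to see what precision is traded away.

```python
import struct


def float32_to_bfloat16_bits(x: float) -> int:
    """Return the 16-bit bfloat16 pattern for x (hypothetical helper).

    Assumption: x is a finite value or infinity. NaN is not handled here.
    """
    # Reinterpret the value as a 32-bit float and grab its raw bits.
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # Round to nearest, ties to even, on the 16 bits we are about to drop.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    return ((bits + rounding_bias) >> 16) & 0xFFFF


def bfloat16_bits_to_float(b: int) -> float:
    """Expand a 16-bit bfloat16 pattern back to a Python float."""
    # The dropped low mantissa bits are simply filled with zeros.
    return struct.unpack("<f", struct.pack("<I", (b & 0xFFFF) << 16))[0]


if __name__ == "__main__":
    for value in (1.0, 3.14159, 65504.0, 1e-20):
        b = float32_to_bfloat16_bits(value)
        print(f"{value!r:>12} -> 0x{b:04X} -> {bfloat16_bits_to_float(b)!r}")
```

The takeaway is that bfloat16 covers the same exponent range as float32 while giving up mantissa precision, which is a trade that tends to suit neural network training.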

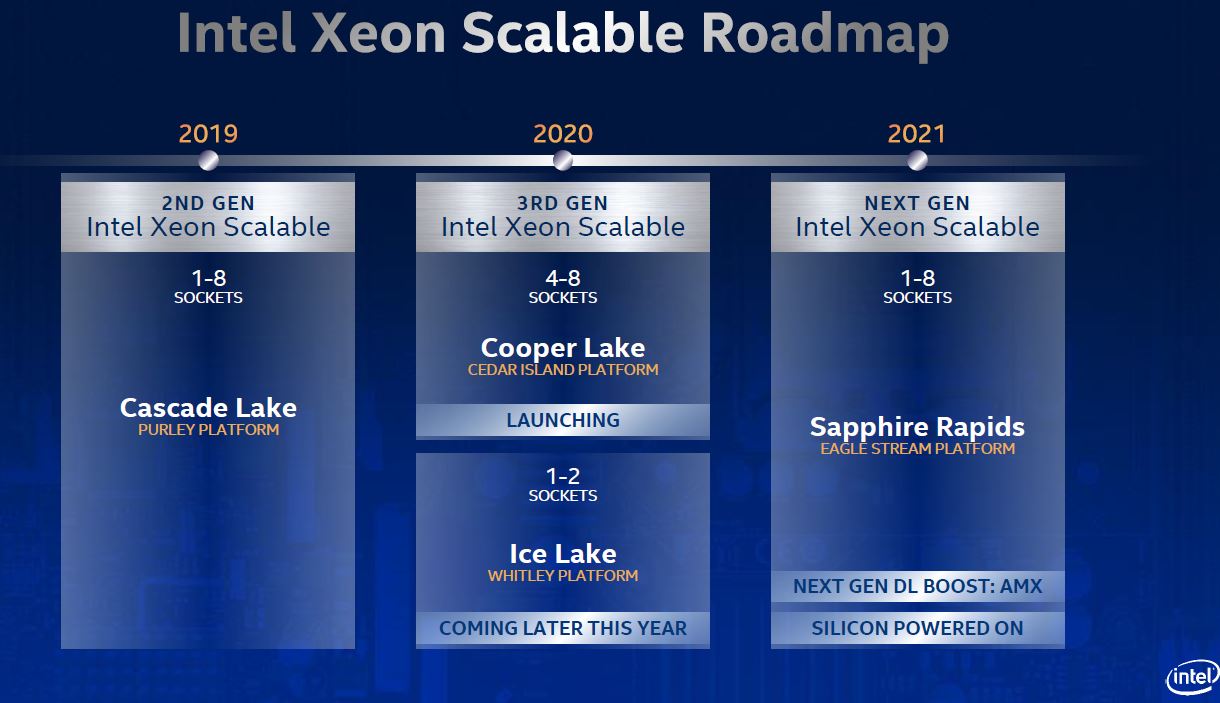

Since Ice Lake Xeons / Whitley will be a 1 and 2-socket only platform, Cooper will be the four-socket option until Sapphire Rapids Xeons launch with an enormous technology refresh in late 2021. It also positions Cooper to have the highest core counts and memory footprint per physical server, with a big caveat.

The big (XCC die) Ice Lake Xeons are expected to top out at 38 cores. This may change, but that means 76 cores and 152 threads per 2P server for Ice Lake by the end of the year, along with up to 6TB of memory per socket (8 channels, each with a 256GB DDR4 DIMM + 512GB Intel Optane DCPMM 200 series “Barlow Pass” module) for 12TB of memory per server. In contrast, Cooper Lake 4P buyers get 28 cores per socket * 4 sockets for 112 cores and 224 threads per server. One also gets 4.5TB of memory per socket for a total of 18TB of memory per 4P server. That per-socket memory capacity is the same as the current generation Cascade Lake CPUs.
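The per-server totals here are simple multiplication, and a quick sketch makes them easy to re-run if the rumored figures change. The class and field names below are our own for illustration. The sketch assumes two threads per core (Hyper-Threading enabled), uses the expected 38-core Ice Lake figure, and assumes Cooper Lake reaches 4.5TB per socket with the same 256GB DDR4 + 512GB DCPMM pairing on each of its 6 channels.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    """Hypothetical container for the figures quoted in the article."""
    name: str
    sockets: int
    cores_per_socket: int
    memory_channels: int
    dram_dimm_gb: int = 256     # one DDR4 DIMM per channel
    pmem_dimm_gb: int = 512     # one Optane DCPMM per channel
    threads_per_core: int = 2   # assumes Hyper-Threading is enabled

    @property
    def cores(self) -> int:
        return self.sockets * self.cores_per_socket

    @property
    def threads(self) -> int:
        return self.cores * self.threads_per_core

    @property
    def memory_tb_per_socket(self) -> float:
        per_channel_gb = self.dram_dimm_gb + self.pmem_dimm_gb
        return self.memory_channels * per_channel_gb / 1024

    @property
    def memory_tb(self) -> float:
        return self.sockets * self.memory_tb_per_socket


# Ice Lake core count is the expected figure, not a confirmed spec.
ice_lake_2p = Platform("Ice Lake 2P", sockets=2, cores_per_socket=38, memory_channels=8)
cooper_lake_4p = Platform("Cooper Lake 4P", sockets=4, cores_per_socket=28, memory_channels=6)

if __name__ == "__main__":
    for p in (ice_lake_2p, cooper_lake_4p):
        print(f"{p.name}: {p.cores} cores, {p.threads} threads, "
              f"{p.memory_tb_per_socket} TB/socket, {p.memory_tb} TB/server")
```

Swapping in a different core count or DIMM capacity is a one-line change, which is handy given that the Ice Lake numbers may still move.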

While Intel is expected to drop the “high-memory capacity” SKUs and associated premiums with Ice Lake, the current Cooper Lake platform still has Cascade Lake Xeon-like high-memory SKUs that carry premiums.

As a result of all of this, a 4P Cascade Lake Xeon server will be obsolete, except for a fairly specific use case. A 4P Cooper Lake Xeon server will still have a place in the market after Ice Lake launches and until we get to Sapphire Rapids Xeons targeted for 2021.

Perhaps the best way to think about the Cooper Lake platform as we go through this article is that it is a refreshed Cascade Lake line, rather than being more similar to the Ice Lake Xeon line. This is another 14nm part, and it shares a lot of features with Cascade Lake, but Intel did a fairly large tweak to the underlying hardware.

Going forward, let us be clear. Intel has discussed Ice Lake cores and there are going to be major gains. Intel will also have the Whitley socket for higher TDPs, 8-channel memory, PCIe Gen4, new instructions, and a host of other upgrades. The reason we are not seeing vendors push for mainstream 2P adoption of this version of Cooper Lake is that Intel committed to having Ice Lake Xeons (even if just high-performance variants) out in 2020. Most vendors have their Whitley platforms ready because the Q3 2018 roadmap had Cooper in that platform, so there was likely little appetite to launch a Cooper Lake 1P/2P platform that would be completely eclipsed by Ice Lake in the subsequent two quarters. It takes most large vendors more than two quarters to roll out a line of servers for a new platform.

As a quick note on the above, technically Intel released non-R CPUs such as the Intel Xeon Gold 6250 that were 3 UPI and 4-socket capable during the refresh window, but we are treating them as updates to the original Cascade Lake line rather than part of the Refresh since that view aligns closely with features and pricing instead of aligning with timing.

We are going to talk about what all this means for server buyers in 2020 in a subsequent piece. It is important to understand the context of the “Cooper Lake Saga” before even scratching the surface of the details. Without this context, the launch may not make sense. With the nuanced positioning of Cascade Lake Refresh, Cooper Lake, Ice Lake, and Sapphire Rapids, we get the story.

With the Saga recounted, let us move on to the new platform.

Why doesn’t anyone else have info on the PMem 200 in memory mode that you’re talking about on page 3. STH is the only place reporting that.

Fred we confirmed this with Intel before the piece went live. To be fair, it was very hard to figure out based on what Intel released in their materials.

This is the most in-depth coverage of Cooper I’ve seen. There’s crazy detail here. excellent work Patrick and STH

Does anyone know what the “MOQ” refers to in the Optane specs, the values (4 and 50) align with the existing Optane DIMMs but could never find what it referred to.

Thanks

“a stopgap step”

From the processor king that has been pumping $Bs Q after Q?

AMD for pumping out products, but Intel for pumping in $$$…

@binkyto… MGTFY.com. OH what help a google could be. MOQ typically refers to Minimum Order Quantity. however…if you had actually googled “Optane” + “MOQ”, without the quotes of course, you’d have come to this link:

https://ark.intel.com/content/www/us/en/ark/products/190348/intel-optane-persistent-memory-128gb-module.html

wherein it says:

Intel® Optane™ Persistent Memory 128GB Module (1.0) 4 Pack

Ordering Code

NMA1XXD128GPSU4

Recommended Customer Price

$1499.00

Intel® Optane™ Persistent Memory 128GB Module (1.0) 50 Pack

Ordering Code

NMA1XXD128GPSUF

Recommended Customer Price

$1499.00

4 pack and 50 packs. they have different sku’s. you’r welcome.

Isn’t MOQ minimum order quantity BinkyTo?

Nice writeup STH.

That Optane PMem 200 I’ve read Anandtech, Toms, and nextplatform and none of them mentioned it in their articles.

Fire.

Keep cutting through the marketing BS at these companies.

Can’t wait to see how it stacks up with the existing parts and Epyc 7xx2

The use of place names to distinguish these products creates confusion. Reading articles about Intel CPUs requires one to have a decoder ring handy.

Intel should stop being obscurantist.

Is the TDP 15W or 18W? If you go to their site it still mentions 18W.

Also some of their datasheet still mentions the specs for the 100 series. Like here: https://www.tomshardware.com/news/intel-announces-xeon-scalable-cooper-lake-cpus-optane-persistent-memory-200-series

Will the rated 8.1GB/3.15GB R/W bandwidth improve with the Icelake platform? Why does the datasheet say it supports up to 2666MT/s?

Intel got hurt bad by their 10nm process. The delays that caused allowed AMD to leapfrog. The question now is who will be first to market with PCIe Gen 5. That will be an enormous advantage in the server space.

I love competition. I really do.

I’m going to have to dig into this further tomorrow, but what has really caught my eye this week is working Sapphire Rapids chips in Intel labs. Sapphire Rapids is supposed to have both DDR5 and PCIe 5, and they are targeting a 2021 release. The reporting on AMD’s roadmap that I have seen has Genoa and the SP5 socket (with DDR5 and PCIe 5) coming out in 2022. I wonder how many months Intel powered servers will have those features before AMD’s get them? DDR5 looks like a huge upgrade. I thought AMD’s technical dominance in servers would last a couple more years, I might have been wrong.

@Wayne Borean: From a business point of view, Amd has done nothing to really stress Intel as regards profits/revenue over the last 3 years since Epyc has been on the market. The marketshare that Epyc has gained over the last 3 years is minuscule when you look at the revenue/profit that Amd earns from Epyc.

If Amd cannot compete on a volume basis with Intel when Zen 3 comes out, Intel will continue to dominate in the only ONE true area that counts for any business: increasing revenue/profits over your competitors

Amd has amazing performance compared to Intel but at what cost??

Once Ice Lake server comes out in volume then and only then will we see a head to head competition and based on the last 3 years when Amd clearly had the performance lead, it does not look too good for Amd in the server space!!!