Today Intel is announcing some major milestones around oneAPI and its XPU designs. Perhaps the biggest is the launch of the Intel Server GPU. As we previewed in our Architecture Day 2020 coverage, the first data center GPU we expected from Intel was the Xe LP GPU. That is exactly what Intel is showing today, and we wanted to highlight it.

Intel Server GPU

First off, this is not the high-performance Xe HP or Xe HPC GPU for the data center. Instead, it is a lower-end, lower-power part called the Xe LP GPU, more along the lines of what is found in mobile solutions. There is a good reason for that choice, which becomes clear when we look at the use cases.

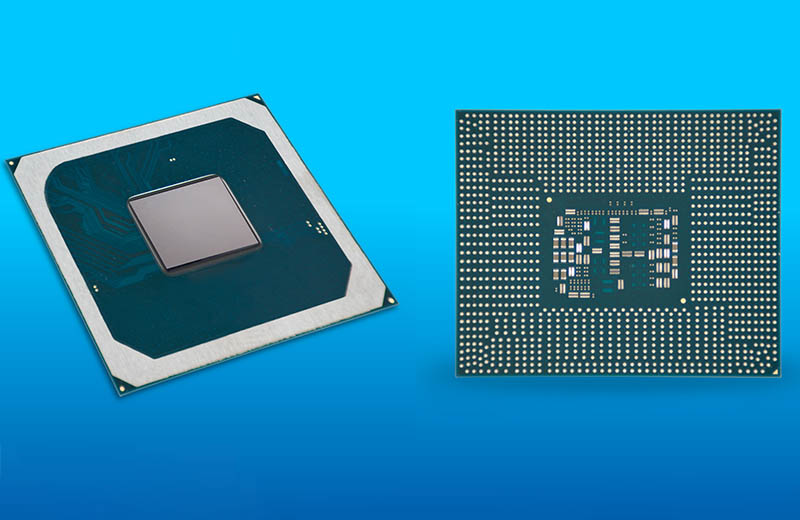

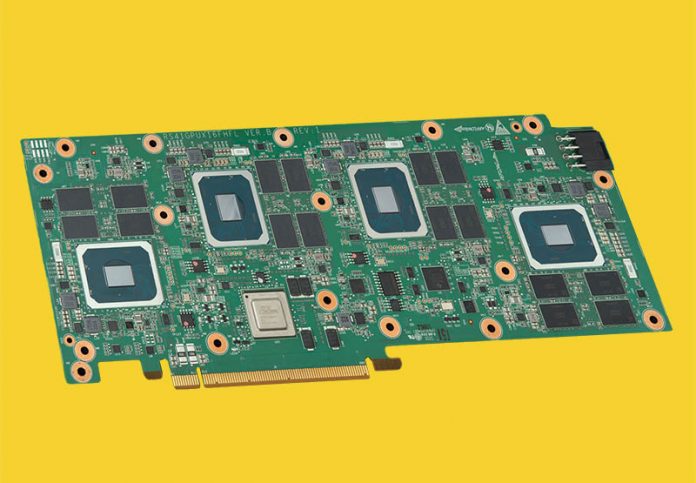

The Intel Server GPU is a far cry from the large GPUs we see from NVIDIA and AMD these days. Here are the package shots. We will discuss die size in a moment.

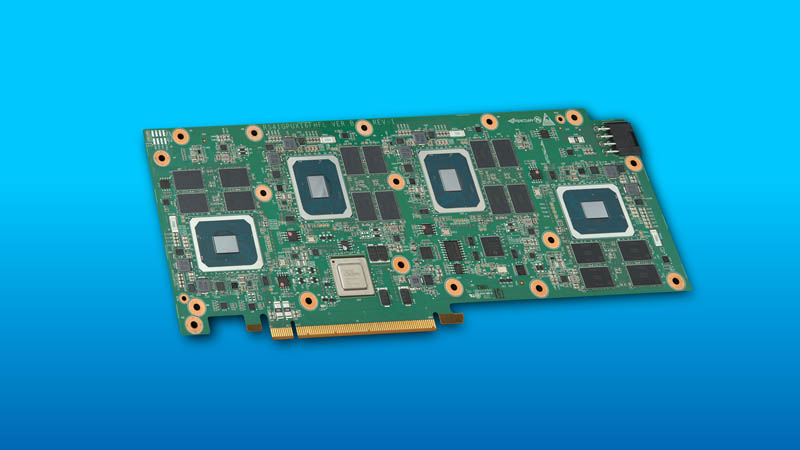

A PCIe card with just one of these GPUs would not necessarily be useful in a server, given the other solutions already on the market. Instead, Intel is showing a partner solution from H3C, HPE's server JV in China. Since these GPUs are aimed at video transcoding, having a major Chinese customer makes a lot of sense.

The H3C XG310 uses four Intel Server GPUs. Each GPU has 8GB of memory, for a total of 32GB on the card. One can also see the PLX PCIe switch on the card.

Interestingly, judging from this view, the GPU die appears to be on the same order of size as the 8GB memory packages and smaller than the PLX switch package on the board. For reference, that is much smaller than higher-end GPUs such as the NVIDIA A100, which is to be expected given the part's low-power focus.

The rear of the card is mostly bare. Again, this is a low-power card.

The form factor alone hints that this is a low-power card: it uses a single-width cooler. As a result, it is designed to be packed densely into servers, adding many GPUs to a single system.

Intel Server GPU Use Cases

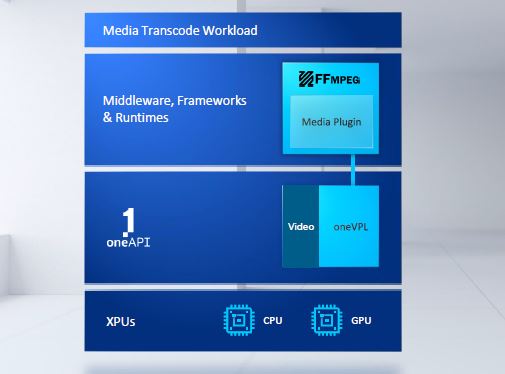

The use cases Intel highlights offer another hint at how these GPUs will be deployed. Intel is touting the ability of oneAPI to move transcoding workloads from the CPU to an array of GPUs. Transcoding is a great example of a workload where having many GPUs helps. Intel previously offered the Visual Compute Accelerator, which placed consumer-derived systems on a PCIe card to achieve GPU density. Each GPU has its own hardware transcoders, so adding more GPUs to a card means more transcoder engines per system.

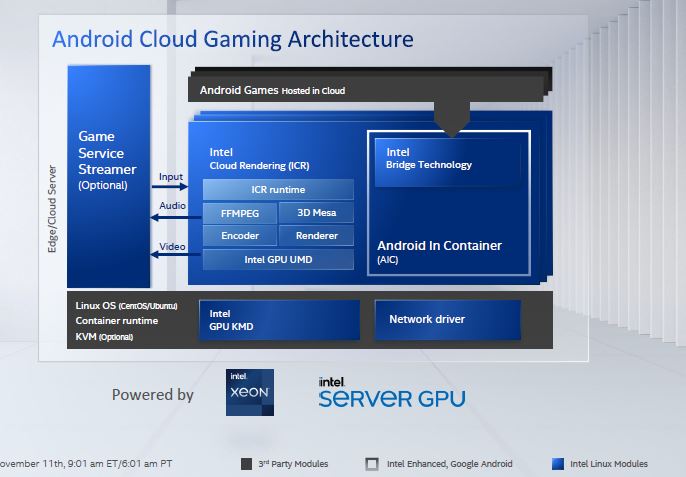

On the cloud gaming side, we discussed this kind of setup in our "AoA Analysis Marvell ThunderX2 Equals 190 Raspberry Pi 4" piece. Here, Intel has its Android translators that let games run on x86 Xeons. The Xeons can then offload video rendering and transcoding to the GPUs, supporting more sessions per server while streaming the results to users.

One interesting aspect of these workloads is that the competition is not just NVIDIA and AMD. For example, Broadcom makes specialized transcoding chips for a major US hyperscaler that does a lot of video content delivery.

Final Words

It is great to see the Intel Server GPU (Xe LP) out. At the same time, it would have been nice to see examples from other customers. China has huge demand for transcoding given the number of users who need content delivered to mobile devices. Given that demand in China, India, and other markets, we would have liked to see more customers supporting the new GPU, but it is still early days.

This is certainly a first step, and a good one: the industry benefits from a third GPU player, even if only at the relatively low end of the market. With oneAPI, Intel has the potential to build on this foundation with higher-end GPUs or other types of processors.

How much does it cost? If it costs about 1500€, it will be mine.

I wonder if this will work with Plex for transcoding when it comes out, as an “Intel GPU”. I'm currently using an NVIDIA P2200 for transcoding. If this is better, faster, or lower power, it might be a win.

“We are going to discuss die size in a moment.”… but where?

Nice article.

Is that plate screwed to the back of the card the only means of cooling, or does cooled air get forced down through the slot in the top?

I’m curious about the PCIe switch chip. The DG1 version of this GPU had four lanes of PCIe 4.0, while this particular board is described as having 16 lanes of PCIe 3.0.

Does this SG1 version of the chip only support PCIe 3.0, or is the PCIe 3.0 limitation imposed by the PCIe switch?

Also, is the PCIe switch only being used for retiming, or is it somehow being used to switch among GPUs? If it is switching among GPUs, does that imply there are more than 4 lanes of PCIe per GPU?

The board info lists “passive” for thermal.

https://www.h3c.com/en/Products_Technology/Enterprise_Products/Servers/GPU/XG310_GPU/